An Industrial Process Monitoring Method Based on Total Measurement Point Coupling Structure Analysis and Estimation


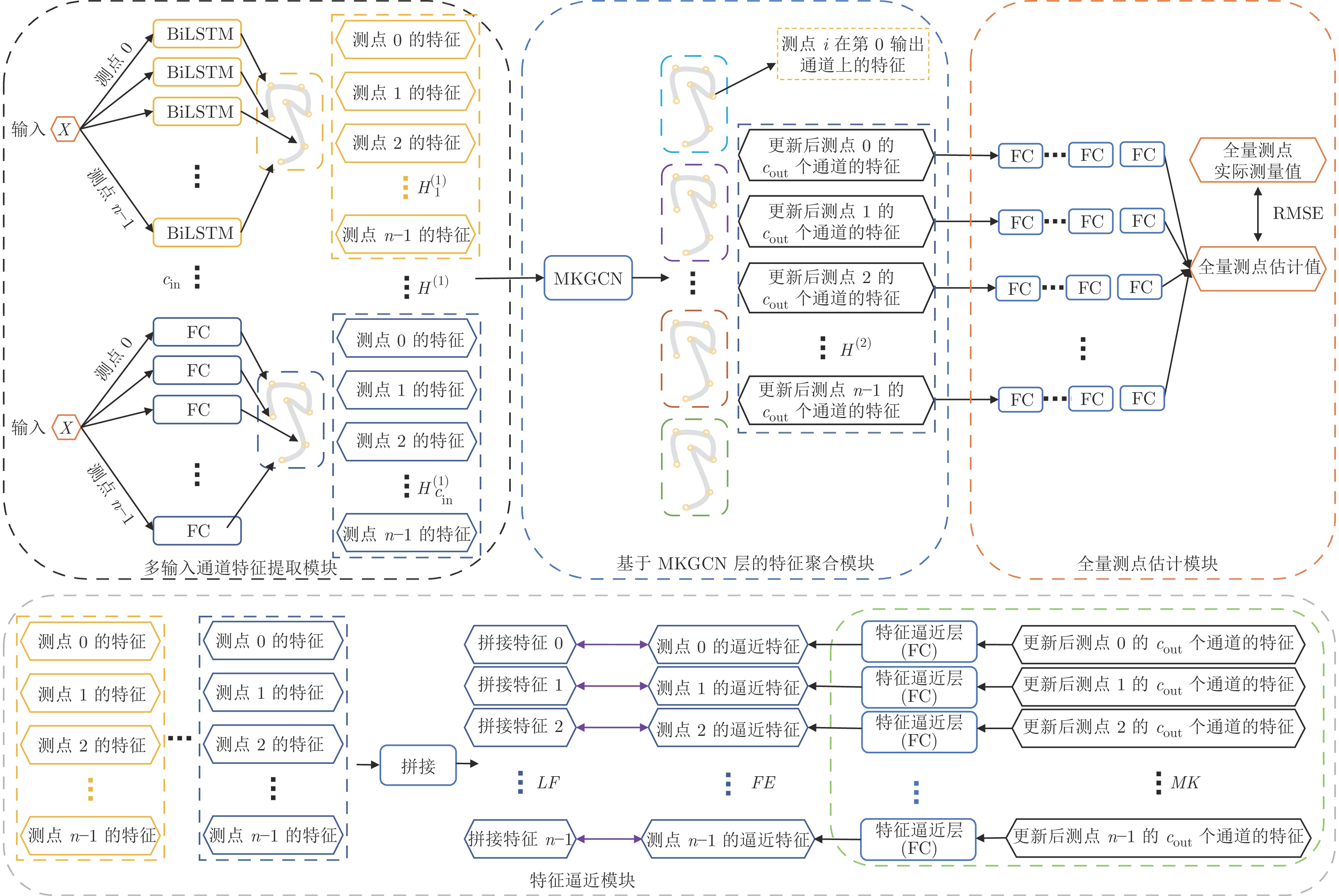

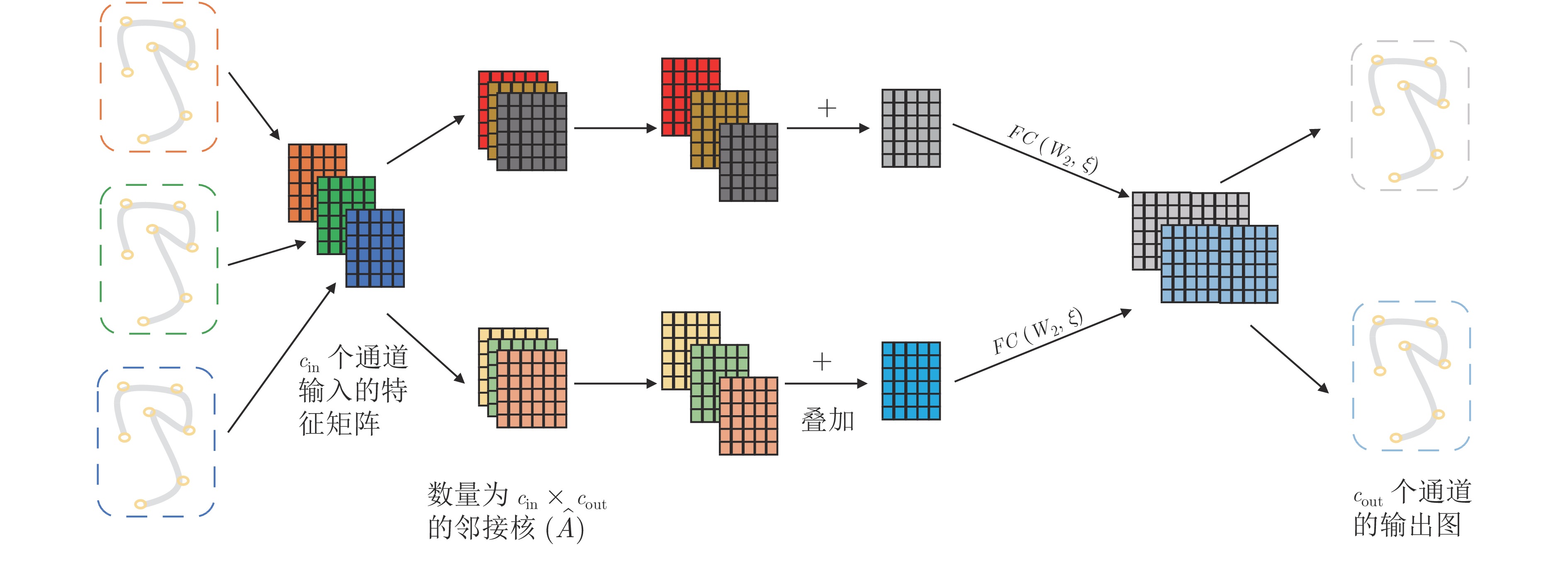

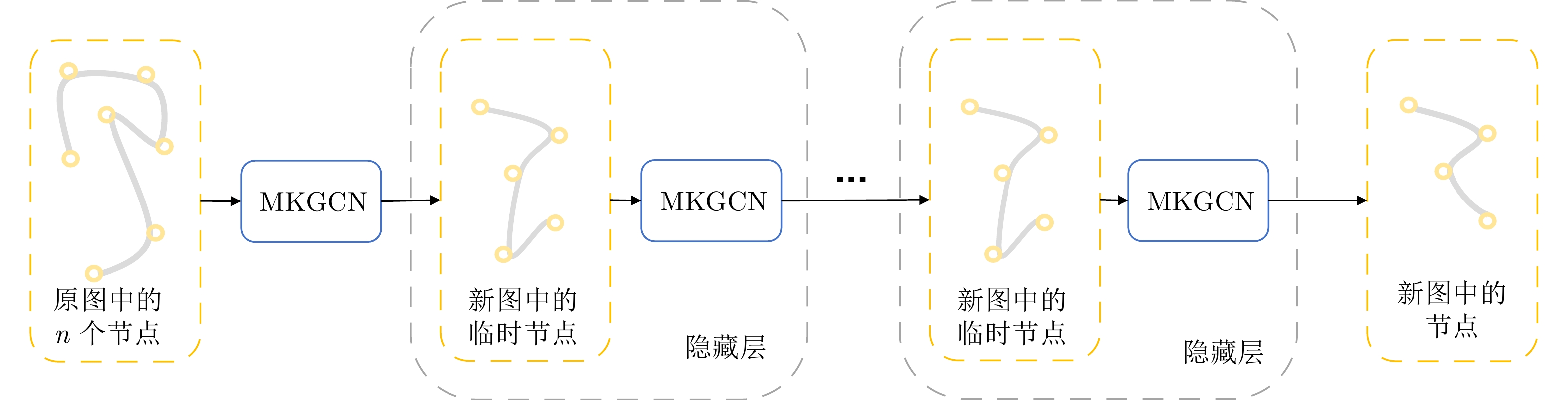

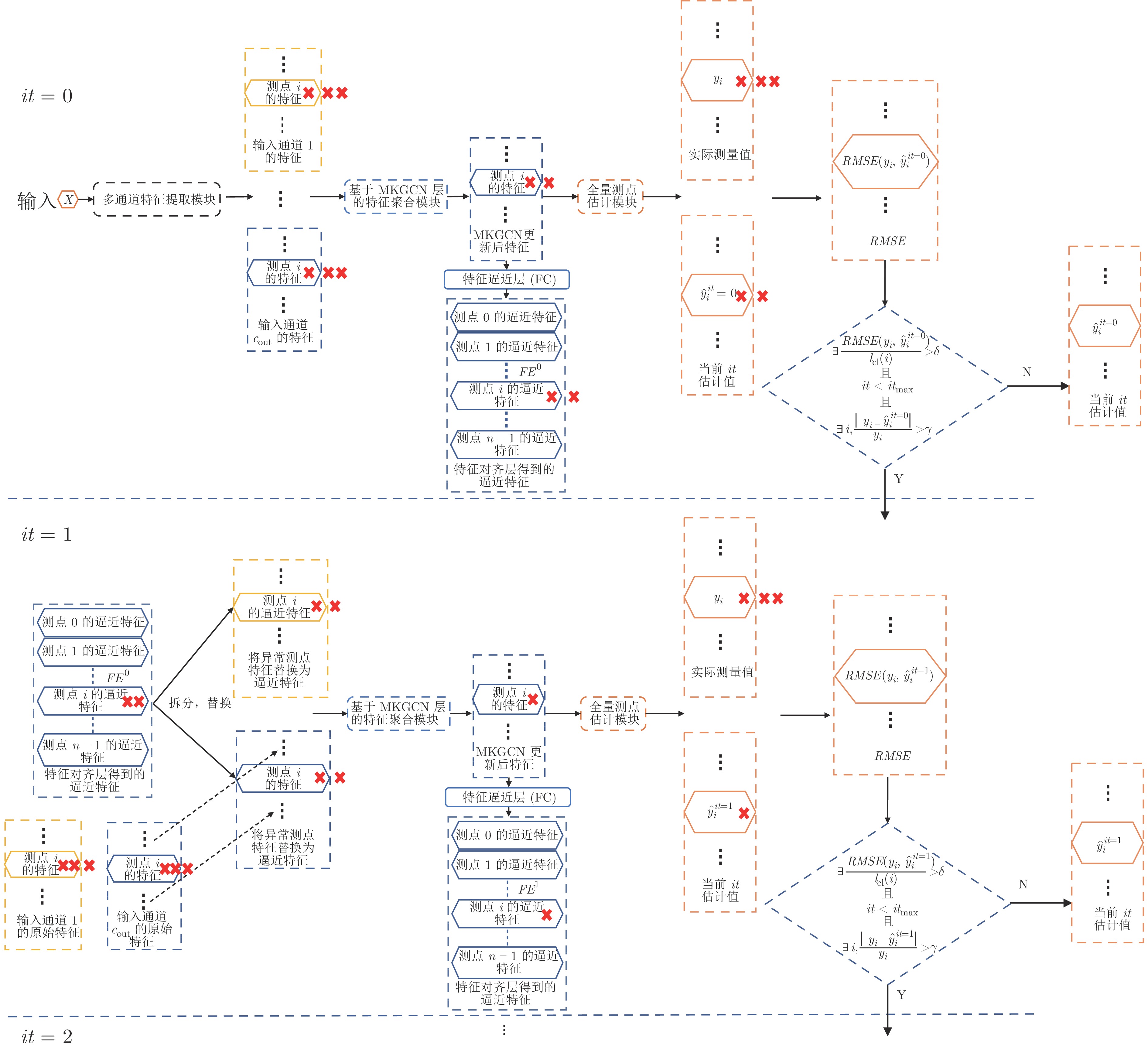

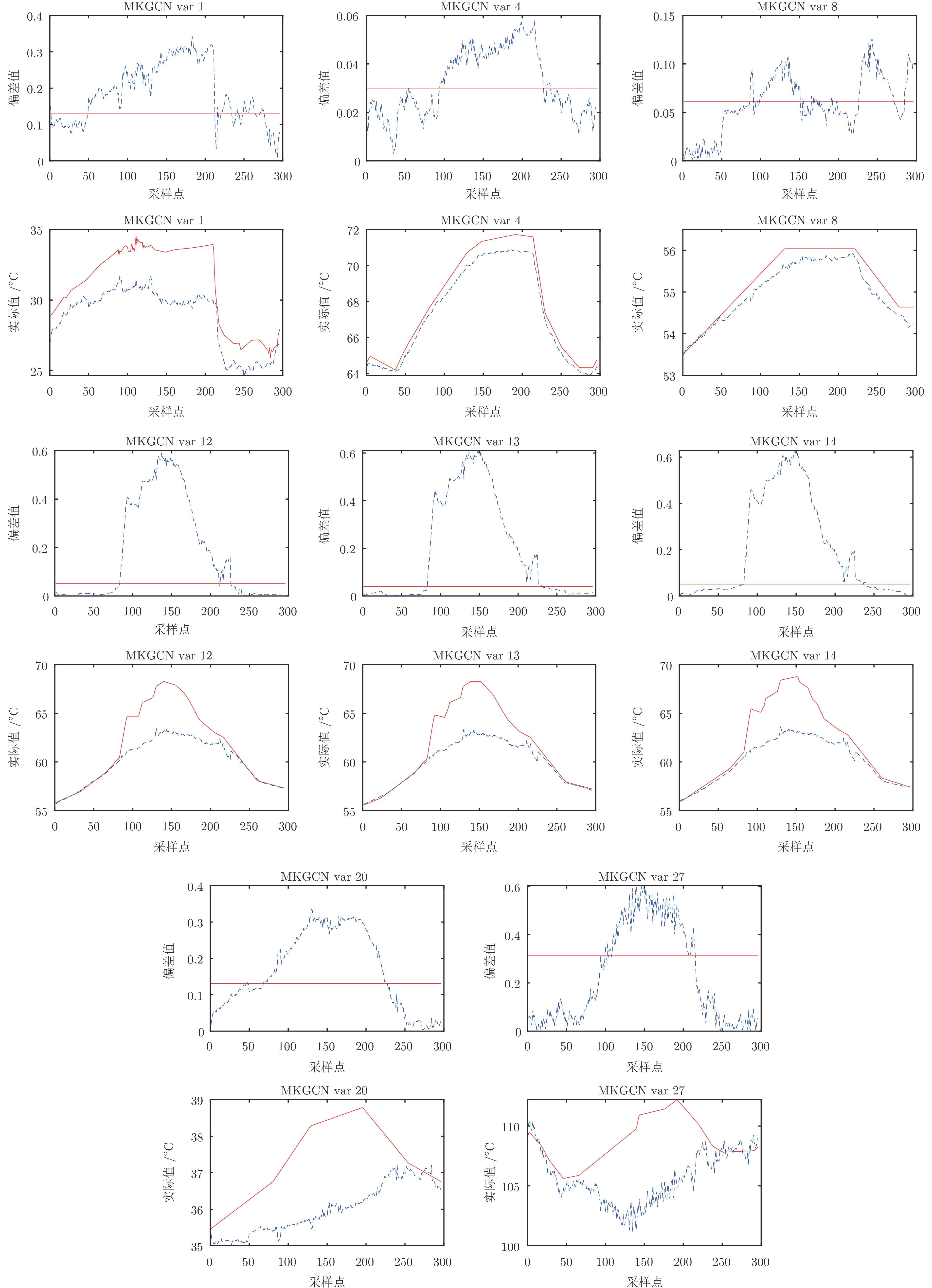

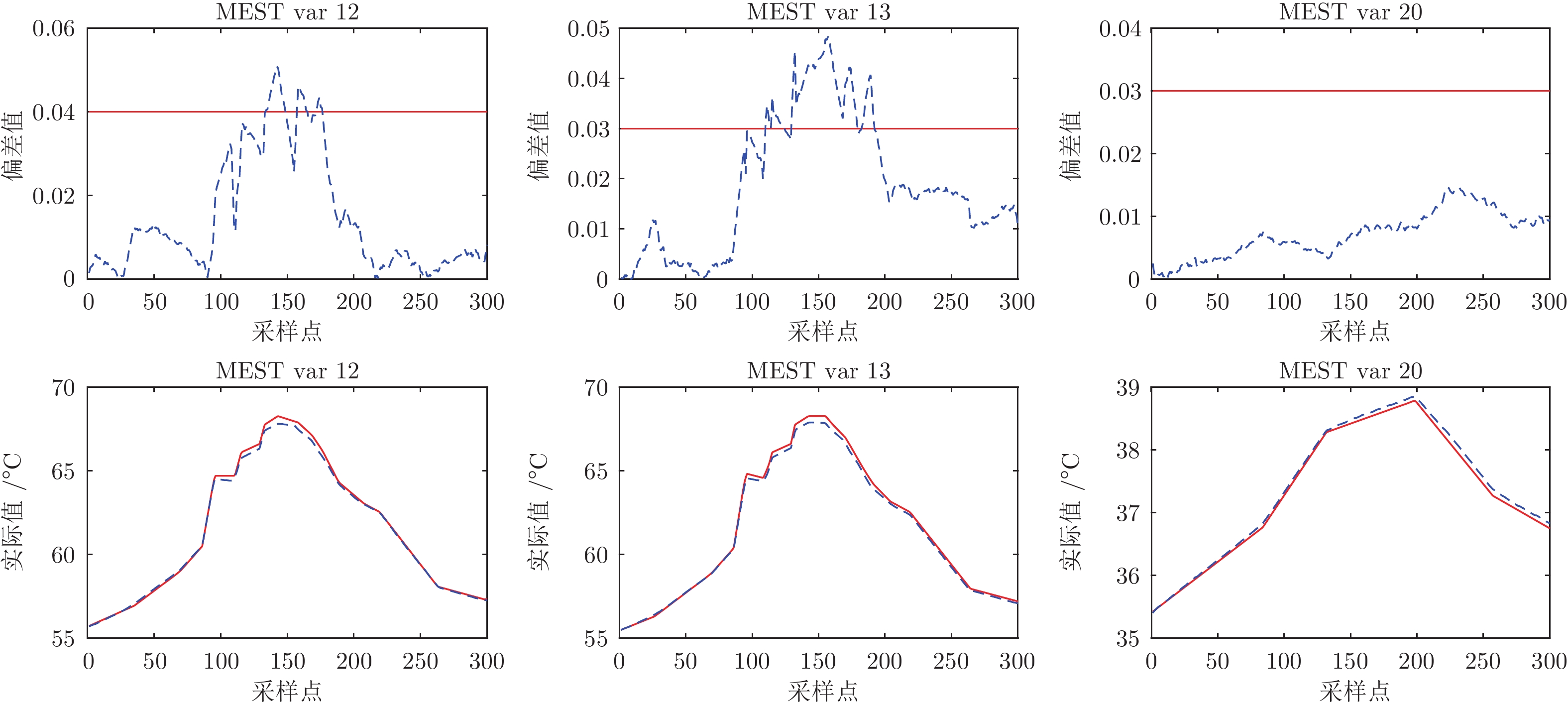

Abstract: In industrial practice, data are collected from a large number of measuring points during production so that the operating state of the process can be tracked. Traditional process monitoring methods usually only judge whether the overall operating state is abnormal, or grade the operating state into levels. Neither approach directly locates the faulty component, which hinders efficient fault maintenance. This paper therefore proposes a monitoring model based on total measurement point estimation: monitoring indicators are defined from the deviation between the estimated and actual values of every measuring point, so that each measuring point is monitored individually and accurately. To overcome the incomplete coverage of existing monitoring methods based on condition estimation, and their insufficient modeling of the coupling between measuring points, a multi-kernel graph convolutional network (MKGCN) is proposed. By treating all sensor measuring points as a single graph, MKGCN explicitly models the coupling relationships between measuring points and estimates the working condition of all of them synchronously. In addition, for online monitoring, a self-iteration method based on feature approximation is designed to address the problem that, under abnormal conditions, strong coupling between measuring points causes the estimates of some measuring points to become abnormal. The proposed method is verified on real data from an induced draft fan in a 1000 MW ultra-supercritical thermal power unit. The results show that, compared with other typical methods, it detects faulty measuring points more accurately.
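The abstract defines the monitoring indicator from the deviation between the estimated and actual value of each measuring point. A minimal sketch of that idea follows. It assumes the estimates are already available, and it uses a per-point quantile of the training residuals as the alarm threshold. The quantile rule, the function names, and the synthetic data are illustrative assumptions, not the paper's procedure.

```python
import numpy as np

def fit_thresholds(train_actual, train_estimated, quantile=0.99):
    """Per-point alarm thresholds from residuals on normal training data.

    Both arrays have shape (samples, points). The quantile rule is an
    assumption made for illustration only.
    """
    residual = np.abs(train_actual - train_estimated)
    return np.quantile(residual, quantile, axis=0)

def monitor(actual, estimated, thresholds):
    """Boolean alarm matrix of shape (samples, points)."""
    return np.abs(actual - estimated) > thresholds

rng = np.random.default_rng(0)
n_points = 33  # measuring points 0..32

# Synthetic "normal" data: the estimate tracks the actual value up to noise.
train_actual = rng.normal(size=(500, n_points))
train_estimated = train_actual + rng.normal(scale=0.05, size=(500, n_points))
thresholds = fit_thresholds(train_actual, train_estimated)

# Synthetic test data with a bias fault injected at measuring point 7.
test_actual = rng.normal(size=(100, n_points))
test_estimated = test_actual + rng.normal(scale=0.05, size=(100, n_points))
test_actual[:, 7] += 1.0

alarms = monitor(test_actual, test_estimated, thresholds)
# Flag a point as faulty when more than half of its samples raise an alarm.
faulty_points = np.flatnonzero(alarms.mean(axis=0) > 0.5)
```

Because every measuring point has its own residual and threshold, the alarm matrix points at the faulty sensor directly instead of reporting one system-wide verdict.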

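Table 1 Measuring points of induced draft fan

| No. | Physical quantity | No. | Physical quantity | No. | Physical quantity |
|---|---|---|---|---|---|
| 0 | Power signal (value selected from three) | 11 | Induced draft fan horizontal vibration | 22 | Induced draft fan oil tank temperature |
| 1 | Inlet air temperature | 12 | Induced draft fan rear bearing temperature 1 | 23 | Induced draft fan middle bearing temperature 1 |
| 2 | Induced draft fan motor stator coil temperature 1 | 13 | Induced draft fan rear bearing temperature 2 | 24 | Induced draft fan middle bearing temperature 2 |
| 3 | Induced draft fan motor stator coil temperature 2 | 14 | Induced draft fan rear bearing temperature 3 | 25 | Induced draft fan middle bearing temperature 3 |
| 4 | Induced draft fan motor stator coil temperature 3 | 15 | Induced draft fan key phase | 26 | Furnace pressure |
| 5 | Induced draft fan motor horizontal vibration 1 | 16 | Induced draft fan stator vane position feedback | 27 | Induced draft fan outlet air temperature |
| 6 | Induced draft fan motor horizontal vibration 2 | 17 | Induced draft fan front bearing temperature 1 | 28 | Induced draft fan inlet pressure |
| 7 | Induced draft fan motor bearing temperature 1 | 18 | Induced draft fan front bearing temperature 2 | 29 | Induced draft fan outlet air pressure |
| 8 | Induced draft fan motor bearing temperature 2 | 19 | Induced draft fan front bearing temperature 3 | 30 | Induced draft fan stator vane opening command |
| 9 | Induced draft fan current | 20 | Induced draft fan lubricating oil temperature | 31 | Total fuel quantity |
| 10 | Induced draft fan vertical vibration | 21 | Induced draft fan lubricating oil pressure | 32 | Furnace pressure |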

Table 2 Structure of working condition estimation model based on MKGCN layer

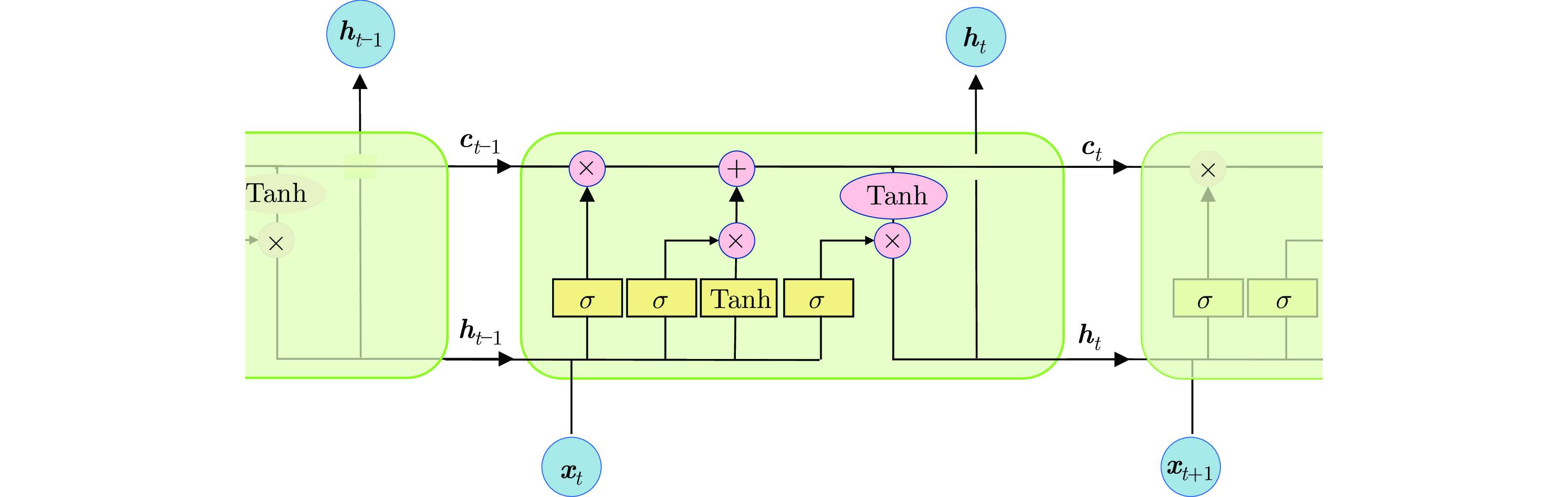

| No. | Layer | Count | Parameters | Activation |
|---|---|---|---|---|
| 1 | BiLSTM | $n$ | $[\mathrm{input\_size} = len,\ \mathrm{hidden\_size} = ld]$ | None |
|  | FC | $n$ | $[\mathrm{input\_size} = len,\ \mathrm{output\_size} = 2 \times ld]$ |  |
| 2 | MKGCN | $1$ | $[c_{\rm in} = 1,\ no_{\rm in} = n,\ fe_{\rm in} = 2 \times ld,\ c_{\rm out} = oc,\ no_{\rm out} = n,\ fe_{\rm out} = 4 \times ld]$ | Tanh |
| 3 | FC 0 | $n$ | $[\mathrm{input\_size} = 4 \times ld,\ \mathrm{output\_size} = 2 \times ld]$ | Tanh |
| 4 | FC 1 | $n$ | $[\mathrm{input\_size} = 2 \times ld,\ \mathrm{output\_size} = 1]$ | Tanh |
| 5 | FC 2 | $n$ | $[\mathrm{input\_size} = oc,\ \mathrm{output\_size} = 1]$ | None |
| 6 | Feature approximation layer (FC) | $n$ | $[\mathrm{input\_size} = oc,\ \mathrm{output\_size} = 1]$ | None |

Table 3 Structure of working condition estimation model based on GCN

| No. | Layer | Count | Parameters | Activation |
|---|---|---|---|---|
| 1 | BiLSTM | $n$ | $[\mathrm{input\_size} = len,\ \mathrm{hidden\_size} = ld]$ | None |
| 2 | GCN | 1 | $[\mathrm{in\_feature} = 2 \times ld,\ \mathrm{out\_feature} = 4 \times ld]$ | Tanh |
| 3 | FC 0 | $n$ | $[\mathrm{input\_size} = 4 \times ld,\ \mathrm{output\_size} = 2 \times ld]$ | Tanh |
| 4 | FC 1 | $n$ | $[\mathrm{input\_size} = 2 \times ld,\ \mathrm{output\_size} = ld]$ | Tanh |
| 5 | FC 2 | $n$ | $[\mathrm{input\_size} = ld,\ \mathrm{output\_size} = 1]$ | None |
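To make the GCN row of Table 3 concrete, the sketch below applies one generic graph convolution that maps $2 \times ld$ input features per measuring point to $4 \times ld$ output features, followed by Tanh. It uses the widely known symmetric-normalization propagation rule. Whether the baseline uses exactly this normalization is an assumption, and the random adjacency matrix is a hypothetical placeholder. This is not the MKGCN layer.

```python
import numpy as np

def gcn_layer(H, A, W):
    """One graph convolution: tanh(D^-1/2 (A + I) D^-1/2 H W).

    H: (n, in_feature) node features
    A: (n, n) symmetric adjacency without self-loops
    W: (in_feature, out_feature) weight matrix
    """
    A_hat = A + np.eye(A.shape[0])                 # add self-loops
    d_inv_sqrt = 1.0 / np.sqrt(A_hat.sum(axis=1))  # degrees are >= 1
    A_norm = A_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]
    return np.tanh(A_norm @ H @ W)

n, ld = 33, 64  # 33 measuring points; ld = 64 chosen for illustration
rng = np.random.default_rng(0)

# Hypothetical symmetric adjacency; the real graph is not given here.
A = (rng.random((n, n)) > 0.8).astype(float)
A = np.maximum(A, A.T)
np.fill_diagonal(A, 0.0)

H = rng.normal(size=(n, 2 * ld))                  # in_feature = 2 * ld
W = rng.normal(scale=0.1, size=(2 * ld, 4 * ld))  # out_feature = 4 * ld
out = gcn_layer(H, A, W)
```

Each row of `out` mixes a point's own features with those of its graph neighbors, which is how a graph layer exposes coupling between measuring points to the later fully connected layers.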

Table 4 Model implementation and parameter grid search range

| Method | Python package | Hyperparameters | Search range |
|---|---|---|---|
| PLSR | scikit-learn | $nc$ | $nc = \{5, 10, 15, 20, 25\}$ |
| ELM | D.C. Lambert | $E, \alpha$ | $E = \{50, 100, 150, 200, 250\}$, $\alpha = \{0.1, 0.3, 0.5, 0.7, 0.9\}$ |
| FC | PaddlePaddle | $ld$ | $ld = \{8, 16, 32, 64, 128\}$ |
| BiLSTM | PaddlePaddle | $ld$ | $ld = \{8, 16, 32, 64, 128\}$ |
| Conv1D | PaddlePaddle | $ld$ | $ld = \{8, 16, 32, 64, 128\}$ |
| GCN | PaddlePaddle | $ld$ | $ld = \{8, 16, 32, 64, 128\}$ |
| MKGCN | PaddlePaddle | $ld, oc$ | $ld = \{8, 16, 32, 64, 128\}$, $oc = \{2, 4, 8, 16, 32\}$ |
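The search over the MKGCN ranges in Table 4 amounts to evaluating every $(ld, oc)$ pair and keeping the best one. The sketch below shows that loop. The scoring function is a hypothetical stand-in for training a model and measuring its validation error. Its minimum is placed at $ld = 8$, $oc = 32$ purely so the result is easy to check. It does not reproduce any experiment.

```python
from itertools import product

# Candidate values for the two MKGCN hyperparameters in Table 4.
SEARCH_SPACE = {
    "ld": [8, 16, 32, 64, 128],
    "oc": [2, 4, 8, 16, 32],
}

def grid_search(evaluate, space):
    """Return (best_params, best_score), minimizing evaluate(**params)."""
    names = list(space)
    best_params, best_score = None, float("inf")
    for values in product(*(space[name] for name in names)):
        params = dict(zip(names, values))
        score = evaluate(**params)
        if score < best_score:
            best_params, best_score = params, score
    return best_params, best_score

def toy_validation_error(ld, oc):
    # Hypothetical objective; a real run would train and validate a model.
    return abs(ld - 8) / 128 + abs(oc - 32) / 32

best_params, best_score = grid_search(toy_validation_error, SEARCH_SPACE)
n_candidates = len(list(product(*SEARCH_SPACE.values())))
```

With five values per hyperparameter the grid has 25 candidates, so an exhaustive search stays affordable for a single shared model.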

Table 5 Grid search results and total parameters of deep neural network methods with optimal hyperparameters

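| Method | Optimal hyperparameters | Number of models | Total parameters |
|---|---|---|---|
| PLSR | $nc = 15$ | $n$ | / |
| ELM | $E = 200, \alpha = 0.9$ | $n$ | / |
| MEST | / | / | / |
| FC | $ld = 128$ | $n$ | $5 \times 10^5$ |
| BiLSTM | $ld = 128$ | $n$ | $6.9 \times 10^6$ |
| Conv1D | $ld = 128$ | $n$ | $9 \times 10^5$ |
| GCN | $ld = 64$ | $1$ | $9.8 \times 10^6$ |
| MKGCN | $ld = 8, oc = 32$ | $1$ | $1.8 \times 10^5$ |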

Table 6 Results of different working condition estimation models on test data (RMSE)

| Variable | PLSR | ELM | FC | BiLSTM | Conv1D | GCN | MEST | MKGCN |
|---|---|---|---|---|---|---|---|---|
| $\text{var} \in N$ | 0.042 | 0.064 | 0.059 | 0.052 | 0.060 | 0.042 | 0.005 | 0.044 |
| $\text{var} \in F$ | 0.046 | 0.076 | 0.059 | 0.049 | 0.082 | 0.049 | 0.006 | 0.046 |

Table 7 Results of different working condition estimation models on test data (MAE)

| Variable | PLSR | ELM | FC | BiLSTM | Conv1D | GCN | MEST | MKGCN |
|---|---|---|---|---|---|---|---|---|
| $\text{var} \in N$ | 0.034 | 0.052 | 0.049 | 0.043 | 0.051 | 0.034 | 0.004 | 0.036 |
| $\text{var} \in F$ | 0.039 | 0.066 | 0.050 | 0.041 | 0.070 | 0.043 | 0.005 | 0.039 |
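For reference, the two error measures reported in Tables 6 and 7 can be computed per measuring point as sketched below. How the per-point values are aggregated over the groups $N$ and $F$ is not stated here. Averaging them over the points in a group would be one plausible choice, but that is an assumption. The small arrays are made-up numbers.

```python
import numpy as np

def rmse(actual, estimated):
    """Root mean square error per measuring point (column)."""
    return np.sqrt(np.mean((actual - estimated) ** 2, axis=0))

def mae(actual, estimated):
    """Mean absolute error per measuring point (column)."""
    return np.mean(np.abs(actual - estimated), axis=0)

# Two samples (rows) of two measuring points (columns); illustrative only.
actual = np.array([[0.0, 1.0],
                   [2.0, 3.0]])
estimated = np.array([[0.0, 2.0],
                      [2.0, 1.0]])

per_point_rmse = rmse(actual, estimated)
per_point_mae = mae(actual, estimated)
```

RMSE penalizes large deviations more heavily than MAE, so a model with occasional large misses shows a wider gap between the two measures.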

Table 8 Monitoring indicators on monitoring data $( \text{var} \in N)$

| Indicator | PLSR | ELM | FC | BiLSTM | Conv1D | GCN | MEST | MKGCN |
|---|---|---|---|---|---|---|---|---|
| $\mathrm{False}_\mathrm{p}$ | 13.267 | 29.573 | 34.267 | 27.392 | 42.581 | 23.568 | 2.853 | 4.500 |
| $\mathrm{False}_\mathrm{n}$ | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| F1 | 92.895 | 82.648 | 79.324 | 84.131 | 72.951 | 86.642 | 98.553 | 97.698 |

Table 9 Monitoring indicators on monitoring data $( \text{var} \in F)$

| Indicator | PLSR | ELM | FC | BiLSTM | Conv1D | GCN | MEST | MKGCN |
|---|---|---|---|---|---|---|---|---|
| $\mathrm{False}_\mathrm{p}$ | 15.958 | 30.583 | 31.375 | 32.390 | 37.162 | 10.769 | 0 | 10.769 |
| $\mathrm{False}_\mathrm{n}$ | 24.250 | 5.042 | 5.917 | 1.140 | 1.774 | 6.968 | 33.208 | 1.056 |
| F1 | 79.681 | 80.203 | 79.362 | 80.302 | 76.644 | 91.092 | 80.090 | 93.836 |
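The F1 values in Tables 8 and 9 are numerically consistent with the harmonic mean of $100 - \mathrm{False}_\mathrm{p}$ and $100 - \mathrm{False}_\mathrm{n}$, all in percent. This relation is inferred from the tabulated numbers, not taken from a stated definition, so the sketch below should be read as a consistency check under that assumption.

```python
def f1_from_rates(false_p, false_n):
    """Harmonic mean of (100 - false_p) and (100 - false_n), in percent.

    Assumes the two complements play the roles of precision and recall;
    this reading is inferred from the tables, not from a stated definition.
    """
    precision_like = 100.0 - false_p
    recall_like = 100.0 - false_n
    return 2.0 * precision_like * recall_like / (precision_like + recall_like)

# PLSR on var in F (Table 9) and MKGCN on var in N (Table 8).
plsr_f = f1_from_rates(15.958, 24.250)
mkgcn_n = f1_from_rates(4.500, 0.0)
```

Under this reading, a method with zero $\mathrm{False}_\mathrm{p}$ but a high $\mathrm{False}_\mathrm{n}$, such as MEST on $\text{var} \in F$, still receives a modest F1 because the harmonic mean is pulled toward the weaker of the two terms.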

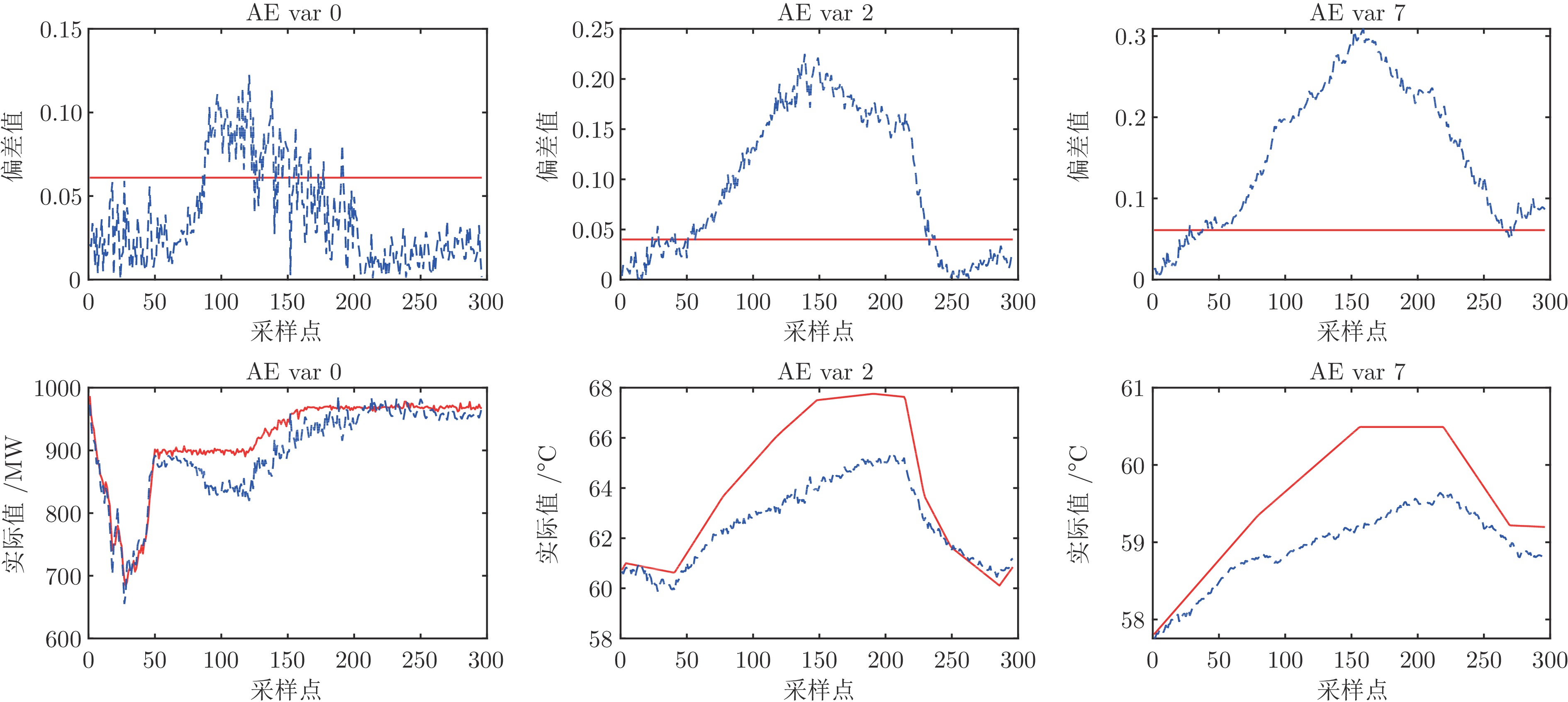

Table 10 Structure of working condition estimation model based on AE

| No. | Layer | Count | Parameters | Activation |
|---|---|---|---|---|
| 1 | BiLSTM | 1 | $[\mathrm{input\_size} = len,\ \mathrm{hidden\_size} = 2 \times ld]$ | None |
| 2 | FC 0 | 1 | $[\mathrm{input\_size} = 4 \times ld,\ \mathrm{output\_size} = 2 \times ld]$ | Tanh |
| 3 | FC 1 | 1 | $[\mathrm{input\_size} = 2 \times ld,\ \mathrm{output\_size} = ld]$ | Tanh |
| 4 | FC 2 | 1 | $[\mathrm{input\_size} = ld,\ \mathrm{output\_size} = 2 \times ld]$ | Tanh |
| 5 | FC 3 | 1 | $[\mathrm{input\_size} = 2 \times ld,\ \mathrm{output\_size} = n]$ | None |

Table 11 Comparison of experimental results between AE and MKGCN (The experimental results of MKGCN are the same as Tables 6 ~ 9)

| Indicator | AE | MKGCN |
|---|---|---|
| RMSE, $\text{var} \in N$ | 0.020 | 0.044 |
| RMSE, $\text{var} \in F$ | 0.022 | 0.046 |
| MAE, $\text{var} \in N$ | 0.016 | 0.036 |
| MAE, $\text{var} \in F$ | 0.019 | 0.039 |
| $\mathrm{False}_\mathrm{p}$, $\text{var} \in N$ | 38.811 | 4.500 |
| $\mathrm{False}_\mathrm{n}$, $\text{var} \in N$ | 0 | 0 |
| F1, $\text{var} \in N$ | 75.922 | 97.698 |
| $\mathrm{False}_\mathrm{p}$, $\text{var} \in F$ | 35.009 | 10.769 |
| $\mathrm{False}_\mathrm{n}$, $\text{var} \in F$ | 0.887 | 1.056 |
| F1, $\text{var} \in F$ | 78.505 | 93.836 |

Table 12 Performance comparison between single output channel and multiple output channels

| Indicator | $oc = 1$ | $oc = 32$ |
|---|---|---|
| RMSE, $\text{var} \in N$ | 0.043 | 0.044 |
| RMSE, $\text{var} \in F$ | 0.055 | 0.046 |
| MAE, $\text{var} \in N$ | 0.035 | 0.036 |
| MAE, $\text{var} \in F$ | 0.049 | 0.039 |
| $\mathrm{False}_\mathrm{p}$, $\text{var} \in N$ | 38.486 | 4.500 |
| $\mathrm{False}_\mathrm{n}$, $\text{var} \in N$ | 0 | 0 |
| F1, $\text{var} \in N$ | 76.172 | 97.698 |
| $\mathrm{False}_\mathrm{p}$, $\text{var} \in F$ | 36.022 | 10.769 |
| $\mathrm{False}_\mathrm{n}$, $\text{var} \in F$ | 5.237 | 1.056 |
| F1, $\text{var} \in F$ | 76.385 | 93.836 |

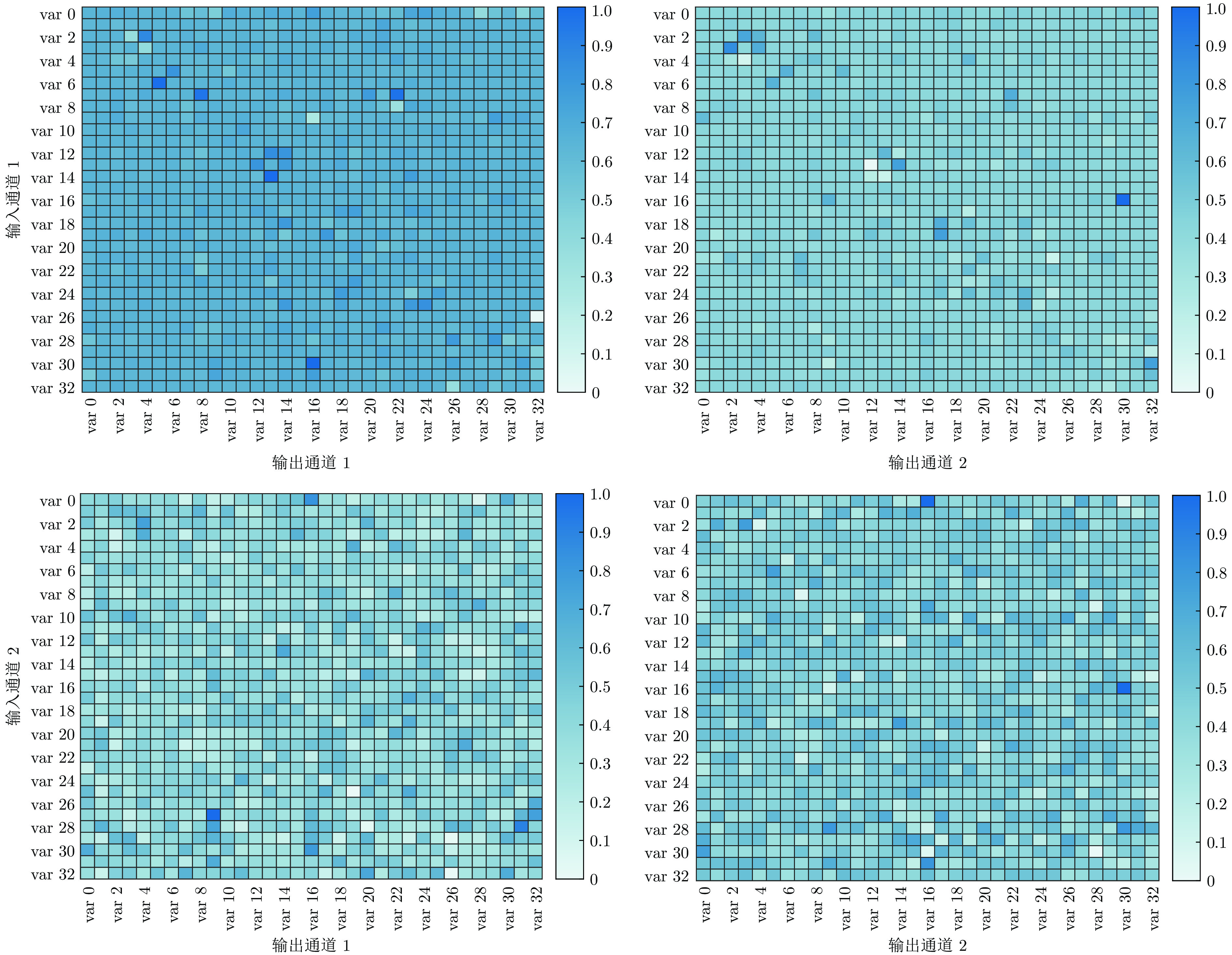

Table 13 Performance comparison between single input channel and multiple input channels

| Indicator | $c_{\rm in}^{1}$ | $c_{\rm in}^{2}$ | $c_{\rm in}^{1,2}$ |
|---|---|---|---|
| RMSE, $\text{var} \in N$ | 0.084 | 0.046 | 0.044 |
| RMSE, $\text{var} \in F$ | 0.044 | 0.044 | 0.046 |
| MAE, $\text{var} \in N$ | 0.072 | 0.038 | 0.036 |
| MAE, $\text{var} \in F$ | 0.037 | 0.038 | 0.039 |
| $\mathrm{False}_\mathrm{p}$, $\text{var} \in N$ | 22.703 | 5.527 | 4.500 |
| $\mathrm{False}_\mathrm{n}$, $\text{var} \in N$ | 0 | 0 | 0 |
| F1, $\text{var} \in N$ | 87.195 | 97.158 | 97.698 |
| $\mathrm{False}_\mathrm{p}$, $\text{var} \in F$ | 33.405 | 15.372 | 10.769 |
| $\mathrm{False}_\mathrm{n}$, $\text{var} \in F$ | 16.765 | 10.093 | 1.056 |
| F1, $\text{var} \in F$ | 73.991 | 87.187 | 93.836 |

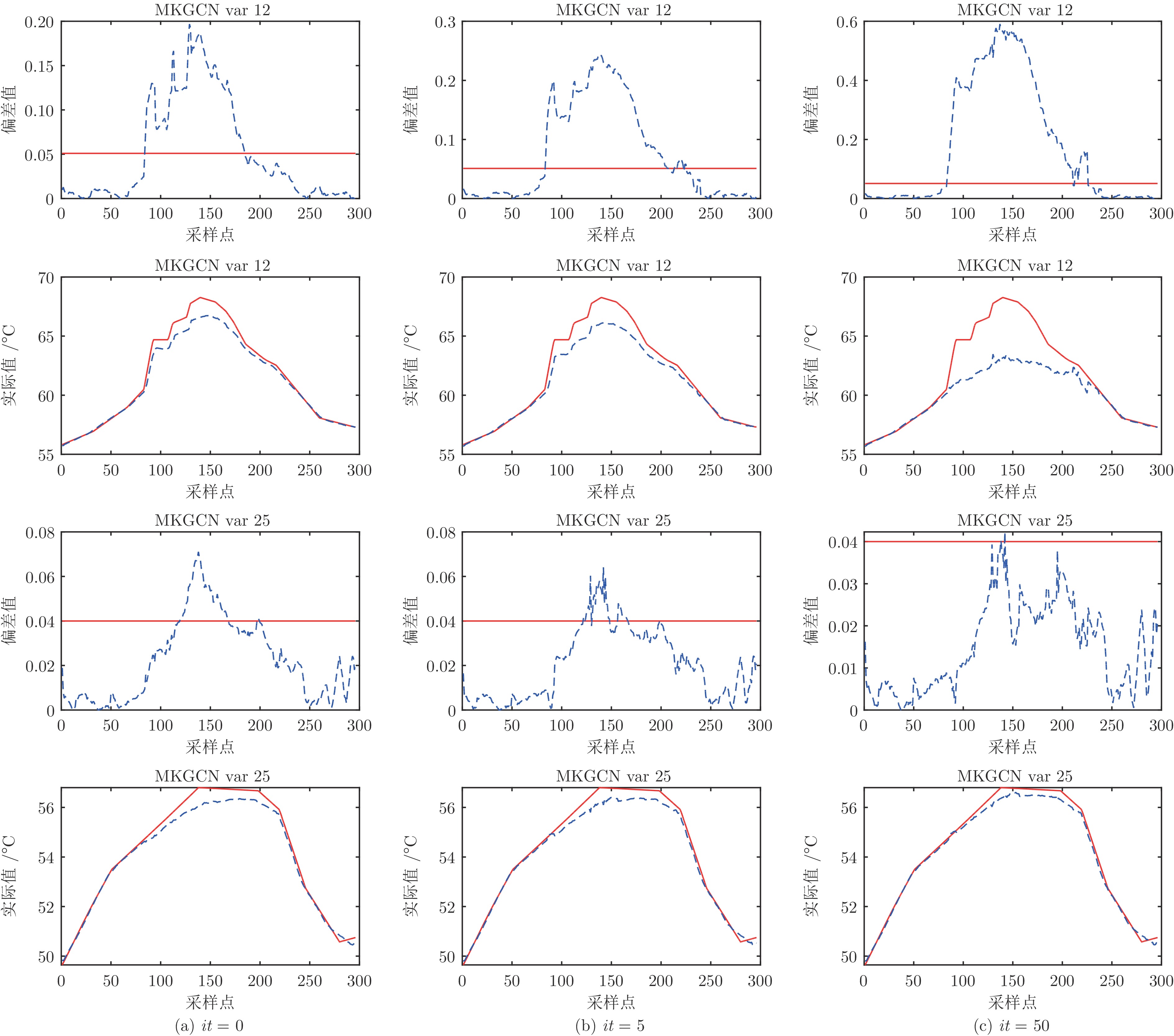

Table 14 Comparison of self-iteration effect

| Indicator | $it = 0$ | $it = 5$ | $it = 50$ |
|---|---|---|---|
| $\mathrm{False}_\mathrm{p}$, $\text{var} \in N$ | 11.662 | 7.527 | 4.500 |
| $\mathrm{False}_\mathrm{n}$, $\text{var} \in N$ | 0 | 0 | 0 |
| F1, $\text{var} \in N$ | 93.808 | 96.089 | 97.698 |
| $\mathrm{False}_\mathrm{p}$, $\text{var} \in F$ | 11.740 | 12.289 | 10.769 |
| $\mathrm{False}_\mathrm{n}$, $\text{var} \in F$ | 1.732 | 0.676 | 1.055 |
| F1, $\text{var} \in F$ | 93.000 | 93.157 | 93.837 |

