A Detection Method for the Interlacing Degree of Filament Yarn Based on Semantic Information Enhancement


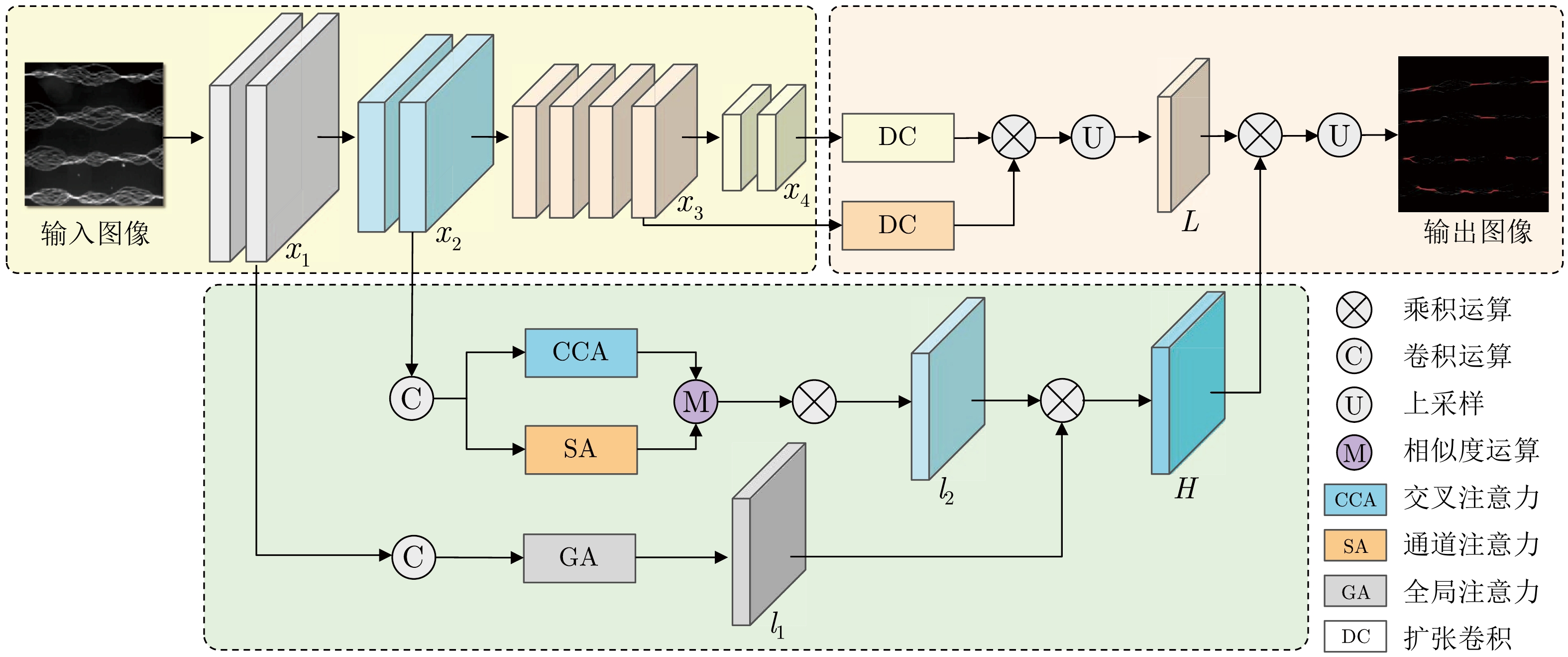

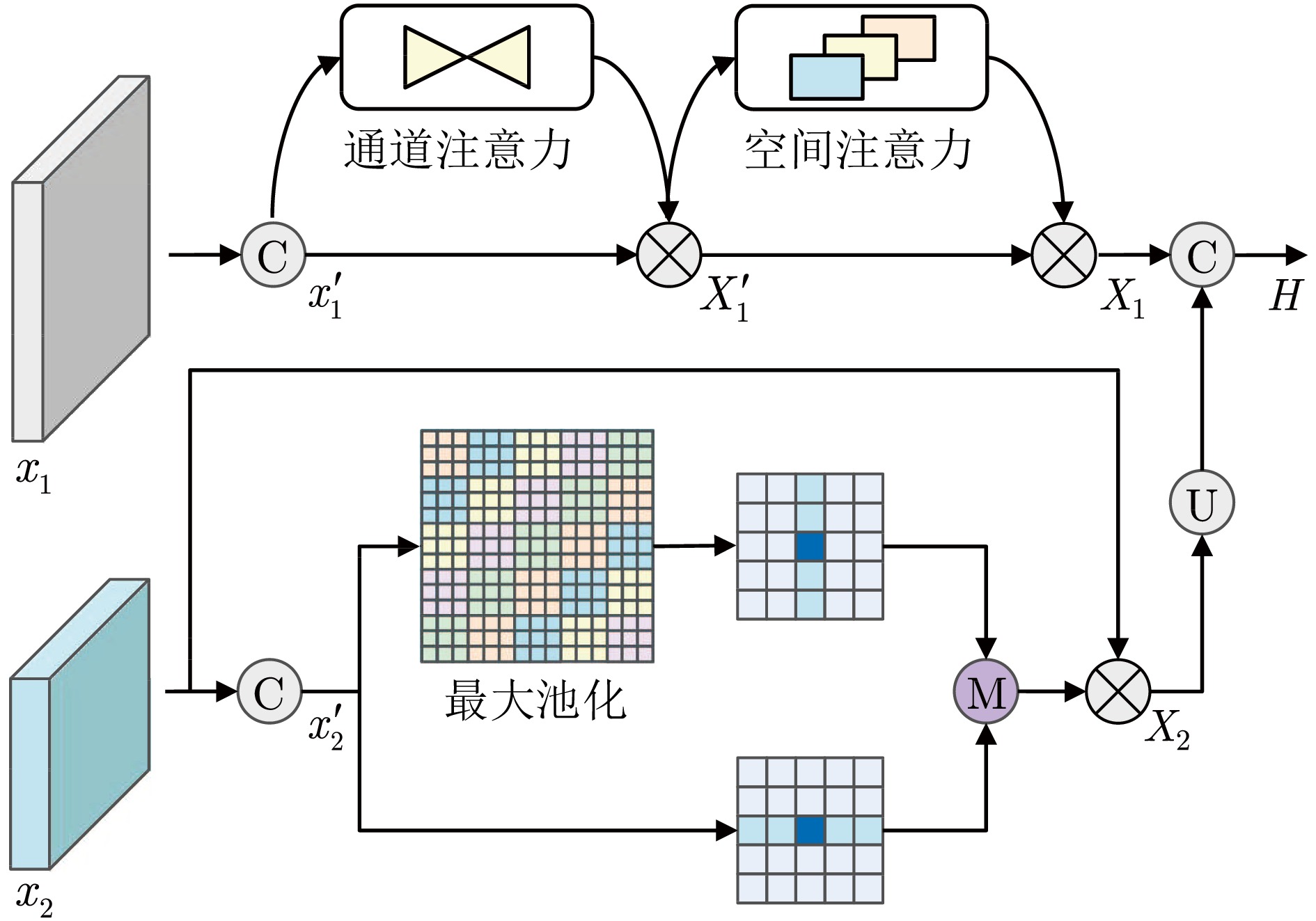

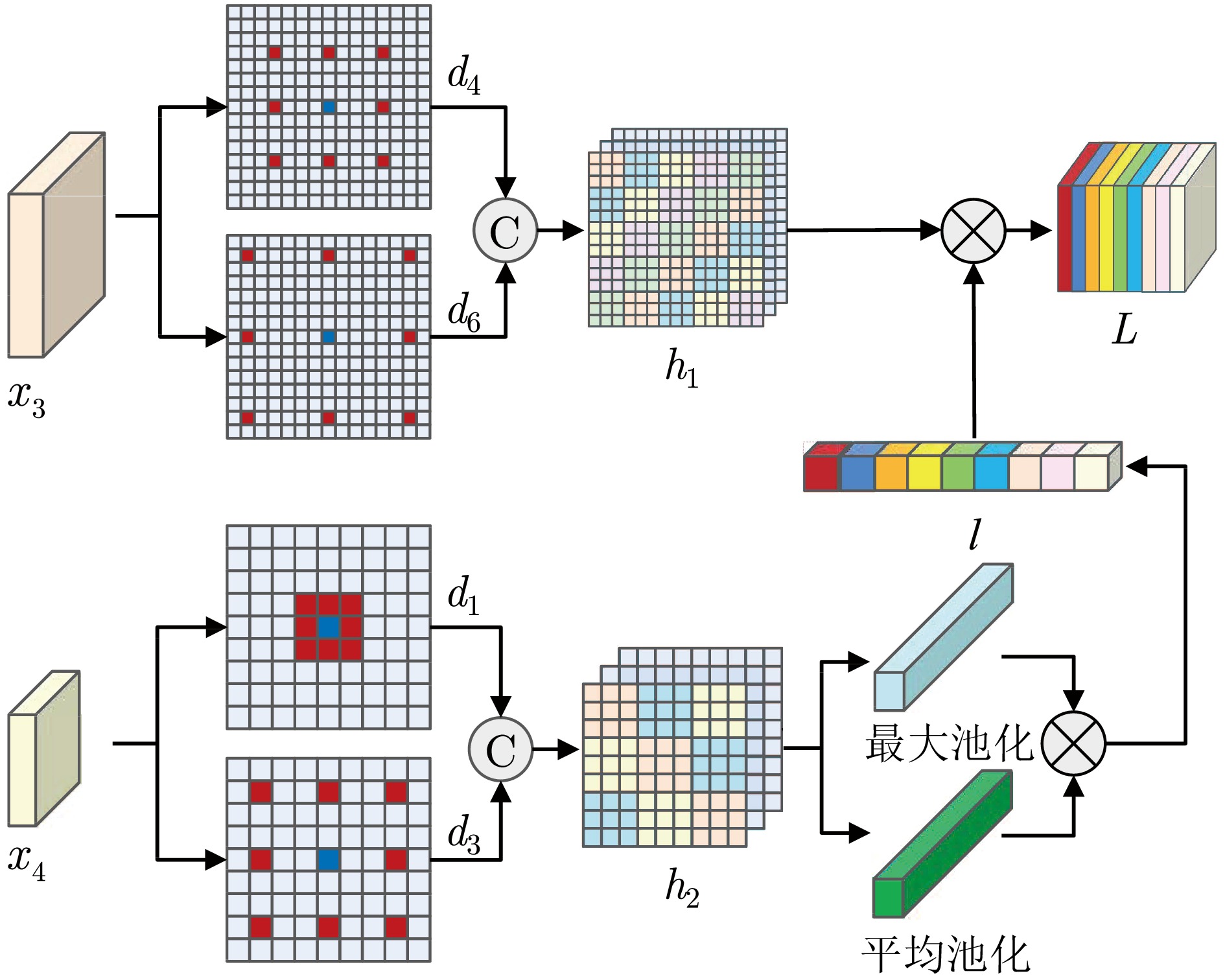

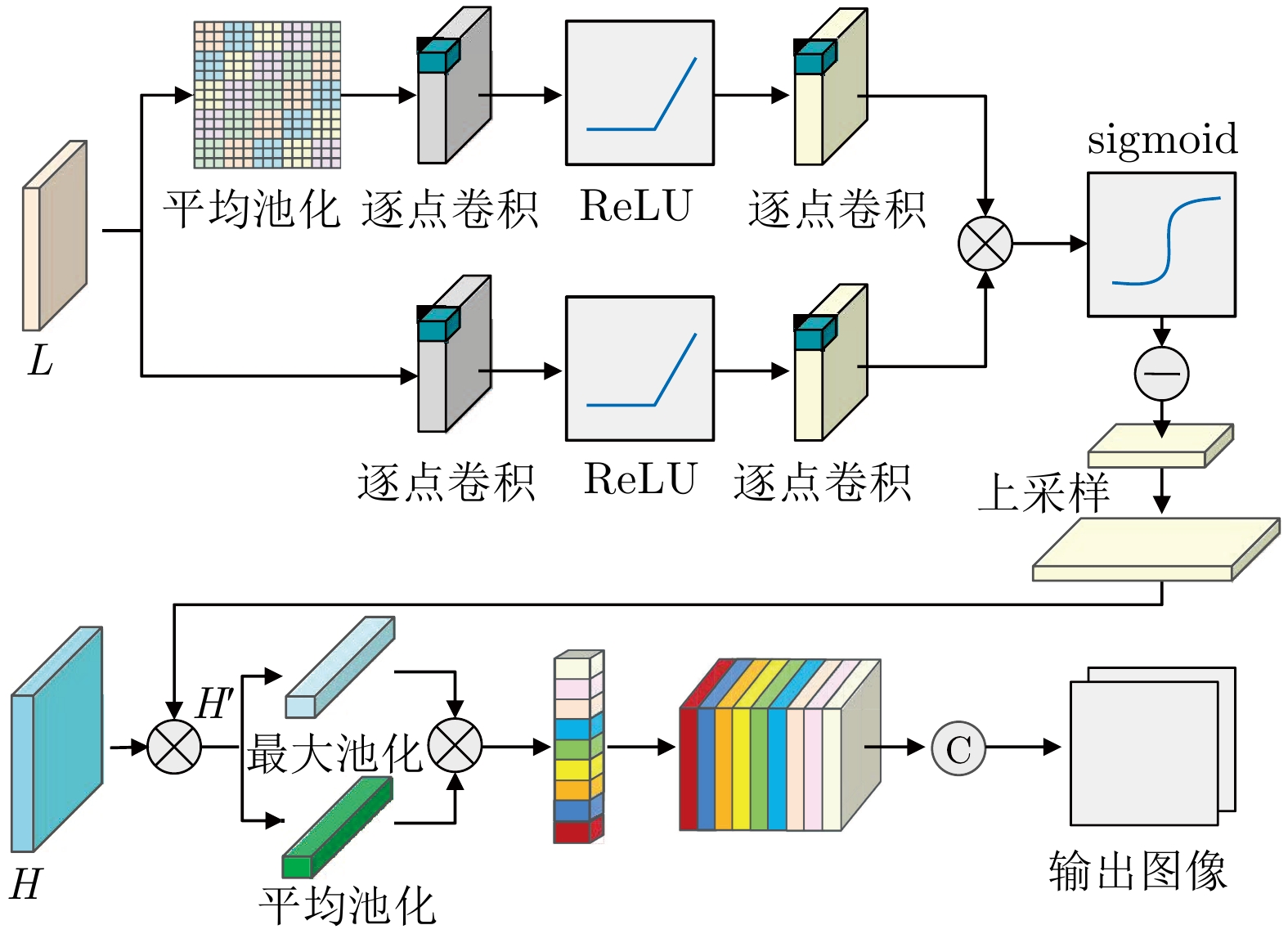

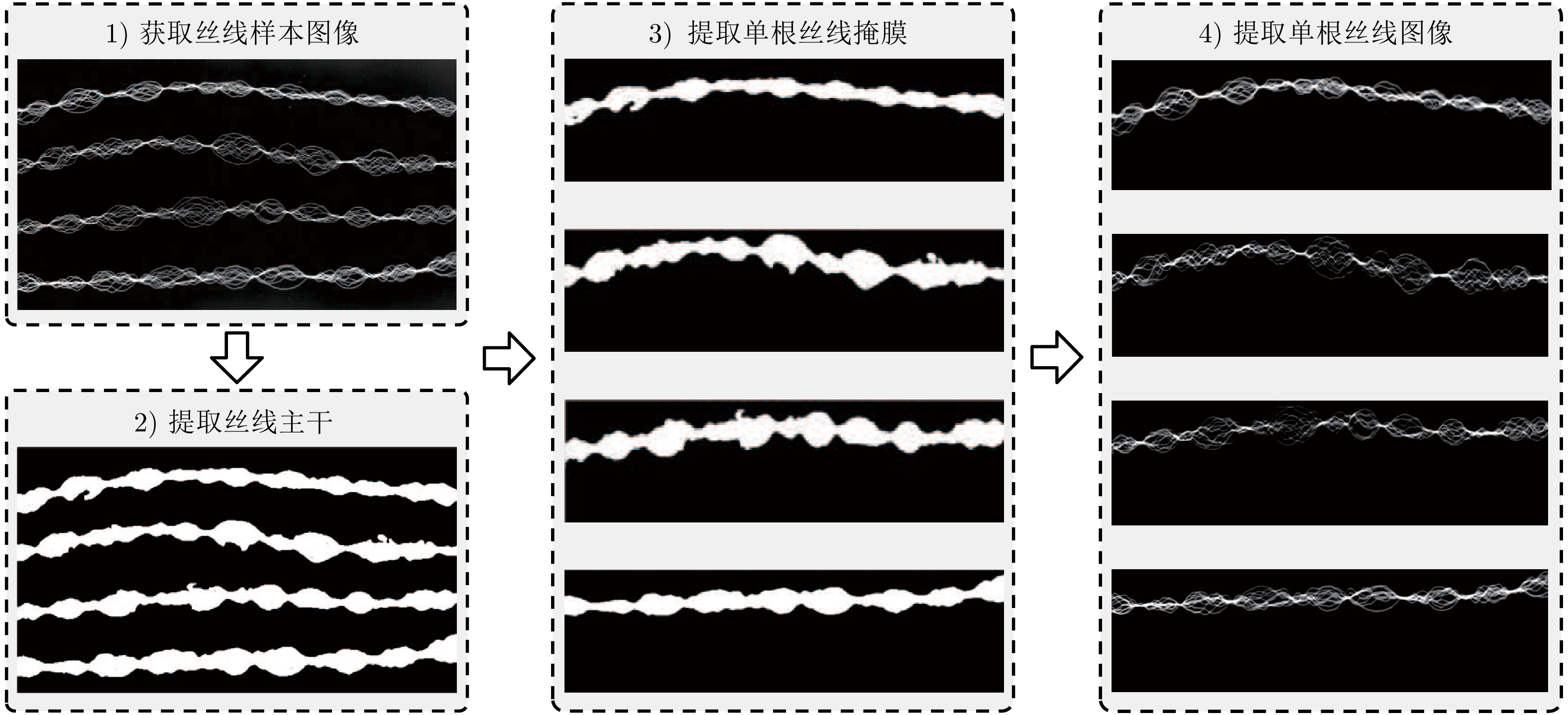

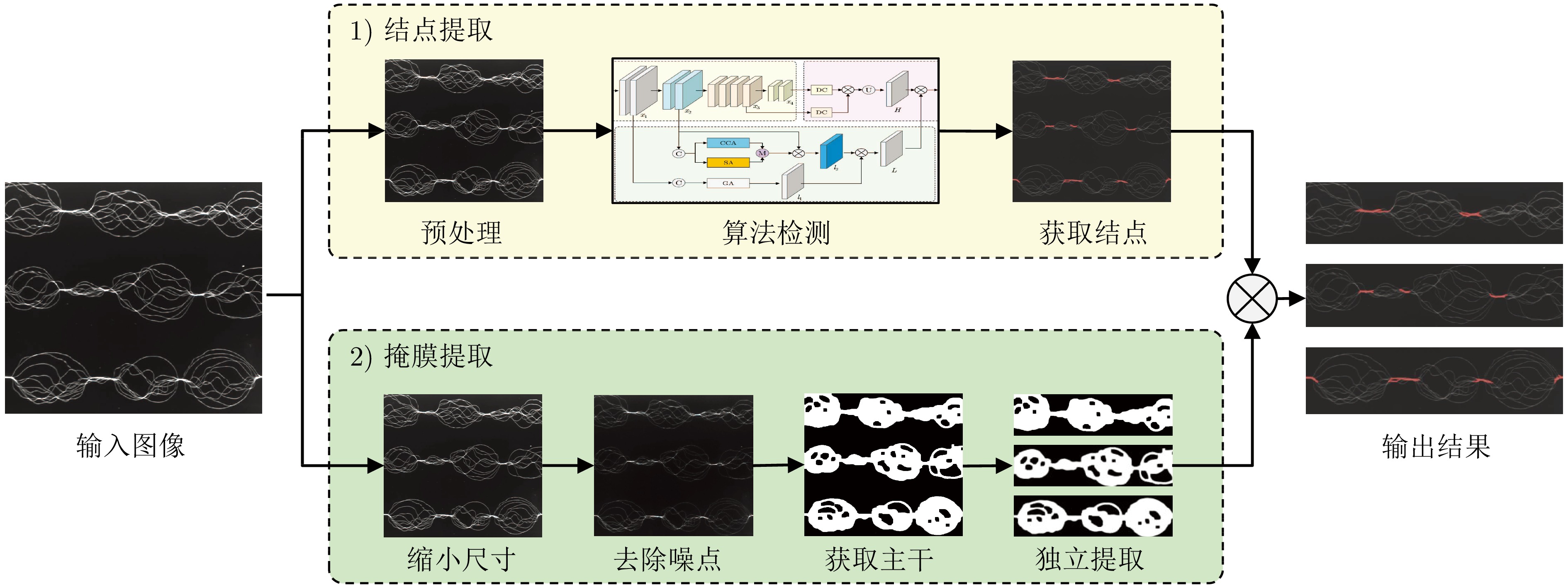

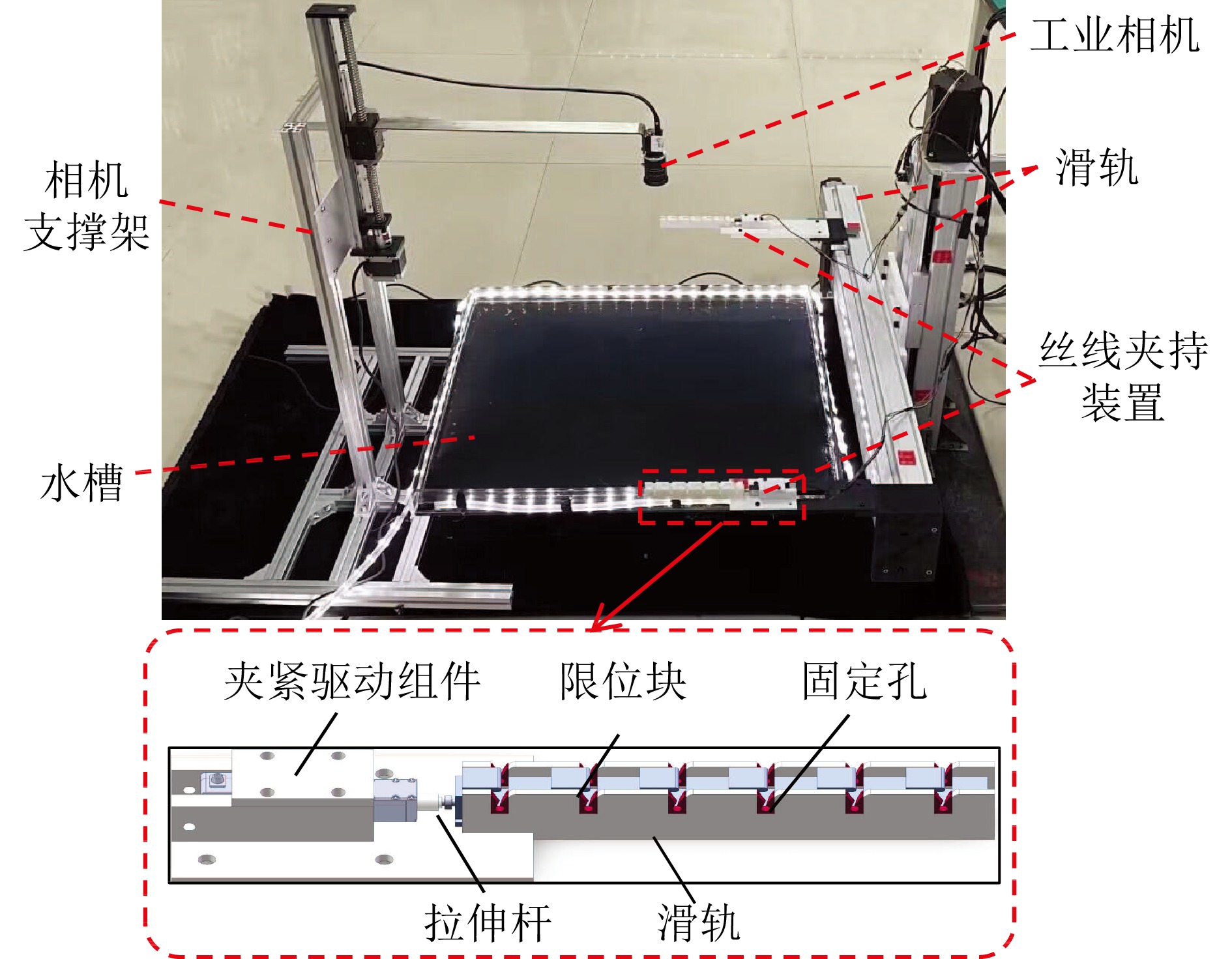

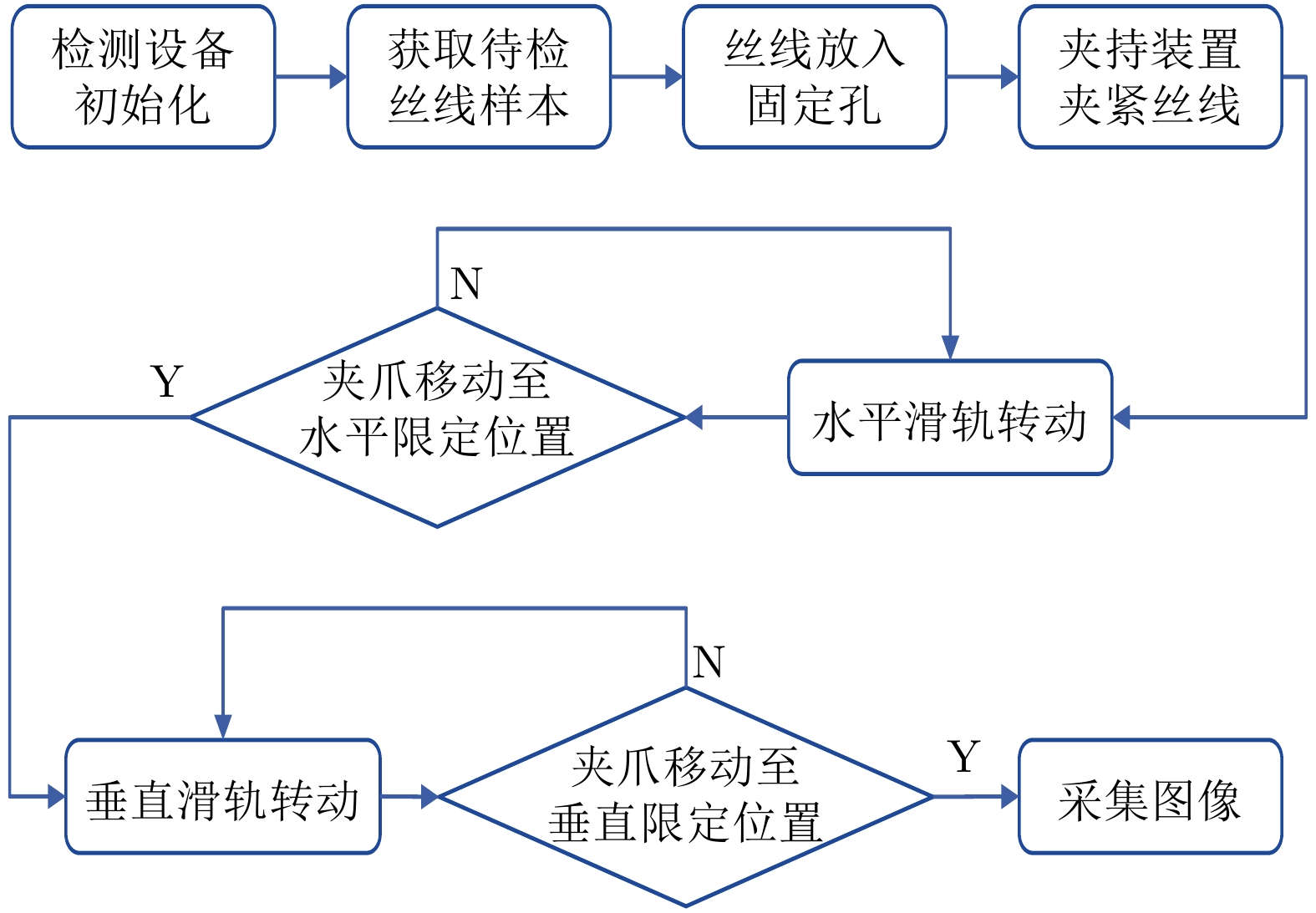

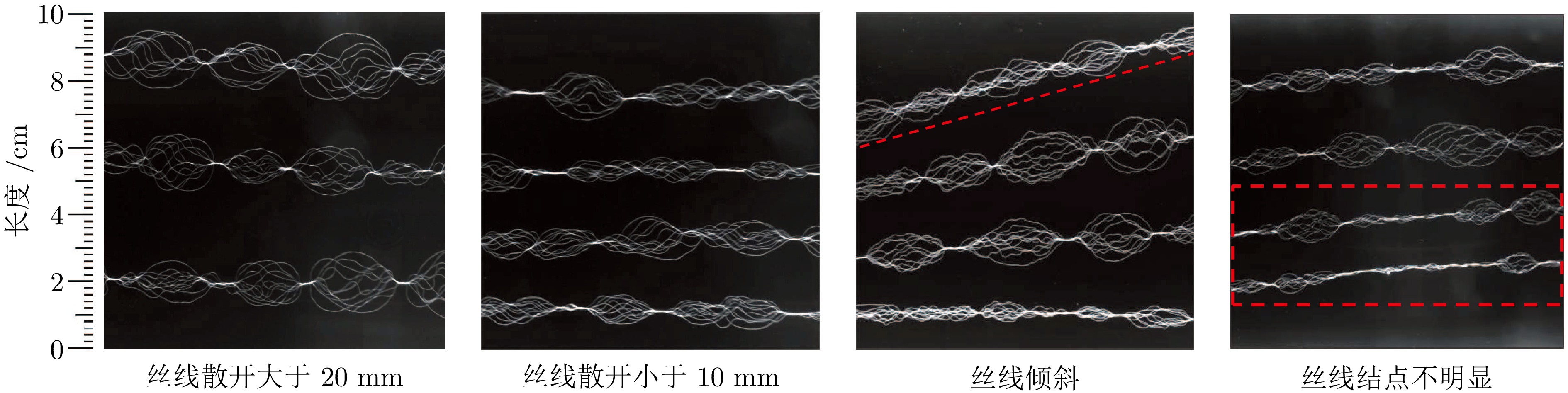

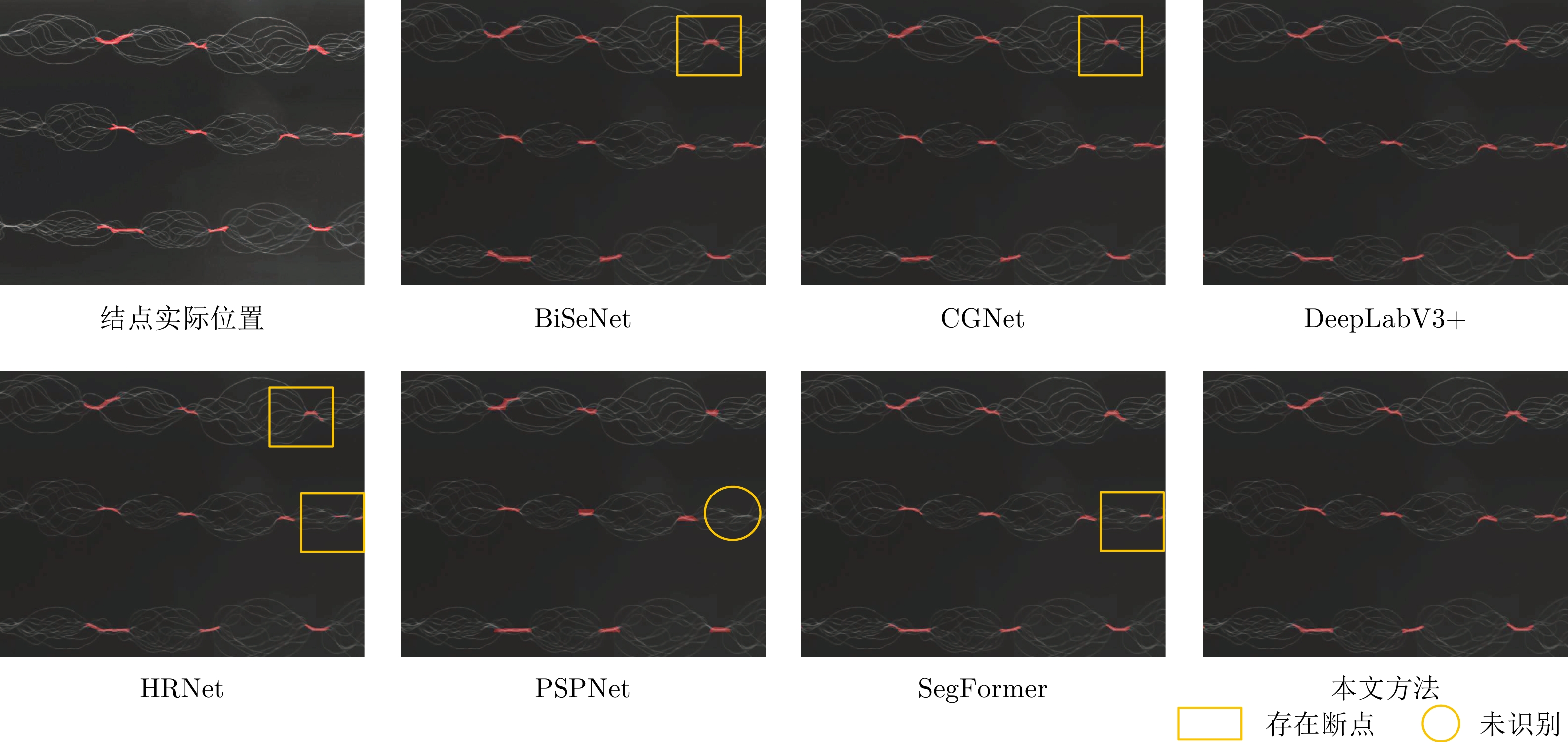

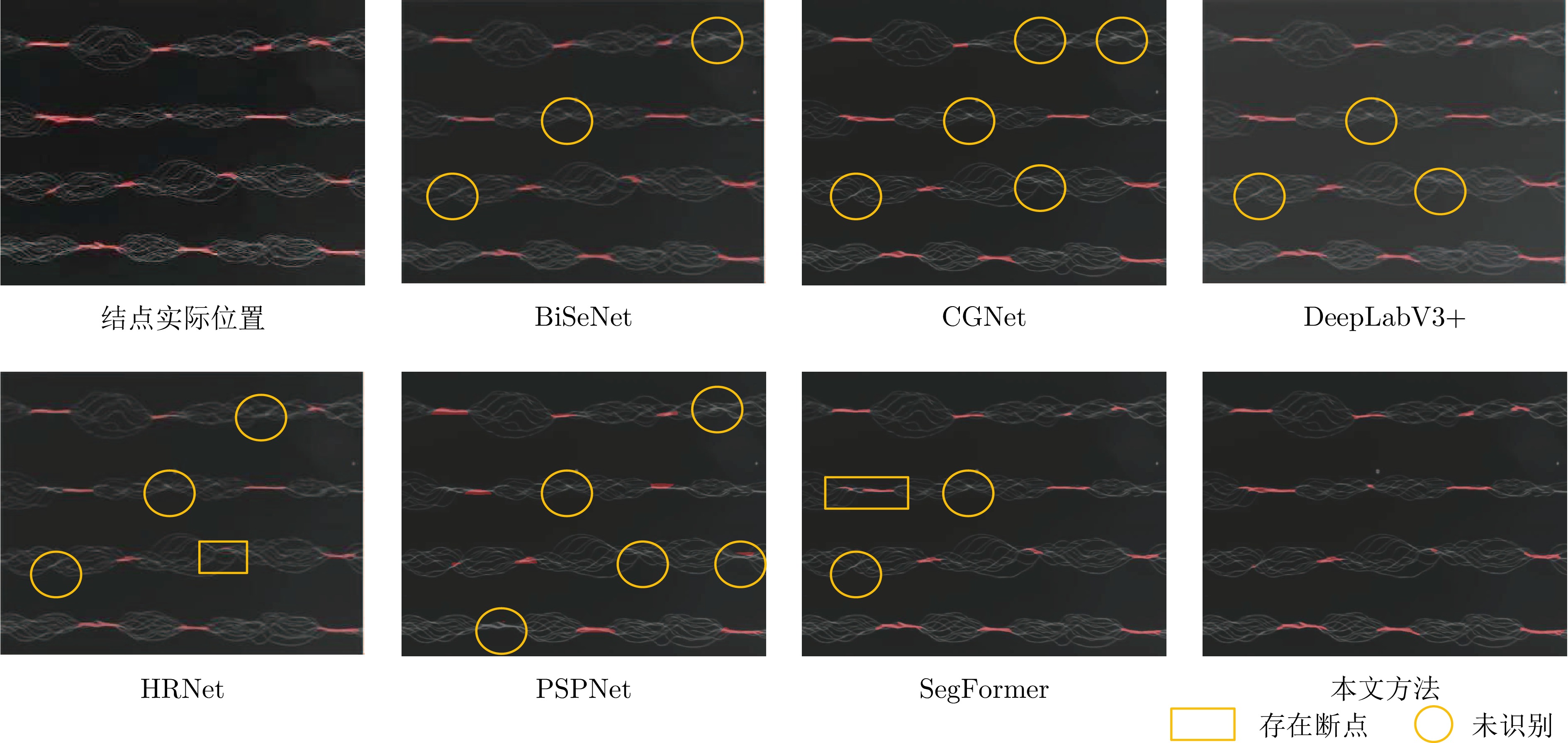

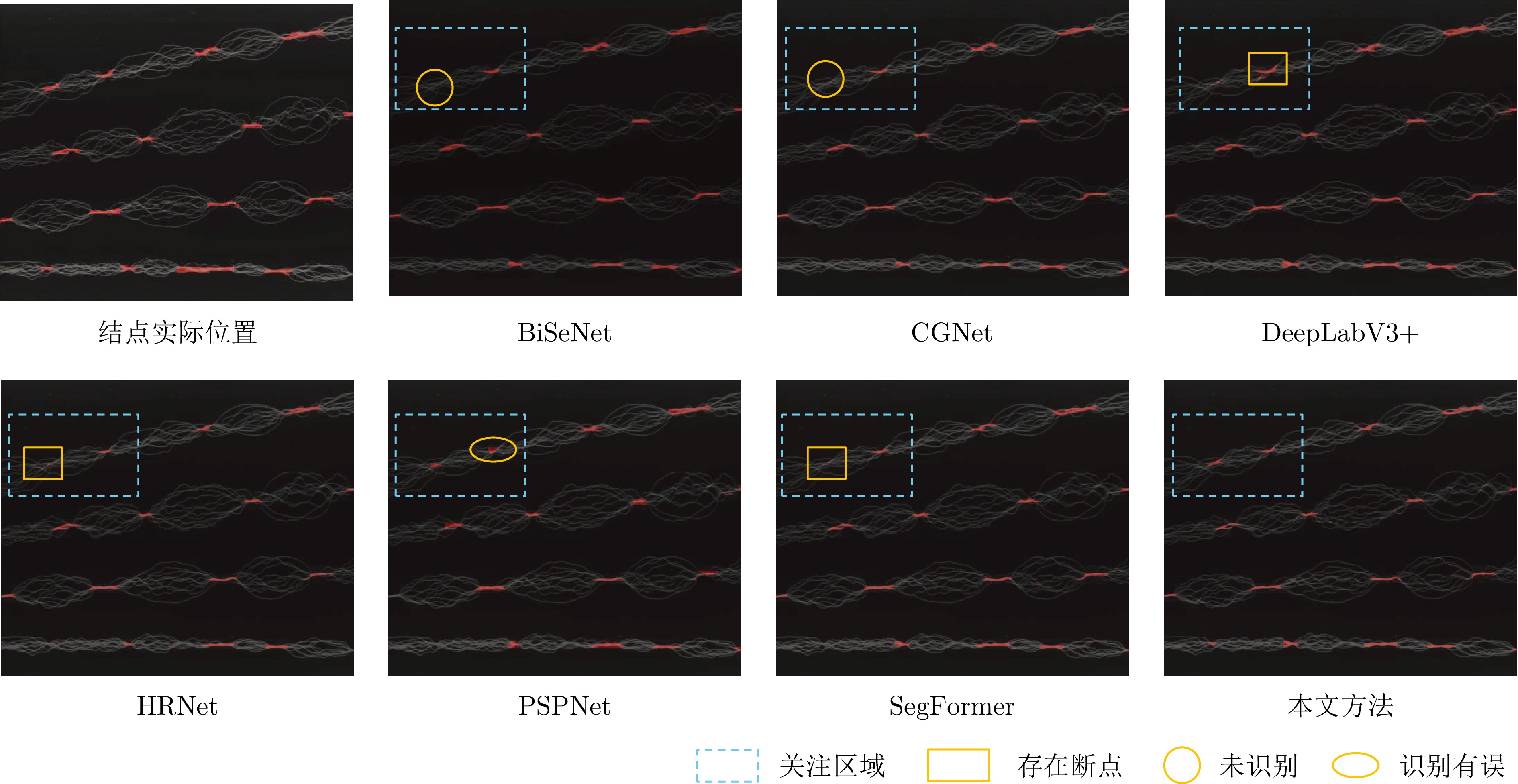

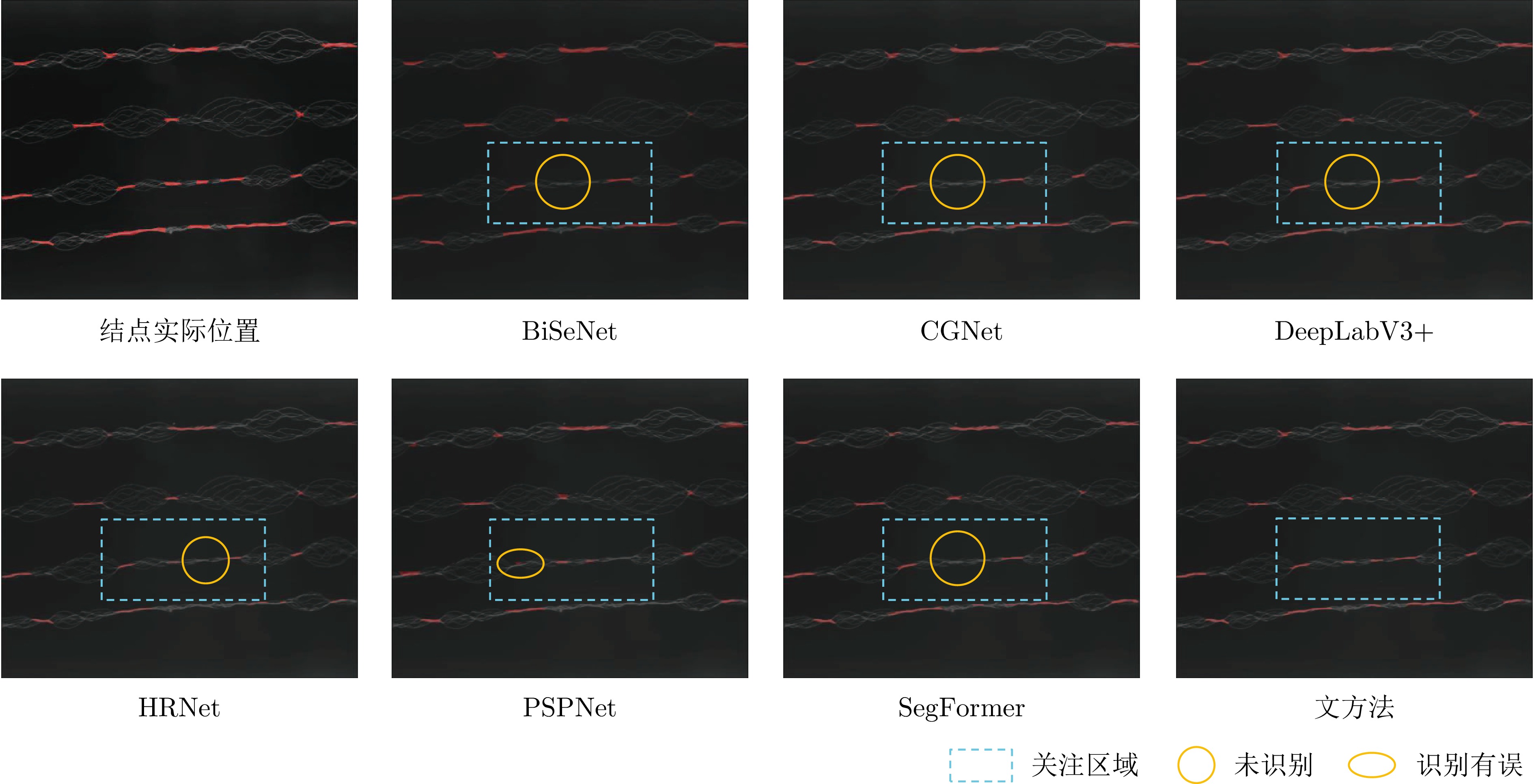

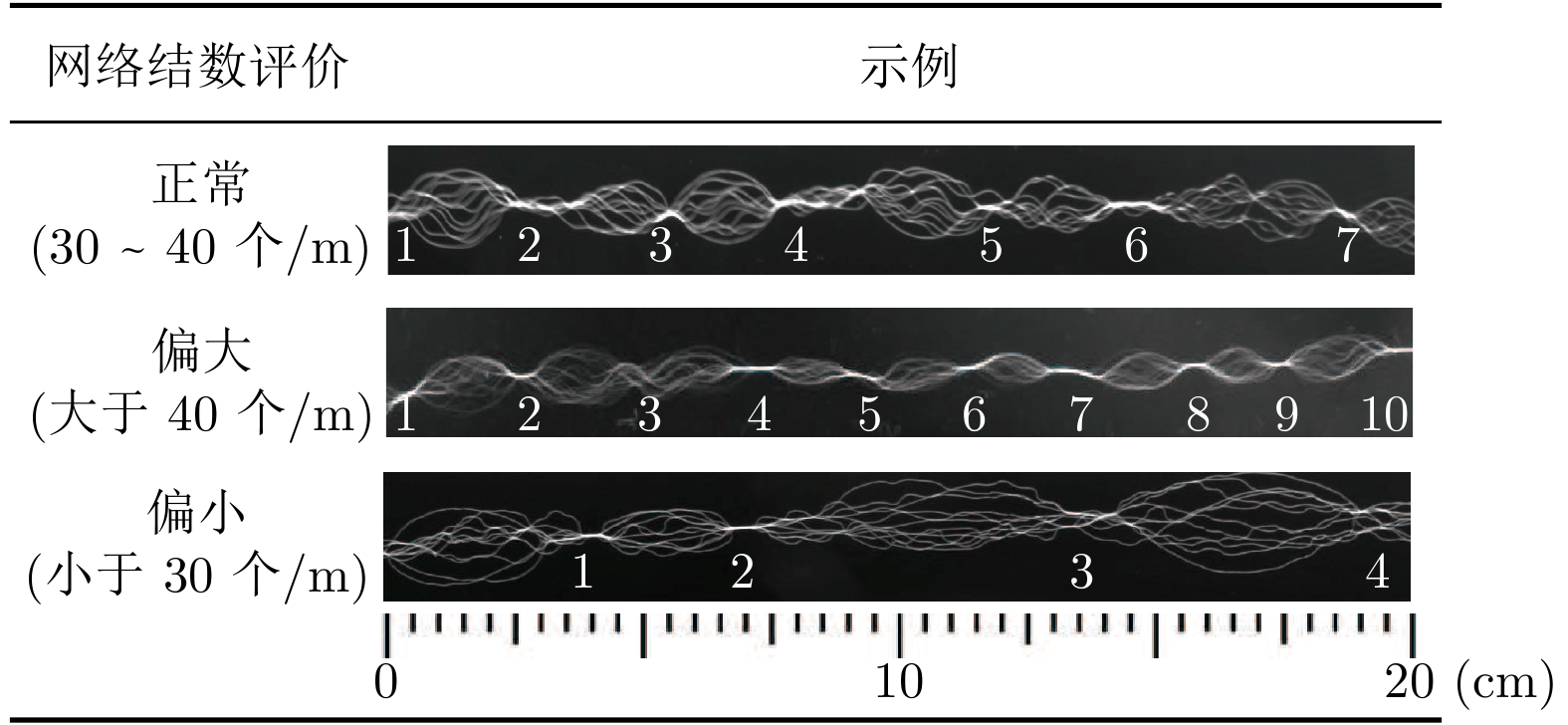

Abstract: The interlacing degree is an important indicator of the performance of filament yarns and the fabrics made from them, and in production workshops it is usually measured manually. To address the high false detection rate of manual inspection, a parallel detection method for the interlacing degree of filament yarn based on semantic information enhancement is proposed. First, to improve the recognition accuracy of interlacing nodes on a single filament yarn, a backbone network optimized from MobileNetV2 is used to extract semantic information, which also raises the computational speed of the model. On top of this backbone, a semantic information enhancement module and a multilevel feature dilation module are designed to process the backbone features, and a pixel-level attention mask is designed to weight and fuse the features, improving the accuracy of interlacing degree detection. Then, to compute the interlacing degree of multiple filament yarns in a batch, a parallel detection method is built on the proposed semantic information enhancement algorithm. The algorithm detects the interlacing nodes, while connected domain analysis and mask extraction run in parallel to extract the independent region of each filament yarn within the field of view. The parallel detection results are then fused to obtain the interlacing degree of each filament yarn accurately.
To validate the effectiveness of the proposed method, a dataset of synthetic-fiber filament yarns was built with a self-developed interlacing degree detection device, and experiments were conducted. The results show that the proposed method effectively improves detection accuracy.
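The fusion step described in the abstract (node detection combined with per-yarn regions from connected domain analysis) can be illustrated with a minimal sketch. It assumes both the yarn mask and the node mask are already available as binary grids; the function names `label_regions` and `nodes_per_yarn`, the 4-connectivity, and the rule of assigning a node to the first yarn region it overlaps are illustrative assumptions, not the authors' implementation.

```python
from collections import deque


def label_regions(mask):
    """Label 4-connected foreground regions of a binary grid.

    Returns (labels, count), where labels has the same shape as mask and
    holds 0 for background or a region id in 1..count.
    """
    height, width = len(mask), len(mask[0])
    labels = [[0] * width for _ in range(height)]
    count = 0
    for r in range(height):
        for c in range(width):
            if mask[r][c] and not labels[r][c]:
                count += 1
                labels[r][c] = count
                queue = deque([(r, c)])
                while queue:  # breadth-first flood fill of one region
                    y, x = queue.popleft()
                    for dy, dx in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                        ny, nx = y + dy, x + dx
                        if (0 <= ny < height and 0 <= nx < width
                                and mask[ny][nx] and not labels[ny][nx]):
                            labels[ny][nx] = count
                            queue.append((ny, nx))
    return labels, count


def nodes_per_yarn(yarn_mask, node_mask):
    """Fuse the two masks: count node regions that fall on each yarn region."""
    yarn_labels, n_yarns = label_regions(yarn_mask)
    node_labels, _ = label_regions(node_mask)
    counts = {yarn_id: 0 for yarn_id in range(1, n_yarns + 1)}
    assigned = set()  # node ids already attributed to a yarn
    for r, row in enumerate(node_labels):
        for c, node_id in enumerate(row):
            yarn_id = yarn_labels[r][c]
            if node_id and yarn_id and node_id not in assigned:
                assigned.add(node_id)
                counts[yarn_id] += 1
    return counts


# Toy field of view: two horizontal yarns, three detected nodes.
yarn_mask = [
    [1, 1, 1, 1, 1, 1],
    [0, 0, 0, 0, 0, 0],
    [1, 1, 1, 1, 1, 1],
]
node_mask = [
    [0, 1, 0, 0, 1, 0],
    [0, 0, 0, 0, 0, 0],
    [0, 0, 1, 1, 0, 0],
]
print(nodes_per_yarn(yarn_mask, node_mask))
```

Turning these per-yarn node counts into an interlacing degree would additionally require the physical yarn length covered by the field of view, which the text here does not specify.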


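Table 1 Architecture of the backbone network

| Feature size (pixels) | Expansion factor | Repeats | Output channels | Stride |
| --- | --- | --- | --- | --- |
| 512 × 512 × 3 | - | 1 | 32 | 2 |
| 256 × 256 × 32 | 1 | 2 | 32 | 1 |
| 128 × 128 × 32 | 6 | 4 | 64 | 2 |
| 64 × 64 × 64 | 6 | 2 | 96 | 2 |
| 32 × 32 × 96 | - | - | - | - |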
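To make the expansion factor in Table 1 concrete, the sketch below counts the convolution weights of one MobileNetV2-style inverted residual block (1×1 expansion, 3×3 depthwise, 1×1 projection). It assumes the standard MobileNetV2 block layout and ignores batch normalization and bias terms; the paper's optimized backbone may differ, and the helper name `inverted_residual_params` is hypothetical. The channel pairs are read from Table 1 (input channels from the feature size, output channels from the corresponding column), and only the first block of each stage is counted.

```python
def inverted_residual_params(in_ch, out_ch, expansion, kernel=3):
    """Weight count of one inverted residual block (no BN, no bias)."""
    hidden = in_ch * expansion  # width of the expanded feature map
    # MobileNetV2 skips the 1x1 expansion conv when the factor is 1.
    expand = in_ch * hidden if expansion != 1 else 0
    depthwise = kernel * kernel * hidden  # one k x k filter per channel
    project = hidden * out_ch  # 1x1 linear bottleneck
    return expand + depthwise + project


# (input channels, output channels, expansion factor) per Table 1 stage.
stages = [(32, 32, 1), (32, 64, 6), (64, 96, 6)]
for in_ch, out_ch, t in stages:
    total = inverted_residual_params(in_ch, out_ch, t)
    print(f"{in_ch:>3} -> {out_ch:<3} t={t}: {total} weights")
```

Most of the weights sit in the two pointwise convolutions, so they grow roughly linearly with the expansion factor.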

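Table 2 Configuration of model training environment

| Item | Version / parameter |
| --- | --- |
| Operating system | Ubuntu 18.04.6 LTS |
| CUDA | CUDA 11.3 |
| GPU | NVIDIA RTX 3090 |
| Training framework | PyTorch 1.10.2 |
| Memory | 128 GB |
| Programming language | Python 3.8 |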

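Table 3 Configuration of model training hyperparameters

| Parameter | Setting |
| --- | --- |
| Input image size | 512 × 512 pixels |
| Downsampling factor | 16 |
| Initial learning rate | $5 \times 10^{-3}$ |
| Minimum learning rate | $5 \times 10^{-5}$ |
| Optimizer | Adam |
| Weight decay | $5 \times 10^{-4}$ |
| Batch size | 12 |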
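Table 3 gives an initial and a minimum learning rate but does not name the decay schedule. As one plausible reading, the sketch below assumes cosine annealing between the two values; the schedule type, the function name `cosine_lr`, and the 100-epoch horizon are all assumptions made for illustration.

```python
import math

LR_INITIAL = 5e-3  # initial learning rate from Table 3
LR_MINIMUM = 5e-5  # minimum learning rate from Table 3


def cosine_lr(epoch, total_epochs, lr_max=LR_INITIAL, lr_min=LR_MINIMUM):
    """Cosine-annealed learning rate (assumed schedule, not from the paper)."""
    progress = min(max(epoch / total_epochs, 0.0), 1.0)
    return lr_min + 0.5 * (lr_max - lr_min) * (1.0 + math.cos(math.pi * progress))


# Hypothetical 100-epoch run: print the rate at a few checkpoints.
for epoch in (0, 25, 50, 75, 100):
    print(epoch, f"{cosine_lr(epoch, 100):.6f}")
```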

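Table 4 Comparison of evaluation indicators for different methods

| Method | MIoU (%) | $F_{1}$ score (%) | FPS (frames/s) | Parameters (MB) |
| --- | --- | --- | --- | --- |
| BiSeNet | 78.95 | 86.76 | 63.84 | 48.93 |
| CGNet | 79.00 | 86.79 | 33.17 | **2.08** |
| DeepLabV3+ | 79.50 | 87.35 | 43.11 | 209.70 |
| HRNet | 78.74 | 86.69 | 12.43 | 37.53 |
| PSPNet | 73.58 | 82.46 | 49.80 | 178.51 |
| SegFormer | 79.04 | 86.84 | 40.87 | 14.34 |
| UNet | 79.83 | 87.63 | 22.54 | 94.07 |
| Proposed method | **81.52** | **88.12** | **76.16** | 7.98 |

Note: Bold indicates the best result in each column.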
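For readers unfamiliar with the two accuracy metrics in Table 4, the sketch below computes mean intersection over union (MIoU) and the $F_{1}$ score from a predicted and a ground-truth label map. It uses the standard definitions for a two-class (background / interlacing node) segmentation; whether the paper averages $F_{1}$ over classes or reports it for the foreground only is not stated, so the foreground-only choice here is an assumption, as are the function names.

```python
def confusion_counts(pred, truth, cls):
    """True positives, false positives and false negatives for one class."""
    tp = fp = fn = 0
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            if p == cls and t == cls:
                tp += 1
            elif p == cls:
                fp += 1
            elif t == cls:
                fn += 1
    return tp, fp, fn


def mean_iou(pred, truth, classes=(0, 1)):
    """Average of per-class IoU = TP / (TP + FP + FN)."""
    ious = []
    for cls in classes:
        tp, fp, fn = confusion_counts(pred, truth, cls)
        union = tp + fp + fn
        if union:  # skip classes absent from both maps
            ious.append(tp / union)
    return sum(ious) / len(ious) if ious else 0.0


def f1_score(pred, truth, cls=1):
    """F1 = 2TP / (2TP + FP + FN) for the chosen class."""
    tp, fp, fn = confusion_counts(pred, truth, cls)
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


# Toy 2 x 4 label maps: 1 marks an interlacing-node pixel.
truth = [[0, 0, 1, 1],
         [0, 0, 1, 1]]
pred = [[0, 1, 1, 1],
        [0, 0, 1, 0]]
print(f"MIoU = {mean_iou(pred, truth):.2f}, F1 = {f1_score(pred, truth):.2f}")
```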

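Table 5 Results of the module validity verification experiments

| Scheme | Semantic information enhancement module | Multilevel feature dilation module | Staged feature fusion module | MIoU (%) | FPS (frames/s) |
| --- | --- | --- | --- | --- | --- |
| 1 | $\times$ | $\times$ | $\times$ | 77.18 | 72.75 |
| 2 | $\surd$ | $\times$ | $\times$ | 79.91 | 72.15 |
| 3 | $\times$ | $\surd$ | $\times$ | 79.85 | 66.32 |
| 4 | $\times$ | $\times$ | $\surd$ | 79.33 | 68.31 |
| 5 | $\times$ | $\surd$ | $\surd$ | 80.71 | 55.30 |
| 6 | $\surd$ | $\times$ | $\surd$ | 81.15 | 61.48 |
| 7 | $\surd$ | $\surd$ | $\times$ | 80.25 | 78.16 |
| 8 | $\surd$ | $\surd$ | $\surd$ | 81.52 | 76.16 |

Note: $\surd$ indicates that the module is used; $\times$ indicates that it is not.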

Table 6 Comparison of extraction efficiency of different backbone networks

| Scheme | Backbone network | MIoU (%) | FPS (frames/s) |
| --- | --- | --- | --- |
| 1 | FCN | 79.65 | 33.45 |
| 2 | MobileNetV2 | 80.25 | 43.11 |
| 3 | Xception | 79.61 | 27.45 |
| 4 | VGGNet | 77.45 | 30.12 |
| 5 | ResNet18 | 77.52 | 45.21 |
| 6 | ResNet50 | 78.01 | 47.06 |
| 7 | Proposed method | 81.52 | 76.16 |

Table 7 Comparison of results for different semantic information extraction methods

| Scheme | Attention mechanism | MIoU (%) |
| --- | --- | --- |
| 1 | SA | 80.36 |
| 2 | SE | 80.44 |
| 3 | CBAM | 80.83 |
| 4 | ECA | 79.89 |
| 5 | Proposed method | 81.52 |

Table 8 Comparison of results for different dilated convolution extraction methods

| Scheme | $x_{3}$ | $x_{4}$ | MIoU (%) |
| --- | --- | --- | --- |
| 1 | $\times$ | $\times$ | 80.56 |
| 2 | $\surd$ | $\times$ | 81.02 |
| 3 | $\times$ | $\surd$ | 81.13 |
| 4 | $\surd$ | $\surd$ | 81.52 |

Note: $\surd$ indicates that the module is used; $\times$ indicates that it is not.

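Table 9 Experimental comparison of staged feature fusion methods

| Scheme | Global average pooling | Pointwise convolution | Combination | MIoU (%) |
| --- | --- | --- | --- | --- |
| 1 | $\surd$ | $\times$ | None | 80.91 |
| 2 | $\times$ | $\surd$ | None | 80.65 |
| 3 | $\surd$ | $\surd$ | Serial | 78.91 |
| 4 | $\surd$ | $\surd$ | Parallel | 81.52 |

Note: $\surd$ indicates that the module is used; $\times$ indicates that it is not.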

