-

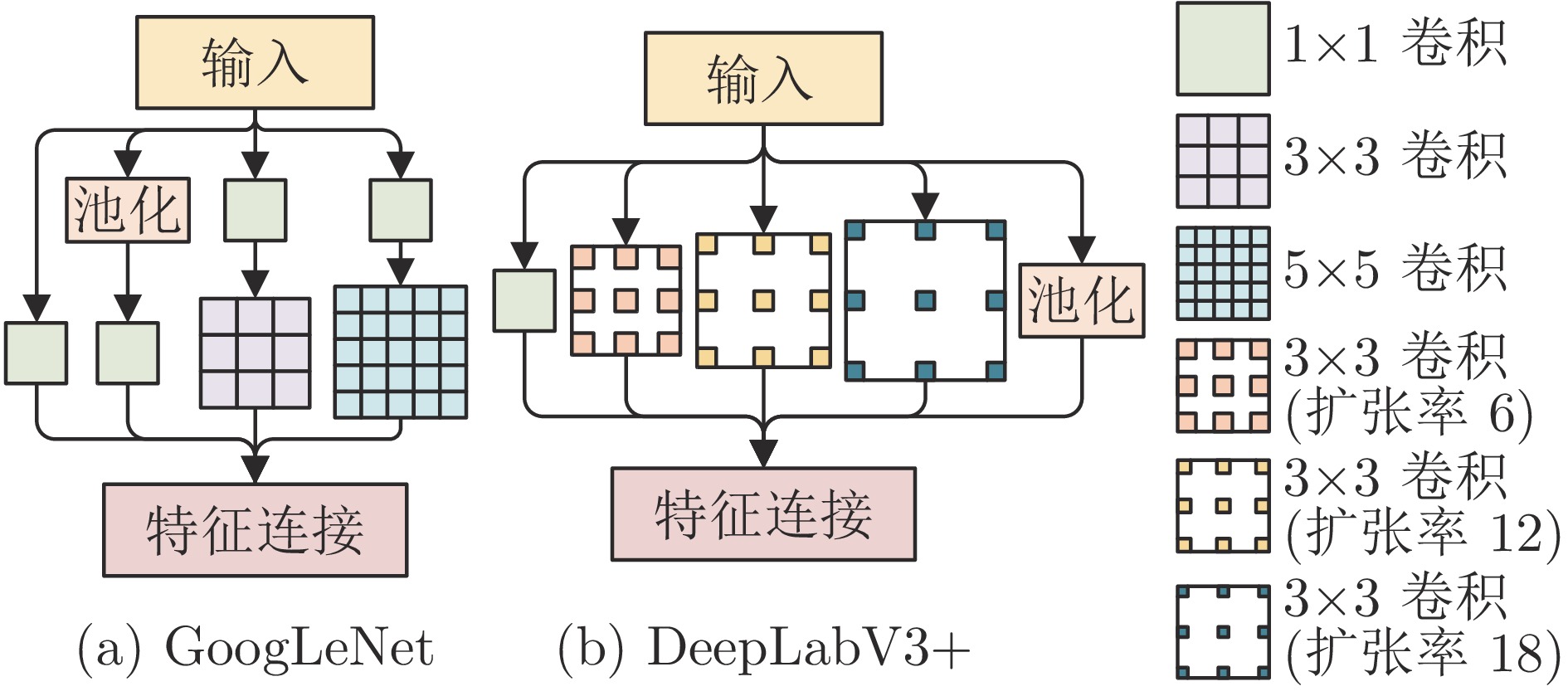

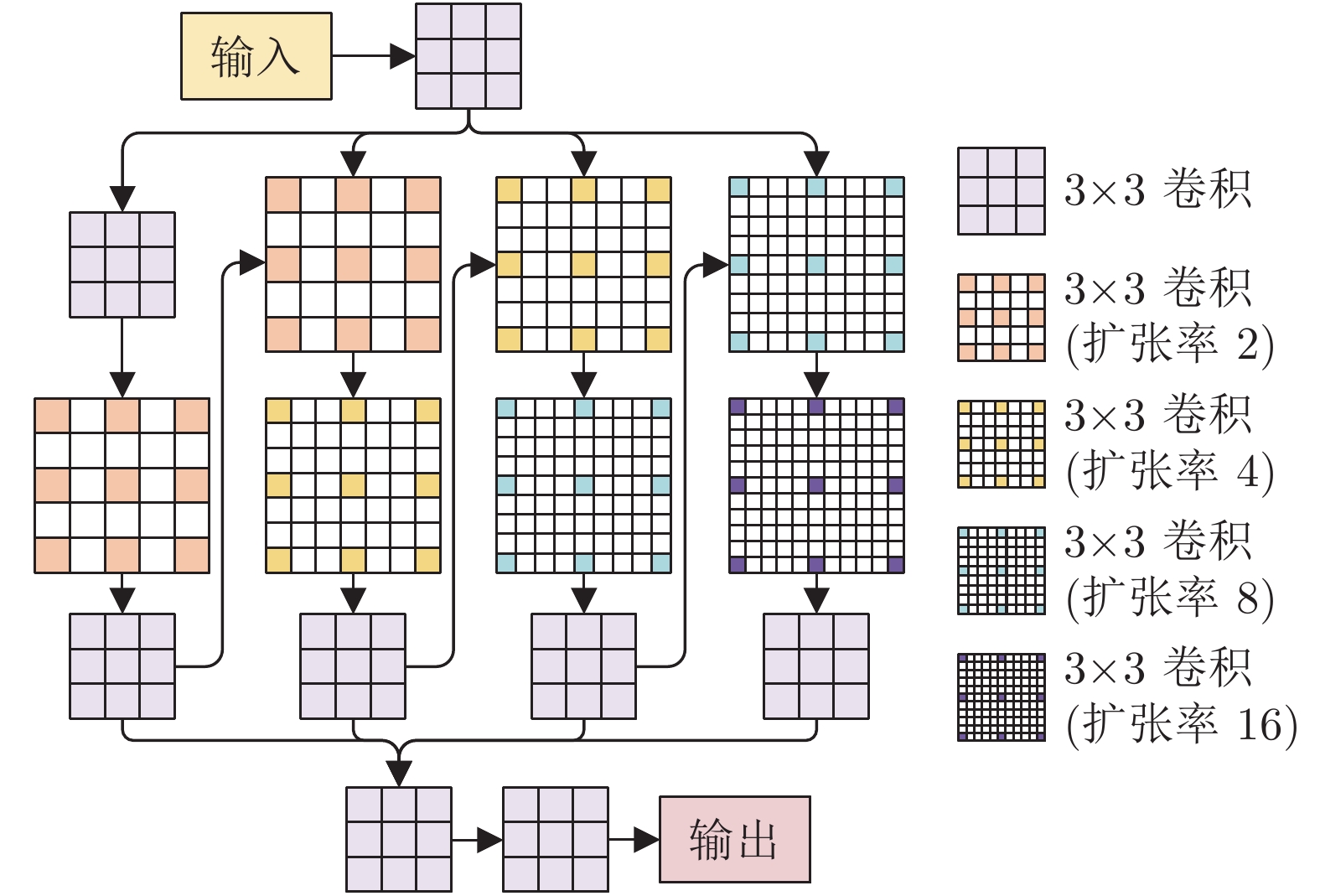

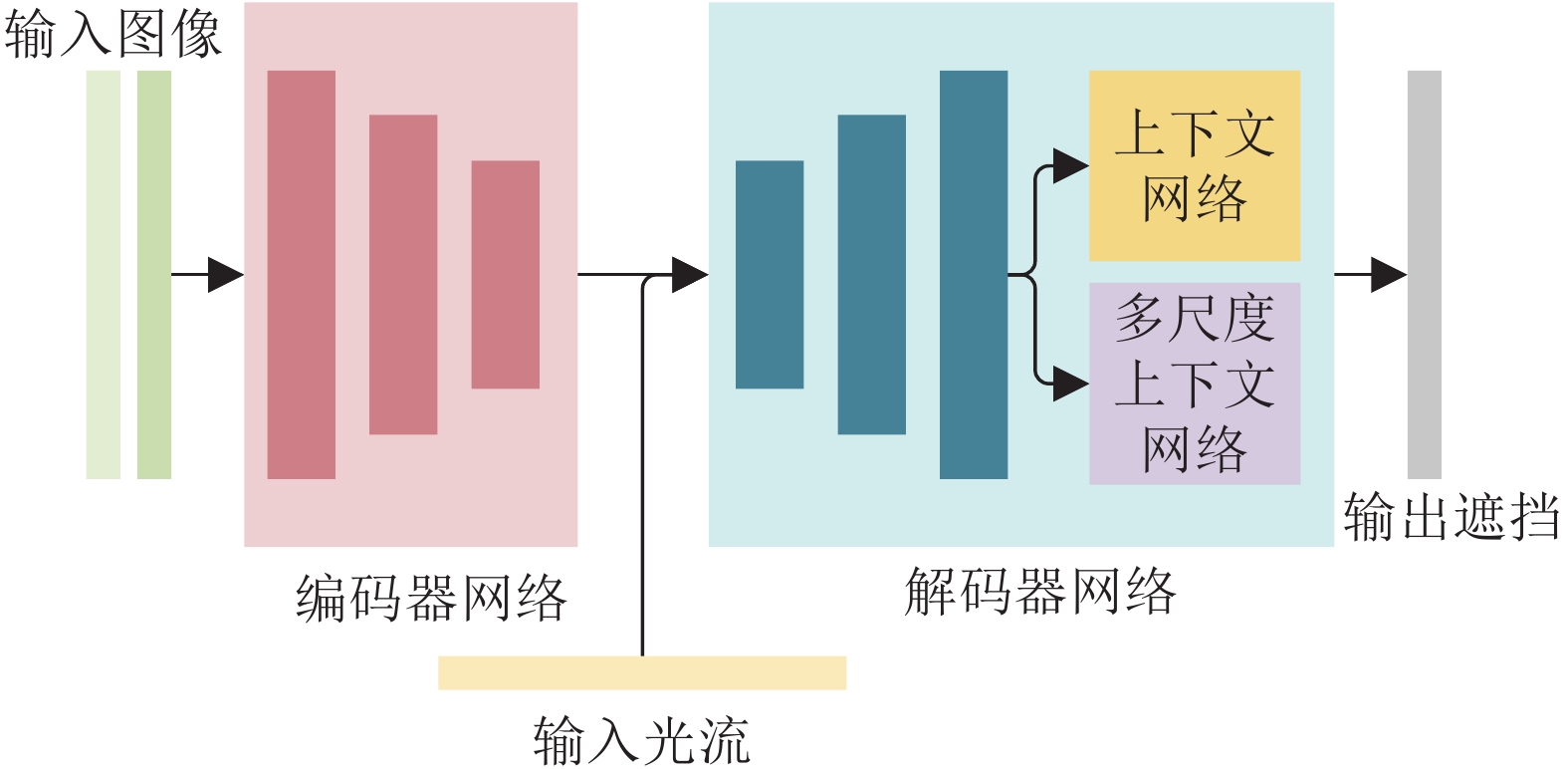

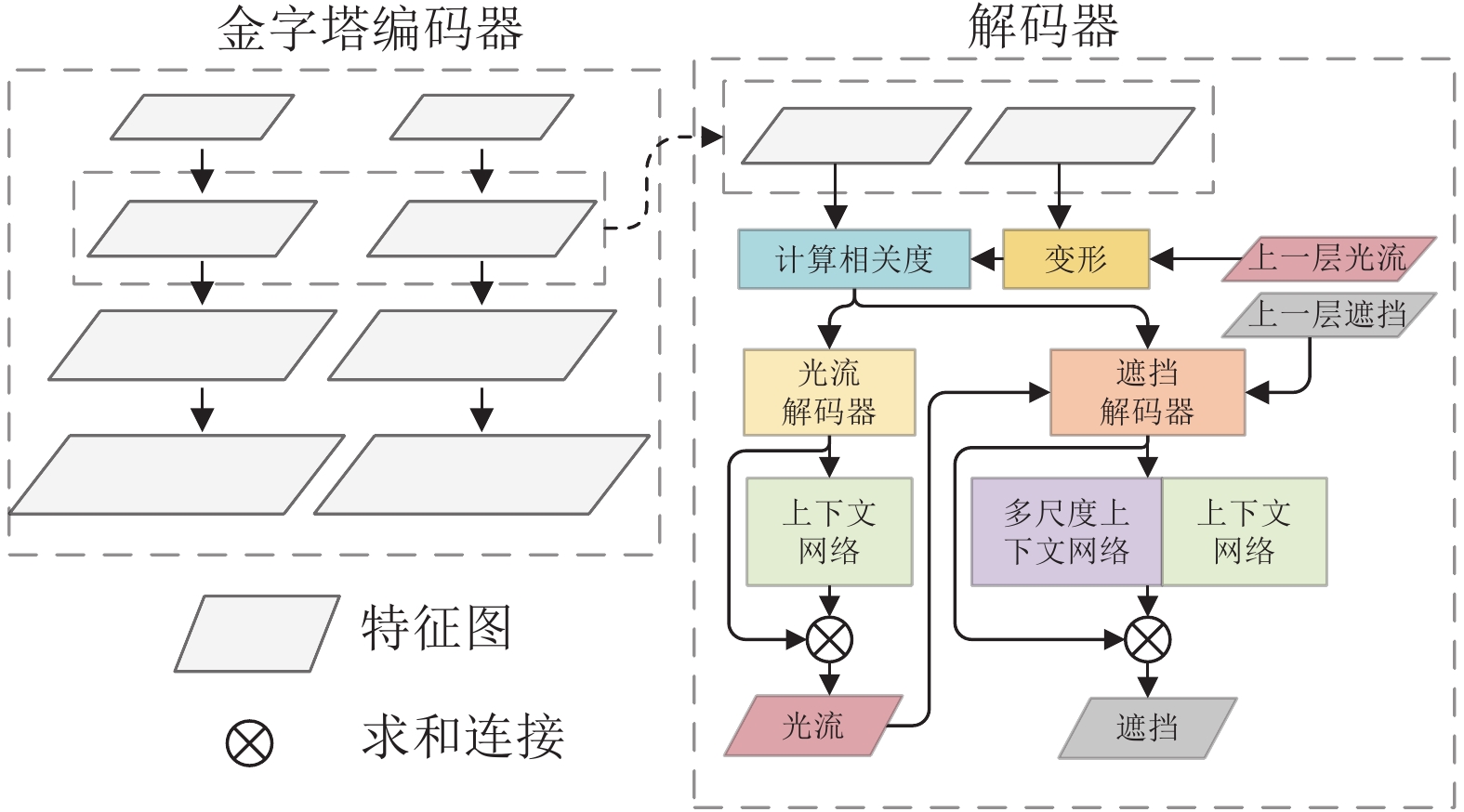

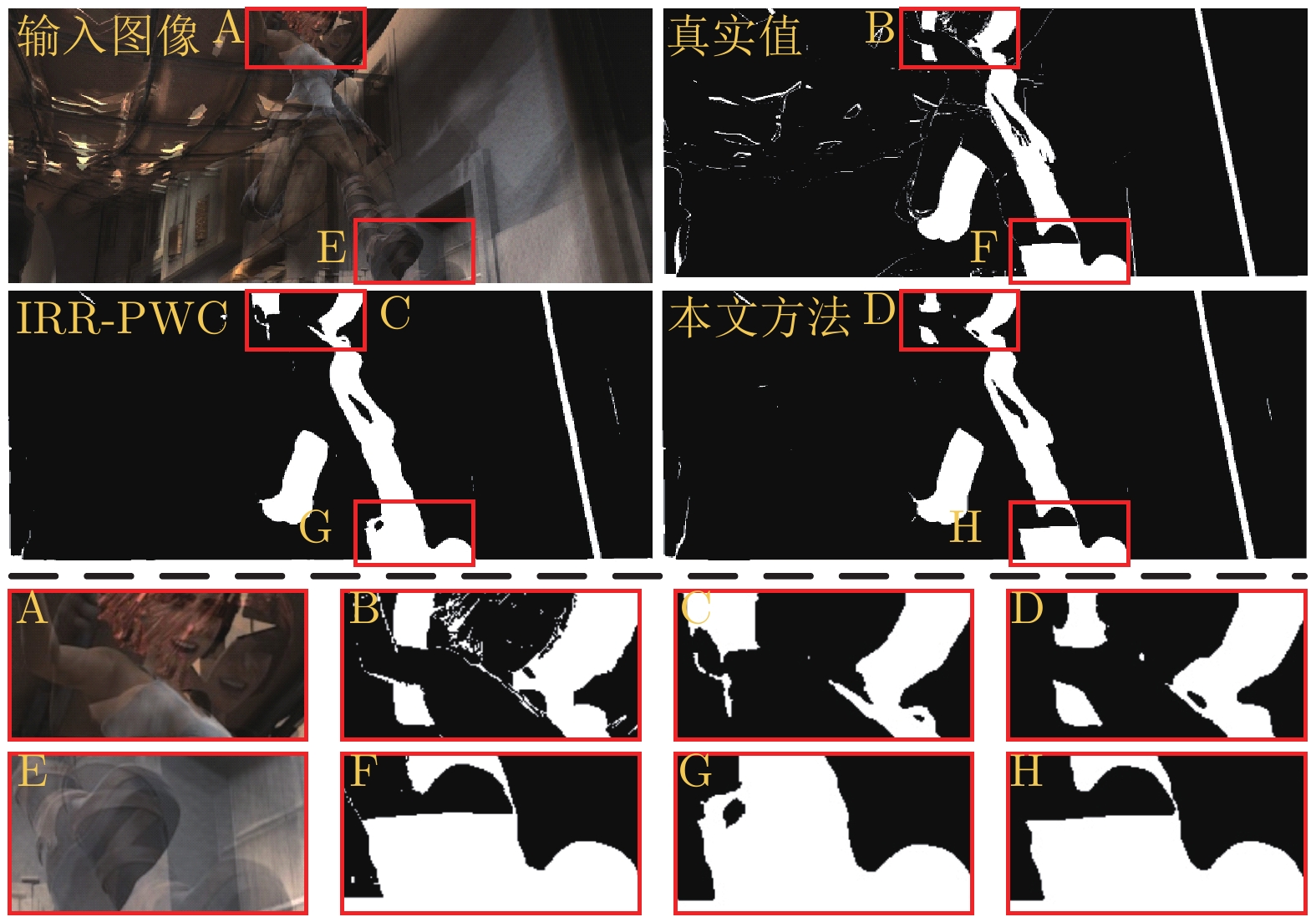

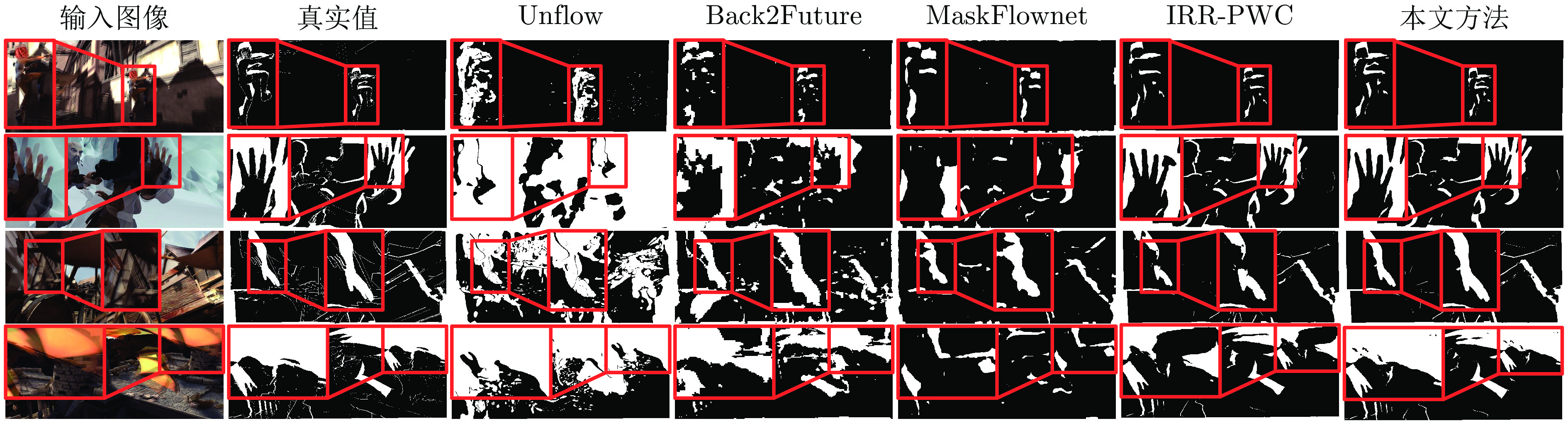

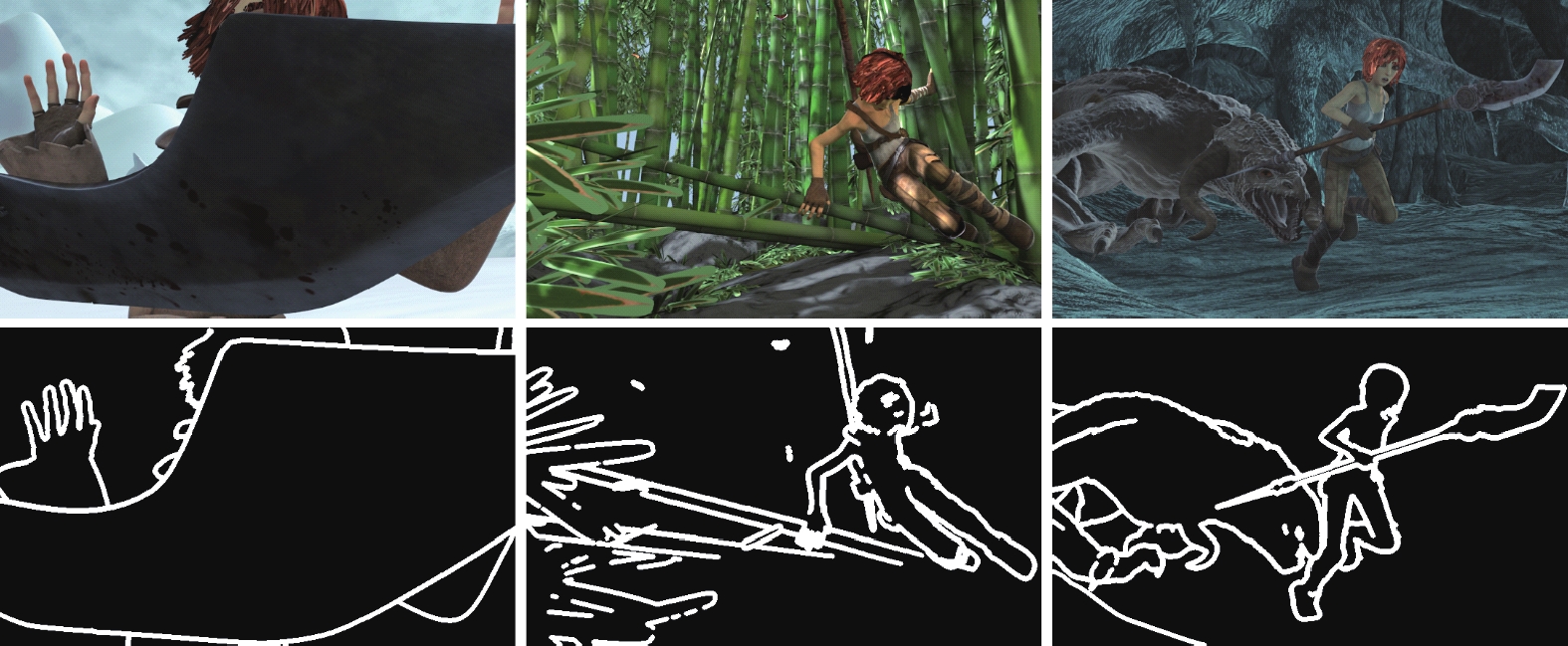

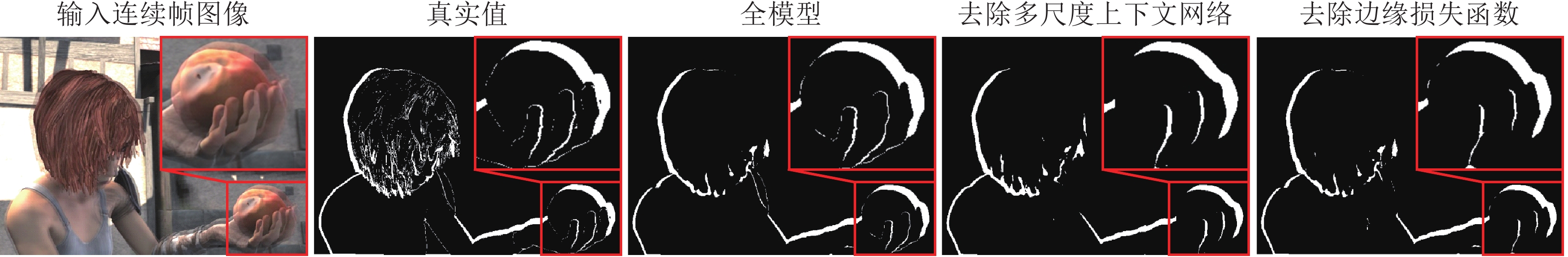

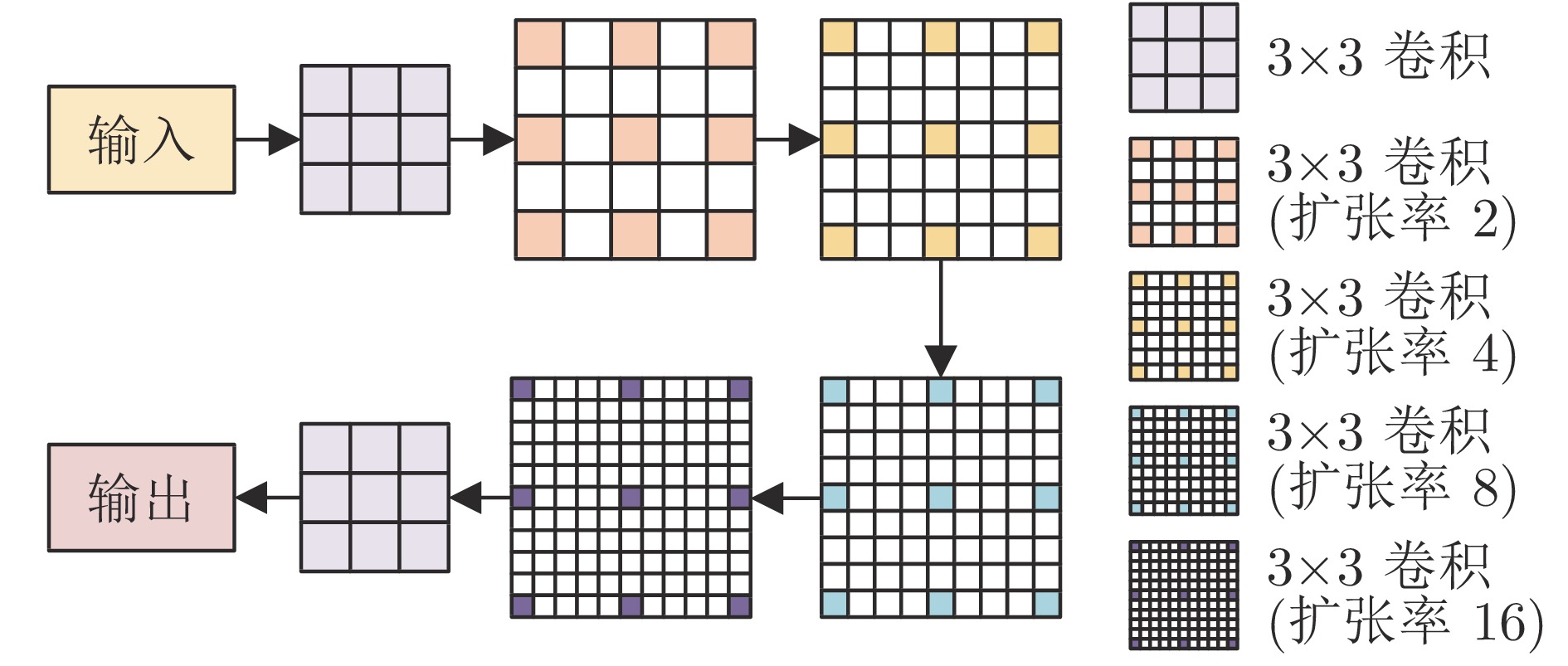

摘要: 针对非刚性运动和大位移场景下运动遮挡检测的准确性与鲁棒性问题, 提出一种基于光流与多尺度上下文的图像序列运动遮挡检测方法. 首先, 设计基于扩张卷积的多尺度上下文信息聚合网络, 通过图像序列多尺度上下文信息获取更大范围的图像特征; 然后, 采用特征金字塔构建基于多尺度上下文与光流的端到端运动遮挡检测网络模型, 利用光流优化非刚性运动和大位移区域的运动检测遮挡信息; 最后, 构造基于运动边缘的网络模型训练损失函数, 获取准确的运动遮挡边界. 分别采用MPI-Sintel和KITTI测试数据集对所提方法与现有的代表性方法进行实验对比与分析. 实验结果表明, 所提方法能够有效提高运动遮挡检测的准确性和鲁棒性, 尤其在非刚性运动和大位移等困难场景下具有更好的遮挡检测鲁棒性.Abstract: In order to improve the accuracy and robustness of occlusion detection under non-rigid motion and large displacements, we propose an occlusion detection method of image sequence motion based on optical flow and multiscale context. First, we design a multiscale context information aggregation network based on dilated convolution which obtains a wider range of image features through multiscale context information of image sequence. Then, we construct an end-to-end motion occlusion detection network model based on multiscale context and optical flow using feature pyramid, utilize the optical flow to optimize the performance of occlusion detection in areas of non-rigid motion and large displacements region. Finally, we present a novel motion edge training loss function to obtain the accurate motion occlusion boundary. We compare and analysis our method with the existing representative approaches by using the MPI-Sintel datasets and KITTI datasets, respectively. The experimental results show that the proposed method can effectively improve the accuracy and robustness of motion occlusion detection, especially gains the better occlusion detection robustness under non-rigid motion and large displacements.

-

Key words:

- Image sequence /

- occlusion detection /

- deep learning /

- multiscale context /

- non-rigid motion

-

表 1 MPI-Sintel数据集平均F1分数对比结果

Table 1 Comparison of average F1 score on MPI-Sintel dataset

表 2 MPI-Sintel数据集平均漏检率与误检率对比结果(%)

Table 2 Comparison of average omission rate and false rate on MPI-Sintel dataset (%)

表 3 非刚性运动与大位移图像序列运动遮挡检测平均F1分数对比结果

Table 3 Comparison of average F1 scores of motion occlusion detection between non-rigid motion and large displacement image sequences

对比方法 clean final alley_2 ambush_2 market_6 temple_2 alley_2 ambush_2 market_6 temple_2 Unflow[24] 0.414 9 0.431 3 0.433 0 0.324 3 0.405 7 0.392 0 0.449 9 0.312 0 Back2Future[25] 0.681 6 0.588 8 0.629 0 0.271 2 0.675 6 0.519 9 0.623 9 0.268 3 MaskFlownet[27] 0.505 7 0.540 3 0.466 0 0.383 8 0.503 9 0.408 5 0.473 5 0.350 8 IRR-PWC[26] 0.870 9 0.917 2 0.815 5 0.740 4 0.877 0 0.780 9 0.802 3 0.690 5 本文方法 0.881 1 0.921 6 0.830 4 0.774 7 0.876 4 0.795 9 0.810 6 0.710 3 注: 加粗字体表示各列最优结果. 表 4 不同方法的时间消耗对比

Table 4 Comparison of time consumption of different methods

表 5 MPI-Sintel全图像序列平均F1分数对比

Table 5 Comparison of average F1 scores of whole image sequence on MPI-Sintel

模型类型 MPI-Sintel 训练数据集 clean final 运行时间(s) 训练时间(d) 全模型 0.75 0.72 0.19 13 去除多尺度上下文网络 0.72 0.68 0.18 12 去除边缘损失函数 0.74 0.71 0.19 13 注: 加粗字体表示评价最优值. 表 6 MPI-Sintel全图像序列在不同运动边界区域内的平均F1分数对比

Table 6 Comparison of average F1 scores of whole image sequence in different motion boundary regions on MPI-Sintel

模型类型 MPI-Sintel 训练数据集 clean final $N=1 $ $N=3 $ $N=5 $ $N=10 $ $N=1 $ $N=3 $ $N=5 $ $N=10 $ 全模型 0.63 0.67 0.69 0.71 0.59 0.62 0.64 0.67 去除多尺度上下文网络 0.59 0.62 0.65 0.67 0.55 0.59 0.61 0.63 去除边缘损失函数 0.60 0.64 0.67 0.69 0.56 0.60 0.62 0.64 注: 加粗字体表示评价最优值. -

[1] 张世辉, 何琦, 董利健, 杜雪哲. 基于遮挡区域建模和目标运动估计的动态遮挡规避方法. 自动化学报, 2019, 45(4): 771−786Zhang Shi-Hui, He Qi, Dong Li-Jian, Du Xue-Zhe. Dynamic occlusion avoidance approach by means of occlusion region model and object motion estimation. Acta Automatica Sinica, 2019, 45(4): 771−786 [2] Yu C, Bo Y, Bo W, Yan W D, Robby T. Occlusion-aware networks for 3D human pose estimation in video. In: Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV). Seoul, South Korea: IEEE, 2019. 723−732 [3] 张聪炫, 陈震, 熊帆, 黎明, 葛利跃, 陈昊. 非刚性稠密匹配大位移运动光流估计. 电子学报, 2019, 47(6): 1316−1323 doi: 10.3969/j.issn.0372-2112.2019.06.019Zhang Cong-Xuan, Chen Zhen, Xiong Fan, Li Ming, Ge Li-Yue, Chen Hao. Large displacement motion optical flow estimation with non-rigid dense patch matching. Acta Electronica Sinica, 2019, 47(6): 1316−1323 doi: 10.3969/j.issn.0372-2112.2019.06.019 [4] 姚乃明, 郭清沛, 乔逢春, 陈辉, 王宏安. 基于生成式对抗网络的鲁棒人脸表情识别. 自动化学报, 2018, 44(5): 865−877Yao Nai-Ming, Guo Qing-Pei, Qiao Feng-Chun, Chen Hui, Wang Hong-An. Robust facial expression recognition with generative adversarial networks. Acta Automatica Sinica, 2018, 44(5): 865−877 [5] Pan J Y, Bo H. Robust occlusion handling in object tracking. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR). Minneapolis, USA: IEEE, 2007. 1−8 [6] 刘鑫, 许华荣, 胡占义. 基于 GPU 和 Kinect 的快速物体重建. 自动化学报, 2012, 38(8): 1288−1297Liu Xin, Xu Hua-Rong, Hu Zhan-Yi. GPU based fast 3D-object modeling with Kinect. Acta Automatica Sinica, 2012, 38(8): 1288−1297 [7] 张聪炫, 陈震, 黎明. 单目图像序列光流三维重建技术研究综述. 电子学报, 2016, 44(12): 3044−3052 doi: 10.3969/j.issn.0372-2112.2016.12.033Zhang Cong-Xuan, Chen Zhen, Li Ming. Review of the 3D reconstruction technology based on optical flow of monocular image sequence. Acta Electronica Sinica, 2016, 44(12): 3044−3052 doi: 10.3969/j.issn.0372-2112.2016.12.033 [8] Bailer C, Taetz B, Stricker D. Flow fields: Dense correspondence fields for highly accurate large displacement optical flow estimation. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2019, 41(8): 1879−1892 doi: 10.1109/TPAMI.2018.2859970 [9] Wolf L, Gadot D. PatchBatch: A batch augmented loss for optical flow. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR). Las Vegas, USA: IEEE, 2016. 4236−4245 [10] Li Y S, Song R, Hu Y L. Efficient coarse-to-fine patch match for large displacement optical flow. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR). Las Vegas, USA: IEEE, 2016. 5704−5712 [11] Menze M, Heipke C, Geiger A. Discrete optimization for optical flow. In: Proceedings of the German Conference on Pattern Recognition (GCPR). Aachen, Germany: Springer Press, 2015. 16−28 [12] Chen Q F, Koltun V. Full flow: Optical flow estimation by global optimization over regular grids. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR). Las Vegas, USA: IEEE, 2016. 4706−4714 [13] Guney F, Geiger A. Deep discrete flow. In: Proceedings of the Asian Conference on Computer Vision (ACCV). Taipei, China: Springer Press, 2016. 207−224 [14] Hur J, Roth S. Joint optical flow and temporally consistent semantic segmentation. In: Proceedings of the European Conference on Computer Vision (ECCV). Amsterdam, The Netherlands: Springer, 2016. 163−177 [15] Ince S, Konrad J. Occlusion-aware optical flow estimation. IEEE Transactions on Image Process, 2008, 17(8): 1443−1451 doi: 10.1109/TIP.2008.925381 [16] Sun D Q, Liu C, Pfister H. Local layering for joint motion estimation and occlusion detection. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR). Columbus, USA: IEEE, 2014. 1098−1105 [17] Sun D Q, Sudderth E B, Black M J. Layered image motion with explicit occlusions, temporal consistency, and depth ordering. In: Proceedings of the 24th International Conference on Neural Information Processing Systems (NIPS). Vancouver, Canada: Curran Associates Inc., 2010. 2226−2234 [18] Vogel C, Roth S, Schindler K. View-consistent 3D scene flow estimation over multiple frames. In: Proceedings of the European Conference on Computer Vision (ECCV). Zurich, Switzerland: Springer Press, 2014. 263−278 [19] Zanfir A, Sminchisescu C. Large displacement 3D scene flow with occlusion reasoning. In: Proceedings of the IEEE International Conference on Computer Vision (ICCV). Santiago, Chile: IEEE, 2015. 4417−4425 [20] Zhang C X, Chen Z, Wang M R, Li M, Jiang S F. Robust non-local TV-L1 optical flow estimation with occlusion detection. IEEE Transactions on Image Process, 2017, 26(8): 4055−4067 doi: 10.1109/TIP.2017.2712279 [21] 张聪炫, 陈震, 汪明润, 黎明, 江少锋. 基于光流与Delaunay三角网格的图像序列运动遮挡检测. 电子学报, 2018, 46(2): 479−485 doi: 10.3969/j.issn.0372-2112.2018.02.030Zhang Cong-Xuan, Chen Zhen, Wang Ming-Run, Li Ming, Jiang Shao-Feng. Motion occlusion detecting from image sequence based on optical flow and Delaunay triangulation. Acta Electronica Sinica, 2018, 46(2): 479−485 doi: 10.3969/j.issn.0372-2112.2018.02.030 [22] Kennedy R, Taylor C J. Optical flow with geometric occlusion estimation and fusion of multiple frames. In: Proceedings of International Workshop on Energy Minimization Methods in Computer Vision and Pattern Recognition (EMMCVPR). Hong Kong, China: IEEE, 2015. 364−377 [23] Yu J J, Harley A W, Derpanis K G. Back to basics: Unsupervised learning of optical flow via brightness constancy and motion smoothness. In: Proceedings of the European Conference on Computer Vision (ECCV). Amsterdam, The Netherlands: Springer, 2016. 3−10 [24] Meister S, Hur J, Roth S. UnFlow: Unsupervised learning of optical flow with a bidirectional census loss. In: Proceedings of the 31st AAAI Conference on Artificial Intelligence (AAAI). San Francisco, USA: AAAI, 2017. 7251−7259 [25] Janai J, Güney F, Ranjan A, Black M, Geiger A. Unsupervised learning of multi-frame optical flow with occlusions. In: Proceedings of the European Conference on Computer Vision (ECCV). Munich, Germany: Springer, 2018. 713−731 [26] Hur J, Roth S. Iterative residual refinement for joint optical flow and occlusion estimation. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR). Long Beach, USA: IEEE, 2019. 5747−5756 [27] Zhao S Y, Sheng Y L, Dong Y, Chang E I C, Xu Y. MaskFlownet: Asymmetric feature matching with learnable occlusion mask. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR). Virtual Event: IEEE, 2020. 6277−6286 [28] Yang M K, Yu K, Zhang C, Li Z W, Yang K Y. DenseASPP for semantic segmentation in street scenes. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR). Salt Lake City, USA: IEEE, 2018. 3684−3692 [29] Mehta S, Rastegari M, Caspi A, Shapiro L, Hajishirzi H. ESPNet: Efficient spatial pyramid of dilated convolutions for semantic segmentation. In: Proceedings of the European Conference on Computer Vision (ECCV). Munich, Germany: Springer, 2018. 561−580 [30] Szegedy C, Liu W, Jia Y Q, Sermanet P, Reed S, Anguelov D, et al. Going deeper with convolutions. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR). Boston, USA: IEEE, 2015. 1−9 [31] Chen L C, Zhu Y K, Papandreou G, Schroff F, Adam H. Encoder-decoder with atrous separable convolution for semantic image segmentation. In: Proceedings of the European Conference on Computer Vision (ECCV). Munich, Germany: Springer, 2018. 833−851 [32] Yu F, Koltun V. Multi-scale context aggregation by dilated convolutions [Online], available: https://arxiv.org/abs/1511.07122, Apr 30, 2016 [33] Butler D J, Wulff J, Stanley G B, Black M J. A naturalistic open source movie for optical flow evaluation. In: Proceedings of the European Conference on Computer Vision (ECCV). Florence, Italy: Springer, 2012. 611−625 [34] Menze M, Geiger A. Object scene flow for autonomous vehicles. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR). Boston, USA: IEEE, 2015. 2061−3070 -

下载:

下载: