Motif-augmented Contrastive Learning-based Defense Against Backdoor Attack on Graph Neural Networks

-

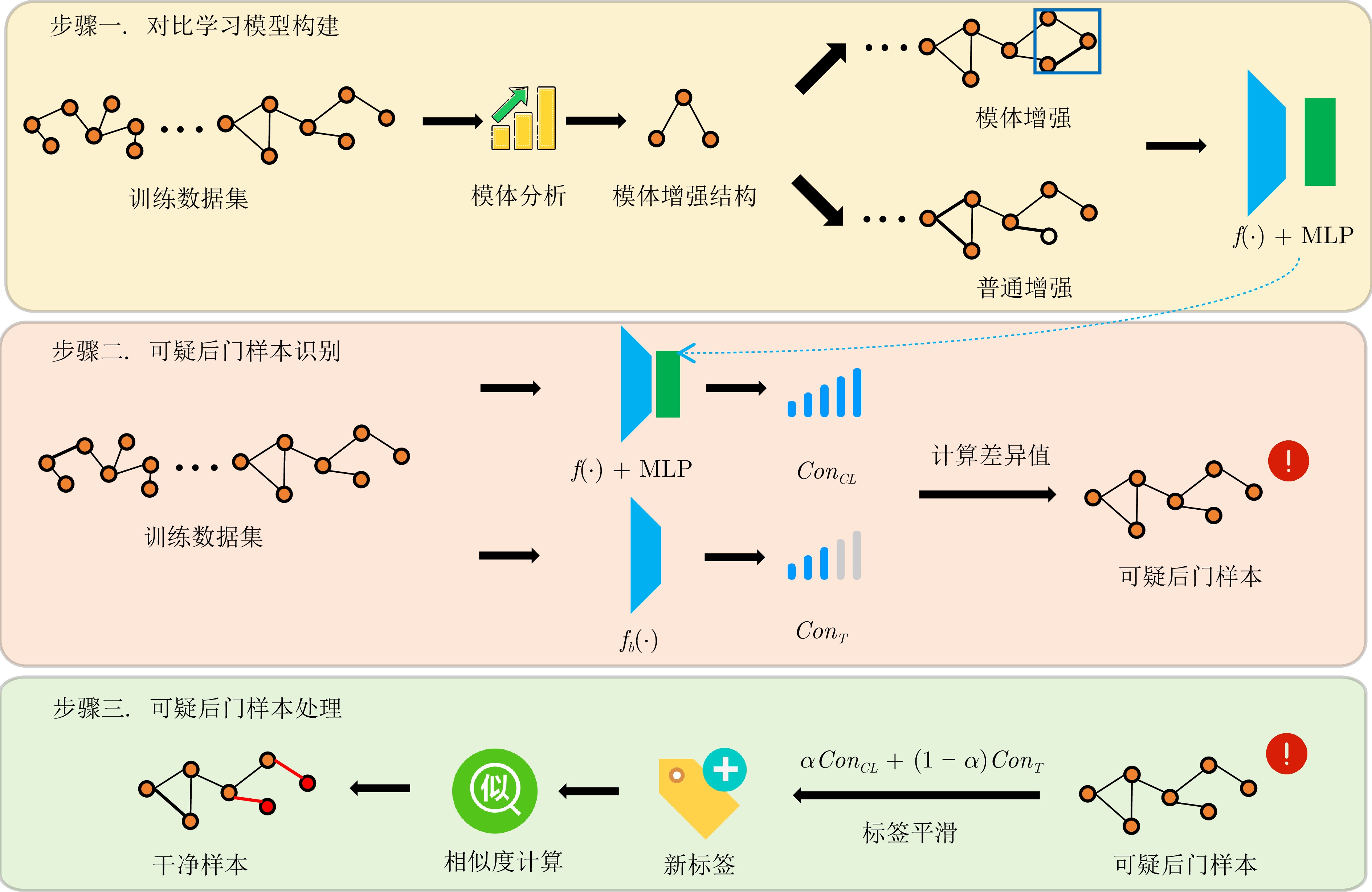

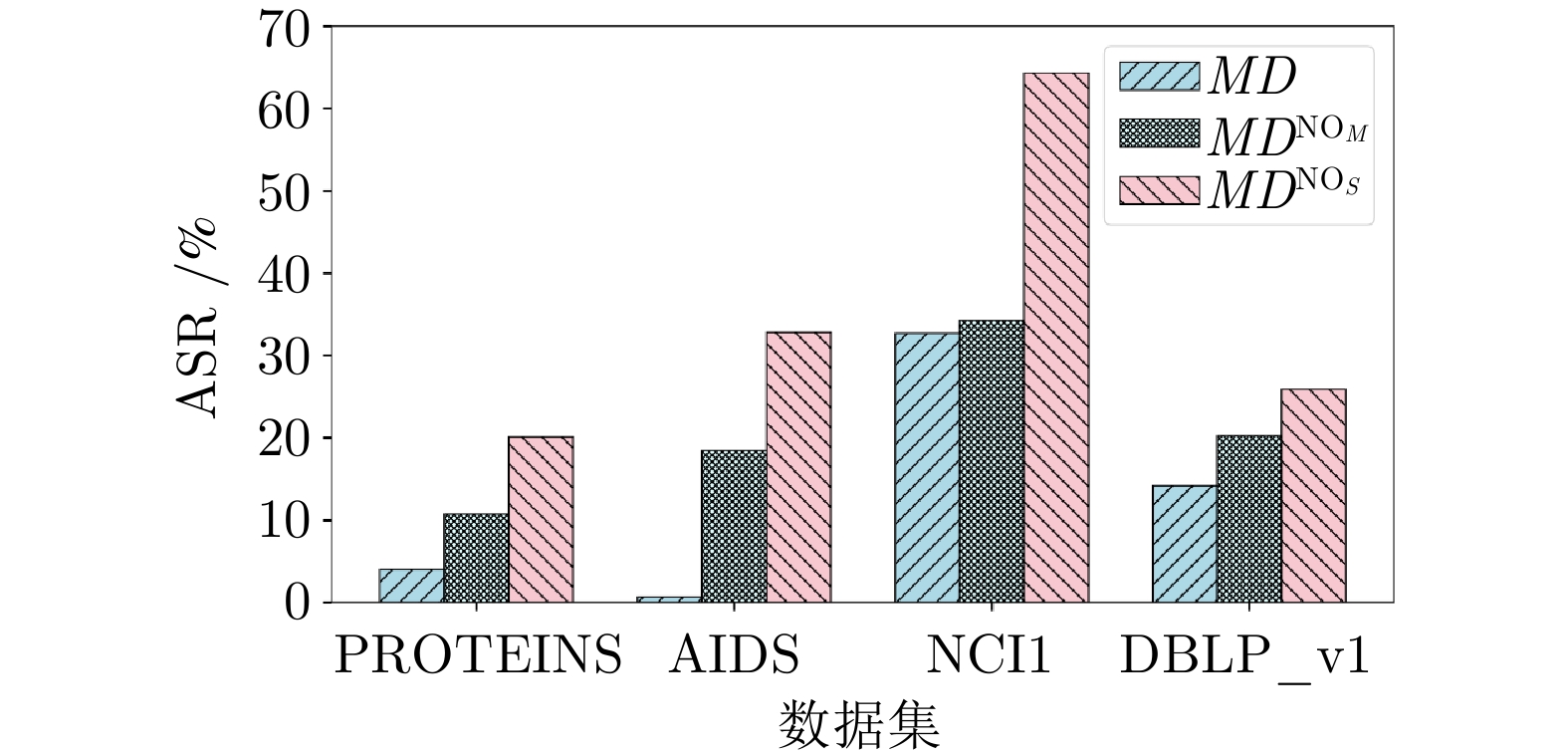

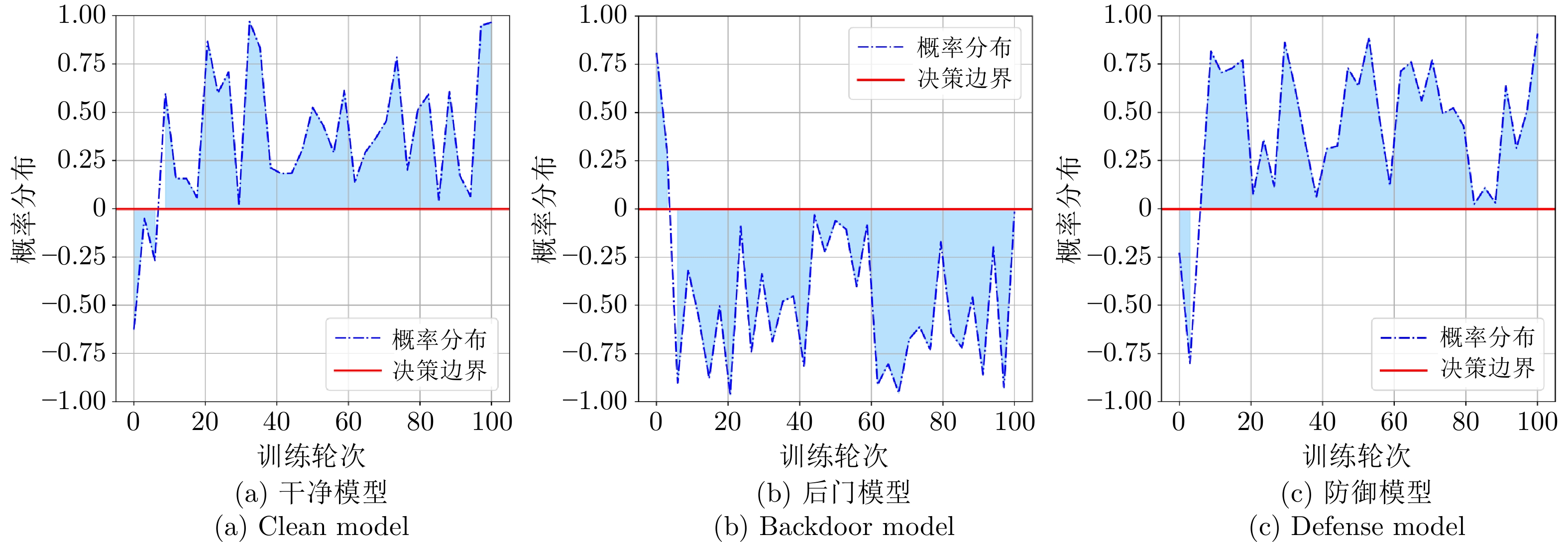

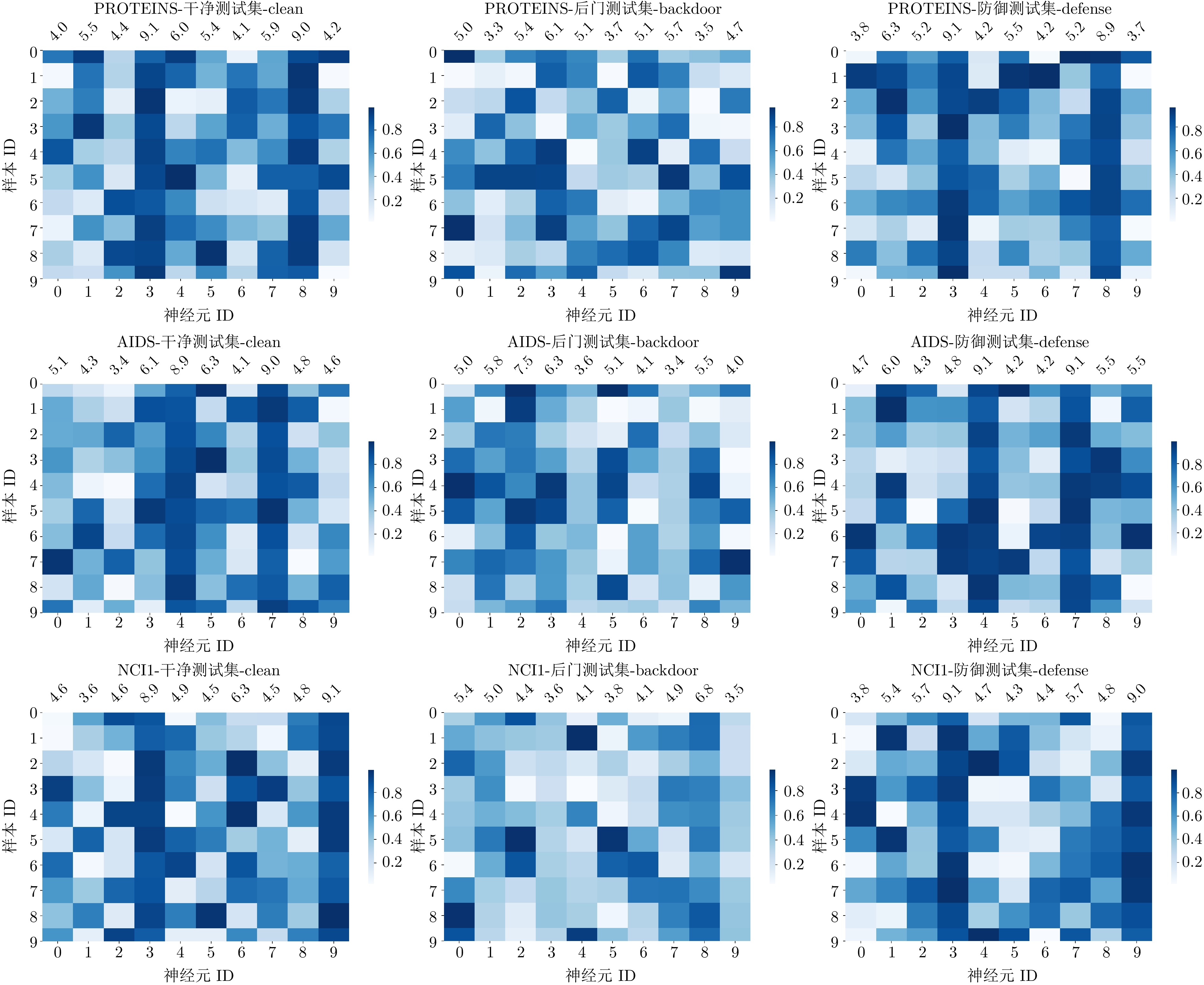

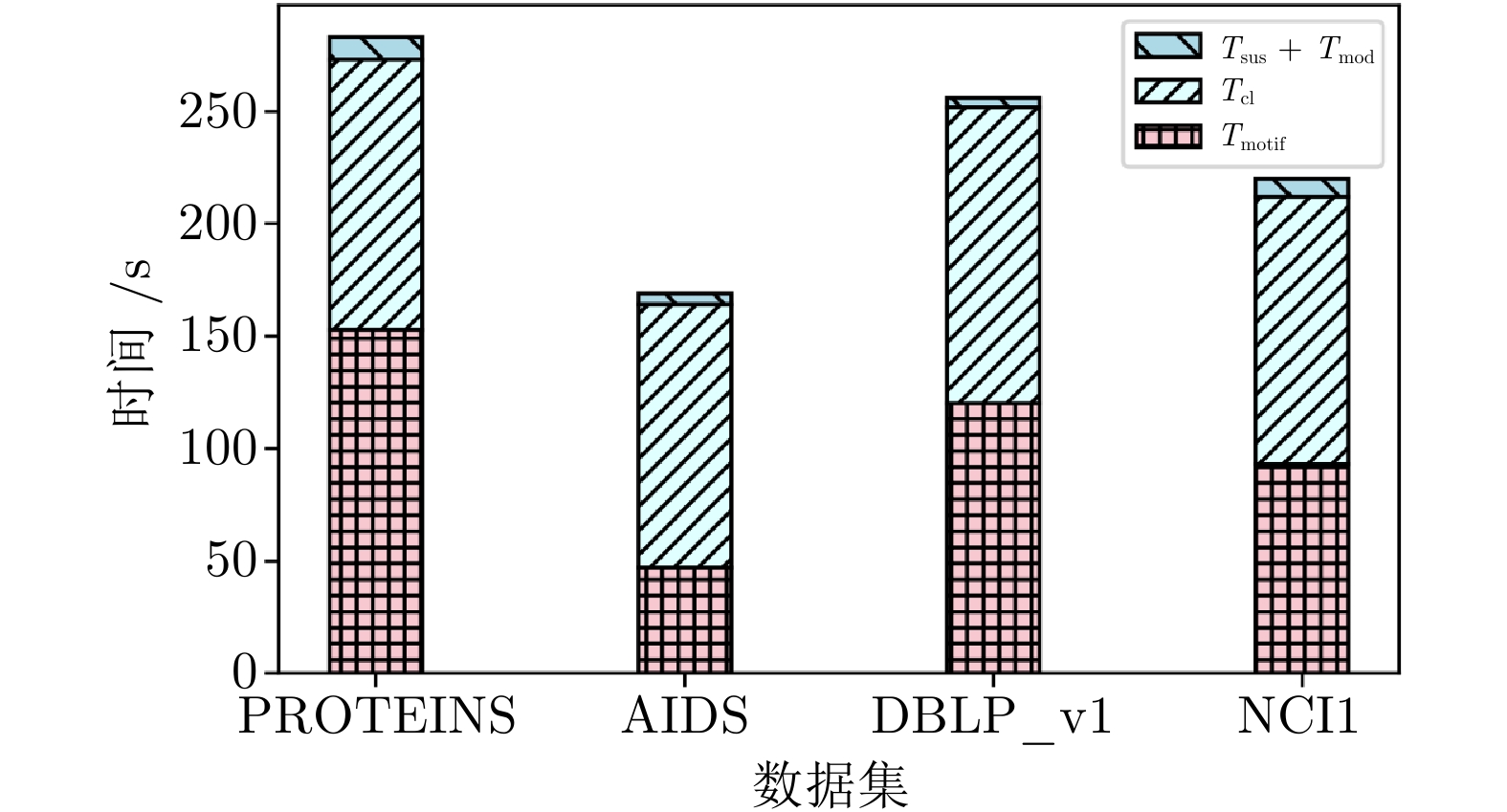

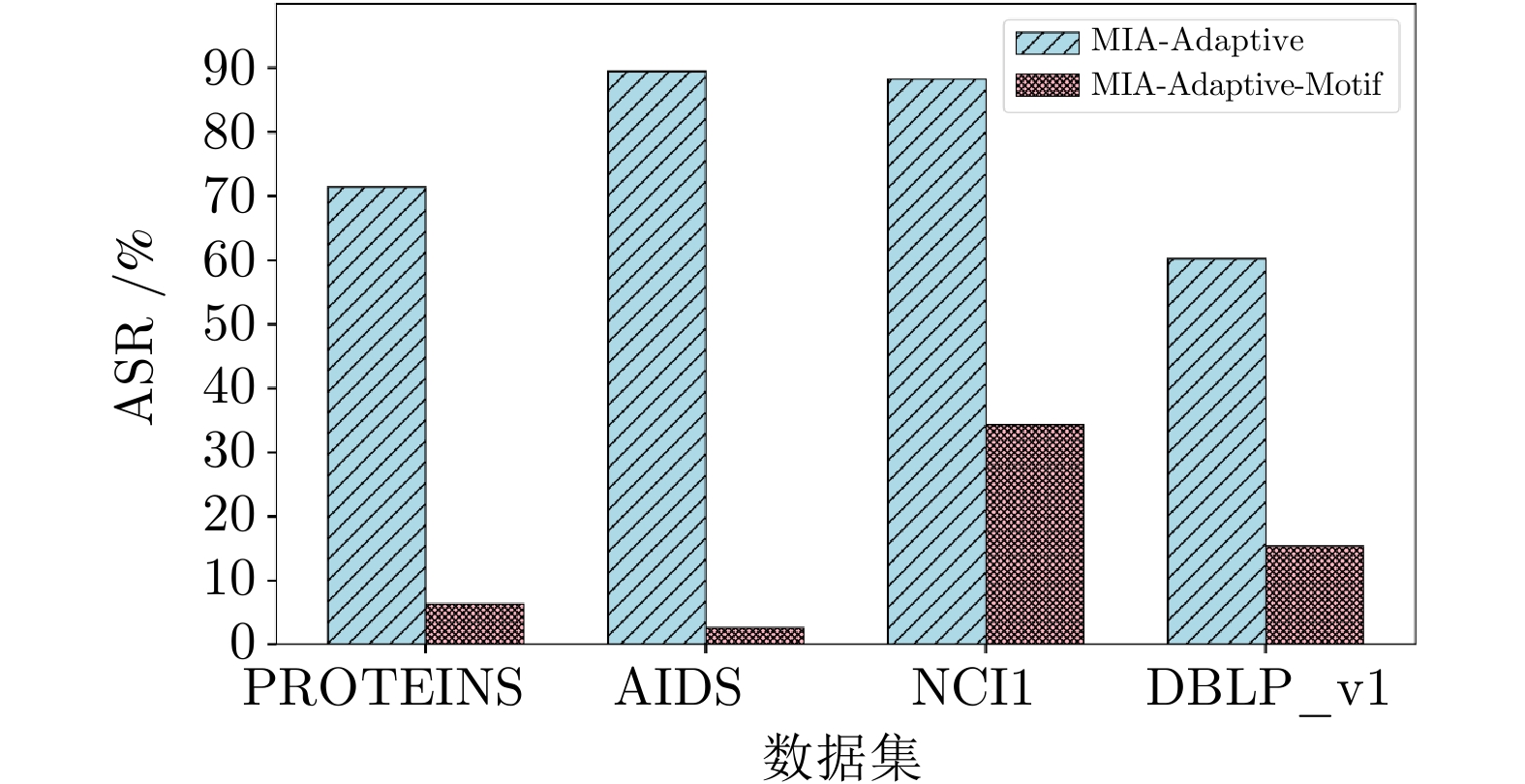

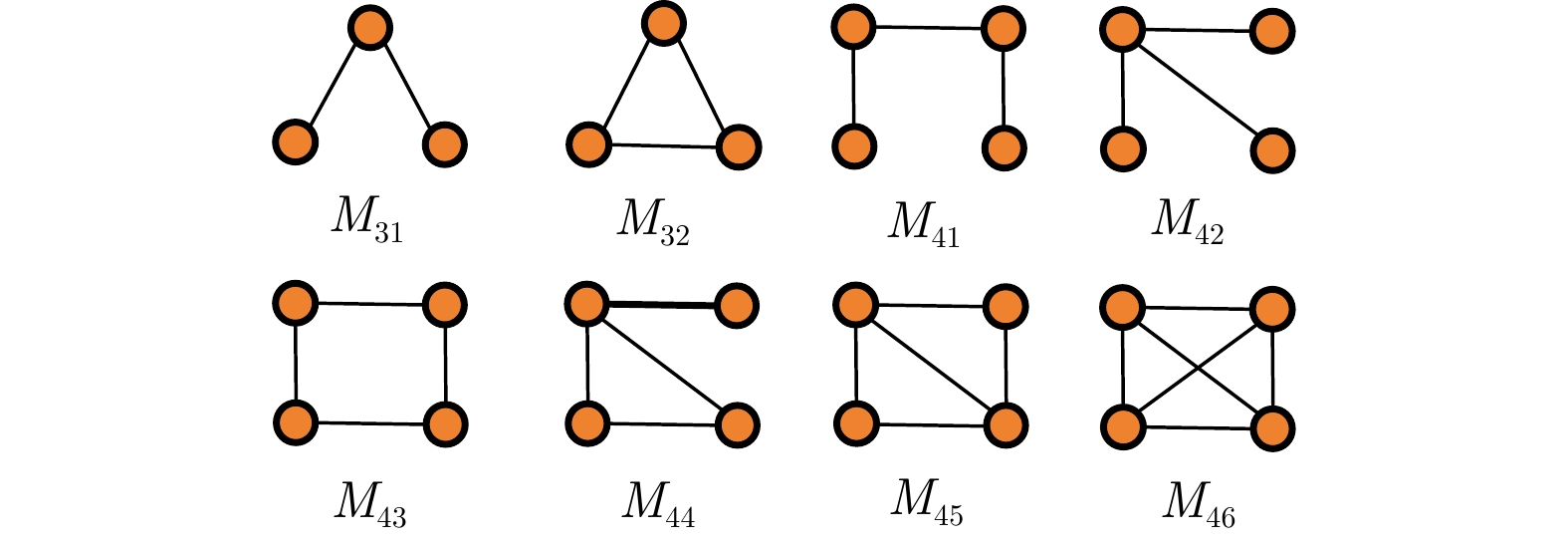

Abstract: Graph neural networks (GNNs) have demonstrated strong performance in graph data mining tasks and are widely applied in social networks, product recommendation, and other domains. In graph classification, model decisions rely heavily on global topological structure, which makes GNNs vulnerable to backdoor attacks. Existing studies show that injecting poisoned samples into the training data causes the trained model to be fooled by trigger-embedded samples at inference time, posing a serious threat to model security. However, existing defense methods generalize poorly across different backdoor attacks and struggle to balance main-task performance against defense success rate. To address these challenges, we propose, for the first time, a motif-augmented contrastive learning-based defense against backdoor attacks on GNNs, termed Motif-Defense, which defends against multiple unknown types of backdoor attack while incurring only a slight degradation in main-task performance. First, a contrastive learning model with motif-based graph augmentation is designed to select suspicious backdoor samples. Second, Jaccard similarity and a label smoothing strategy are used to purify the suspicious backdoor samples into clean samples, thereby defending against graph backdoor attacks. Finally, defense experiments on four real-world datasets, against six backdoor attack methods and five defense baselines, show that Motif-Defense reduces the attack success rate by 84.70% on average, while classification accuracy decreases by only 2.53% on average.
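The purification step is only named in the abstract, so the following is a minimal sketch of the two generic building blocks it mentions, not the authors' implementation. It assumes each node carries a set of discrete feature indices, that an edge is dropped when the Jaccard similarity of its endpoints falls below a threshold, and that a suspicious sample's hard label is replaced by a smoothed distribution. The function names, the `threshold` parameter, and the `eps` parameter are all hypothetical.

```python
from typing import Dict, List, Set, Tuple


def jaccard(a: Set[int], b: Set[int]) -> float:
    """Jaccard similarity |a & b| / |a | b|; taken as 0.0 when both sets are empty."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def prune_dissimilar_edges(
    edges: List[Tuple[int, int]],
    features: Dict[int, Set[int]],
    threshold: float,
) -> List[Tuple[int, int]]:
    """Keep only edges whose endpoint feature sets reach the similarity threshold.

    Assumption: low-similarity edges are treated as possible trigger edges.
    """
    return [
        (u, v) for u, v in edges
        if jaccard(features[u], features[v]) >= threshold
    ]


def smooth_label(label: int, num_classes: int, eps: float) -> List[float]:
    """Standard label smoothing: mass (1 - eps) on `label`, eps spread uniformly."""
    base = eps / num_classes
    return [base + (1.0 - eps if k == label else 0.0) for k in range(num_classes)]


if __name__ == "__main__":
    # Toy graph: nodes 0 and 1 share features, node 2 shares none.
    feats = {0: {1, 2, 3}, 1: {2, 3, 4}, 2: {7, 8}}
    print(prune_dissimilar_edges([(0, 1), (1, 2), (0, 2)], feats, threshold=0.3))
    print(smooth_label(1, num_classes=2, eps=0.2))
```

Smoothing the label of a suspicious sample weakens the association between a possible trigger and the target class without discarding the sample, which is one plausible reason for pairing it with structural pruning.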

Key words:

- graph neural networks
- backdoor attacks
- defense
- contrastive learning
- motif

Table 1 The basic statistics of datasets

| Dataset | Graphs | Avg. nodes | Avg. edges | Graph label distribution | Target class | Network type |
| --- | --- | --- | --- | --- | --- | --- |
| PROTEINS | 1113 | 39.06 | 72.82 | 663 [0], 450 [1] | 1 | Bioinformatics |
| AIDS | 2000 | 15.69 | 16.20 | 400 [0], 1600 [1] | 0 | Small molecule |
| NCI1 | 4110 | 29.87 | 32.30 | 2053 [0], 2057 [1] | 0 | Small molecule |
| DBLP_v1 | 19456 | 10.48 | 19.65 | 9530 [0], 9926 [1] | 0 | Social network |

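Table 2 The defense performance of Motif-Defense in different attack scenarios (%)

| Dataset | Metric | Defense | MIA | GTA | ER-B | MaxDCC | Motif-Backdoor | UGBA |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| PROTEINS (76.23) | ASR | No defense | 68.07 | 95.80 | 72.23 | 93.28 | 94.12 | 98.94 |
| PROTEINS (76.23) | ASR | Prune | 20.56 | 18.63 | 28.57 | 10.76 | 79.85 | 80.69 |
| PROTEINS (76.23) | ASR | BloGGaD | 20.67 | 9.75 | 13.92 | 11.26 | 24.87 | 23.63 |
| PROTEINS (76.23) | ASR | MD-GNN | 22.35 | 16.47 | 18.15 | 30.00 | 25.43 | 16.64 |
| PROTEINS (76.23) | ASR | CLB-Defense | 4.86 | 3.58 | 9.39 | 15.86 | 11.76 | 8.96 |
| PROTEINS (76.23) | ASR | ES | 13.61 | 12.35 | 9.71 | 11.76 | 16.64 | 15.37 |
| PROTEINS (76.23) | ASR | Motif-Defense | 4.03 | 2.26 | 8.93 | 9.07 | 10.92 | 4.58 |
| PROTEINS (76.23) | ACC | No defense | 66.82 | 65.02 | 64.13 | 66.37 | 74.89 | 73.95 |
| PROTEINS (76.23) | ACC | Prune | 73.45 | 72.38 | 72.56 | 73.00 | 76.23 | 72.72 |
| PROTEINS (76.23) | ACC | BloGGaD | 73.90 | 72.56 | 72.56 | 70.76 | 75.93 | 74.52 |
| PROTEINS (76.23) | ACC | MD-GNN | 66.69 | 69.23 | 65.47 | 69.06 | 71.33 | 70.97 |
| PROTEINS (76.23) | ACC | CLB-Defense | 73.35 | 72.44 | 72.24 | 74.39 | 75.03 | 71.45 |
| PROTEINS (76.23) | ACC | ES | 71.57 | 71.30 | 68.43 | 72.55 | 76.23 | 71.14 |
| PROTEINS (76.23) | ACC | Motif-Defense | 73.69 | 73.93 | 73.49 | 69.24 | 72.11 | 74.69 |
| AIDS (98.92) | ASR | No defense | 92.19 | 99.34 | 80.94 | 91.88 | 98.75 | 96.72 |
| AIDS (98.92) | ASR | Prune | 38.99 | 47.33 | 35.63 | 22.43 | 39.63 | 93.47 |
| AIDS (98.92) | ASR | BloGGaD | 14.75 | 20.75 | 14.63 | 9.19 | 19.87 | 22.41 |
| AIDS (98.92) | ASR | MD-GNN | 16.87 | 25.19 | 16.00 | 23.62 | 27.75 | 19.25 |
| AIDS (98.92) | ASR | CLB-Defense | 23.99 | 17.56 | 25.38 | 8.13 | 20.75 | 15.84 |
| AIDS (98.92) | ASR | ES | 1.21 | 9.63 | 4.33 | 11.33 | 19.63 | 18.25 |
| AIDS (98.92) | ASR | Motif-Defense | 0.68 | 4.12 | 7.87 | 7.03 | 10.62 | 9.54 |
| AIDS (98.92) | ACC | No defense | 94.00 | 98.25 | 94.00 | 95.25 | 99.00 | 98.65 |
| AIDS (98.92) | ACC | Prune | 96.50 | 96.33 | 96.25 | 82.33 | 97.45 | 94.93 |
| AIDS (98.92) | ACC | BloGGaD | 96.30 | 96.25 | 95.25 | 97.10 | 97.20 | 95.92 |
| AIDS (98.92) | ACC | MD-GNN | 95.45 | 94.47 | 94.75 | 95.85 | 98.11 | 96.38 |
| AIDS (98.92) | ACC | CLB-Defense | 97.45 | 97.07 | 96.57 | 97.86 | 98.16 | 97.05 |
| AIDS (98.92) | ACC | ES | 95.45 | 95.25 | 96.10 | 97.00 | 98.15 | 96.72 |
| AIDS (98.92) | ACC | Motif-Defense | 97.55 | 97.15 | 97.55 | 97.95 | 98.25 | 97.65 |
| NCI1 (80.41) | ASR | No defense | 96.98 | 100.00 | 98.33 | 100.00 | 100.00 | 100.00 |
| NCI1 (80.41) | ASR | Prune | 50.50 | 45.10 | 38.20 | 46.00 | 53.30 | 98.32 |
| NCI1 (80.41) | ASR | BloGGaD | 33.26 | 39.44 | 27.36 | 30.25 | 20.64 | 28.34 |
| NCI1 (80.41) | ASR | MD-GNN | 37.23 | 41.04 | 34.92 | 38.54 | 62.66 | 32.63 |
| NCI1 (80.41) | ASR | CLB-Defense | 55.36 | 46.85 | 45.39 | 43.58 | 48.96 | 23.09 |
| NCI1 (80.41) | ASR | ES | 39.57 | 40.31 | 39.46 | 32.07 | 41.00 | 18.36 |
| NCI1 (80.41) | ASR | Motif-Defense | 32.74 | 30.38 | 25.58 | 23.58 | 34.36 | 13.64 |
| NCI1 (80.41) | ACC | No defense | 73.36 | 76.52 | 70.80 | 76.89 | 80.41 | 81.45 |
| NCI1 (80.41) | ACC | Prune | 76.23 | 76.74 | 73.48 | 77.47 | 74.96 | 78.33 |
| NCI1 (80.41) | ACC | BloGGaD | 74.31 | 73.55 | 74.92 | 74.33 | 73.84 | 80.84 |
| NCI1 (80.41) | ACC | MD-GNN | 75.50 | 72.90 | 77.10 | 75.00 | 73.80 | 77.97 |
| NCI1 (80.41) | ACC | CLB-Defense | 76.06 | 76.87 | 75.74 | 76.53 | 75.78 | 79.36 |
| NCI1 (80.41) | ACC | ES | 75.06 | 77.20 | 73.58 | 77.13 | 75.11 | 80.33 |
| NCI1 (80.41) | ACC | Motif-Defense | 77.41 | 78.99 | 77.88 | 77.34 | 76.47 | 79.67 |
| DBLP_v1 (80.83) | ASR | No defense | 48.75 | 62.17 | 62.29 | 69.86 | 70.84 | 72.56 |
| DBLP_v1 (80.83) | ASR | Prune | 19.20 | 11.50 | 25.00 | 23.00 | 60.50 | 68.00 |
| DBLP_v1 (80.83) | ASR | BloGGaD | 8.60 | 10.90 | 19.20 | 17.50 | 24.00 | 19.20 |
| DBLP_v1 (80.83) | ASR | MD-GNN | 18.40 | 20.10 | 32.77 | 24.00 | 29.50 | 35.40 |
| DBLP_v1 (80.83) | ASR | CLB-Defense | 10.33 | 10.28 | 15.28 | 13.66 | 24.39 | 18.52 |
| DBLP_v1 (80.83) | ASR | ES | 18.80 | 10.36 | 18.89 | 28.25 | 31.00 | 26.79 |
| DBLP_v1 (80.83) | ASR | Motif-Defense | 14.21 | 6.60 | 13.67 | 8.75 | 22.33 | 10.35 |
| DBLP_v1 (80.83) | ACC | No defense | 73.46 | 67.78 | 79.52 | 76.23 | 78.85 | 80.36 |
| DBLP_v1 (80.83) | ACC | Prune | 76.00 | 75.20 | 74.05 | 78.00 | 73.70 | 70.03 |
| DBLP_v1 (80.83) | ACC | BloGGaD | 75.90 | 72.80 | 76.50 | 74.10 | 72.50 | 75.03 |
| DBLP_v1 (80.83) | ACC | MD-GNN | 77.20 | 73.60 | 75.80 | 79.30 | 74.70 | 76.32 |
| DBLP_v1 (80.83) | ACC | CLB-Defense | 79.52 | 79.33 | 79.62 | 79.78 | 78.54 | 78.14 |
| DBLP_v1 (80.83) | ACC | ES | 77.92 | 77.72 | 77.01 | 77.84 | 75.11 | 76.94 |
| DBLP_v1 (80.83) | ACC | Motif-Defense | 79.68 | 79.39 | 79.87 | 79.96 | 80.24 | 79.11 |

Note: The value in parentheses in the Dataset column is the original accuracy of the GIN model.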
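The averages quoted in the abstract can be checked against Table 2. The sketch below assumes one plausible aggregation that the source does not spell out: for each dataset, take the mean over the six attacks, compute the relative change (against the undefended ASR, or against the clean GIN accuracy in parentheses), then average over the four datasets. Under that assumption the numbers come out at roughly 84.7% ASR reduction and 2.53% accuracy drop. The variable and function names are illustrative only.

```python
from typing import Dict, List

# Values transcribed from Table 2; column order: MIA, GTA, ER-B, MaxDCC, Motif-Backdoor, UGBA.
clean_acc: Dict[str, float] = {
    "PROTEINS": 76.23, "AIDS": 98.92, "NCI1": 80.41, "DBLP_v1": 80.83,
}
asr_no_defense: Dict[str, List[float]] = {
    "PROTEINS": [68.07, 95.80, 72.23, 93.28, 94.12, 98.94],
    "AIDS": [92.19, 99.34, 80.94, 91.88, 98.75, 96.72],
    "NCI1": [96.98, 100.00, 98.33, 100.00, 100.00, 100.00],
    "DBLP_v1": [48.75, 62.17, 62.29, 69.86, 70.84, 72.56],
}
asr_motif: Dict[str, List[float]] = {
    "PROTEINS": [4.03, 2.26, 8.93, 9.07, 10.92, 4.58],
    "AIDS": [0.68, 4.12, 7.87, 7.03, 10.62, 9.54],
    "NCI1": [32.74, 30.38, 25.58, 23.58, 34.36, 13.64],
    "DBLP_v1": [14.21, 6.60, 13.67, 8.75, 22.33, 10.35],
}
acc_motif: Dict[str, List[float]] = {
    "PROTEINS": [73.69, 73.93, 73.49, 69.24, 72.11, 74.69],
    "AIDS": [97.55, 97.15, 97.55, 97.95, 98.25, 97.65],
    "NCI1": [77.41, 78.99, 77.88, 77.34, 76.47, 79.67],
    "DBLP_v1": [79.68, 79.39, 79.87, 79.96, 80.24, 79.11],
}


def mean(xs: List[float]) -> float:
    return sum(xs) / len(xs)


def avg_relative_asr_reduction() -> float:
    """Mean over datasets of the relative ASR reduction, in percent (assumed formula)."""
    per_dataset = [
        1.0 - mean(asr_motif[d]) / mean(asr_no_defense[d]) for d in clean_acc
    ]
    return 100.0 * mean(per_dataset)


def avg_relative_acc_drop() -> float:
    """Mean over datasets of the relative drop from clean accuracy, in percent (assumed formula)."""
    per_dataset = [
        (clean_acc[d] - mean(acc_motif[d])) / clean_acc[d] for d in clean_acc
    ]
    return 100.0 * mean(per_dataset)


if __name__ == "__main__":
    print(f"ASR reduction: {avg_relative_asr_reduction():.2f}%")
    print(f"ACC drop: {avg_relative_acc_drop():.2f}%")
```

Other aggregations, such as averaging per-cell relative changes, would shift the second decimal place, so small deviations from the quoted figures are expected.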

Table 3 The performance results under different thresholds $o_1$ (AIDS + GTA) (%)

| $o_1$ | ASR | ACC | DR | FDR |
| --- | --- | --- | --- | --- |
| 0.3 | 8.53 | 95.81 | 99.00 | 6.81 |
| 0.4 | 5.27 | 96.59 | 97.51 | 3.02 |
| 0.5 | 4.12 | 97.15 | 95.84 | 1.53 |
| 0.6 | 6.81 | 97.45 | 92.07 | 0.82 |
| 0.7 | 10.34 | 97.86 | 86.23 | 0.45 |

