Bionic Intelligent Grasping Method of Space Manipulators Based on Progressive Imitation-reinforcement Learning


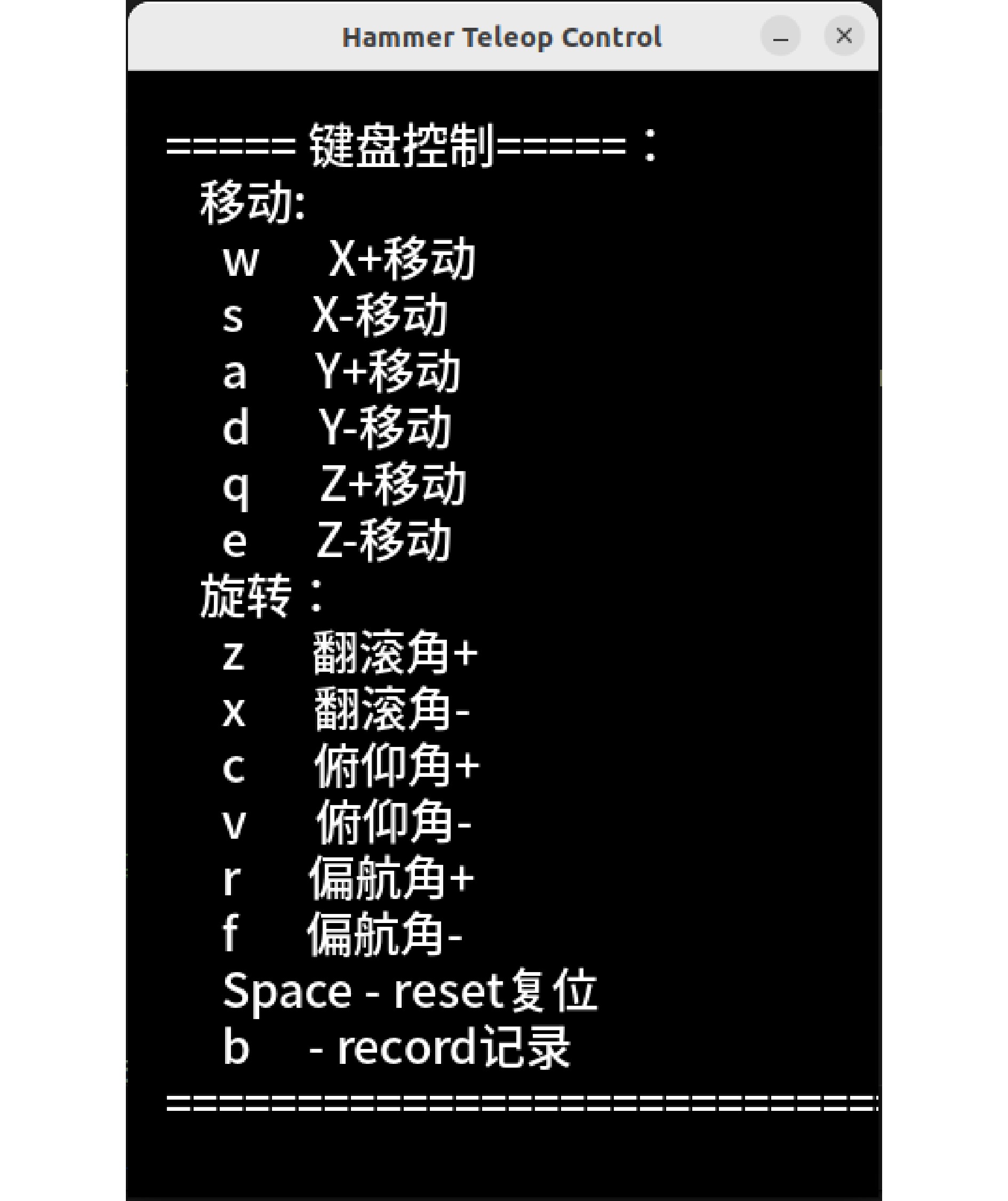

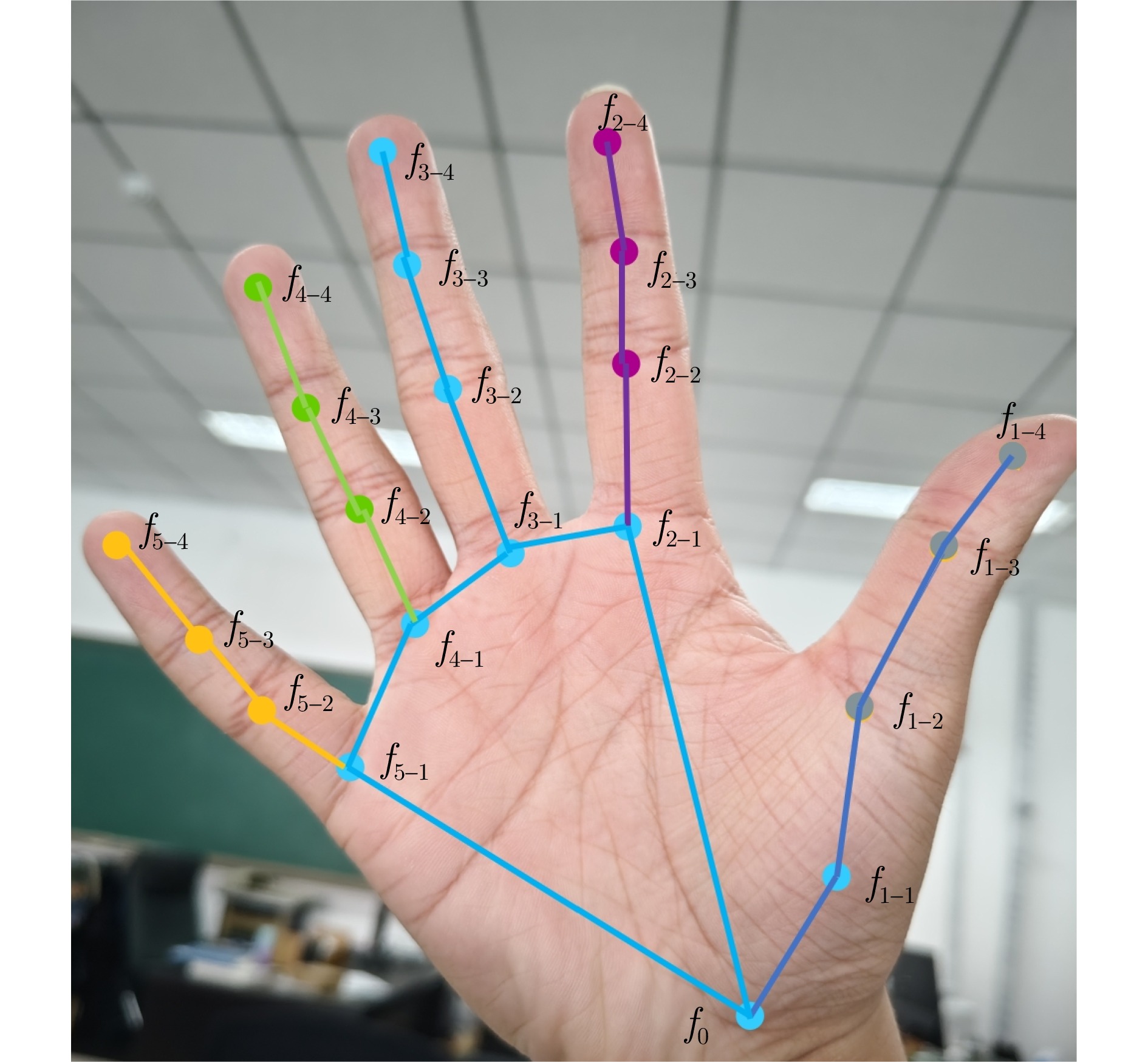

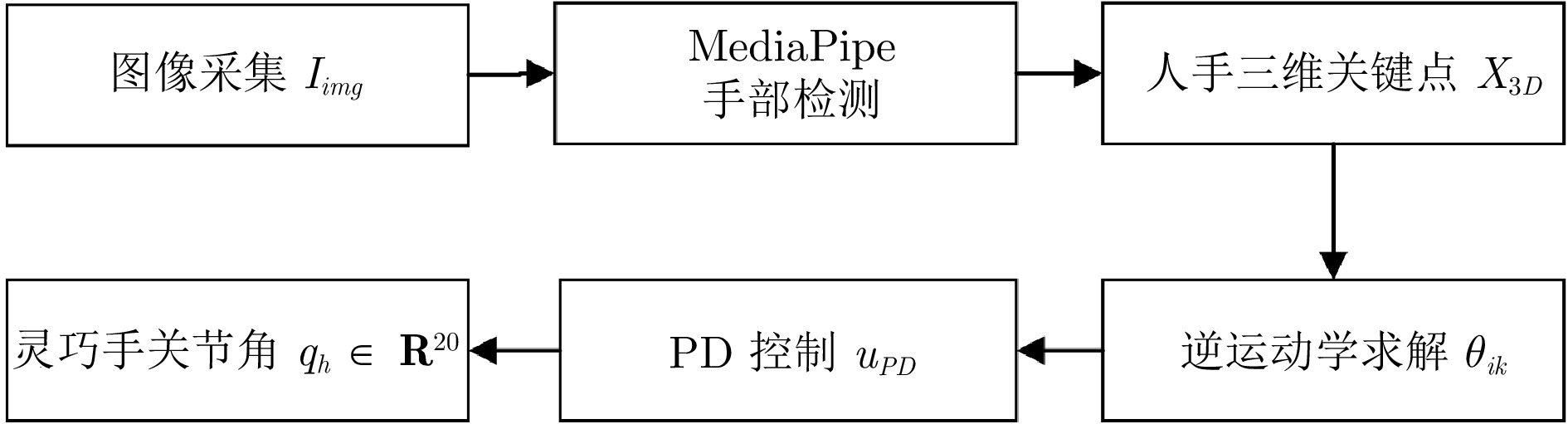

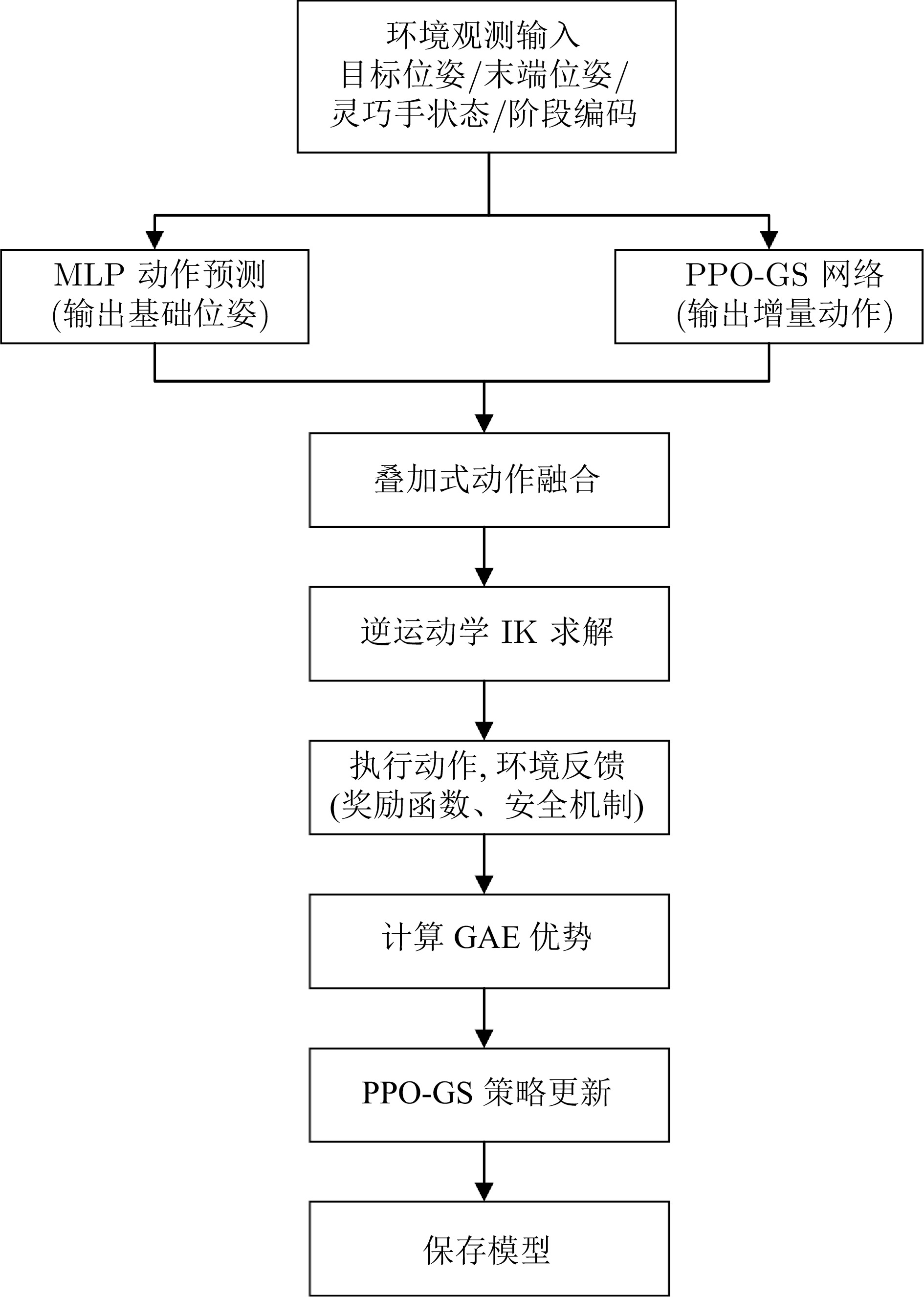

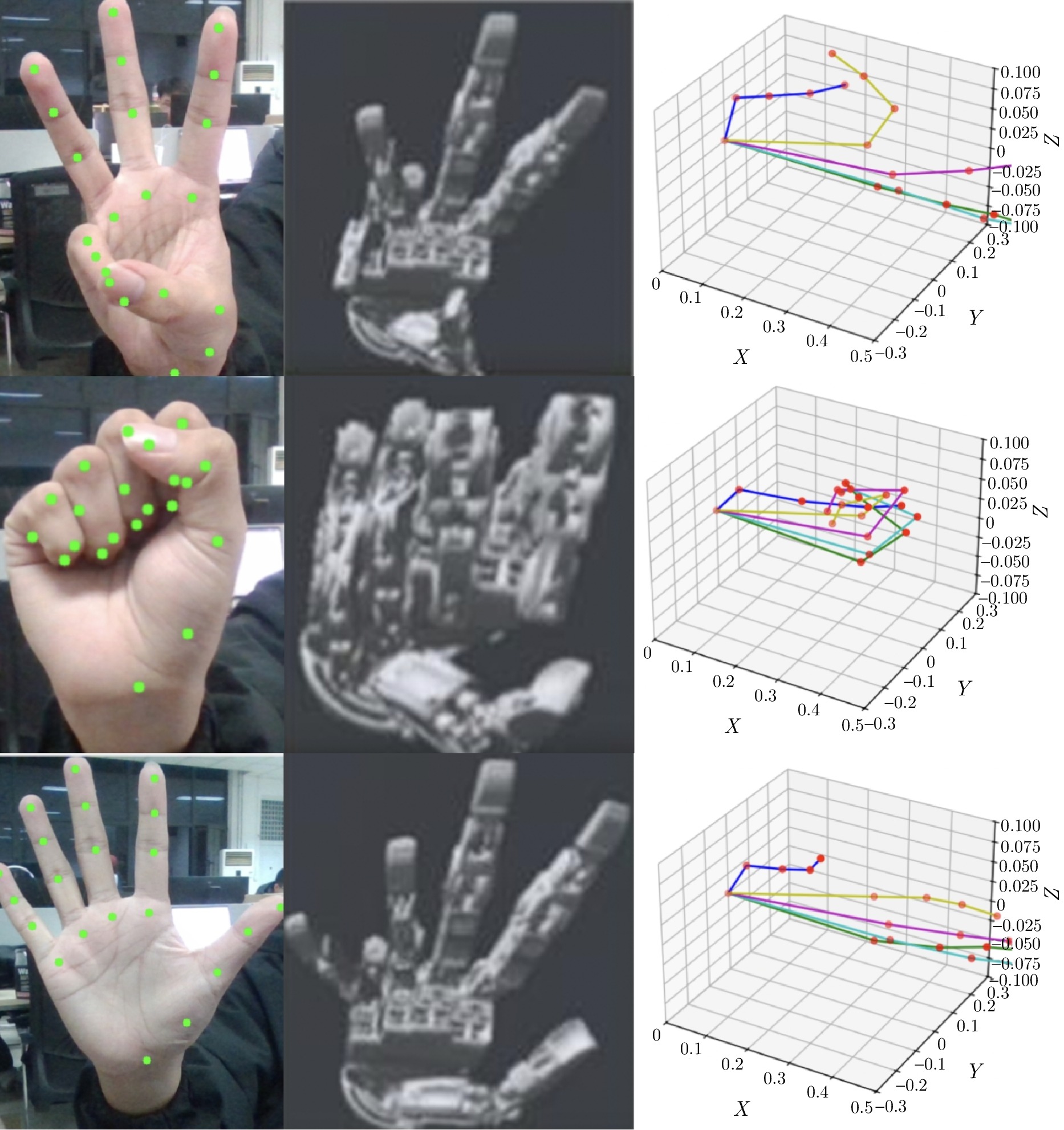

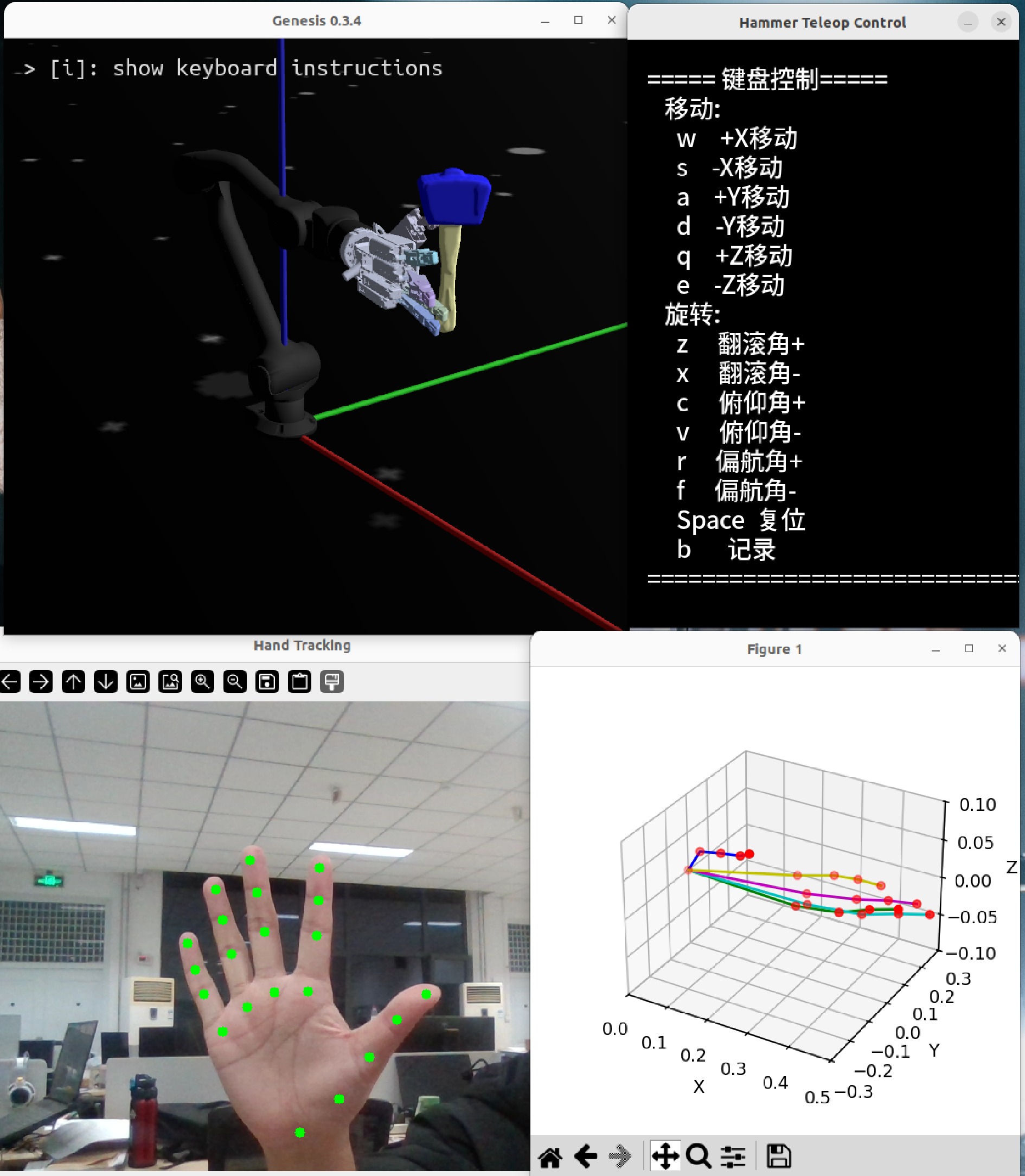

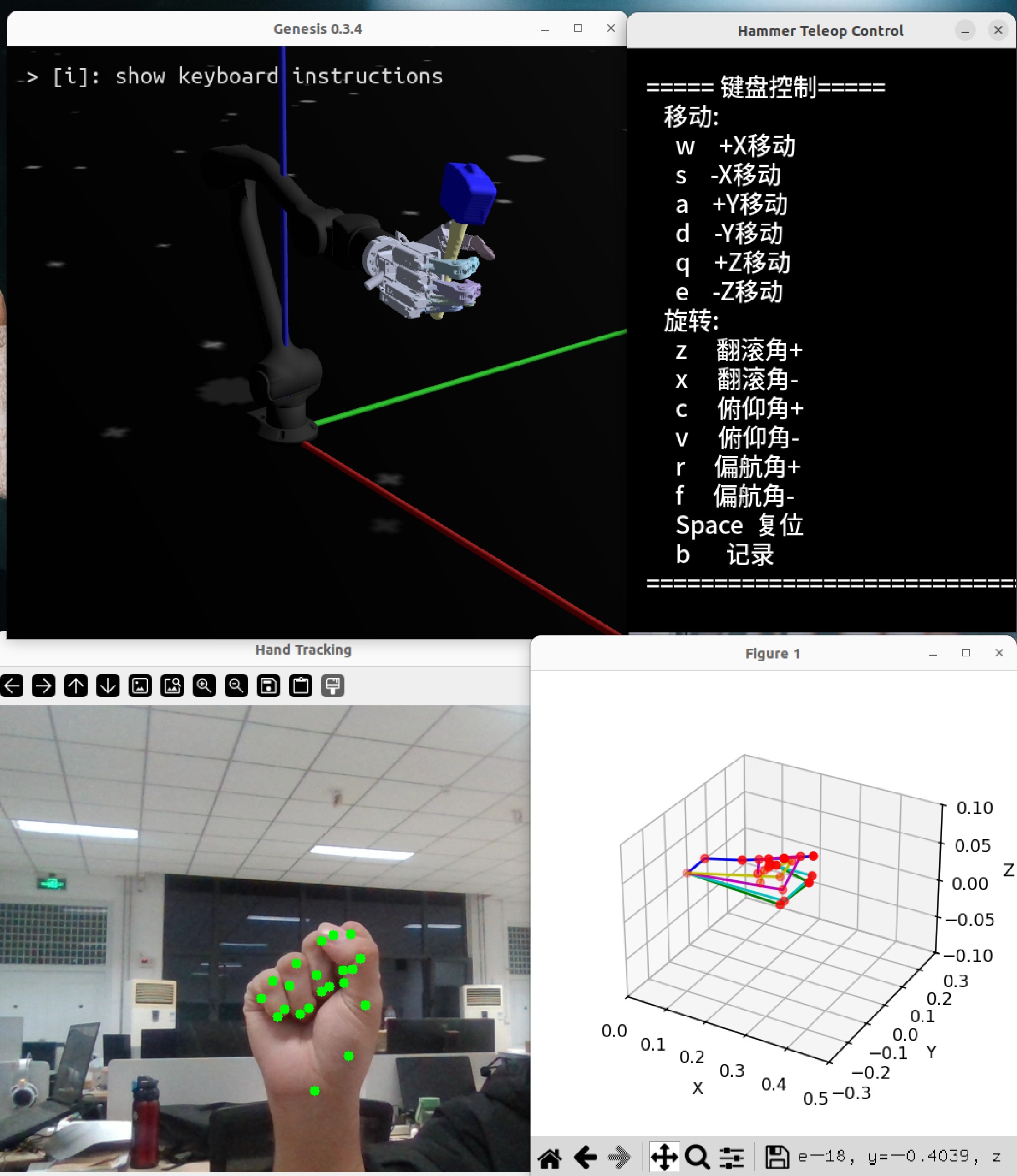

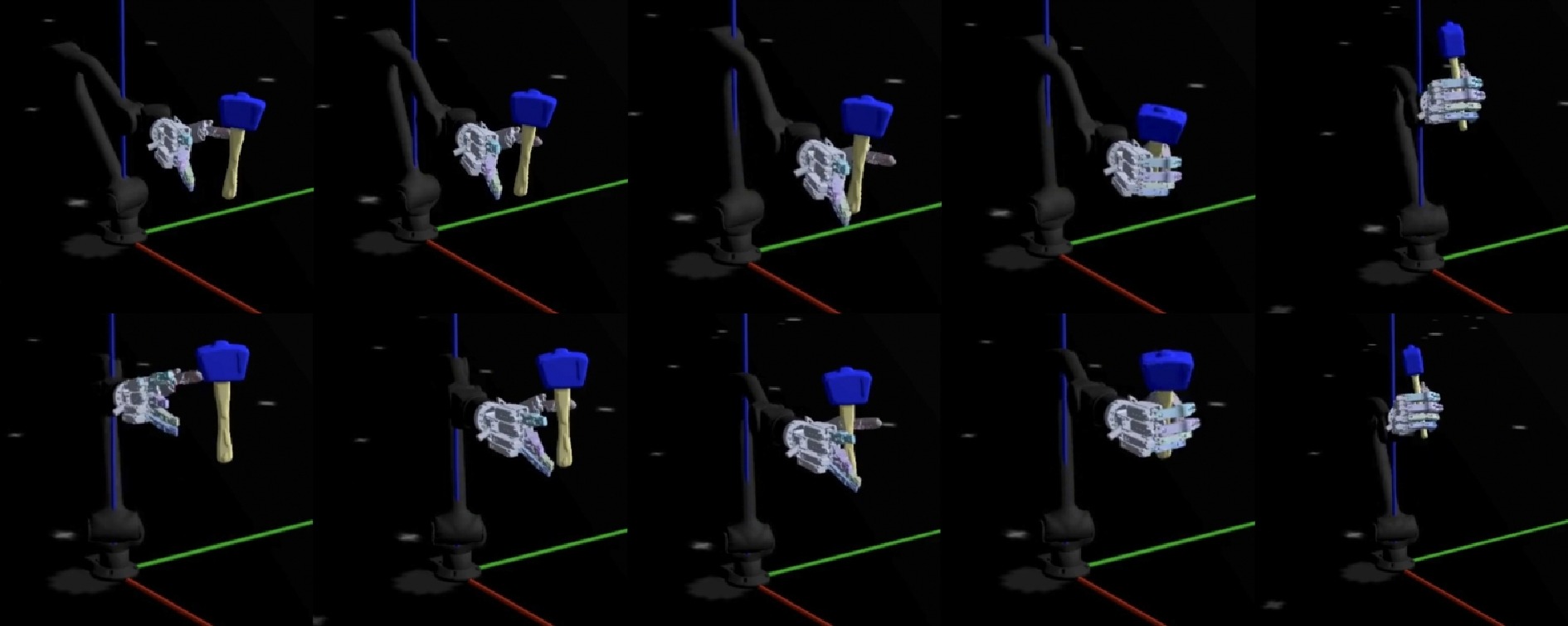

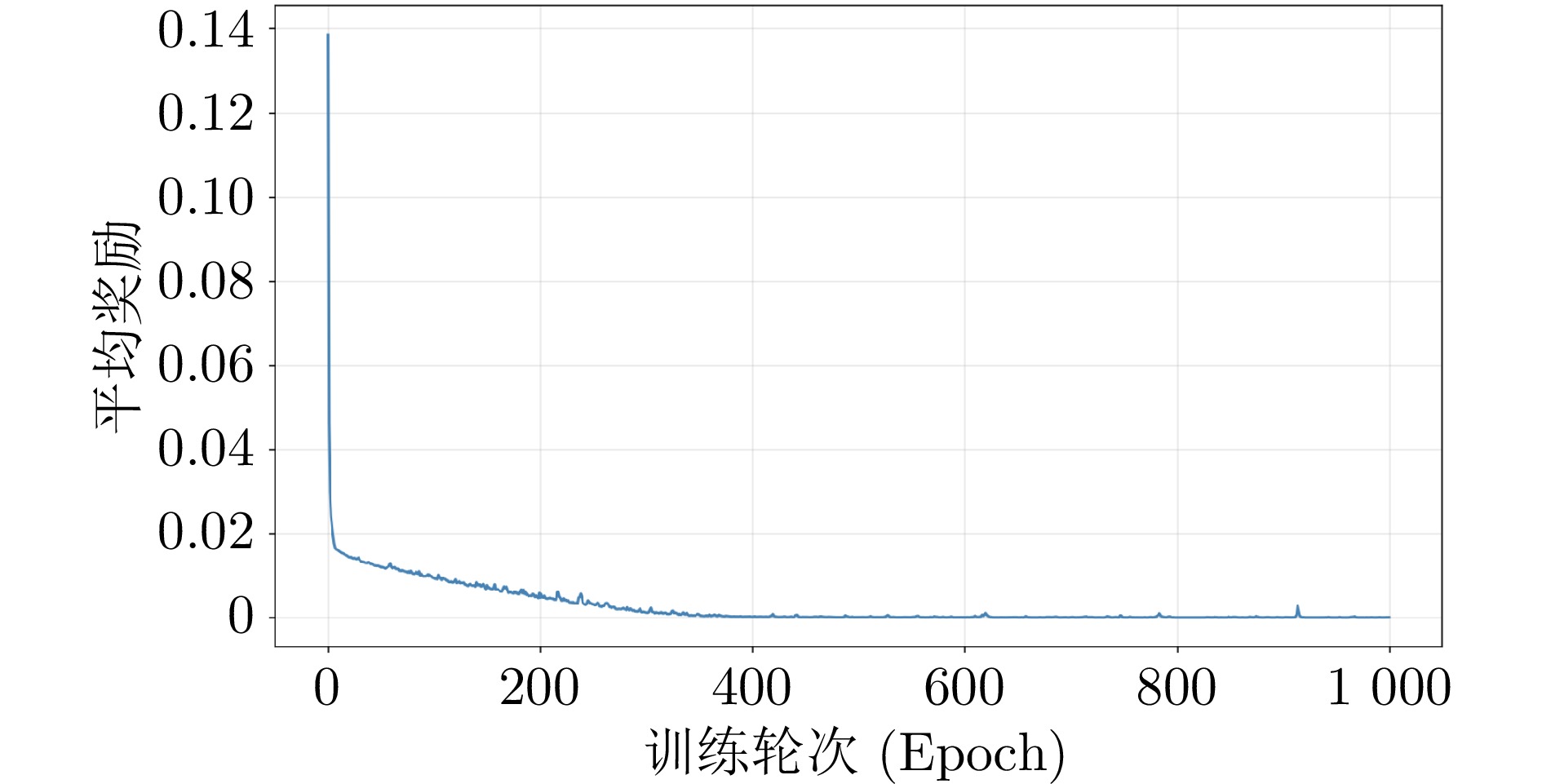

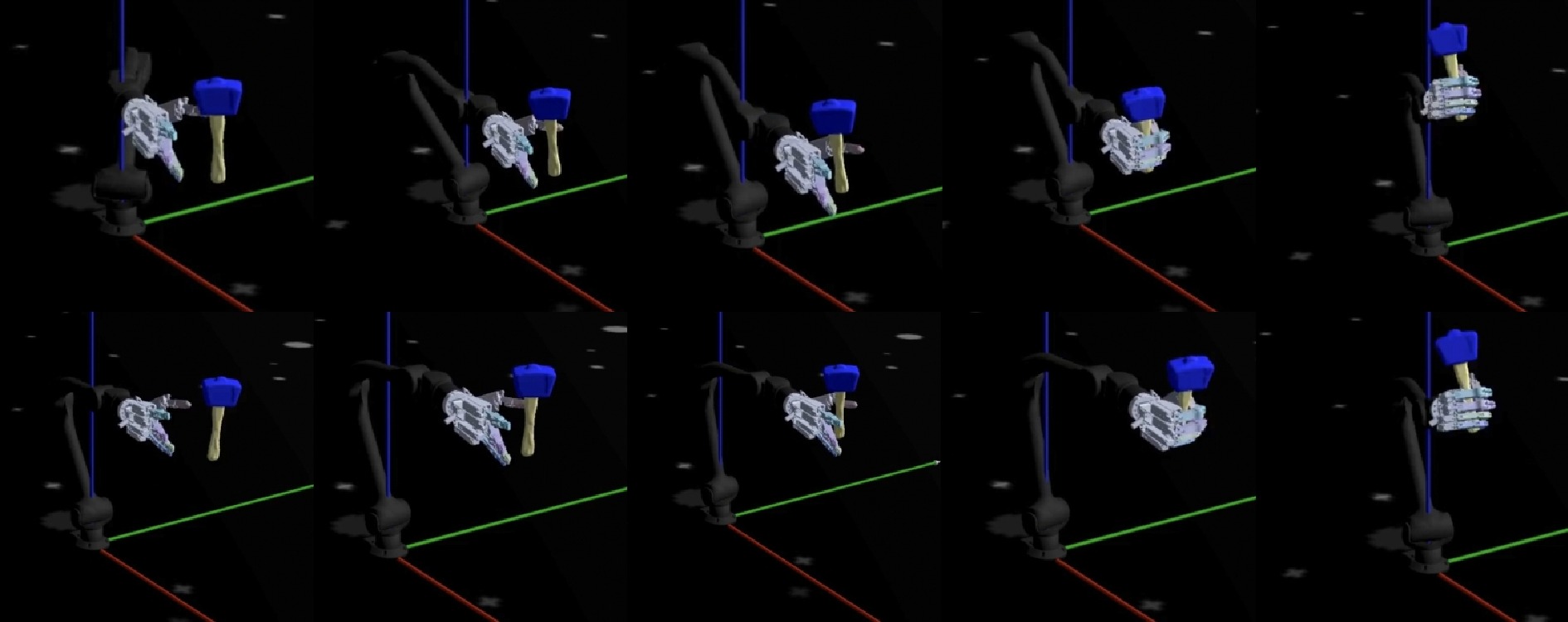

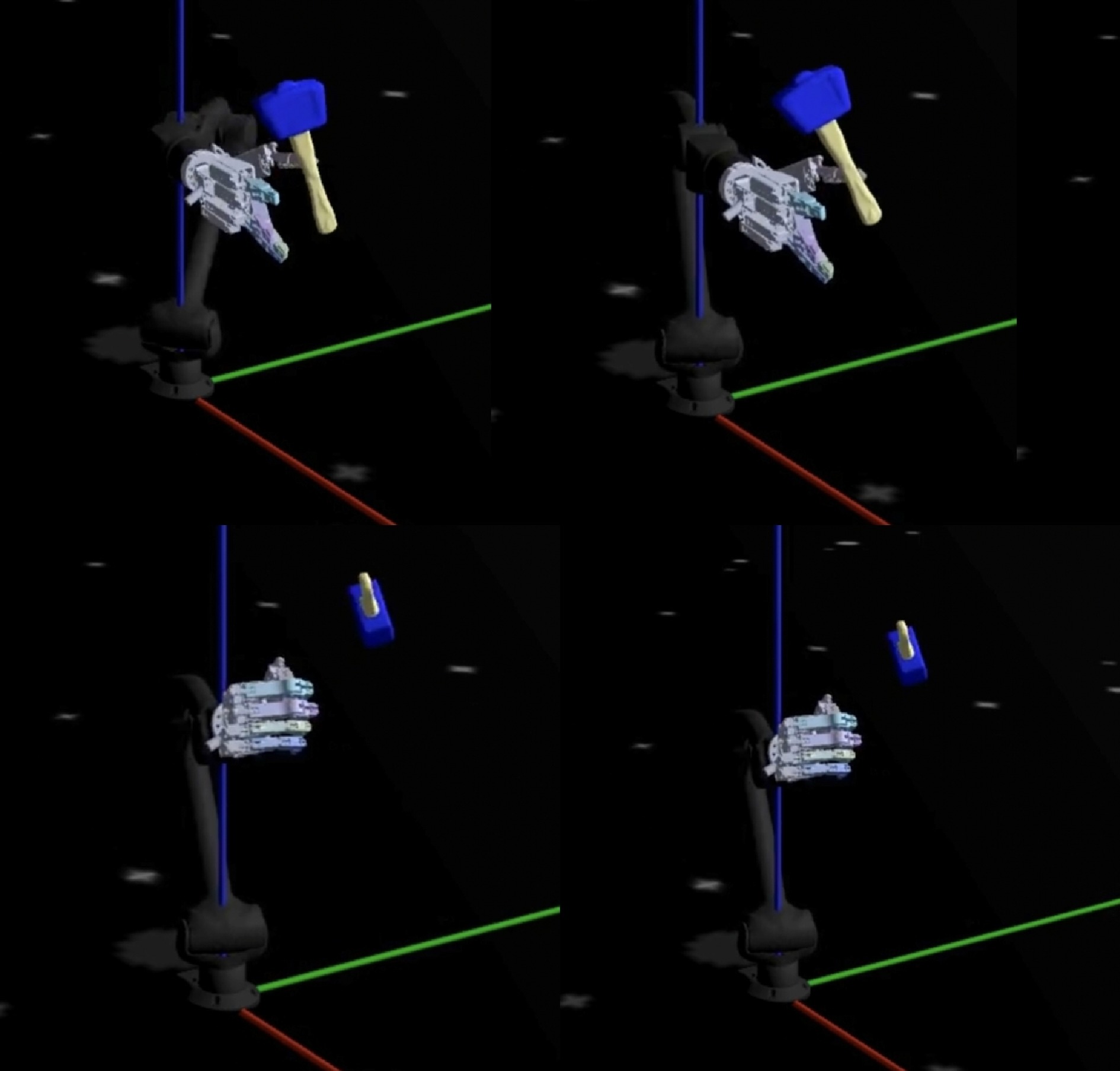

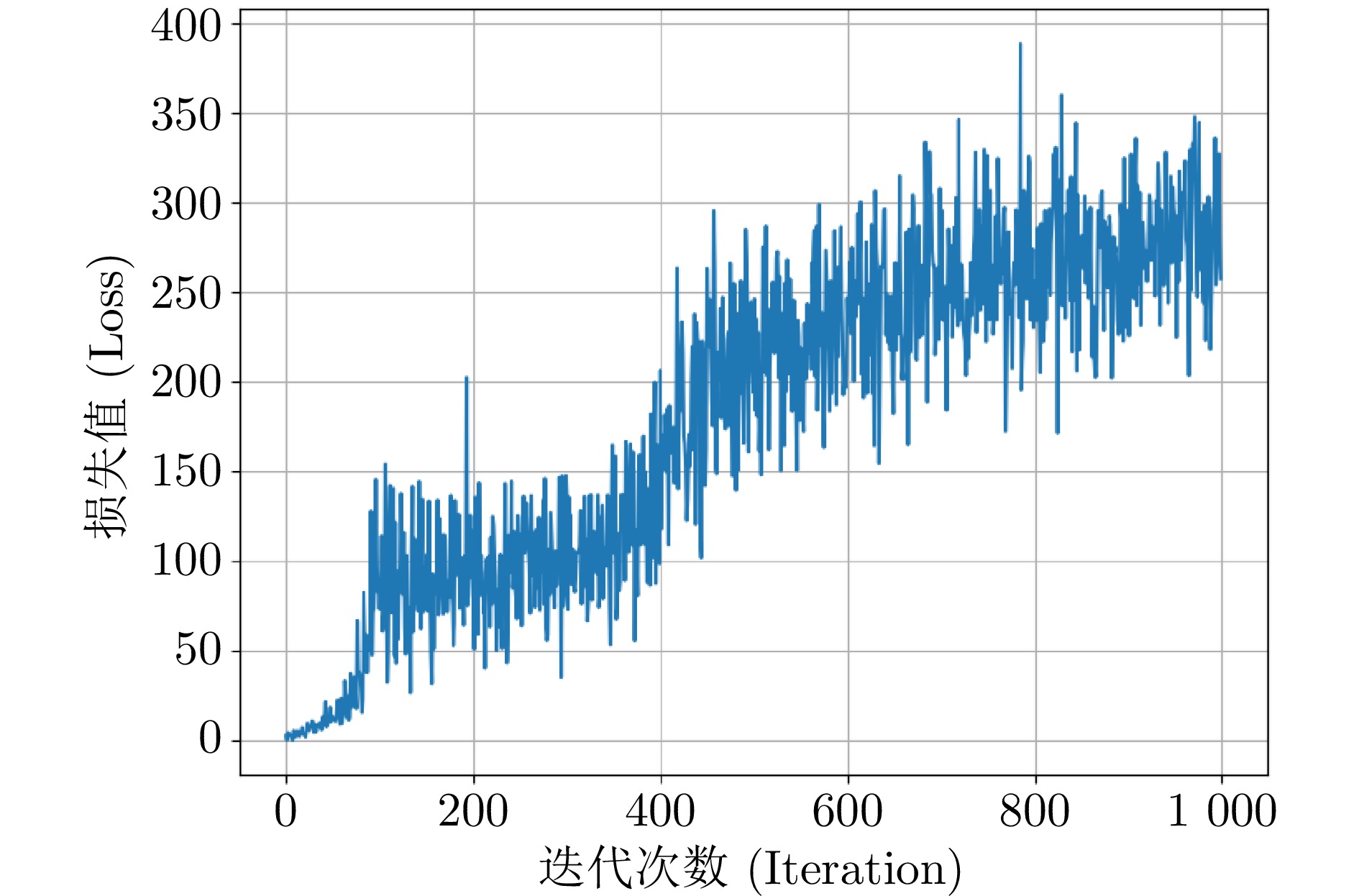

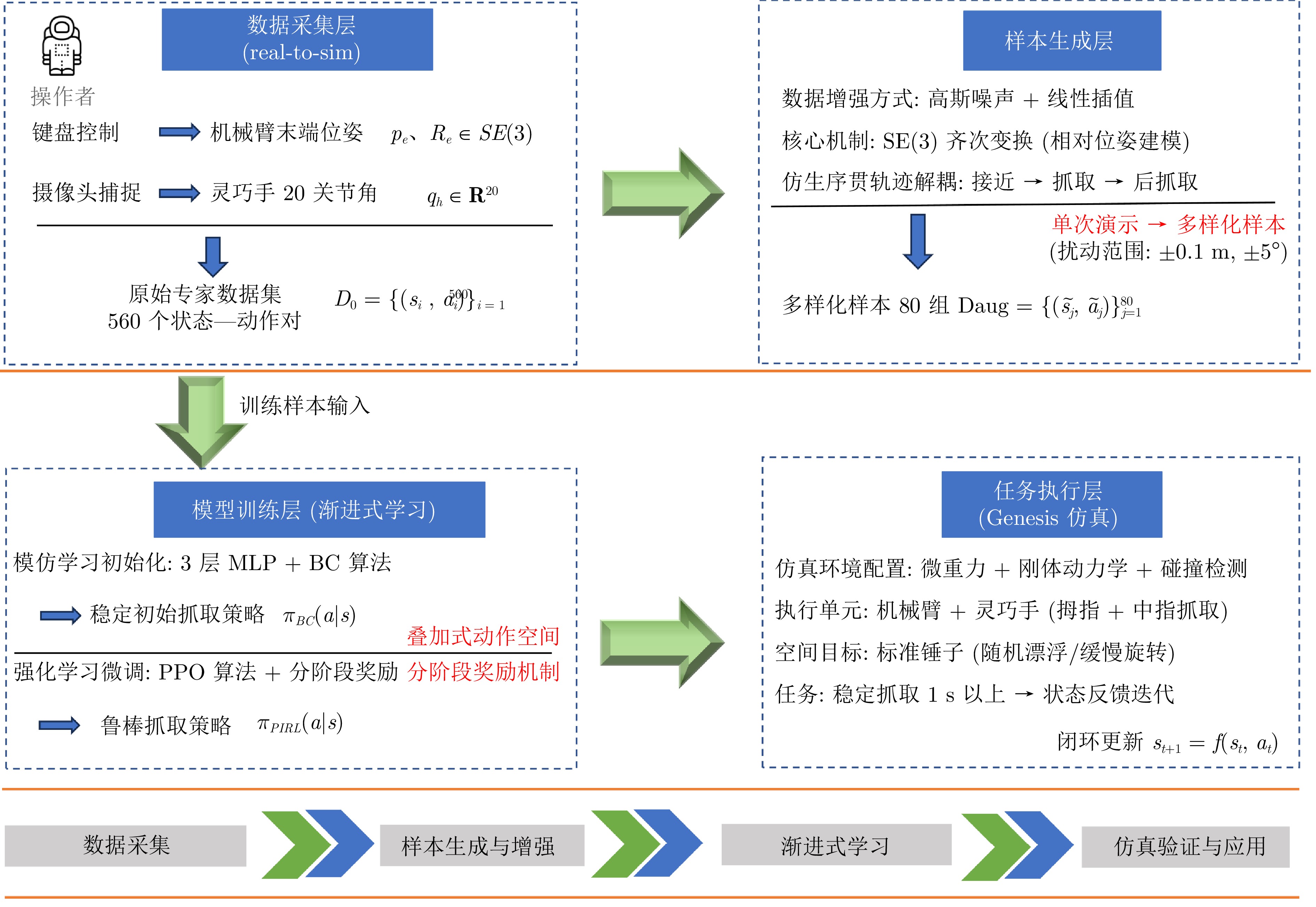

Abstract: Space manipulators that autonomously grasp floating targets in microgravity face three difficulties: samples are hard to acquire, generalization is weak, and adaptation to dynamic disturbances is poor. To address these, a progressive imitation-reinforcement learning method that incorporates bionic intelligence is proposed. First, using expert demonstrations of human arm-hand coordinated operation collected through teleoperation, an initial grasping policy is built as a multi-layer perceptron (MLP) and trained for bionic grasping by behavior cloning. Next, the initial model is embedded in the high-fidelity Genesis space-operation simulation environment, and a proximal policy optimization algorithm for space grasping is used to fine-tune the grasping policy online. A stacked action space and a staged reward mechanism allow expert prior knowledge and autonomous exploration of the environment to be optimized jointly, which effectively addresses the distribution shift of imitation learning and the sample-efficiency bottleneck of reinforcement learning. Experimental results show that the proposed method achieves a grasp success rate of 89.5% under random target pose disturbances, 14.5% higher than MLP-based imitation learning, and significantly improves the robustness and environmental adaptability of the policy in complex space scenarios with target pose deviations. The method provides a new technical solution for autonomous grasping of floating targets by space manipulators in microgravity.


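Table 1 MLP network structure

| Network layer | Dimension | Description |
| --- | --- | --- |
| Input layer | 15 | Current end-effector pose of the manipulator (7-D) + current tool pose (7-D) + dexterous hand state (1-D) |
| Hidden layer 1 | 128 | Feature extraction layer; processes the 15-D input and performs an initial compression of redundant information |
| Hidden layer 2 | 64 | Feature refinement layer; further distills the key manipulation features |
| Output layer | 8 | Desired end-effector pose of the manipulator (7-D) + desired motion state of the dexterous hand (1-D) |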
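As a quick check on the sizes in Table 1, the sketch below counts the trainable parameters of a 15-128-64-8 fully connected network and runs one forward pass in plain Python. The ReLU activation, the random initialization, and the helper names (`LAYER_SIZES`, `count_parameters`, `init_mlp`, `forward`) are assumptions for illustration; the table does not state which activation the MLP uses.

```python
import random

# Layer widths taken from Table 1: input 15, hidden 128 and 64, output 8.
LAYER_SIZES = [15, 128, 64, 8]


def count_parameters(sizes):
    """Weights plus biases of a fully connected network."""
    return sum(n_in * n_out + n_out for n_in, n_out in zip(sizes, sizes[1:]))


def init_mlp(sizes, seed=0):
    """Small random weights and zero biases; placeholders, not trained values."""
    rng = random.Random(seed)
    layers = []
    for n_in, n_out in zip(sizes, sizes[1:]):
        weights = [[rng.uniform(-0.1, 0.1) for _ in range(n_in)] for _ in range(n_out)]
        biases = [0.0] * n_out
        layers.append((weights, biases))
    return layers


def forward(layers, x):
    """Forward pass with ReLU on hidden layers (assumed) and a linear output."""
    for index, (weights, biases) in enumerate(layers):
        x = [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, biases)]
        if index < len(layers) - 1:
            x = [max(0.0, v) for v in x]
    return x


# 7-D end-effector pose + 7-D tool pose + 1-D hand state = 15 inputs.
observation = [0.0] * 7 + [0.0] * 7 + [0.0]
action = forward(init_mlp(LAYER_SIZES), observation)
print(count_parameters(LAYER_SIZES), len(action))
```

With these layer widths the network has 10,824 parameters and returns an 8-D command, which is small enough for fast inference on modest hardware.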

Table 2 PPO network structure

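| Network layer | Dimension | Description |
| --- | --- | --- |
| Input layer | 15 | Observation vector: grasp target pose (3+4), end-effector pose (3+4), stage encoding (1) |
| Actor hidden layer 1 | 128 | Fully connected layer, ELU activation |
| Actor hidden layer 2 | 64 | Fully connected layer, ELU activation |
| Actor output layer | 7 | Relative incremental action: $\Delta$ position, $\Delta$ Euler angles, grasp command |
| Critic hidden layer 1 | 128 | Fully connected layer, ELU activation |
| Critic hidden layer 2 | 64 | Fully connected layer, ELU activation |
| Critic output layer | 1 | Scalar state-value estimate $V(s)$ |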
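One way to read the relative incremental action in Table 2 together with the stacked action space mentioned in the abstract is that the 7-D output of the PPO actor is added on top of the 8-D command from the imitation-learned MLP. The sketch below shows such a composition under stated assumptions: quaternions are ordered (w, x, y, z), Euler increments are roll-pitch-yaw applied in the fixed base frame, the residual is scaled by the hypothetical limits `MAX_DPOS` and `MAX_DROT`, and the hand state lies in [0, 1]. None of these conventions is confirmed by the source.

```python
import math

MAX_DPOS = 0.02  # metres per step, hypothetical scale of the position residual
MAX_DROT = 0.05  # radians per step, hypothetical scale of the rotation residual


def euler_to_quat(roll, pitch, yaw):
    """Roll-pitch-yaw (ZYX convention) to a unit quaternion (w, x, y, z)."""
    cr, sr = math.cos(roll / 2), math.sin(roll / 2)
    cp, sp = math.cos(pitch / 2), math.sin(pitch / 2)
    cy, sy = math.cos(yaw / 2), math.sin(yaw / 2)
    return (
        cr * cp * cy + sr * sp * sy,
        sr * cp * cy - cr * sp * sy,
        cr * sp * cy + sr * cp * sy,
        cr * cp * sy - sr * sp * cy,
    )


def quat_mul(a, b):
    """Hamilton product a * b for quaternions in (w, x, y, z) order."""
    aw, ax, ay, az = a
    bw, bx, by, bz = b
    return (
        aw * bw - ax * bx - ay * by - az * bz,
        aw * bx + ax * bw + ay * bz - az * by,
        aw * by - ax * bz + ay * bw + az * bx,
        aw * bz + ax * by - ay * bx + az * bw,
    )


def stack_action(base, residual):
    """Combine the 8-D base command with the 7-D residual.

    base: [x, y, z, qw, qx, qy, qz, hand]
    residual: [dx, dy, dz, droll, dpitch, dyaw, grasp], each clipped to [-1, 1].
    """
    clipped = [max(-1.0, min(1.0, r)) for r in residual]
    position = [p + MAX_DPOS * d for p, d in zip(base[:3], clipped[:3])]
    delta_q = euler_to_quat(*(MAX_DROT * a for a in clipped[3:6]))
    # Left-multiplying applies the increment in the fixed base frame.
    orientation = quat_mul(delta_q, tuple(base[3:7]))
    norm = math.sqrt(sum(c * c for c in orientation))
    orientation = [c / norm for c in orientation]
    hand = max(0.0, min(1.0, base[7] + clipped[6]))
    return position + orientation + [hand]


base_command = [0.30, 0.00, 0.40, 1.0, 0.0, 0.0, 0.0, 0.0]
ppo_residual = [0.5, 0.0, -1.0, 0.0, 0.0, 1.0, 1.0]
command = stack_action(base_command, ppo_residual)
```

A zero residual returns the base command unchanged, so under this reading the fine-tuned policy starts from the behavior of the imitation model and only learns corrections to it.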

Table 3 Experimental platform software and hardware configuration

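| Item | Specification |
| --- | --- |
| CPU | Core i5-10200H |
| GPU | NVIDIA GTX 1650 Ti |
| GPU memory | 4 GB |
| RAM | 16 GB |
| Operating system | Ubuntu 22.04 |
| Python | 3.10.12 |
| PyTorch | 2.4.0 |
| CUDA | 12.4 |
| Simulation engine | Genesis 0.3.4 |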
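To compare a local setup against the software versions in Table 3, a small check such as the following could be used. It relies only on the standard library; the distribution names `torch` and `genesis-world` are assumptions about how the packages are published, and `check_environment` is a hypothetical helper.

```python
from importlib import metadata

# Versions listed in Table 3; the distribution names are assumptions.
EXPECTED_VERSIONS = {
    "torch": "2.4.0",
    "genesis-world": "0.3.4",
}


def check_environment(expected):
    """Return {package: (expected, installed or None)} without importing the packages."""
    report = {}
    for name, wanted in expected.items():
        try:
            installed = metadata.version(name)
        except metadata.PackageNotFoundError:
            installed = None
        report[name] = (wanted, installed)
    return report


for package, (wanted, installed) in check_environment(EXPECTED_VERSIONS).items():
    # Prefix match tolerates local build suffixes appended to the version string.
    matches = installed is not None and installed.startswith(wanted)
    print(f"{package}: expected {wanted}, found {installed} ({'ok' if matches else 'mismatch'})")
```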

Table 4 Simulated physical parameters

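| Parameter | Value |
| --- | --- |
| Simulation time step $dt$ | $50\,\mathrm{ms}$ |
| Gravitational acceleration $g$ | $(0,\;0,\;0)\,\mathrm{m/s^2}$ |
| Simulation substeps $n$ | $2$ |
| Tool mass $m$ | $0.8\,\mathrm{kg}$ |
| Constraint solver $S$ | Newton method |
| Collision and joint limits $F$ | Enabled |
| Workspace radius $R$ | $0.5\,\mathrm{m}$ |
| Z-axis height limit $Z$ | $[0.1,\;0.6]\,\mathrm{m}$ |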
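The parameters in Table 4 can be gathered into a single configuration object, as in the sketch below. The field names and the `in_workspace` check are illustrative: the table does not say how the workspace radius is measured, so the check assumes horizontal distance from a base axis at the origin.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class SimParams:
    """Physical parameters from Table 4 (field names are illustrative)."""
    dt: float = 0.05                   # simulation time step, s
    substeps: int = 2                  # sub-iterations per step
    gravity: tuple = (0.0, 0.0, 0.0)   # microgravity, m/s^2
    tool_mass: float = 0.8             # kg
    workspace_radius: float = 0.5      # m
    z_limits: tuple = (0.1, 0.6)       # m


def substep_dt(params):
    """Duration of one solver sub-iteration in seconds."""
    return params.dt / params.substeps


def in_workspace(position, params):
    """Assumed check: horizontal distance within the radius and z within the limits."""
    x, y, z = position
    z_min, z_max = params.z_limits
    return math.hypot(x, y) <= params.workspace_radius and z_min <= z <= z_max


params = SimParams()
print(substep_dt(params), in_workspace((0.3, 0.0, 0.4), params))
```

With a 50 ms step and two substeps, each sub-iteration covers 25 ms of simulated time.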

Table 5 Dexterous hand mapping error parameters

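| Error item | Value |
| --- | --- |
| Effective camera field of view $L$ | $0.3\,\mathrm{m}$ |
| Horizontal image resolution $W$ | $640$ |
| Keypoint detection error $p$ | $5$ pixels |
| Inverse kinematics joint-angle residual $\theta$ | $0.05\,\mathrm{rad}$ |
| Image acquisition latency $t_1$ | approx. $33\,\mathrm{ms}$ |
| Model inference latency $t_2$ | approx. $15\,\mathrm{ms}$ |
| Communication latency $t_3$ | approx. $5\,\mathrm{ms}$ |
| Total system latency $\Delta t$ | approx. $50\,\mathrm{ms}$ |
| Average hand speed $v_{hand}$ | $0.1\,\mathrm{m/s}$ |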
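The entries in Table 5 can be turned into position-error estimates with a few lines of arithmetic. The sketch below shows one plausible combination, assuming the field of view spans the full image width and that latency error equals hand speed times delay; the source table lists the parameters but not these formulas.

```python
FIELD_OF_VIEW_M = 0.3     # L
IMAGE_WIDTH_PX = 640      # W
KEYPOINT_ERROR_PX = 5     # p
LATENCIES_MS = {"acquisition": 33, "inference": 15, "communication": 5}
NOMINAL_DELAY_S = 0.05    # total system latency from the table
HAND_SPEED_M_S = 0.1      # v_hand

# Spatial size of one pixel if the field of view maps onto the image width.
metres_per_pixel = FIELD_OF_VIEW_M / IMAGE_WIDTH_PX
keypoint_error_m = KEYPOINT_ERROR_PX * metres_per_pixel

# Sum of the listed latency components, for comparison with the nominal total.
summed_delay_ms = sum(LATENCIES_MS.values())

# Distance the hand travels during the nominal delay.
lag_error_m = HAND_SPEED_M_S * NOMINAL_DELAY_S

print(f"pixel size: {metres_per_pixel * 1e3:.3f} mm")
print(f"keypoint error: {keypoint_error_m * 1e3:.2f} mm")
print(f"summed latency: {summed_delay_ms} ms")
print(f"lag error: {lag_error_m * 1e3:.1f} mm")
```

Under these assumptions the keypoint error is about 2.3 mm and the latency-induced error about 5 mm; the three latency components sum to 53 ms, consistent with the table's rounded total of about 50 ms.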

Table 6 Time performance indicators

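| Metric | Value | Calculation |
| --- | --- | --- |
| Simulation time per step $dt$ | $0.05\,\mathrm{s}$ | $\mathrm{dt}=0.05\,\mathrm{s}$, $\mathrm{substeps}=2$ |
| Execution time per action $T_{act}$ | $1.25\,\mathrm{s}$ | $25$ physics steps $\times\,0.05\,\mathrm{s}$ per step |
| Average episode duration $T_{epi}$ | $8.75\sim22.5\,\mathrm{s}$ | $7\sim18$ steps $\times\,1.25\,\mathrm{s}$ per step |
| Duration of one training round $T_{train}$ | approx. $37.5\,\mathrm{s}$ | $30$ steps $\times\,1.25\,\mathrm{s}$ |
| Total training duration $T$ | approx. $10\,\mathrm{h}$ | $1\,000\times37.5\,\mathrm{s}$ |
| Online inference latency $\Delta t$ | $<5\,\mathrm{ms}$ | MLP forward pass + PPO forward pass |
| Control frequency $f$ | $20\,\mathrm{Hz}$ | $1/0.05\,\mathrm{s}$; meets the real-time requirement |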
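The figures in Table 6 follow from the time step and step counts by direct multiplication. The sketch below reproduces that arithmetic; the constant names are illustrative, and the values are taken from the table.

```python
DT_S = 0.05                      # simulation time per step
PHYSICS_STEPS_PER_ACTION = 25    # physics steps executed for one action
EPISODE_STEPS = (7, 18)          # shortest and longest episodes, in actions
STEPS_PER_TRAINING_ROUND = 30    # actions per training round
TRAINING_ROUNDS = 1000

t_act = PHYSICS_STEPS_PER_ACTION * DT_S            # seconds per action
t_epi = tuple(n * t_act for n in EPISODE_STEPS)    # episode duration range
t_train = STEPS_PER_TRAINING_ROUND * t_act         # seconds per training round
total_hours = TRAINING_ROUNDS * t_train / 3600     # total training time
control_hz = 1 / DT_S                              # rate implied by the time step

print(f"T_act = {t_act:.2f} s")
print(f"T_epi = {t_epi[0]:.2f} to {t_epi[1]:.2f} s")
print(f"T_train = {t_train:.1f} s")
print(f"T = {total_hours:.1f} h")
print(f"f = {control_hz:.0f} Hz")
```

The total comes to 37,500 s, or about 10.4 h, which the table rounds to 10 h. The 20 Hz figure follows from the 0.05 s step, while each policy action spans 25 such steps.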

