Fractional-order Graph Neural Diffusion for Cross-frequency Alignment Contrastive Learning

-

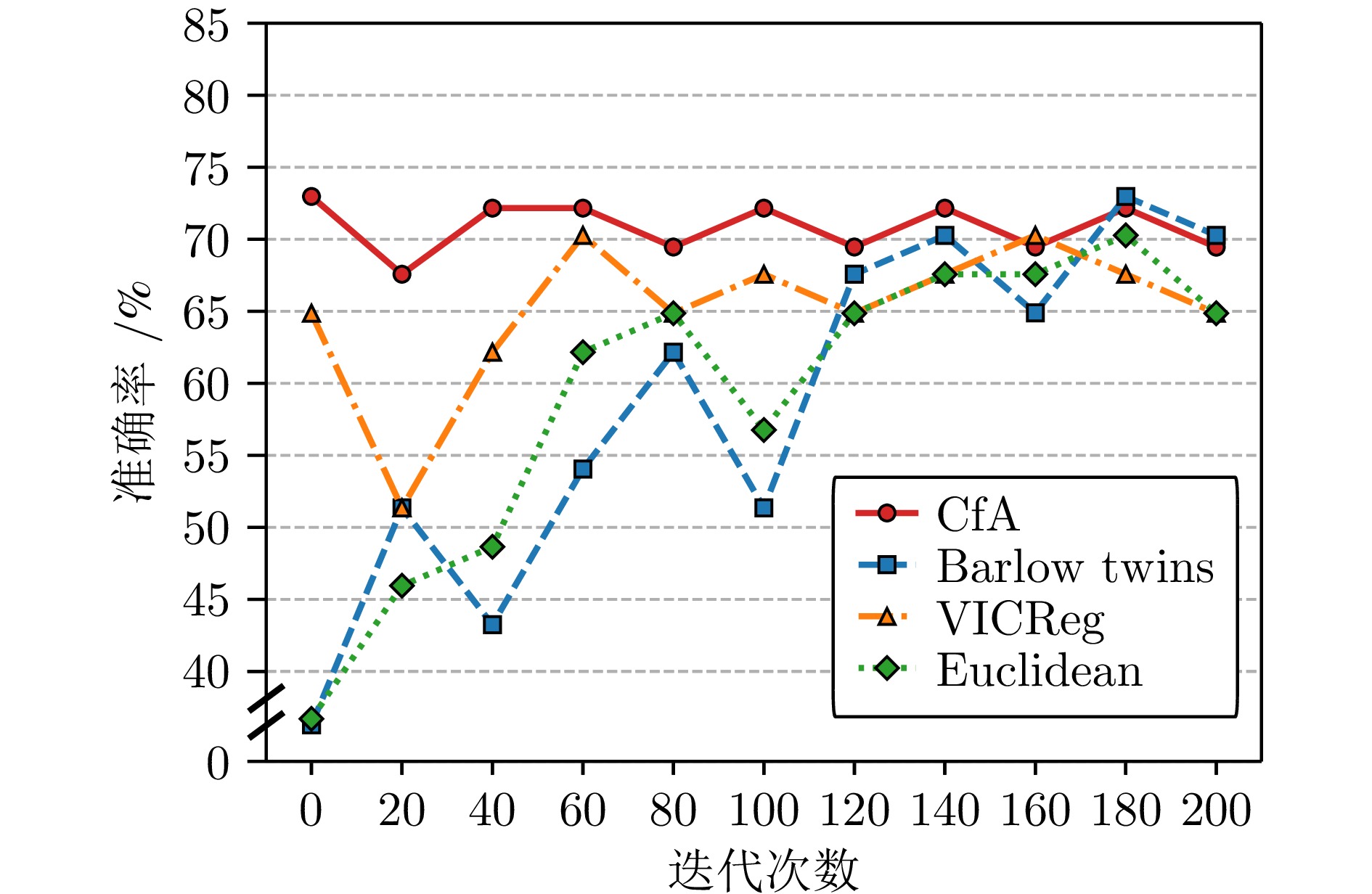

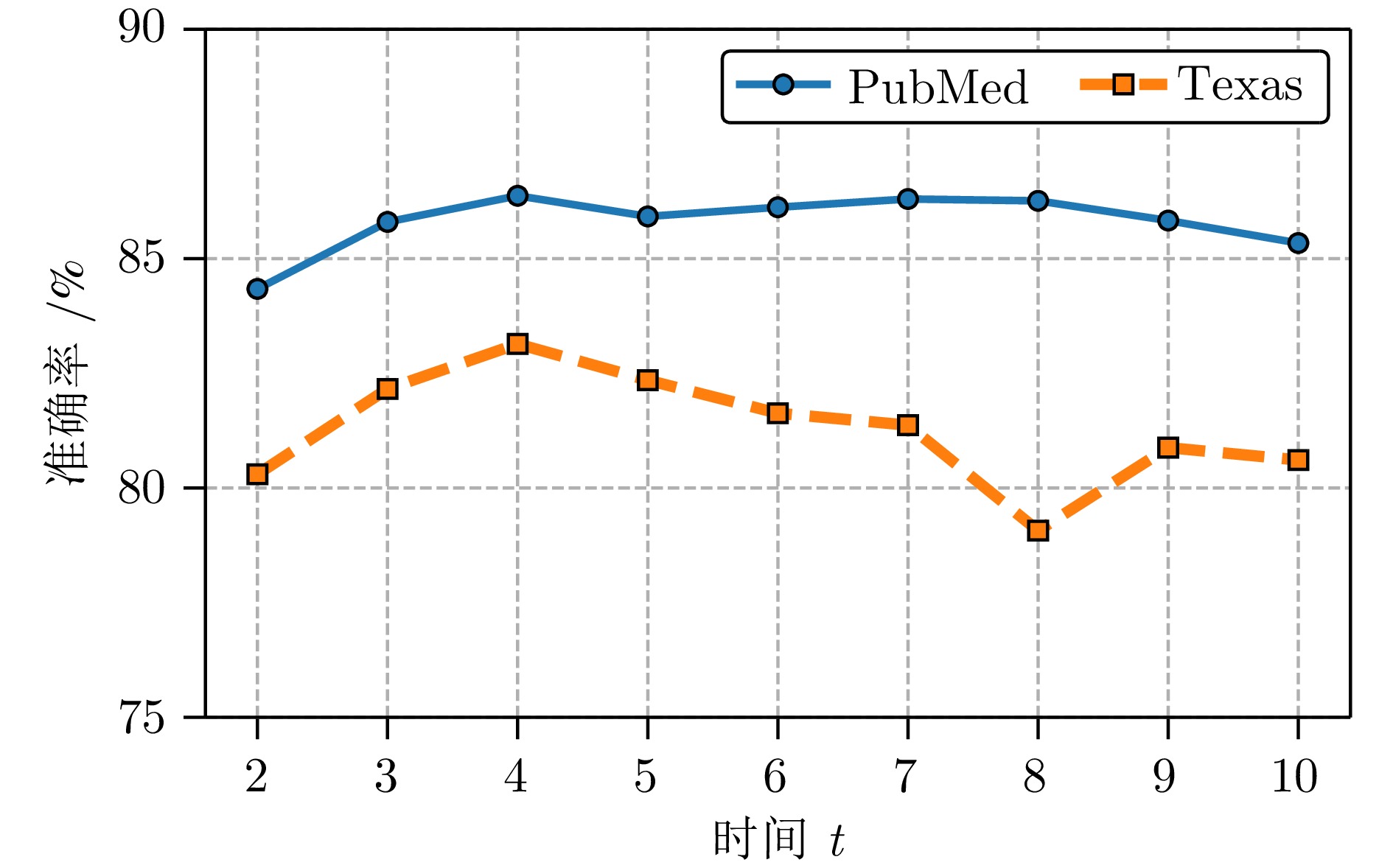

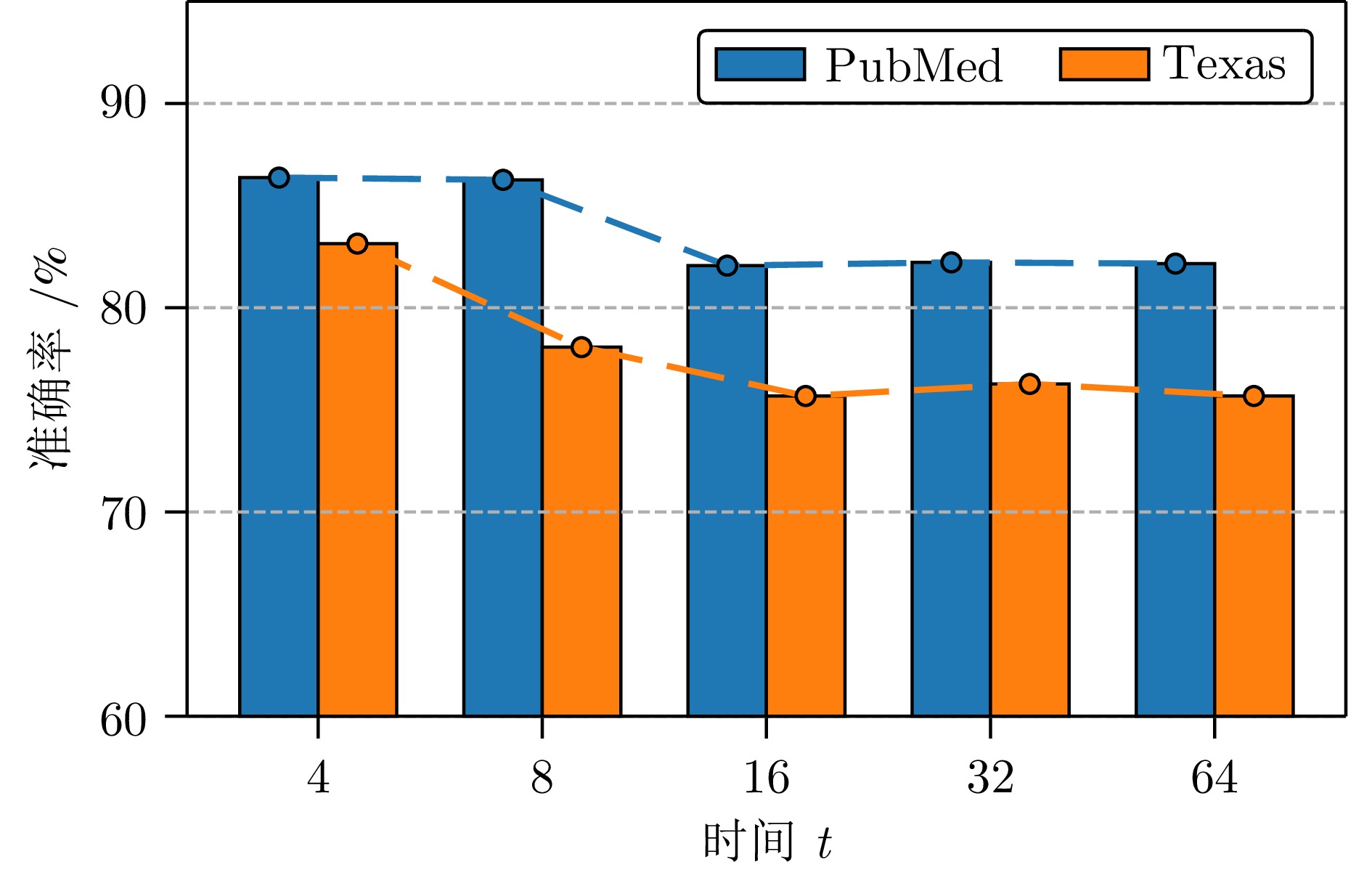

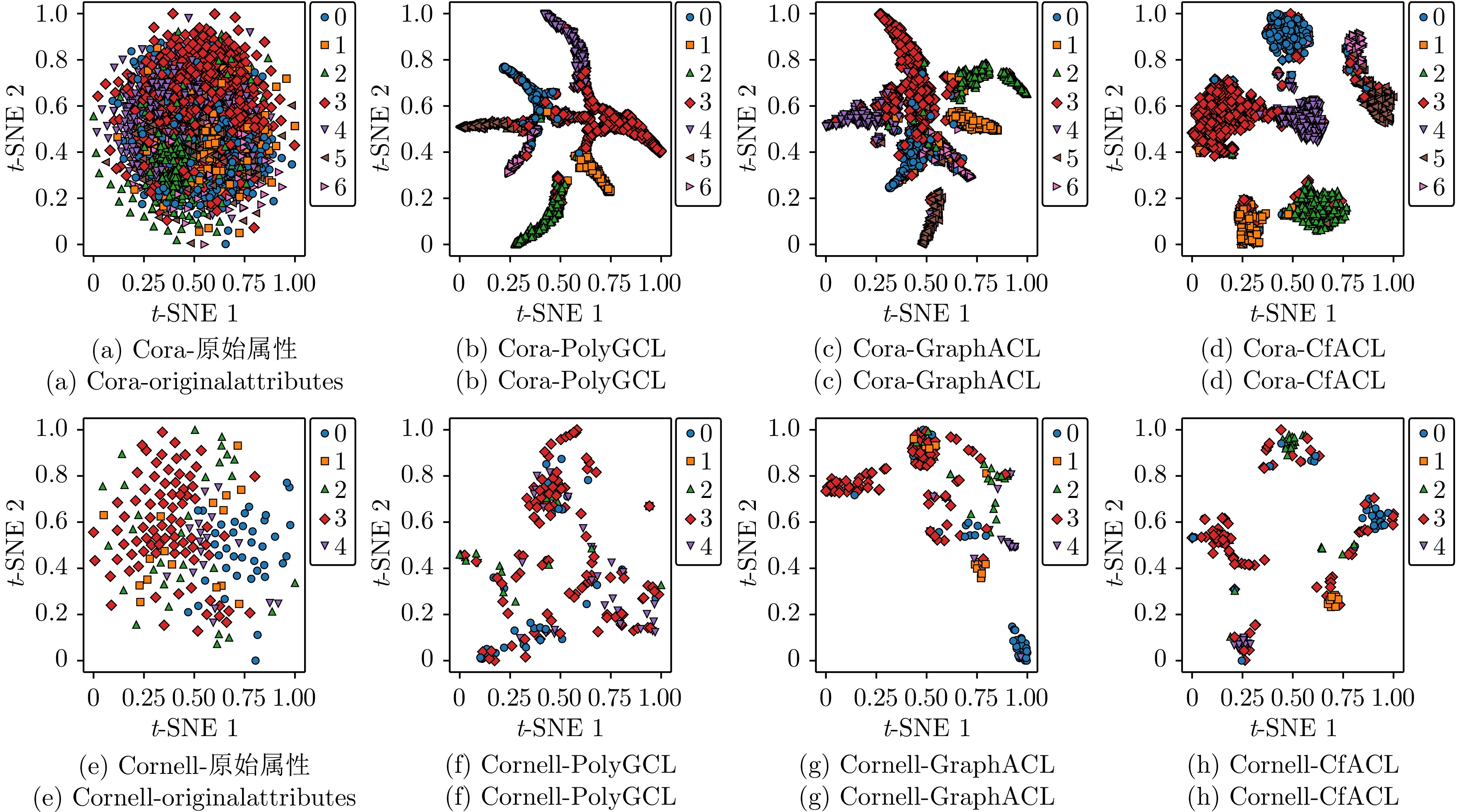

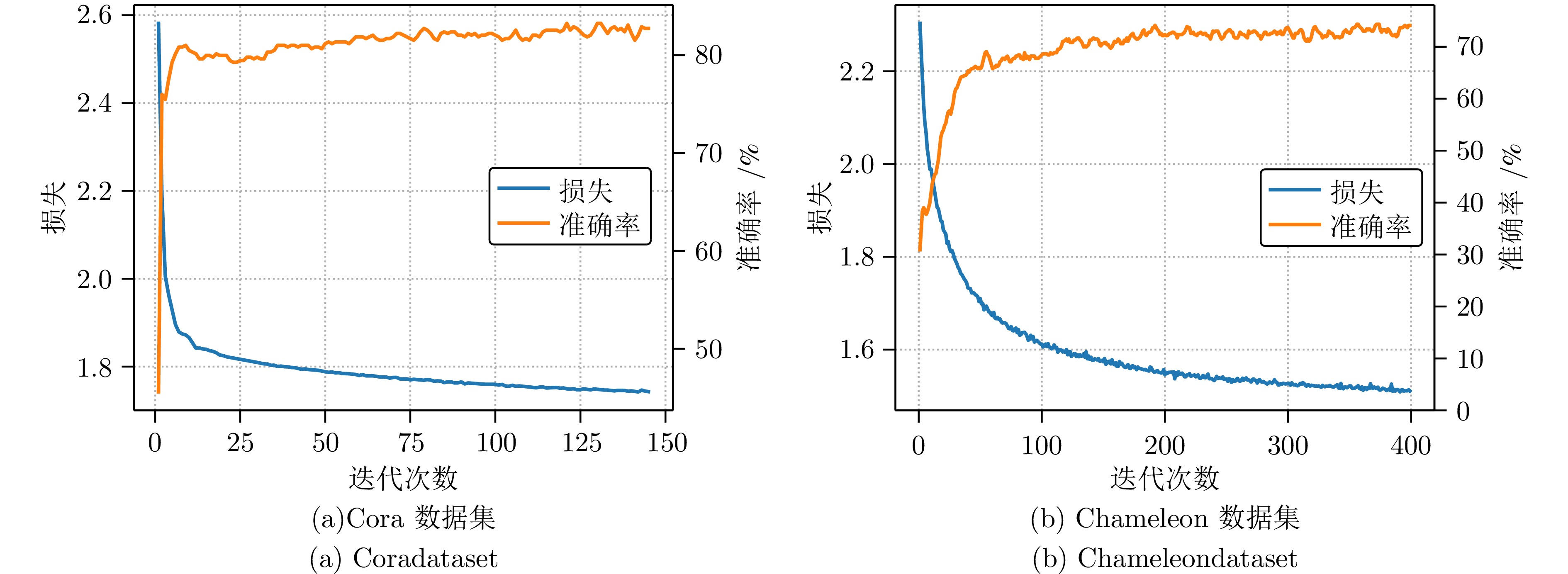

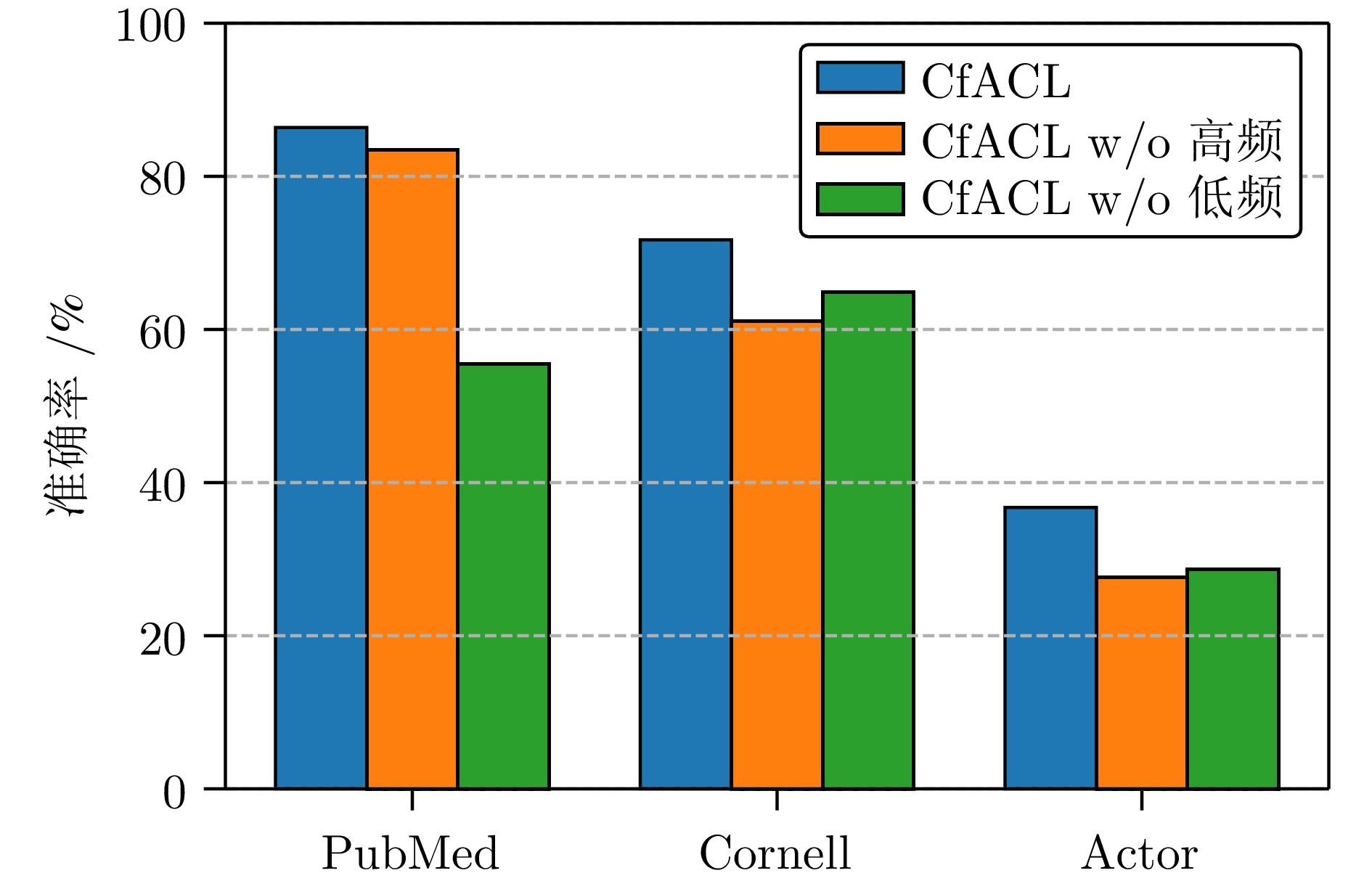

Abstract: Graph contrastive learning (GCL), a powerful self-supervised representation learning paradigm, can enhance representation discriminability and generalization in semi-supervised learning by effectively leveraging unlabeled data. However, existing GCL methods struggle to learn discriminative embeddings and to balance contrastive diversity against semantic invariance during graph data augmentation, which leads to the loss of critical information when constructing augmented views. To address these challenges, this paper proposes a novel Cross-frequency Alignment Contrastive Learning (CfACL) framework that uses Fractional-order Graph Neural Diffusion (FGND) for graph node representation learning. FGND leverages Chebyshev polynomial fractional-order differential equations to achieve long-range diffusion of multi-order neighborhood information in graph signals, alleviating over-smoothing and improving the discriminability of graph embeddings. Two distinct forms of FGND are then constructed with high-frequency and low-frequency filters, respectively, forming natural augmented contrastive views and avoiding the intrinsic structure collapse and semantic shift caused by random augmentation. CfACL transforms the high-frequency filtered components into the low-frequency domain and performs contrastive learning in a mirrored virtual spectral space, so that beneficial high-frequency details are absorbed in a globally consistent semantic space, yielding comprehensive representations for downstream tasks. Extensive node classification experiments on homophilic and heterophilic benchmark graph datasets demonstrate the effectiveness of the proposed method.
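To make the idea of low-frequency and high-frequency views concrete, the following is a minimal sketch of an ordinary Chebyshev polynomial graph filter. It is not the FGND model: it omits the fractional-order differential equation and any learnable parameters. The function names, the fixed first-order coefficients, and the assumption that the largest Laplacian eigenvalue is 2 are all illustrative choices, not taken from the paper.

```python
import math


def normalized_laplacian(adj):
    """Return L = I - D^{-1/2} A D^{-1/2} for a dense adjacency matrix."""
    n = len(adj)
    deg = [sum(row) for row in adj]
    inv_sqrt = [1.0 / math.sqrt(d) if d > 0 else 0.0 for d in deg]
    return [[(1.0 if i == j else 0.0) - inv_sqrt[i] * adj[i][j] * inv_sqrt[j]
             for j in range(n)] for i in range(n)]


def matvec(m, x):
    """Dense matrix-vector product."""
    return [sum(a * b for a, b in zip(row, x)) for row in m]


def chebyshev_filter(lap, x, coeffs):
    """Apply sum_k coeffs[k] * T_k(L - I) to the graph signal x.

    Assumption: lambda_max = 2, so the rescaled Laplacian is L - I.
    """
    n = len(x)
    shifted = [[lap[i][j] - (1.0 if i == j else 0.0) for j in range(n)]
               for i in range(n)]
    t_prev = list(x)                      # T_0 x = x
    out = [coeffs[0] * v for v in t_prev]
    if len(coeffs) == 1:
        return out
    t_curr = matvec(shifted, x)           # T_1 x = (L - I) x
    out = [o + coeffs[1] * v for o, v in zip(out, t_curr)]
    for c in coeffs[2:]:
        # Recurrence: T_k = 2 (L - I) T_{k-1} - T_{k-2}
        lt = matvec(shifted, t_curr)
        t_next = [2.0 * a - b for a, b in zip(lt, t_prev)]
        out = [o + c * v for o, v in zip(out, t_next)]
        t_prev, t_curr = t_curr, t_next
    return out


# Hypothetical toy graph: two nodes joined by one edge.
adj = [[0, 1], [1, 0]]
lap = normalized_laplacian(adj)
x = [1.0, 0.0]

# Response 1 - lambda/2 (low-pass) and lambda/2 (high-pass), written in the
# Chebyshev basis of the rescaled eigenvalue lambda - 1.
low = chebyshev_filter(lap, x, [0.5, -0.5])
high = chebyshev_filter(lap, x, [0.5, 0.5])
print(low)   # [0.5, 0.5]
print(high)  # [0.5, -0.5]
```

On this toy graph the low-pass output is the smooth (averaged) part of the signal and the high-pass output is the part that differs across the edge. The two add back up to the input, which is why such a pair can serve as two complementary views of the same graph.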


Table 1 Statistics of the datasets

| Dataset | Nodes | Edges | Features | Classes | Edge homophily ratio |
| --- | --- | --- | --- | --- | --- |
| Cora | 2708 | 5429 | 1433 | 7 | 0.81 |
| Citeseer | 3327 | 4732 | 3703 | 6 | 0.73 |
| PubMed | 19717 | 88651 | 500 | 3 | 0.80 |
| Wisconsin | 251 | 466 | 1703 | 5 | 0.17 |
| Texas | 183 | 309 | 1793 | 5 | 0.06 |
| Cornell | 183 | 295 | 1703 | 5 | 0.12 |
| Chameleon | 2277 | 36101 | 2325 | 5 | 0.23 |
| Squirrel | 5201 | 217073 | 2089 | 5 | 0.20 |
| Actor | 7600 | 33391 | 932 | 5 | 0.21 |
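The edge homophily ratio column in Table 1 can be illustrated with a short sketch. It assumes the commonly used definition, the fraction of edges whose two endpoints share a class label, which the page itself does not spell out. The function name and the toy graph are hypothetical.

```python
def edge_homophily(edges, labels):
    """Fraction of edges whose two endpoints carry the same class label."""
    if not edges:
        raise ValueError("graph has no edges")
    same = sum(1 for u, v in edges if labels[u] == labels[v])
    return same / len(edges)


# Hypothetical toy graph: a 4-cycle with two classes.
toy_edges = [(0, 1), (1, 2), (2, 3), (3, 0)]
toy_labels = [0, 0, 1, 1]
ratio = edge_homophily(toy_edges, toy_labels)
print(ratio)  # 0.5
```

Under this definition, values near 1 (Cora, Citeseer, PubMed) indicate homophilic graphs, while values near 0 (Texas, Cornell, Wisconsin) indicate heterophilic graphs.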

Table 2 Node classification results on homophilic and heterophilic datasets

| Method | Cora | Citeseer | PubMed | Wisconsin | Cornell | Texas | Chameleon | Squirrel | Actor |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| DGI | $82.30\pm0.60$ | $71.80\pm0.70$ | $76.80\pm0.60$ | $55.21\pm1.02$ | $45.33\pm6.11$ | $58.53\pm2.98$ | $60.27\pm0.70$ | $26.44\pm1.12$ | $28.30\pm0.76$ |
| GCA | $82.93\pm0.42$ | $72.19\pm0.31$ | $80.79\pm0.45$ | $59.55\pm0.81$ | $52.31\pm1.09$ | $52.92\pm0.46$ | $63.66\pm0.32$ | $48.09\pm0.21$ | $28.47\pm0.29$ |
| CCA-SSG | $84.00\pm0.40$ | $73.10\pm0.30$ | $81.00\pm0.40$ | $58.46\pm0.96$ | $52.17\pm1.04$ | $59.89\pm0.78$ | $62.41\pm0.22$ | $46.76\pm0.36$ | $27.82\pm0.60$ |
| BGRL | $82.70\pm0.60$ | $71.10\pm0.80$ | $79.60\pm0.50$ | $51.23\pm1.17$ | $50.33\pm2.29$ | $52.77\pm1.98$ | $64.86\pm0.63$ | $36.22\pm1.97$ | $28.80\pm0.54$ |
| SP-GCL | $83.16\pm0.13$ | $71.96\pm0.42$ | $79.16\pm0.84$ | $60.12\pm0.39$ | $52.29\pm1.21$ | $59.81\pm1.33$ | $65.28\pm0.53$ | $52.10\pm0.67$ | $28.94\pm0.69$ |
| GraphACL | $\underline{84.20\pm0.31}$ | $\underline{73.63\pm0.22}$ | $\underline{82.02\pm0.15}$ | $69.22\pm0.40$ | $\underline{59.33\pm1.48}$ | $71.08\pm0.34$ | $\underline{69.12\pm0.24}$ | $\underline{54.05\pm0.13}$ | $30.03\pm0.13$ |
| PolyGCL | $81.97\pm0.19$ | $71.97\pm0.29$ | $77.48\pm0.39$ | $\underline{76.08\pm3.33}$ | $43.78\pm3.51$ | $\underline{72.16\pm3.51}$ | $46.84\pm1.53$ | $34.25\pm0.66$ | $\underline{34.37\pm0.69}$ |
| CfACL | $\mathbf{85.17}\pm\mathbf{1.51}$ | $\mathbf{76.67}\pm\mathbf{1.38}$ | $\mathbf{86.37}\pm\mathbf{1.26}$ | $\mathbf{80.34}\pm\mathbf{0.47}$ | $\mathbf{71.70}\pm\mathbf{7.43}$ | $\mathbf{83.14}\pm\mathbf{5.34}$ | $\mathbf{72.29}\pm\mathbf{1.50}$ | $\mathbf{62.54}\pm\mathbf{1.14}$ | $\mathbf{36.76}\pm\mathbf{1.34}$ |

Table 3 Training cost (in seconds) of CfACL versus the representative comparison methods PolyGCL and GraphACL on different datasets

| Method | Cora | PubMed | Chameleon | Cornell | Texas | Actor |
| --- | --- | --- | --- | --- | --- | --- |
| PolyGCL | 23.42 | 4392.96 | 19.62 | 32.29 | 24.03 | 54.71 |
| GraphACL | 23.08 | 340.35 | 212.19 | 28.10 | 27.64 | 3888.55 |
| CfACL | 19.58 | 108.86 | 19.58 | 22.79 | 29.96 | 32.99 |

