
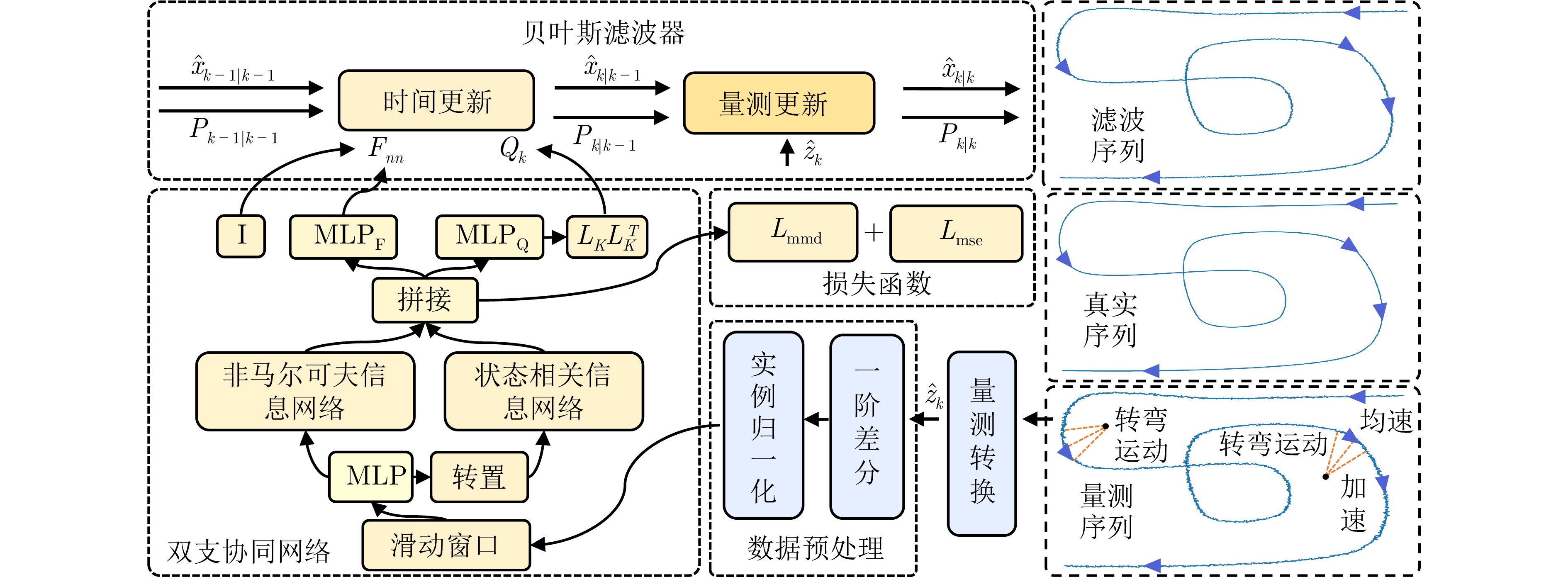

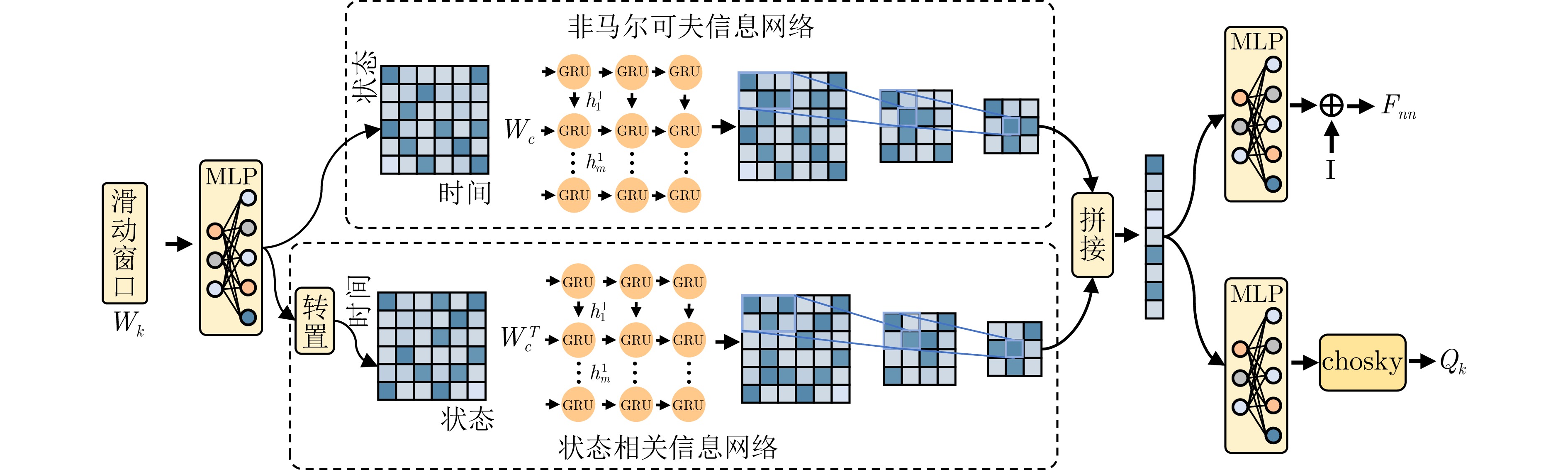

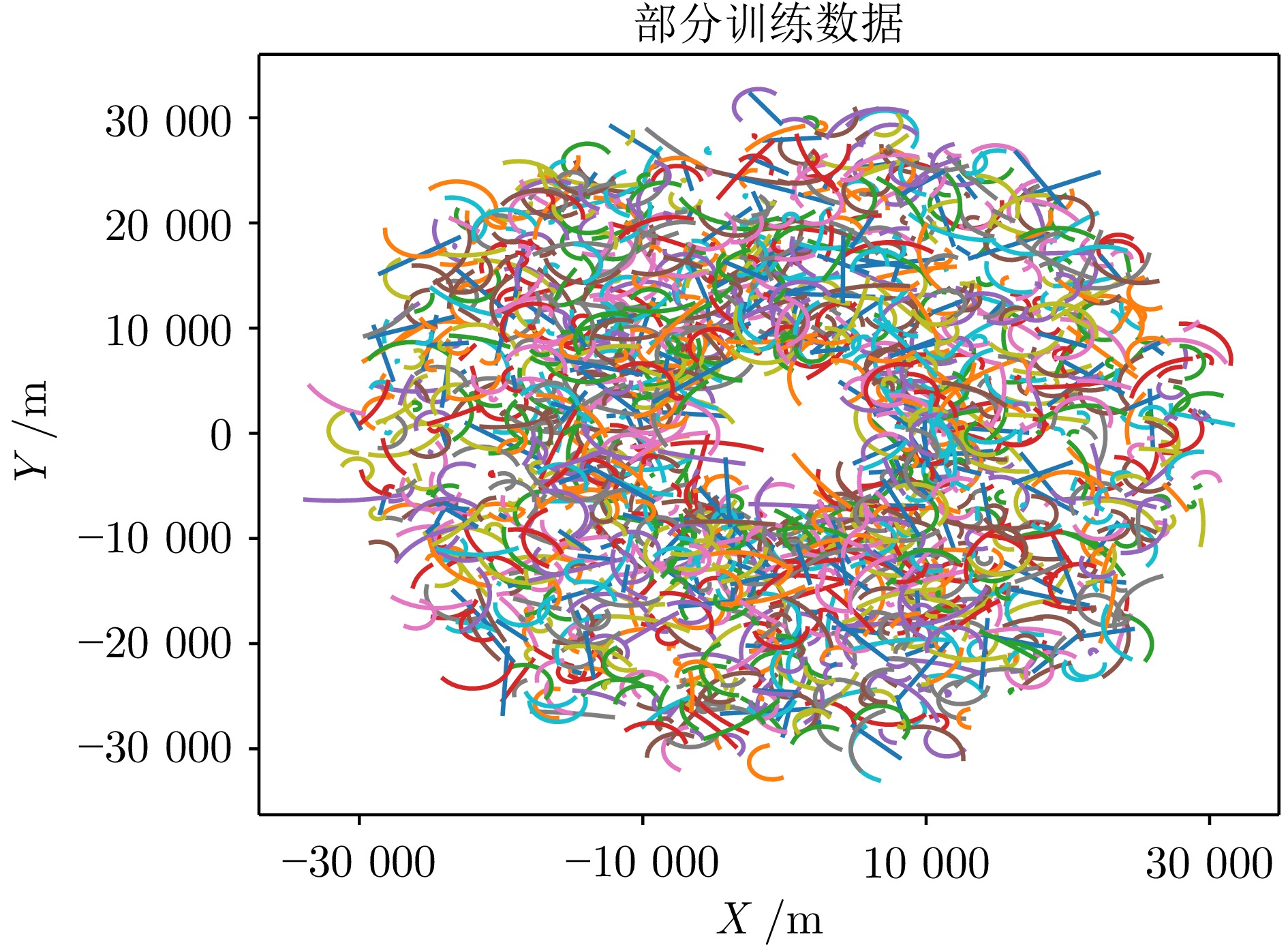

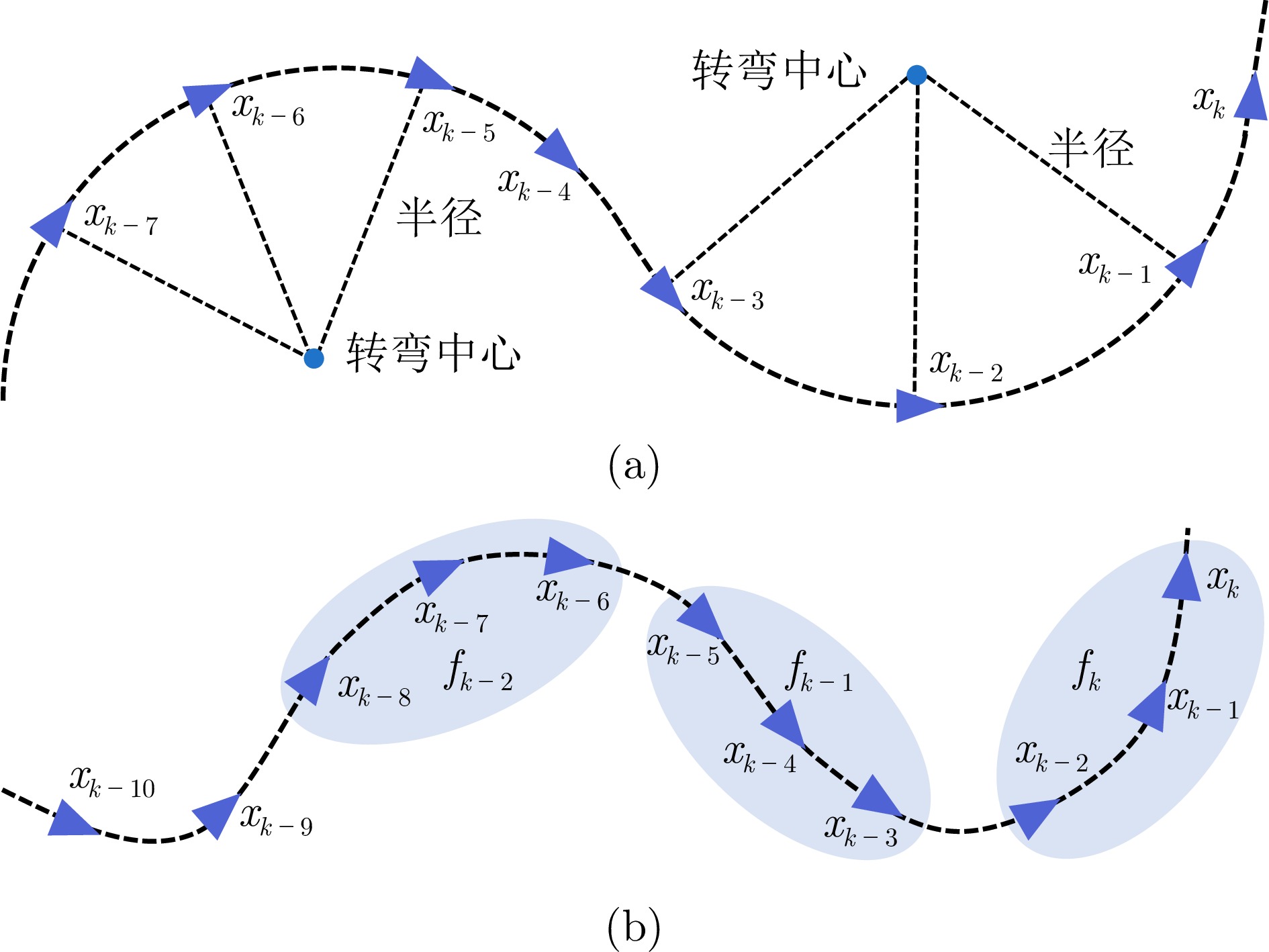

Abstract: To address the degradation in target tracking performance caused by insufficient extraction of temporal-state correlations, a target tracking method based on a Dual-Branch Collaborative Filtering Network (DBCF-Net) is proposed. First, to dynamically adjust the motion model and the process noise parameters, a non-Markov information network and a state-correlation information network are designed to learn, respectively, the temporal dependencies in the evolution of the moving target's state and the local correlations among its state variables. Second, a collaborative network weight update mechanism based on the maximum mean discrepancy (MMD) is designed. It differentiates the output features of the two branch networks to strengthen their learning complementarity, thereby improving the adaptability of DBCF-Net to unknown motion patterns. Furthermore, to combine the strengths of Bayesian filtering and neural networks, the unbiased measurement conversion is introduced into DBCF-Net to enhance the robustness of target tracking. Finally, target tracking experiments validate the effectiveness of DBCF-Net.

Key words:

- State Estimation

- Kalman Filter

- Target Tracking

- Filtering Network


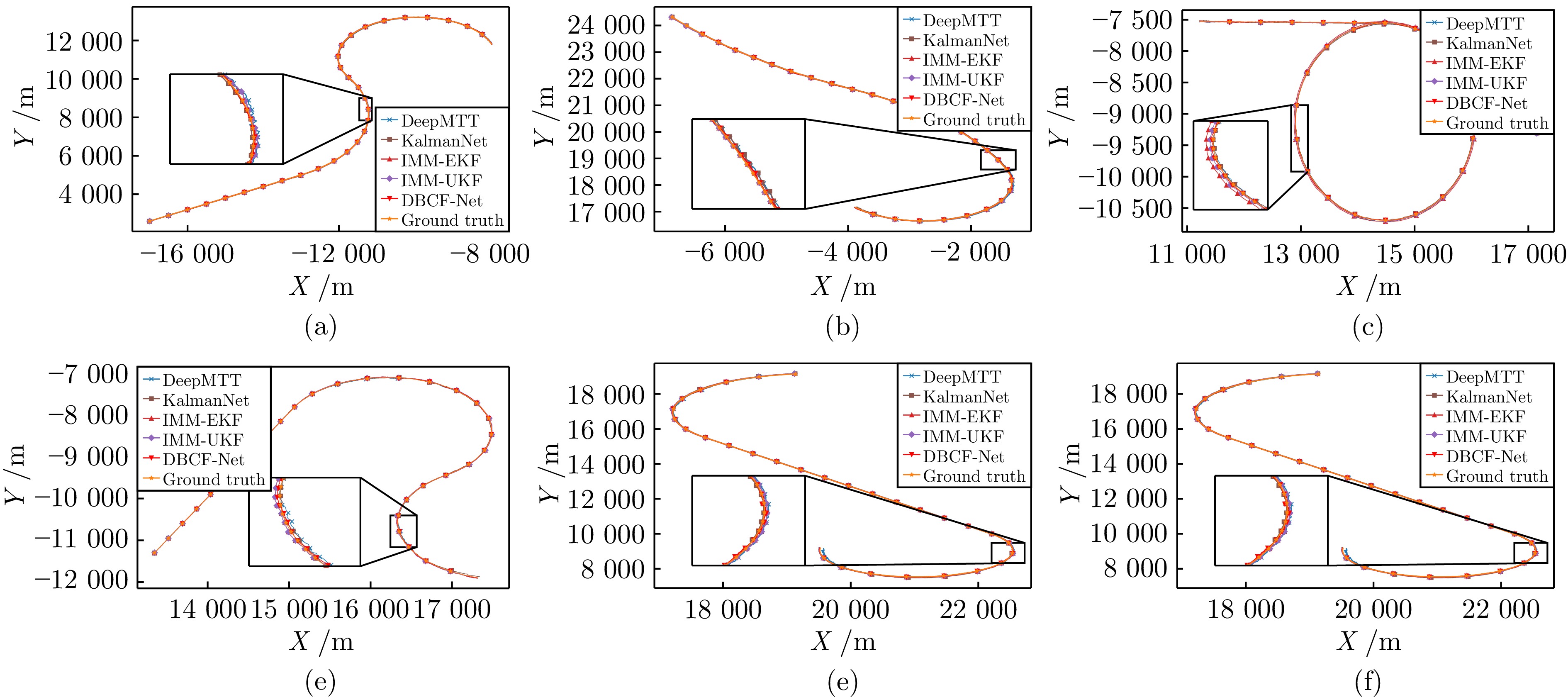

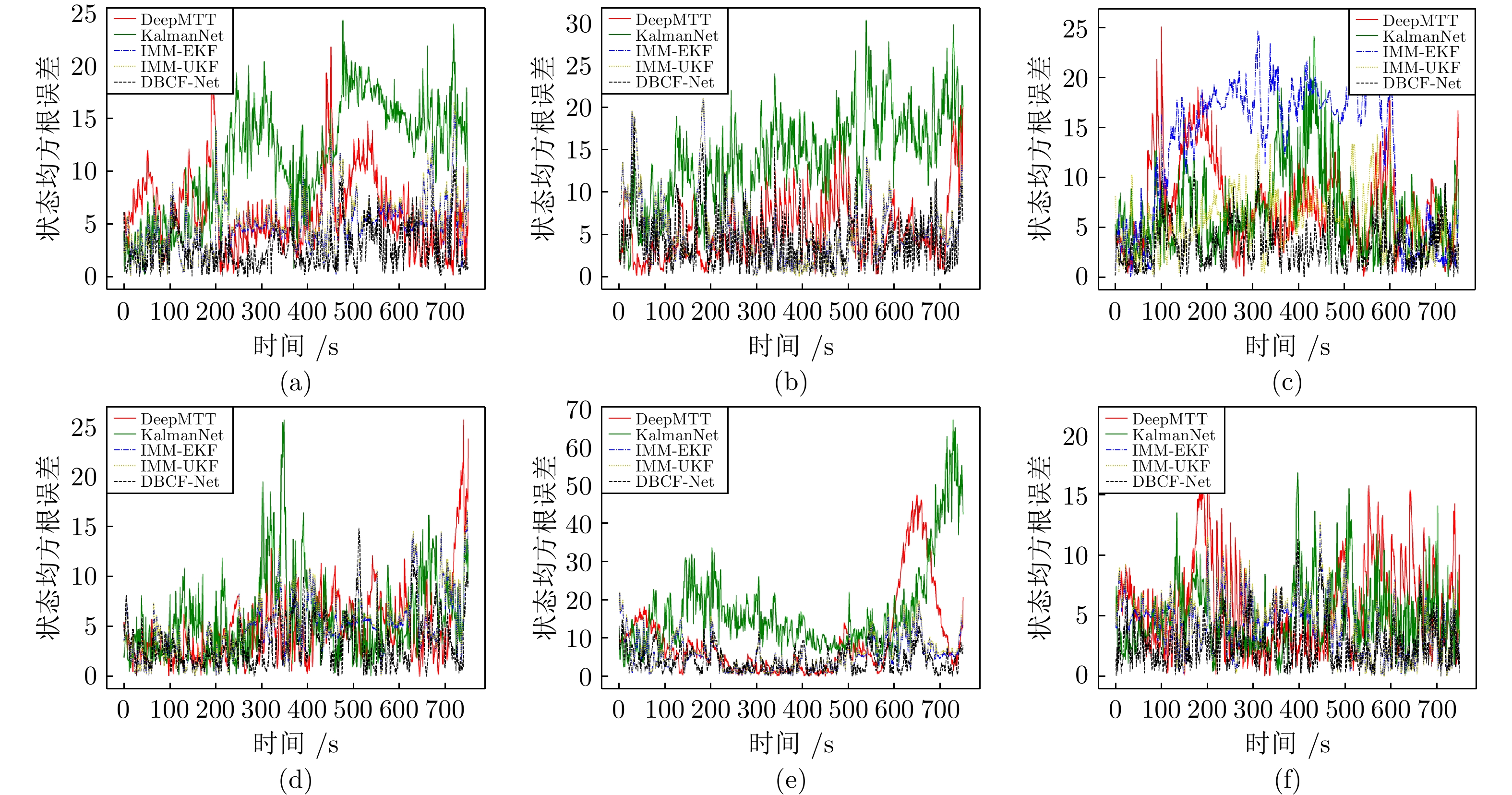

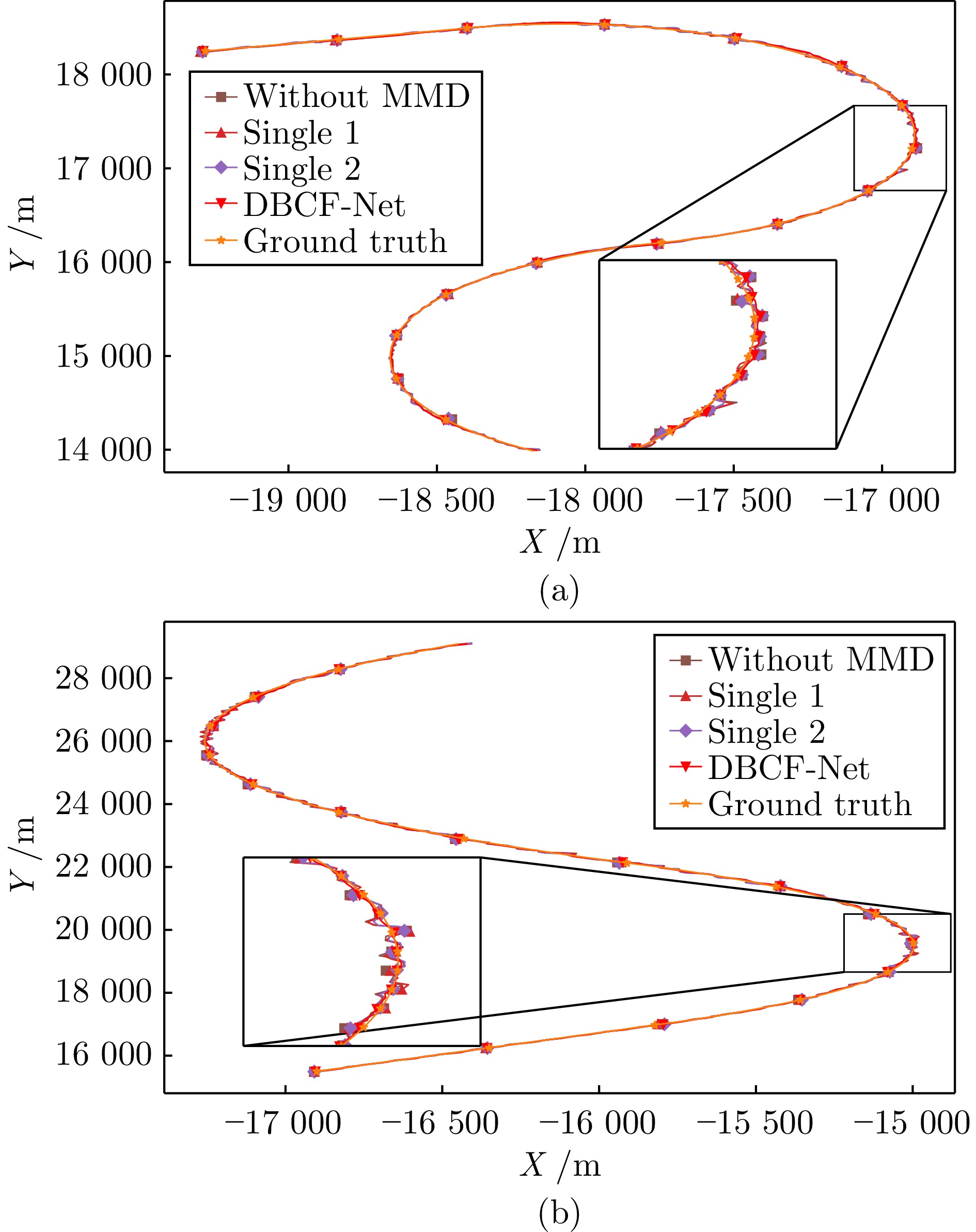

Fig. 5 Tracking results for the six trajectories. Each enlarged inset shows a trajectory segment of 30 sampling points. In the main plot, markers are placed every 2.5 seconds (25 sampling points); in the insets, markers are placed every 0.5 seconds.

Table 1 Motion parameters of the test trajectories

| Trajectory | Initial state | Segment 1 | Segment 2 | Segment 3 |
| --- | --- | --- | --- | --- |
| 1 | $[-17000.0\;\mathrm{m},\;2600.0\;\mathrm{m},\;200.0\;\mathrm{m/s},\;120.0\;\mathrm{m/s}]$ | $20\;\text{s},\;\text{CV}$ | $25\;\text{s},\;\text{CT},\;\omega=3.6^\circ/\text{s}$ | $30\;\text{s},\;\text{CT},\;\omega=-6.4^\circ/\text{s}$ |
| 2 | $[-6860.0\;\mathrm{m},\;24320.0\;\mathrm{m},\;90.0\;\mathrm{m/s},\;-130.0\;\mathrm{m/s}]$ | $25\;\text{s},\;\text{CT},\;\omega=1.0^\circ/\text{s}$ | $25\;\text{s},\;\text{CT},\;\omega=-1.6^\circ/\text{s}$ | $25\;\text{s},\;\text{CT},\;\omega=-6.4^\circ/\text{s}$ |
| 3 | $[17155.0\;\mathrm{m},\;-9300.0\;\mathrm{m},\;-169.0\;\mathrm{m/s},\;140.0\;\mathrm{m/s}]$ | $10\;\text{s},\;\text{CV}$ | $50\;\text{s},\;\text{CT},\;\omega=8.00^\circ/\text{s}$ | $15\;\text{s},\;\text{CV}$ |
| 4 | $[13345.0\;\mathrm{m},\;-11300.0\;\mathrm{m},\;69.0\;\mathrm{m/s},\;140.0\;\mathrm{m/s}]$ | $25\;\text{s},\;\text{CV}$ | $30\;\text{s},\;\text{CT},\;\omega=-7.0^\circ/\text{s}$ | $20\;\text{s},\;\text{CT},\;\omega=6.48^\circ/\text{s}$ |
| 5 | $[19134.0\;\mathrm{m},\;19144.0\;\mathrm{m},\;-235.0\;\mathrm{m/s},\;-33.0\;\mathrm{m/s}]$ | $20\;\text{s},\;\text{CT},\;\omega=6.08^\circ/\text{s}$ | $30\;\text{s},\;\text{CV}$ | $25\;\text{s},\;\text{CT},\;\omega=-9.01^\circ/\text{s}$ |
| 6 | $[9360.0\;\mathrm{m},\;-8740.0\;\mathrm{m},\;-140.0\;\mathrm{m/s},\;-1.0\;\mathrm{m/s}]$ | $20\;\text{s},\;\text{CT},\;\omega=9.08^\circ/\text{s}$ | $30\;\text{s},\;\text{CT},\;\omega=-8.1^\circ/\text{s}$ | $25\;\text{s},\;\text{CT},\;\omega=1.08^\circ/\text{s}$ |
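To make the segment notation in Table 1 concrete, the following sketch generates a noise-free ground-truth path for trajectory 1 from standard constant-velocity (CV) and coordinated-turn (CT) transition matrices. Several points are assumptions rather than details from the paper: the state ordering `[x, y, vx, vy]` (inferred from the units in the table), the sampling interval of 0.1 s (inferred from the Fig. 5 caption, where 2.5 seconds correspond to 25 sampling points), the sign convention that positive `omega` means a counterclockwise turn, and the omission of process noise. The function names are hypothetical.

```python
import numpy as np

DT = 0.1  # assumed sampling interval in seconds

def transition(omega_deg, dt=DT):
    """Transition matrix for state [x, y, vx, vy]; omega_deg = 0 gives CV."""
    w = np.deg2rad(omega_deg)
    if abs(w) < 1e-12:
        a, b = dt, 0.0                      # limits of the CT terms as w -> 0
    else:
        a = np.sin(w * dt) / w
        b = (1.0 - np.cos(w * dt)) / w
    s, c = np.sin(w * dt), np.cos(w * dt)
    return np.array([[1.0, 0.0, a, -b],
                     [0.0, 1.0, b, a],
                     [0.0, 0.0, c, -s],
                     [0.0, 0.0, s, c]])

def simulate(x0, segments, dt=DT):
    """segments: list of (duration in s, turn rate in deg/s); returns (steps + 1, 4)."""
    states = [np.asarray(x0, dtype=float)]
    for duration, omega_deg in segments:
        f = transition(omega_deg, dt)
        for _ in range(int(round(duration / dt))):
            states.append(f @ states[-1])
    return np.stack(states)

# Trajectory 1 from Table 1: 20 s CV, 25 s CT at 3.6 deg/s, 30 s CT at -6.4 deg/s.
traj1 = simulate([-17000.0, 2600.0, 200.0, 120.0],
                 [(20, 0.0), (25, 3.6), (30, -6.4)])
speed = np.hypot(traj1[:, 2], traj1[:, 3])  # constant for CV and CT motion
```

Both models preserve the target speed, so `speed` stays at its initial value along the whole path; only the heading changes during the CT segments.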

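Table 2 Average root mean square error (ARMSE) of the state estimates for different methods on the test trajectories

| Method | State | Trajectory 1 | Trajectory 2 | Trajectory 3 | Trajectory 4 | Trajectory 5 | Trajectory 6 |
| --- | --- | --- | --- | --- | --- | --- | --- |
| IMM-EKF | Position (m) | 4.872 | 5.208 | 12.942 | 4.942 | 5.969 | 4.236 |
| IMM-EKF | Velocity (m/s) | 9.606 | 6.569 | 21.949 | 8.336 | 11.263 | 8.919 |
| IMM-UKF | Position (m) | 5.089 | 5.267 | 5.564 | 5.082 | 6.310 | 4.437 |
| IMM-UKF | Velocity (m/s) | 10.149 | 6.689 | 11.320 | 8.707 | 12.177 | 9.404 |
| DeepMTT | Position (m) | 6.061 | 5.576 | 7.240 | 4.889 | 9.473 | 5.797 |
| DeepMTT | Velocity (m/s) | 3.676 | 4.493 | 6.904 | 4.400 | 7.595 | 6.045 |
| KalmanNet | Position (m) | 11.302 | 14.067 | 6.641 | 5.863 | 17.151 | 4.977 |
| KalmanNet | Velocity (m/s) | 12.279 | 13.168 | 15.105 | 13.652 | 14.708 | 9.856 |
| DBCF-Net | Position (m) | 2.678 | 4.400 | 3.339 | 3.365 | 4.364 | 2.682 |
| DBCF-Net | Velocity (m/s) | 3.806 | 4.430 | 4.900 | 3.956 | 5.103 | 3.938 |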
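The ARMSE definition is not reproduced in this section, so the sketch below shows one common way such a metric is computed: the RMSE of the Euclidean error at each time step across Monte Carlo runs, averaged over all time steps. The array shapes, the order of averaging, and the function name `armse` are assumptions and may differ from the paper's definition.

```python
import numpy as np

def armse(estimates, truth):
    """Average RMSE over time (assumed definition, not taken from the paper).

    estimates: array of shape (runs, steps, dims), e.g. dims = 2 for position
    truth:     array of shape (steps, dims)
    """
    sq_err = np.sum((estimates - truth[None, :, :]) ** 2, axis=-1)  # (runs, steps)
    rmse_per_step = np.sqrt(sq_err.mean(axis=0))                    # RMSE over runs
    return float(rmse_per_step.mean())                              # average over time

# Toy check: every estimate is offset from the truth by (3, 4), so each error is 5.
truth = np.zeros((5, 2))
estimates = np.tile(np.array([3.0, 4.0]), (10, 5, 1))
toy_value = armse(estimates, truth)
```

Applying the same function separately to the position and velocity components would give one pair of values per method and trajectory, matching the layout of the table.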

Table 3 Motion parameters of the test trajectories in the ablation experiment

| Trajectory | Initial state | Segment 1 | Segment 2 | Segment 3 |
| --- | --- | --- | --- | --- |
| 1 | $[-19280.0\;\mathrm{m},\;18250.0\;\mathrm{m},\;180.0\;\mathrm{m/s},\;50.0\;\mathrm{m/s}]$ | $5\;\text{s},\;\text{CV}$ | $20\;\text{s},\;\text{CT},\;\omega=-9.0^\circ/\text{s}$ | $15\;\text{s},\;\text{CT},\;\omega=8.4^\circ/\text{s}$ |
| 2 | $[-16900.0\;\mathrm{m},\;15500.0\;\mathrm{m},\;220.0\;\mathrm{m/s},\;300.0\;\mathrm{m/s}]$ | $5\;\text{s},\;\text{CV}$ | $15\;\text{s},\;\text{CT},\;\omega=5.0^\circ/\text{s}$ | $20\;\text{s},\;\text{CT},\;\omega=-3.4^\circ/\text{s}$ |

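Table 4 ARMSE on the test trajectories in the ablation experiment

| Method | State | Trajectory 1 | Trajectory 2 |
| --- | --- | --- | --- |
| DBCF-Net | Position (m) | 5.106 | 5.317 |
| DBCF-Net | Velocity (m/s) | 6.161 | 7.265 |
| Single1 | Position (m) | 6.758 | 8.169 |
| Single1 | Velocity (m/s) | 8.908 | 9.801 |
| Single2 | Position (m) | 6.920 | 8.409 |
| Single2 | Velocity (m/s) | 10.813 | 7.631 |
| No MMD | Position (m) | 7.233 | 9.209 |
| No MMD | Velocity (m/s) | 9.666 | 9.189 |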

