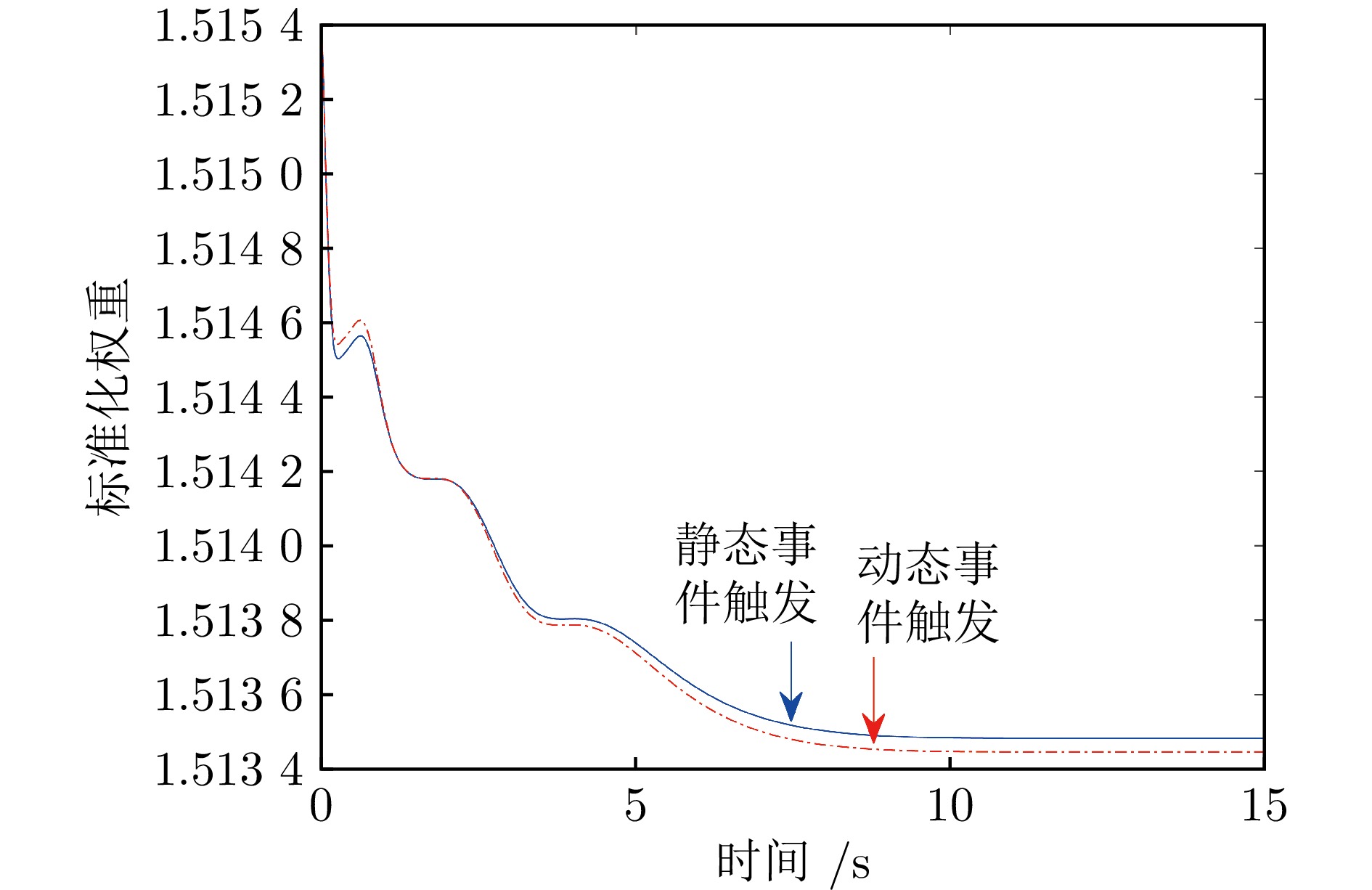

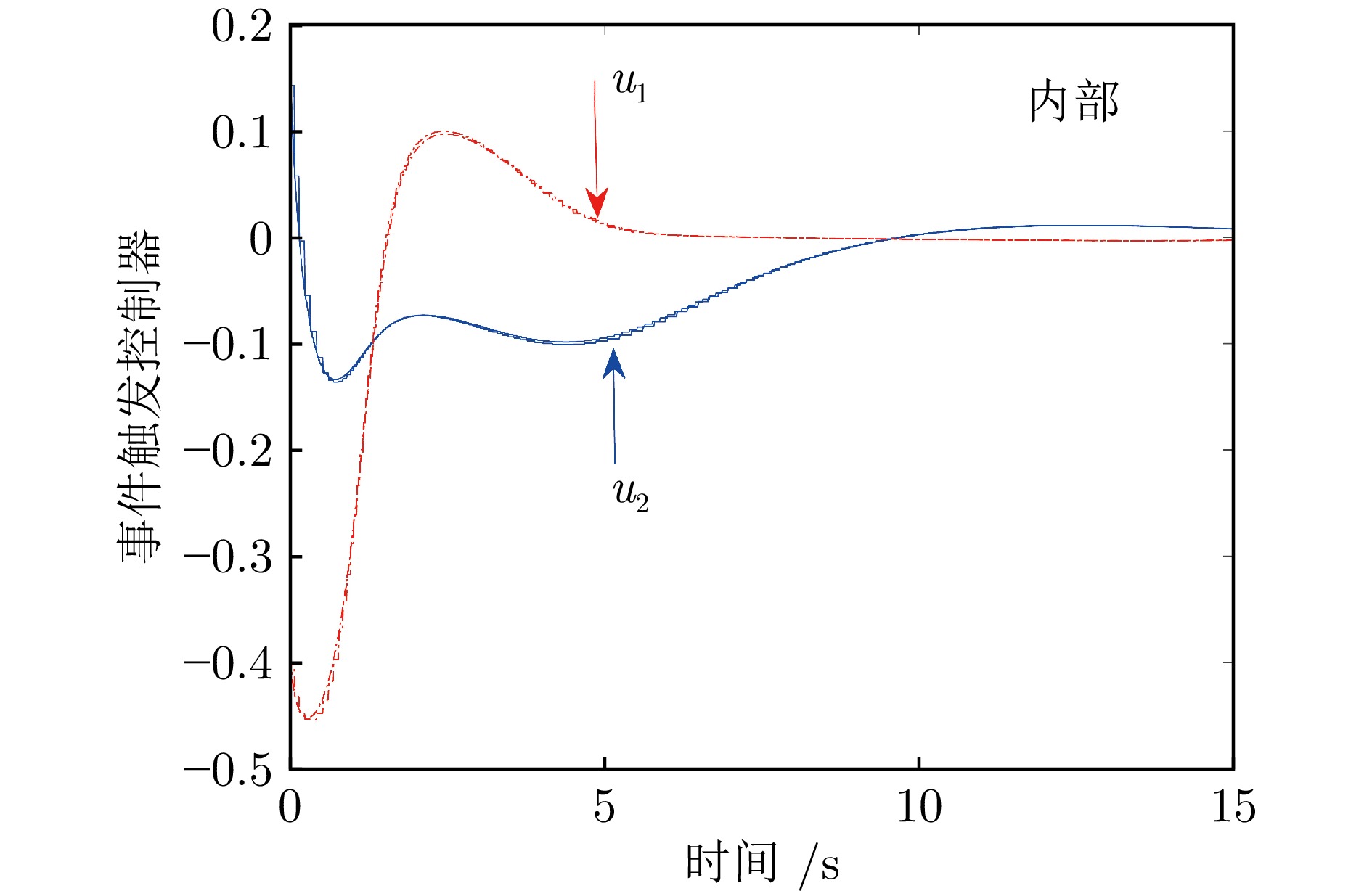

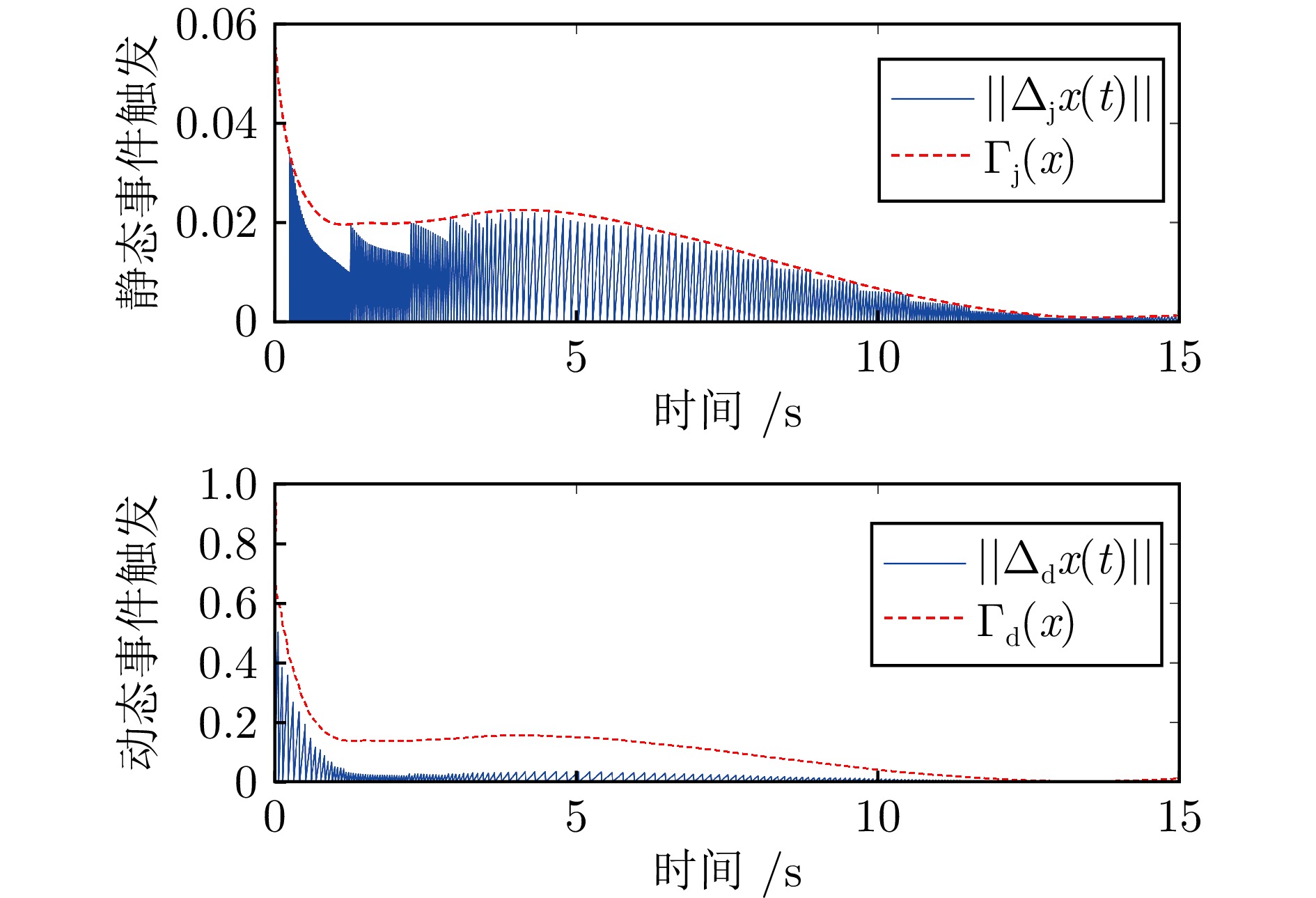

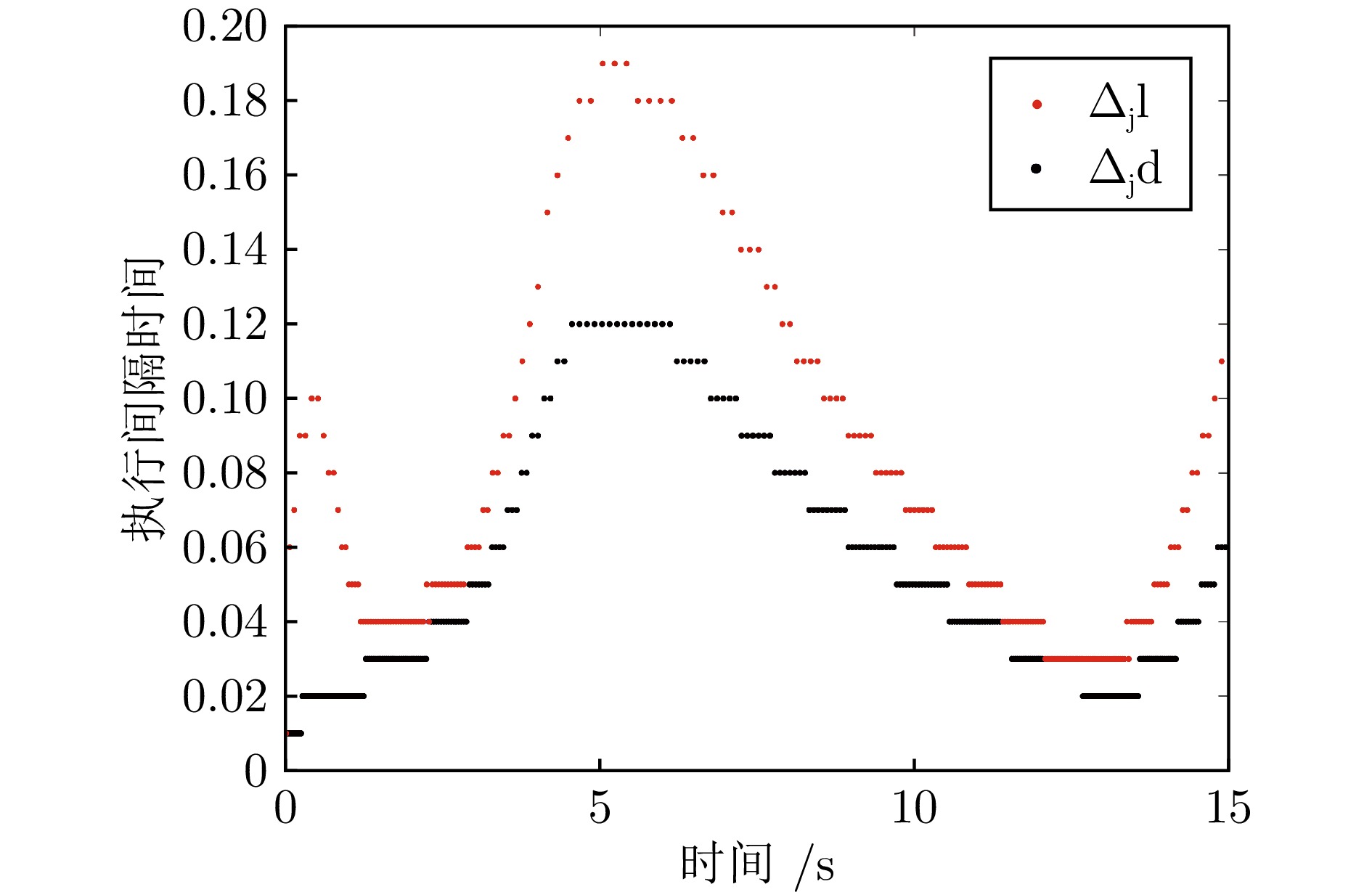

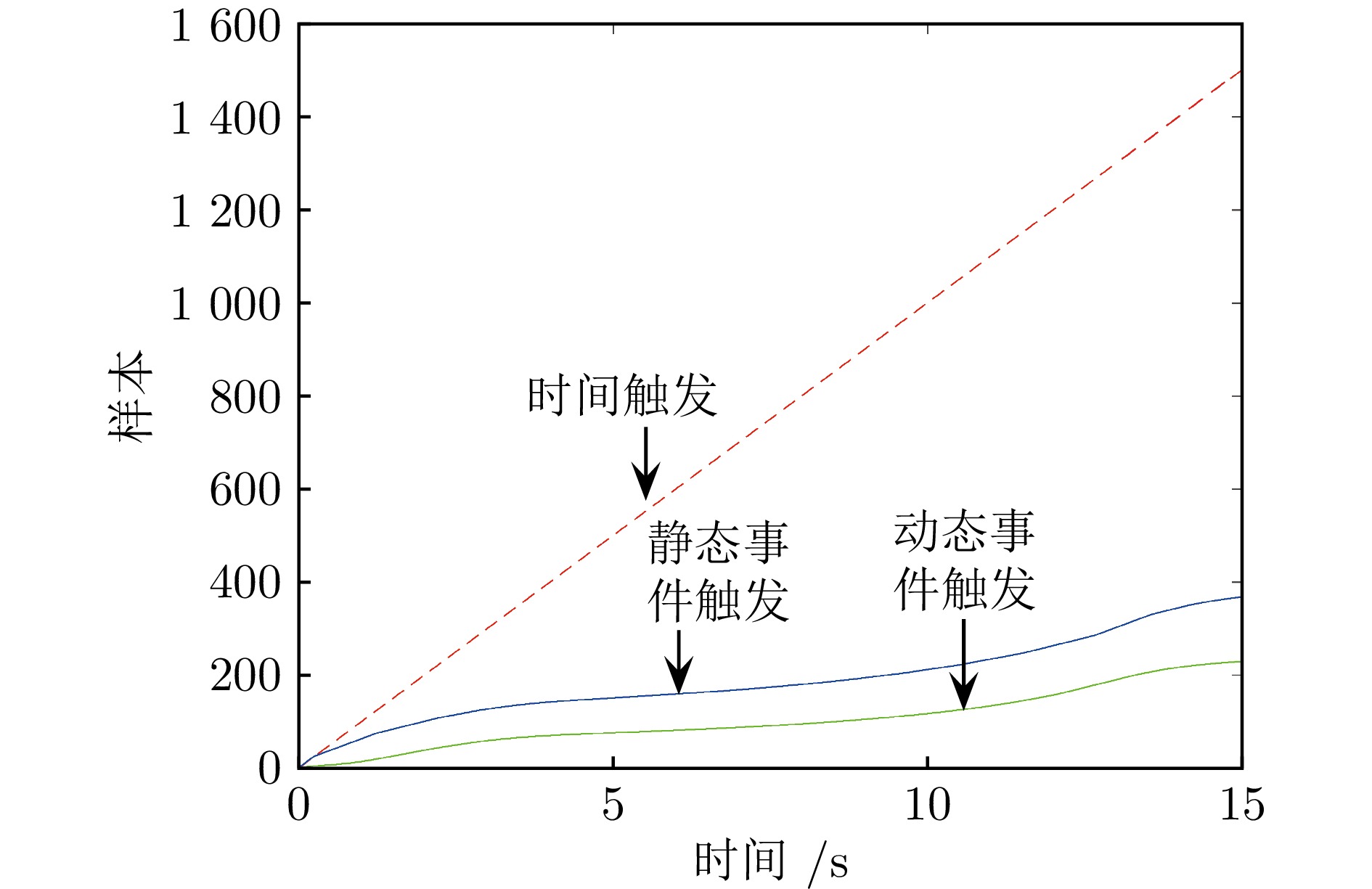

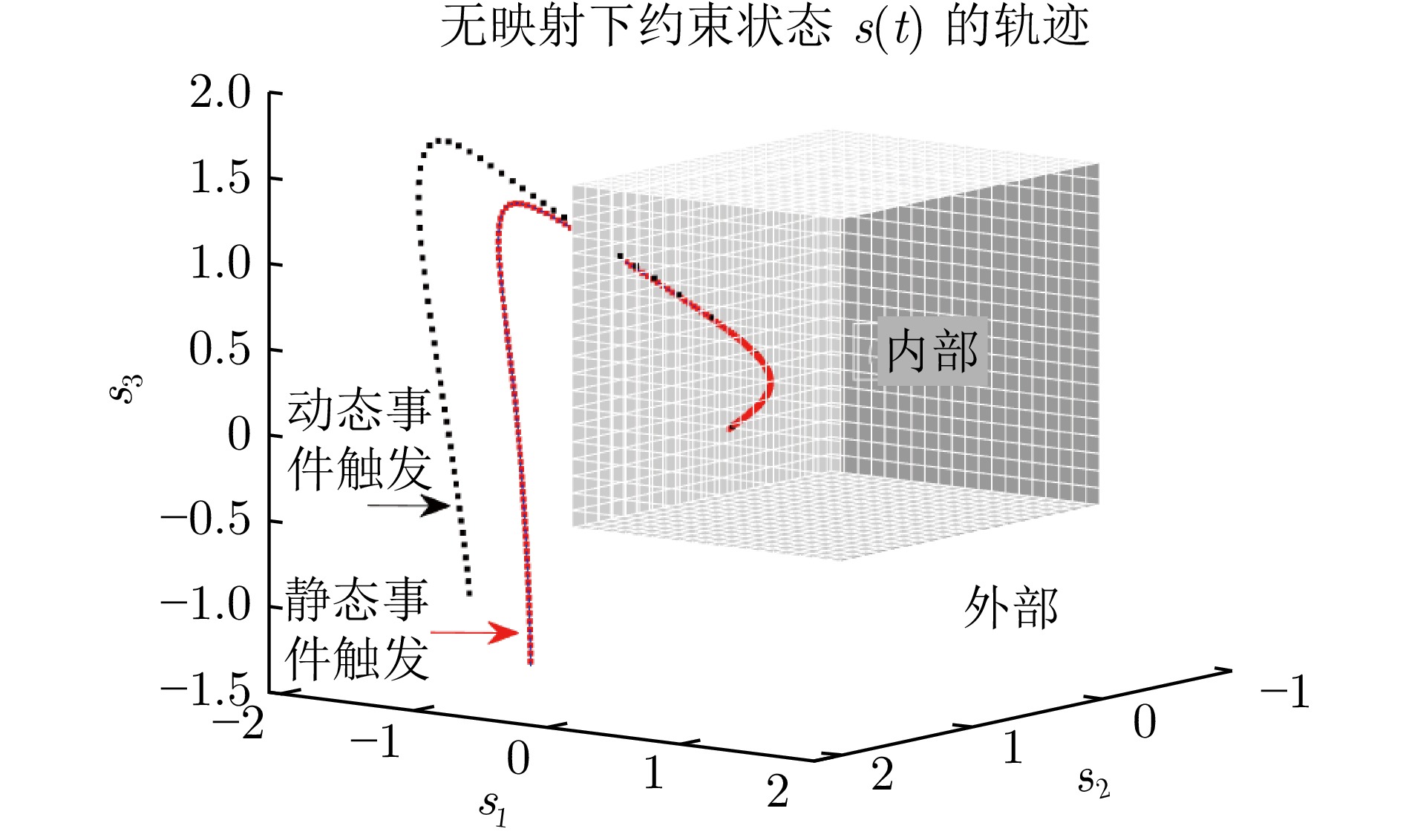

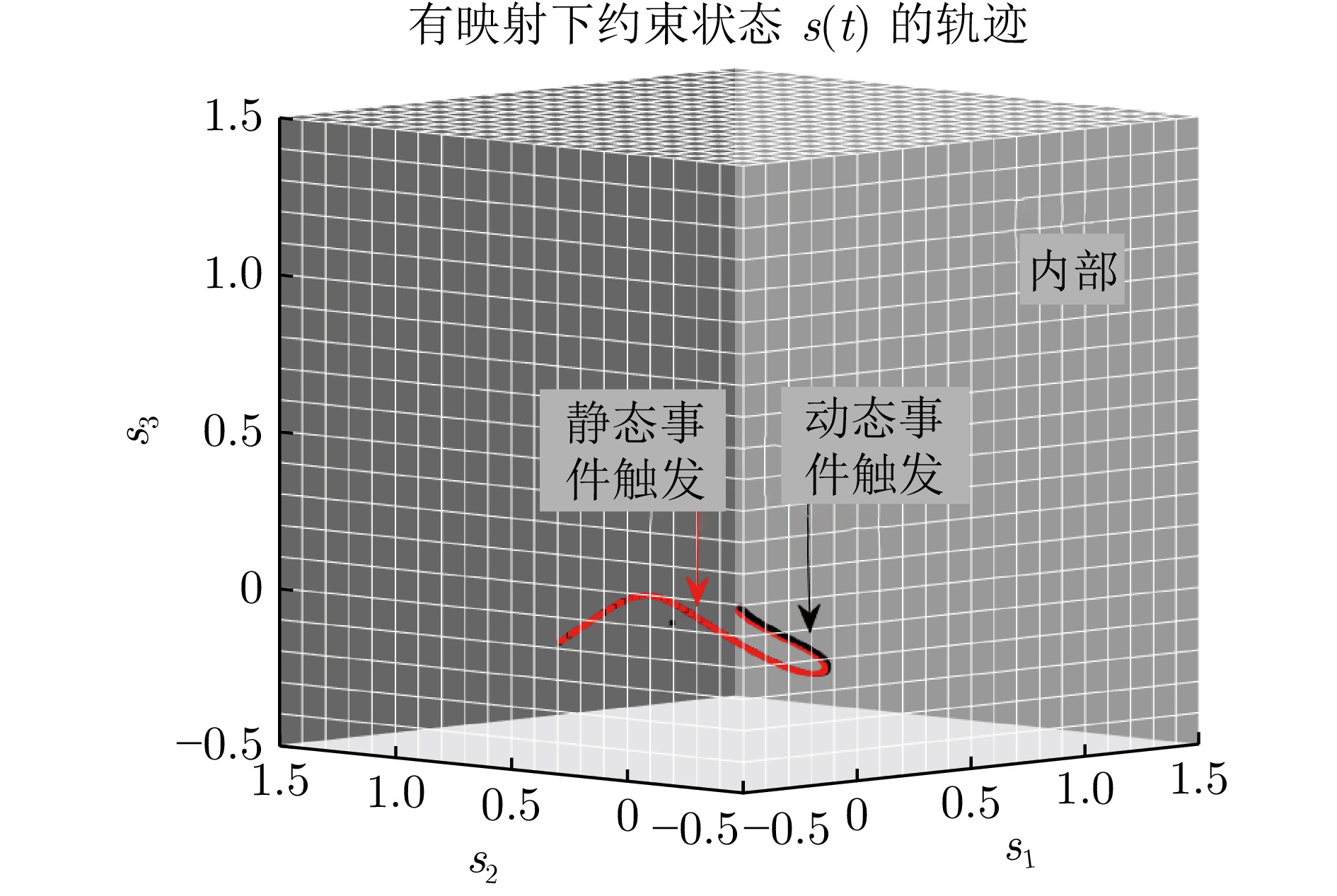

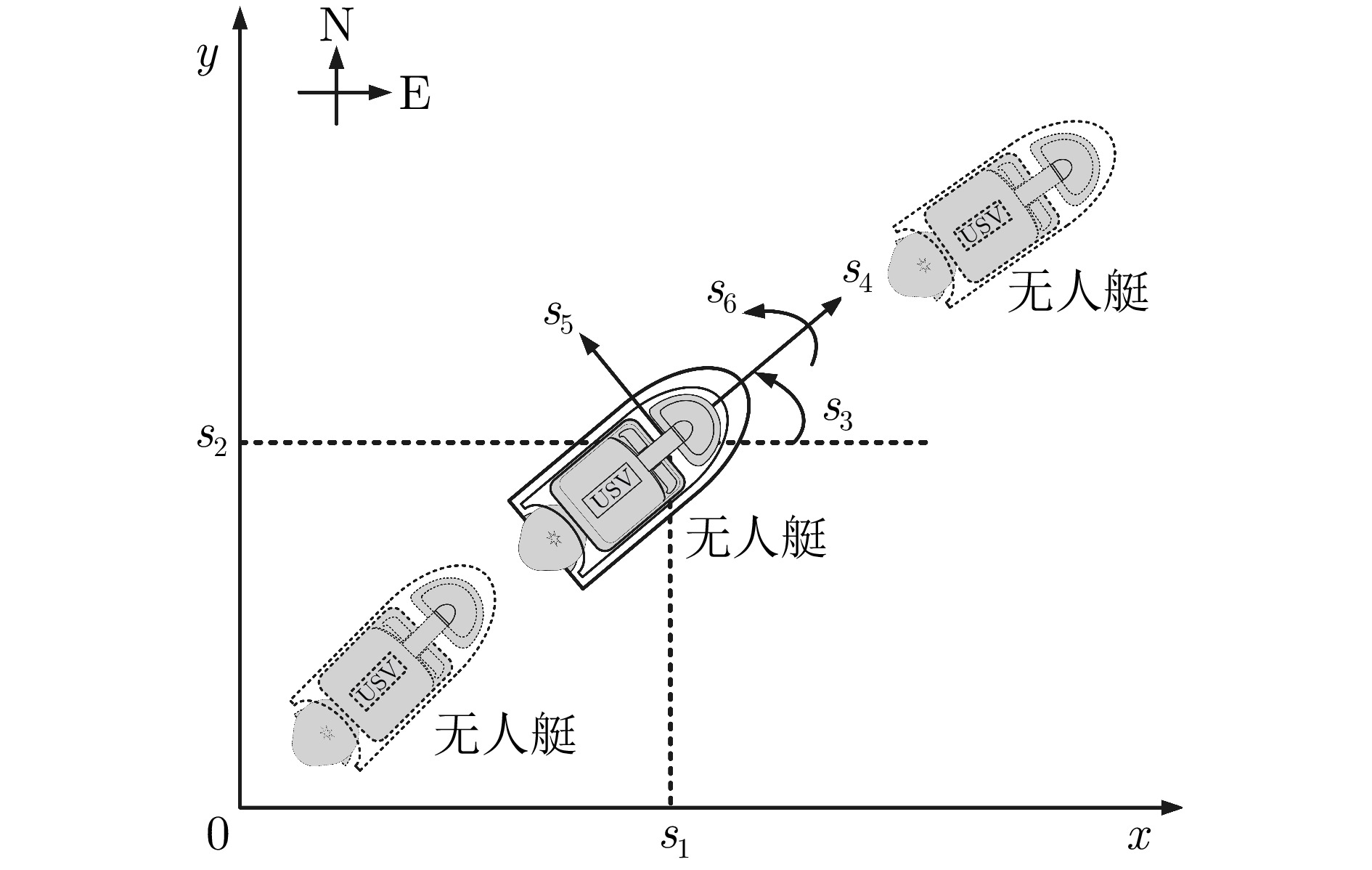

Abstract: This paper develops an adaptive dynamic programming-based dynamic event-triggering method (DEM) to solve the optimal control problem of nonlinear continuous-time systems with asymmetric constraints on both state and control. First, a nonlinear mapping function is used to transform the control problem of the asymmetrically constrained system into an unconstrained form. Then, a static event-triggering method (SEM) is designed, whose triggering condition depends only on the current state. Building on the SEM, a DEM that relies on an additional internal dynamic variable is developed, so its triggering condition also depends on the system's historical information. In effect, the DEM is a filtered version of the SEM. Theoretical analysis shows that the DEM can further save computational and network resources while ensuring system performance. Finally, a neural network-based implementation is presented. The effectiveness of the method is verified in a simulation environment for an unmanned surface vehicle.
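To make the difference between a static and a dynamic triggering rule concrete, the following is a minimal sketch on a toy problem. It is not the paper's controller: the scalar linear plant, the feedback gain, the function name `simulate`, and the parameters `sigma`, `beta`, `theta`, and `eta0` are all assumptions chosen for illustration, and the rule follows the general form of dynamic triggering mechanisms in the event-triggered control literature. The static rule fires as soon as a margin `g` computed from the current state and the sampling error becomes non-positive; the dynamic rule filters that margin through an internal variable `eta` and fires only when `eta + theta * g` becomes non-positive.

```python
def simulate(dynamic, T=20.0, dt=1e-3, sigma=0.5, beta=1.0, theta=1.0, eta0=1.0):
    """Euler simulation of x' = a*x + b*u with a sampled-state feedback u = -k*x_held.

    All numbers here are illustrative assumptions, not values from the paper.
    Returns (number of triggering events, final state).
    """
    a, b, k = 1.0, 1.0, 2.0      # assumed toy plant and gain (closed loop: x' = -x - 2e)
    x = 1.0                      # initial state
    x_held = x                   # last state sent to the controller
    eta = eta0                   # internal dynamic variable (used by the dynamic rule only)
    events = 0
    for _ in range(int(T / dt)):
        e = x_held - x           # sampling error since the last event
        # Margin from V = x^2/2: V' <= -(1 - sigma) x^2 / 2 - g
        g = 0.5 * sigma * x * x - 2.0 * e * e
        if dynamic:
            fire = eta + theta * g <= 0.0   # dynamic rule: margin filtered through eta
        else:
            fire = g <= 0.0                 # static rule: current state and error only
        if fire:
            x_held = x           # transmit the current state and update the control
            events += 1
            g = 0.5 * sigma * x * x         # error is reset to zero at an event
        u = -k * x_held
        x += dt * (a * x + b * u)
        if dynamic:
            eta += dt * (-beta * eta + g)   # eta' = -beta * eta + g
    return events, x


if __name__ == "__main__":
    static_events, static_x = simulate(dynamic=False)
    dynamic_events, dynamic_x = simulate(dynamic=True)
    print("static :", static_events, "events, final x =", static_x)
    print("dynamic:", dynamic_events, "events, final x =", dynamic_x)
```

In this toy setting the internal variable stays non-negative, so the dynamic rule can never fire before the static rule would from the same sampled state. That is the sense in which a dynamic rule acts as a filtered version of a static one and can reduce the number of control updates.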

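Table 1 Nomenclature

| Symbol | Meaning |
| --- | --- |
| $ {\bf{N}} $ | Set of positive integers |
| $ {\bf{R}} $ | Set of real numbers |
| $ {\bf{R}}^{m} $ | $ m $-dimensional Euclidean space |
| $ {\bf{R}}^{m\times n} $ | Space of $ m\times n $ matrices |
| $ \text{T} $ | Transpose |
| $ \nabla J(s) $ | Gradient operator |
| $ \lVert {\cal{A}} \rVert $ | 2-norm |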

