Autonomous Liver Ultrasound Scanning via Robotic Arm Using Ensemble Bayesian Interaction Primitives


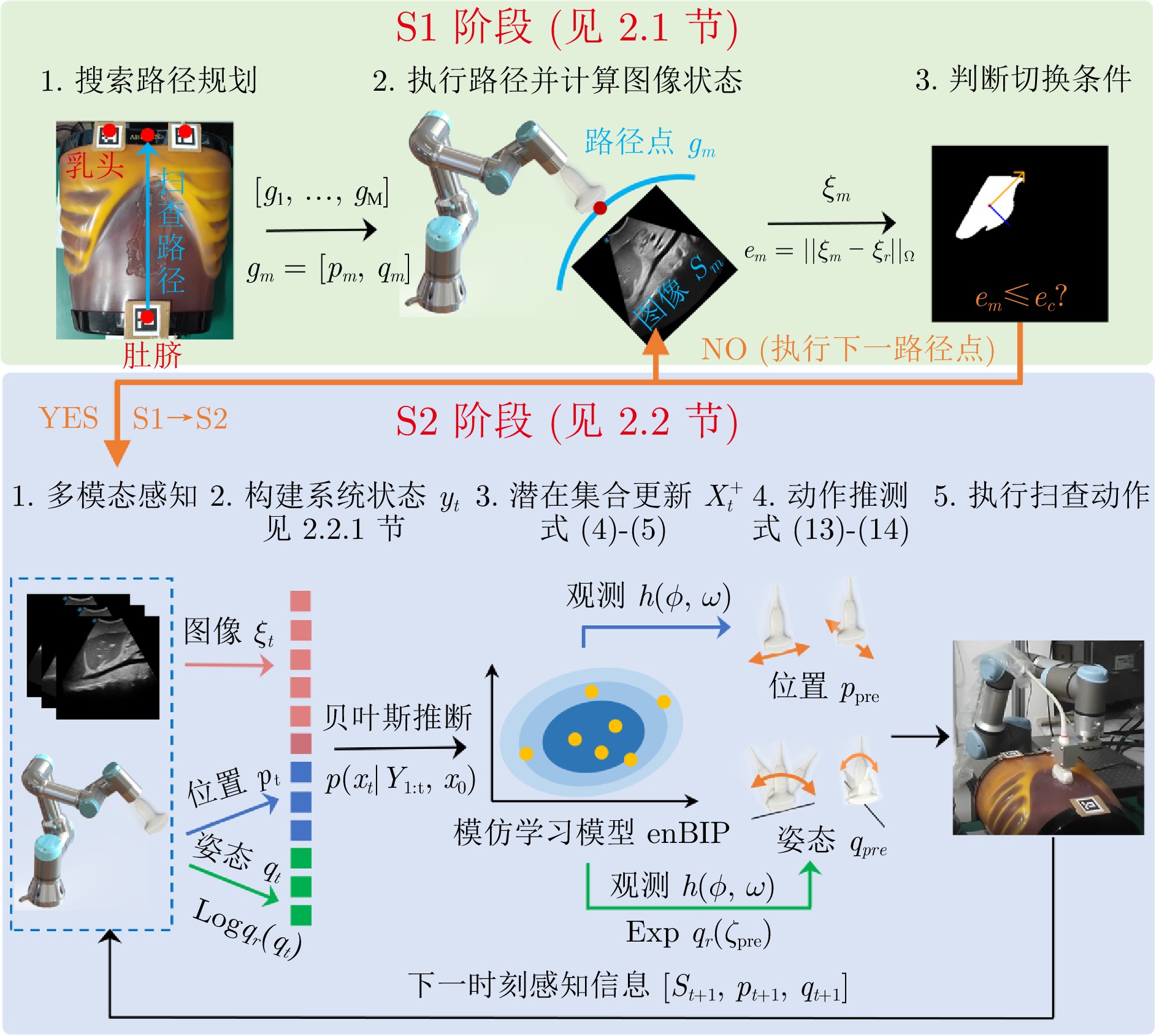

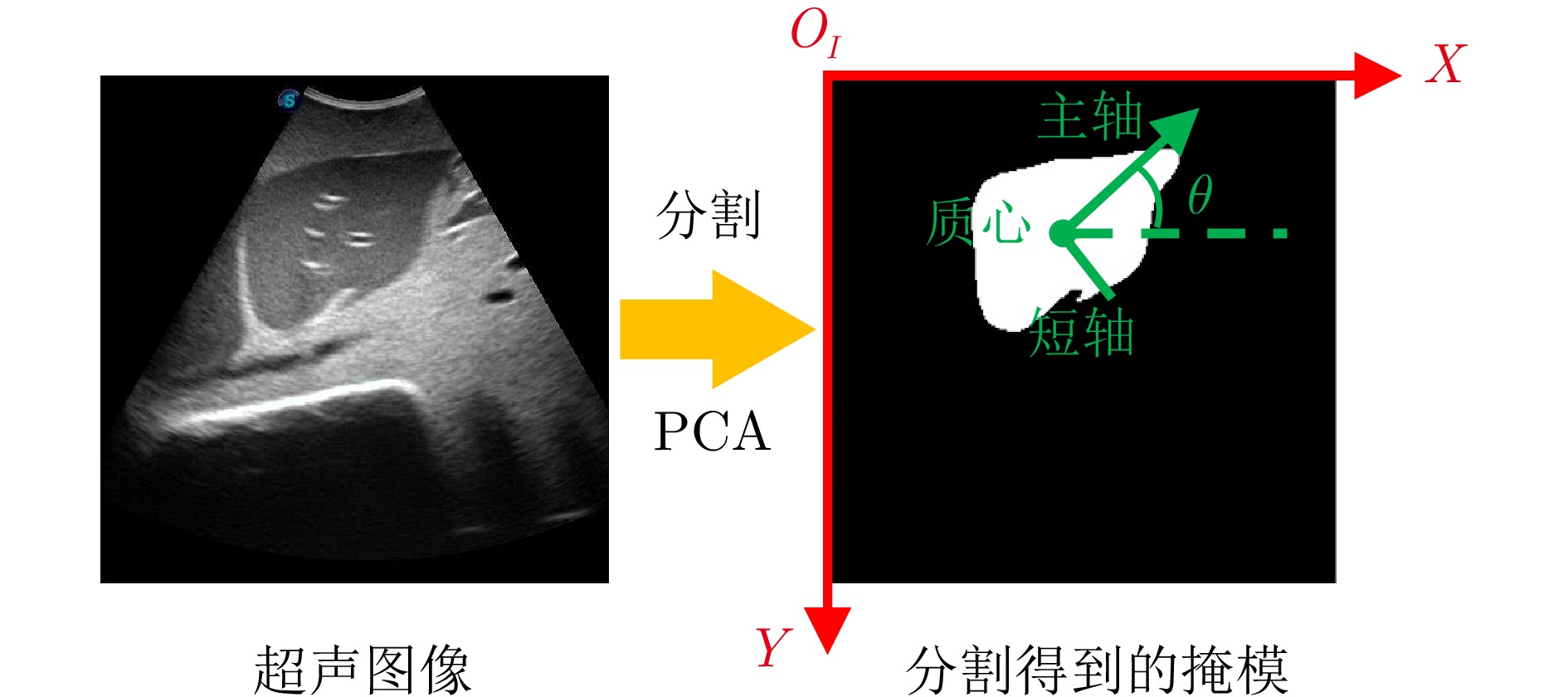

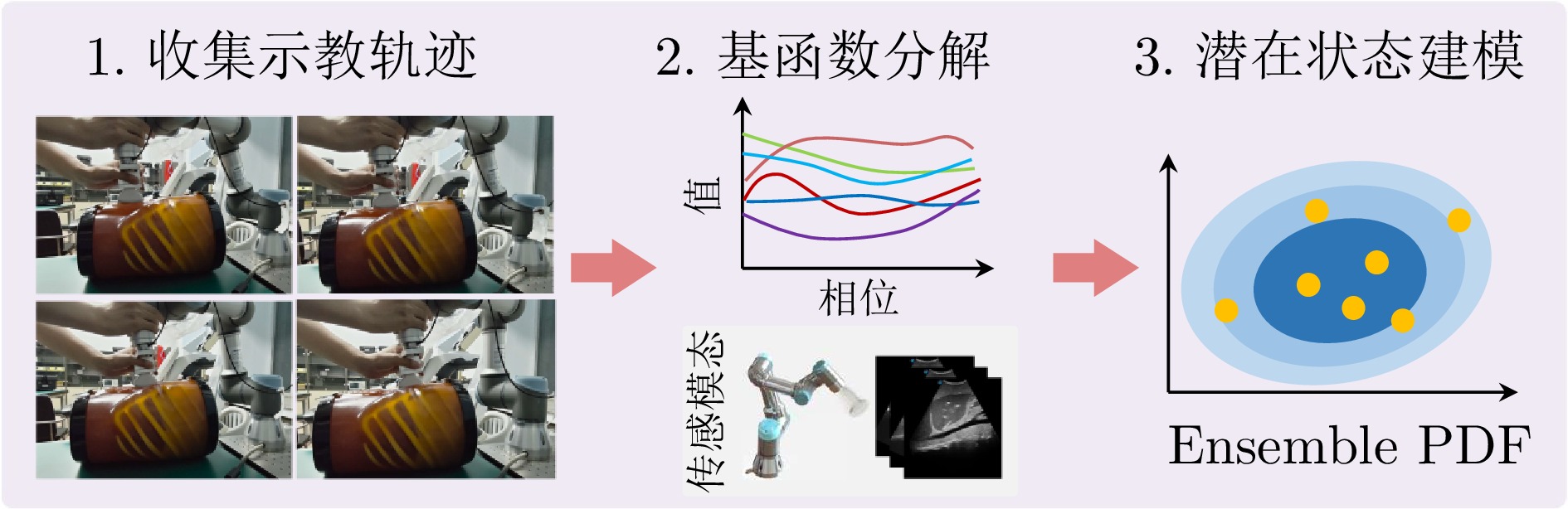

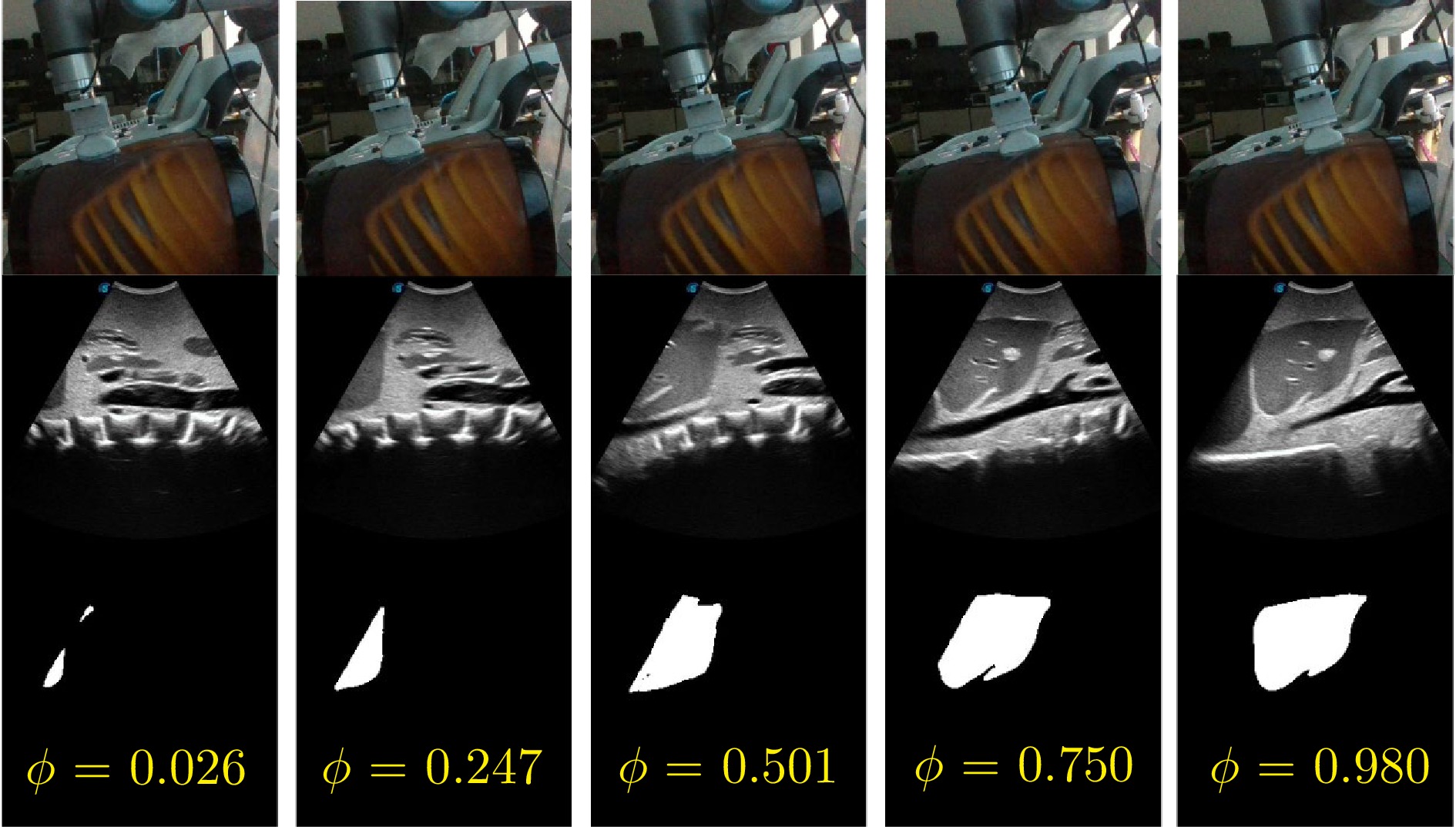

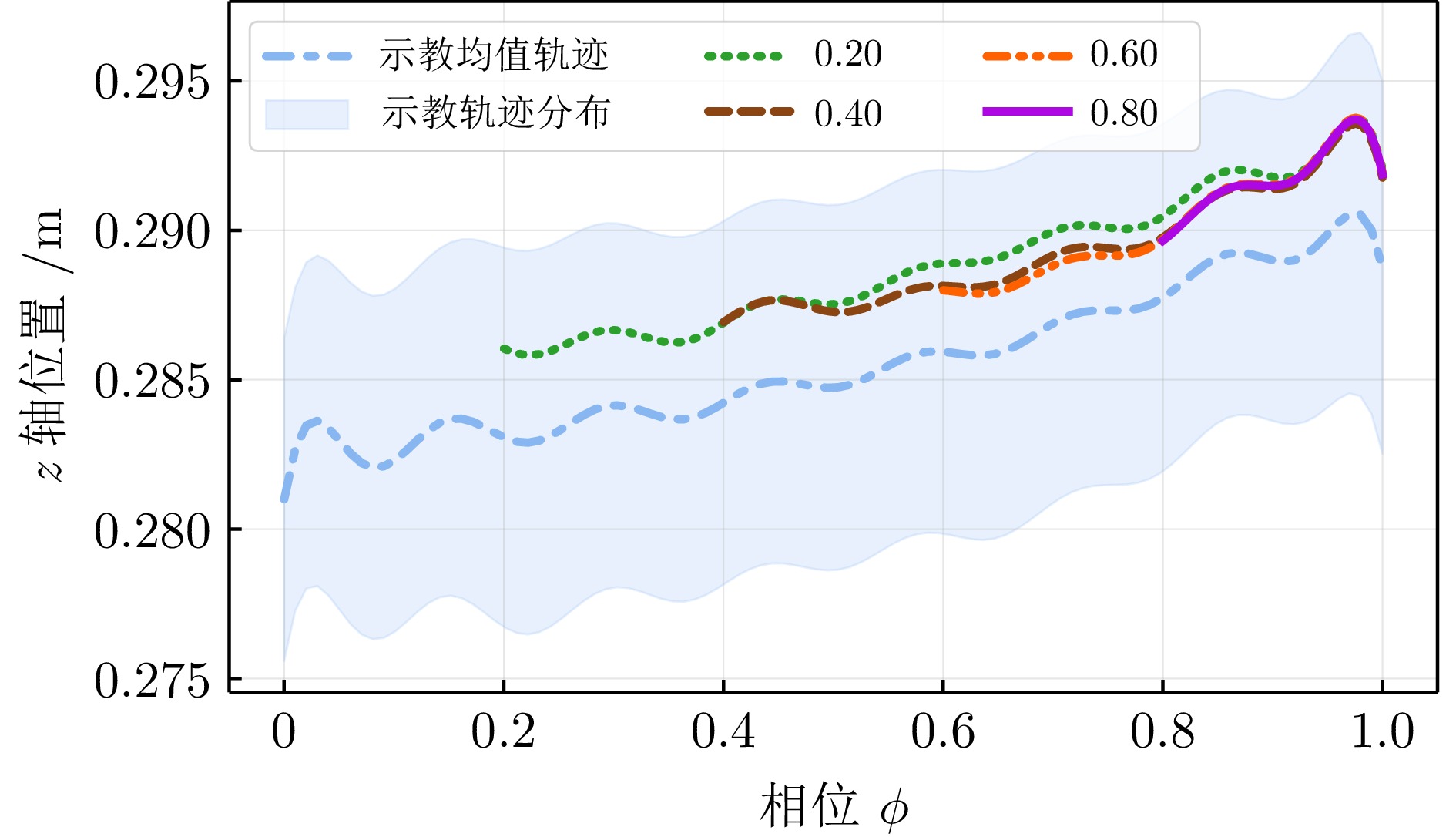

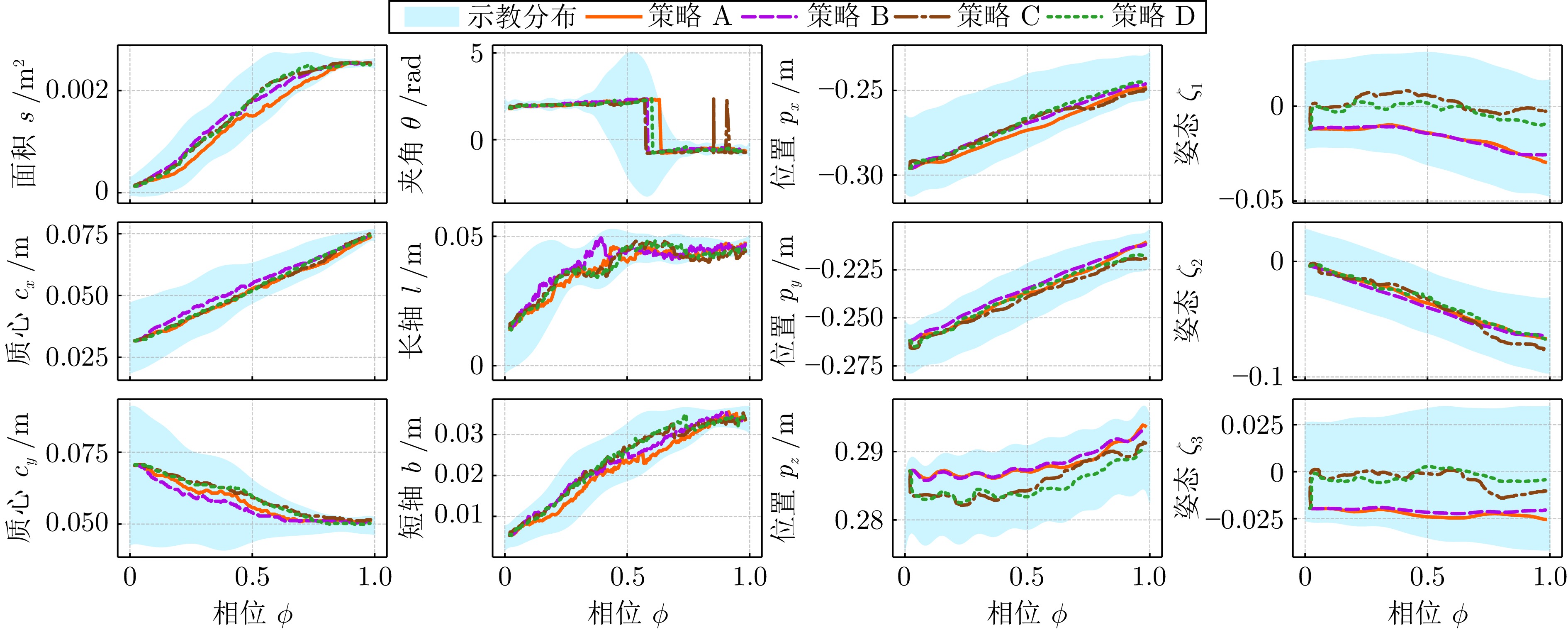

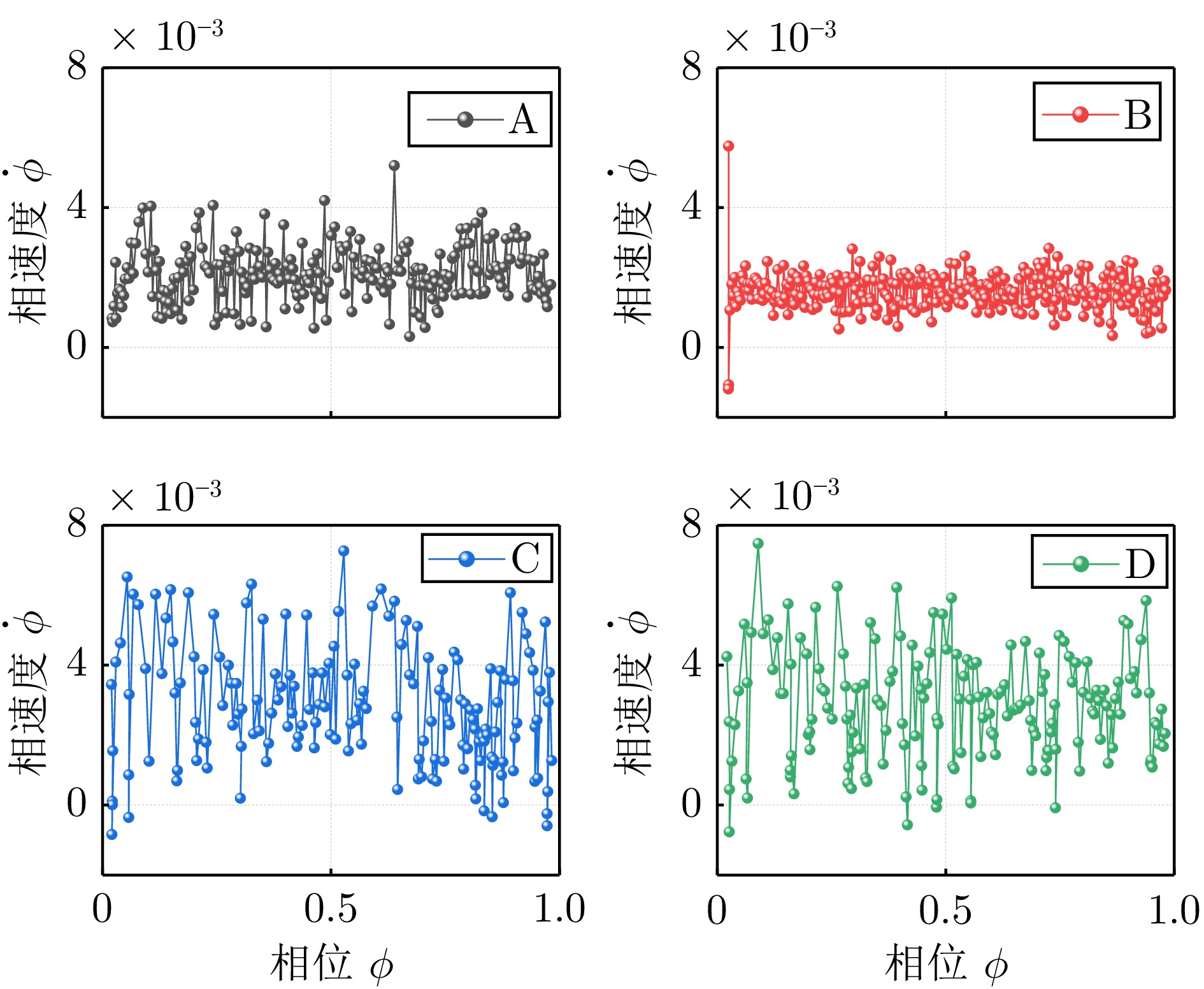

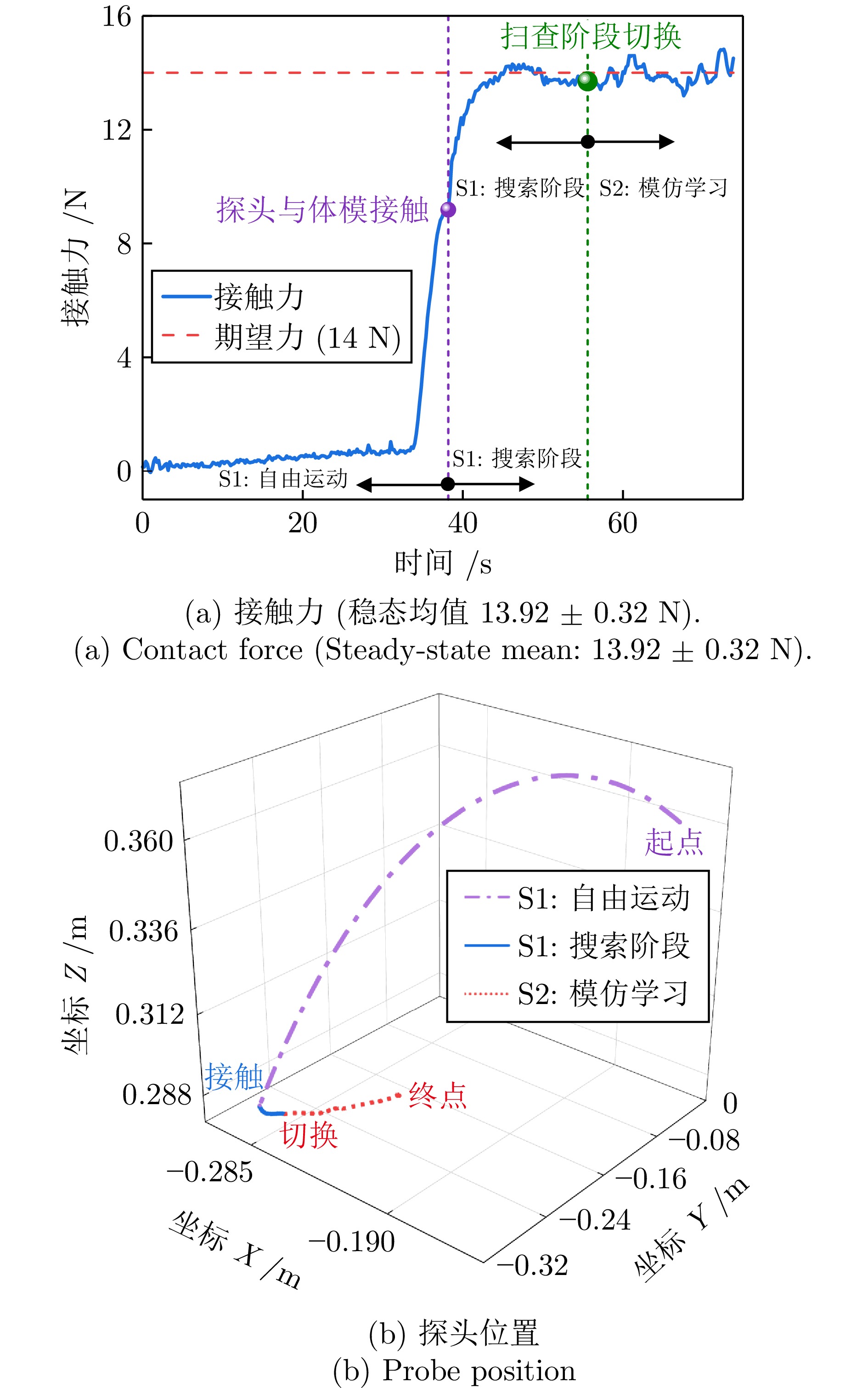

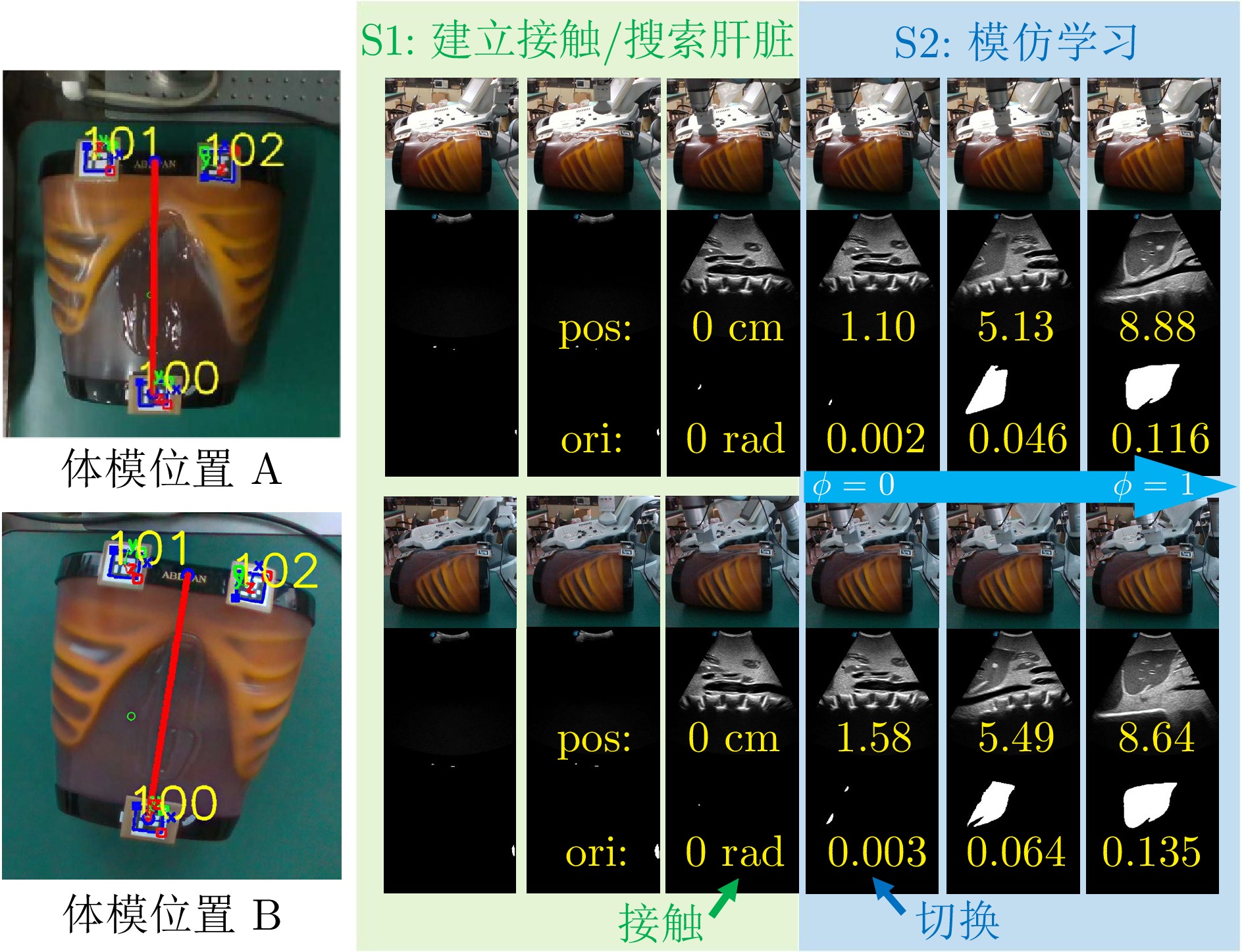

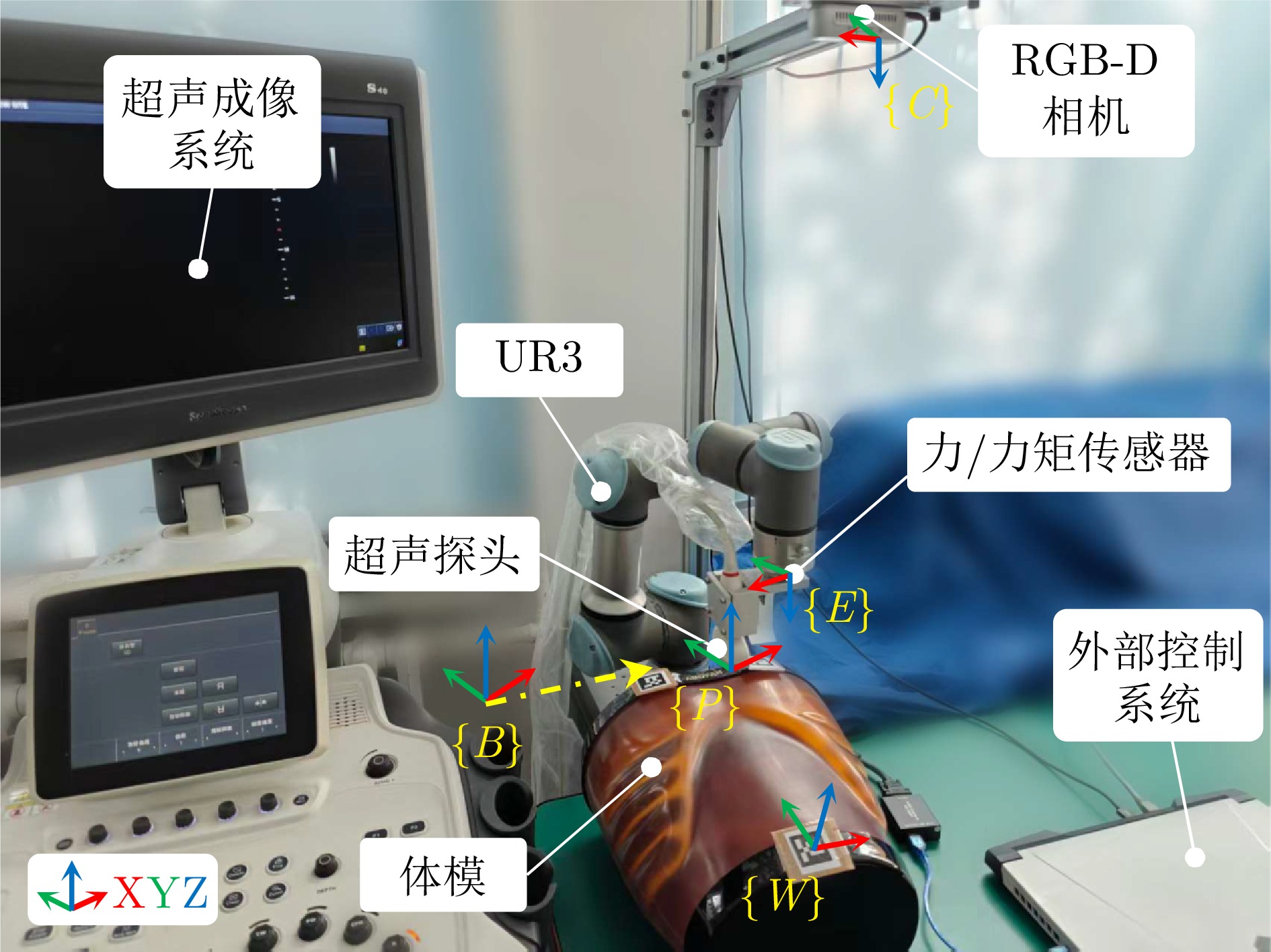

Abstract: To address the need for ultrasound scanning of the human liver, this study proposes a fully autonomous, robotic-arm-assisted scanning method based on ensemble Bayesian Interaction Primitives (enBIP) and builds the corresponding experimental system. The method divides the scanning procedure into two sequential stages: initial positioning and imitation learning. In the initial positioning stage, the system uses RGB-D images to guide the probe into contact with the patient and decides when to switch to the imitation learning stage based on real-time ultrasound images. In the imitation learning stage, the system encodes the scanning skills demonstrated by a physician as ultrasound image state trajectories and probe motion trajectories, then learns and reproduces these skills with enBIP to complete the autonomous liver scan. The method was validated experimentally on a human abdominal phantom. The results show that it completes the liver scanning task without human intervention, indicating its potential for clinical application.


Table 1 Feature comparison between the proposed method and existing representative studies

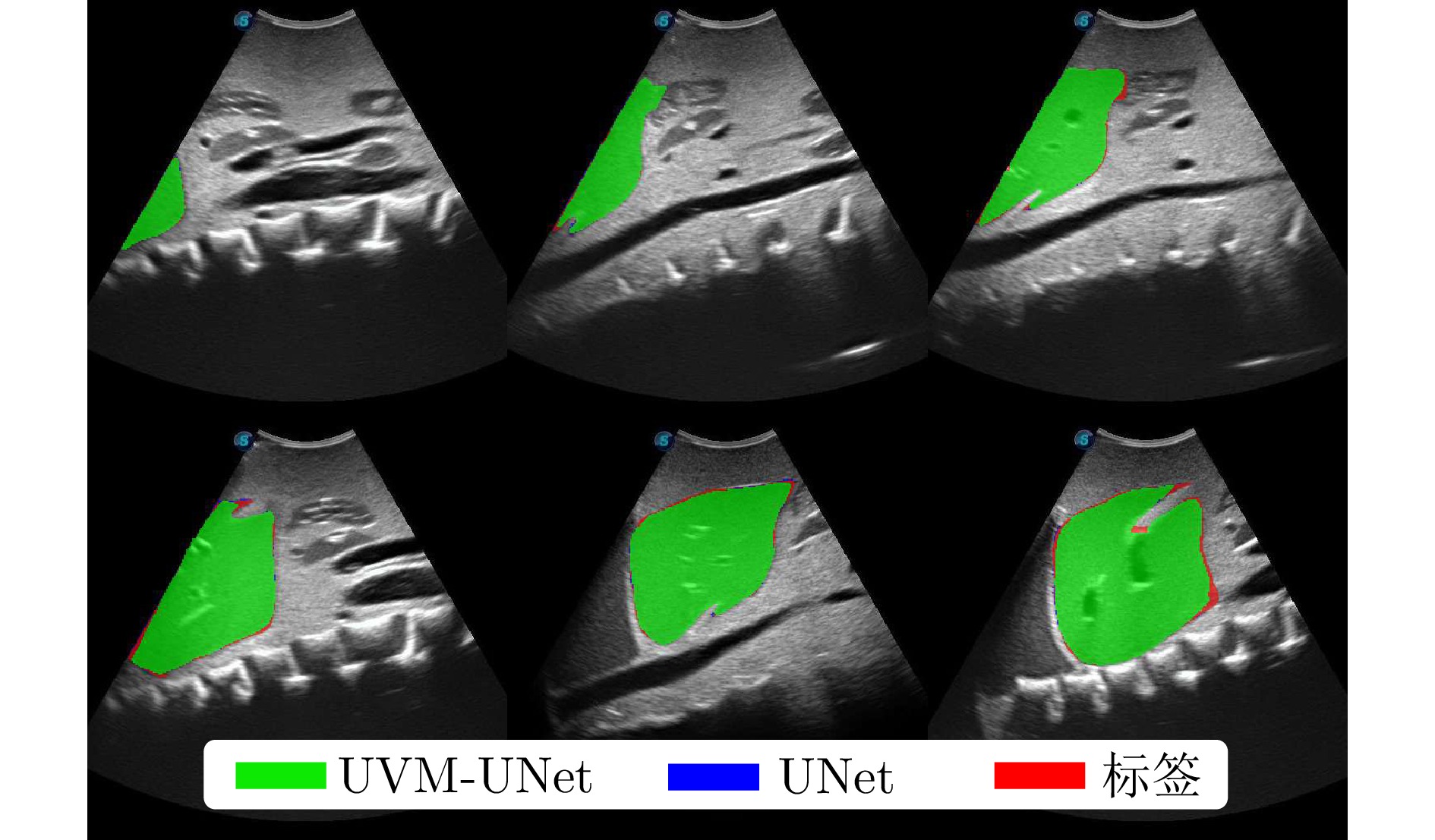

Table 2 Segmentation performance metrics of deep networks

| Model | mIoU | Dice | Acc | Spe | Sen | Autonomous initial positioning |
| --- | --- | --- | --- | --- | --- | --- |
| UVM-UNet | 0.9529 $\pm$ 0.0041 | 0.9759 $\pm$ 0.0022 | 0.9935 $\pm$ 0.0008 | 0.9963 $\pm$ 0.0004 | 0.9755 $\pm$ 0.0027 | 9.09 $\pm$ 0.30 |
| UNet | 0.9674 $\pm$ 0.0056 | 0.9834 $\pm$ 0.0029 | 0.9955 $\pm$ 0.0007 | 0.9973 $\pm$ 0.0007 | 0.9846 $\pm$ 0.0014 | 43.04 $\pm$ 0.19 |
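The metrics in Table 2 (IoU, Dice, accuracy, specificity, sensitivity) can all be derived from the pixel-wise confusion counts of a binary mask. The sketch below uses the standard textbook definitions; it is not taken from the paper. The function name `segmentation_metrics` and the nested-list mask format are illustrative assumptions. Foreground IoU is assumed because the reported values appear consistent with Dice = 2·IoU / (1 + IoU), for example 2(0.9529) / 1.9529 ≈ 0.9759.

```python
from itertools import chain


def segmentation_metrics(pred, target):
    """Pixel-wise metrics for one pair of binary masks (nested lists of 0/1).

    Hypothetical helper for illustration; not the authors' evaluation code.
    """
    p = [bool(v) for v in chain.from_iterable(pred)]
    t = [bool(v) for v in chain.from_iterable(target)]
    if len(p) != len(t):
        raise ValueError("masks must have the same number of pixels")

    # Confusion-matrix counts over all pixels.
    tp = sum(a and b for a, b in zip(p, t))
    tn = sum(not a and not b for a, b in zip(p, t))
    fp = sum(a and not b for a, b in zip(p, t))
    fn = sum(not a and b for a, b in zip(p, t))

    def ratio(num, den):
        # Return NaN instead of raising when a class is absent.
        return num / den if den else float("nan")

    return {
        "IoU": ratio(tp, tp + fp + fn),           # foreground IoU (assumed)
        "Dice": ratio(2 * tp, 2 * tp + fp + fn),
        "Acc": ratio(tp + tn, tp + tn + fp + fn),
        "Spe": ratio(tn, tn + fp),                # specificity
        "Sen": ratio(tp, tp + fn),                # sensitivity (recall)
    }


# Toy 2x4 masks, for illustration only.
pred = [[1, 1, 0, 0], [1, 0, 0, 0]]
target = [[1, 1, 1, 0], [0, 0, 0, 0]]
print(segmentation_metrics(pred, target))
```

Averaging the per-image IoU over a test set would give an mIoU-style figure. This excerpt does not state what the ± spread in Table 2 is taken over, so that part is left out of the sketch.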

Table 3 Comparison of evaluation metrics of different scanning strategies

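| Strategy | $e_{\boldsymbol{p}}$ /m | $e_{\boldsymbol{q}}$ /rad | Inference count | Success rate |
| --- | --- | --- | --- | --- |
| A | 0.0024 | 0.0153 | 244 $\pm$ 12 | 10/10 |
| B | 0.0012 | 0.0091 | 303 $\pm$ 6 | 10/10 |
| C | 0.0046 | 0.0295 | 170 $\pm$ 13 | 10/10 |
| D$^*$ | 0.0041 | 0.0255 | 175 $\pm$ 26 | 8/10 |

$^*$: The metrics for this strategy are computed over 8 samples.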
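Table 3 reports a position error $e_{\boldsymbol{p}}$ in metres and an orientation error $e_{\boldsymbol{q}}$ in radians, but this excerpt does not define them. As a rough sketch, assume $e_{\boldsymbol{p}}$ is the mean Euclidean distance between reproduced and reference probe positions, and $e_{\boldsymbol{q}}$ is the mean geodesic angle between the corresponding unit quaternions. Under those assumptions, such errors could be computed as follows. All function names and the `(position, quaternion)` pose layout are hypothetical.

```python
import math


def position_error(p_ref, p_exec):
    """Euclidean distance between two 3-D positions (metres)."""
    return math.dist(p_ref, p_exec)


def orientation_error(q_ref, q_exec):
    """Geodesic angle (radians) between two unit quaternions (w, x, y, z).

    The absolute value handles the double cover: q and -q are the same rotation.
    """
    dot = abs(sum(a * b for a, b in zip(q_ref, q_exec)))
    return 2.0 * math.acos(min(1.0, dot))  # clamp against rounding above 1


def mean_pose_errors(ref_poses, exec_poses):
    """Average both errors over paired (position, quaternion) samples."""
    pairs = list(zip(ref_poses, exec_poses))
    if not pairs:
        raise ValueError("need at least one pose pair")
    e_p = sum(position_error(r[0], e[0]) for r, e in pairs) / len(pairs)
    e_q = sum(orientation_error(r[1], e[1]) for r, e in pairs) / len(pairs)
    return e_p, e_q


# Toy example: 5 mm offset and a 0.02 rad rotation about the z axis.
ref = [((0.0, 0.0, 0.0), (1.0, 0.0, 0.0, 0.0))]
exe = [((0.003, 0.004, 0.0), (math.cos(0.01), 0.0, 0.0, math.sin(0.01)))]
print(mean_pose_errors(ref, exe))
```

If the paper defines the errors differently (for instance, as a final-pose error rather than a trajectory average), only `mean_pose_errors` would need to change.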

