
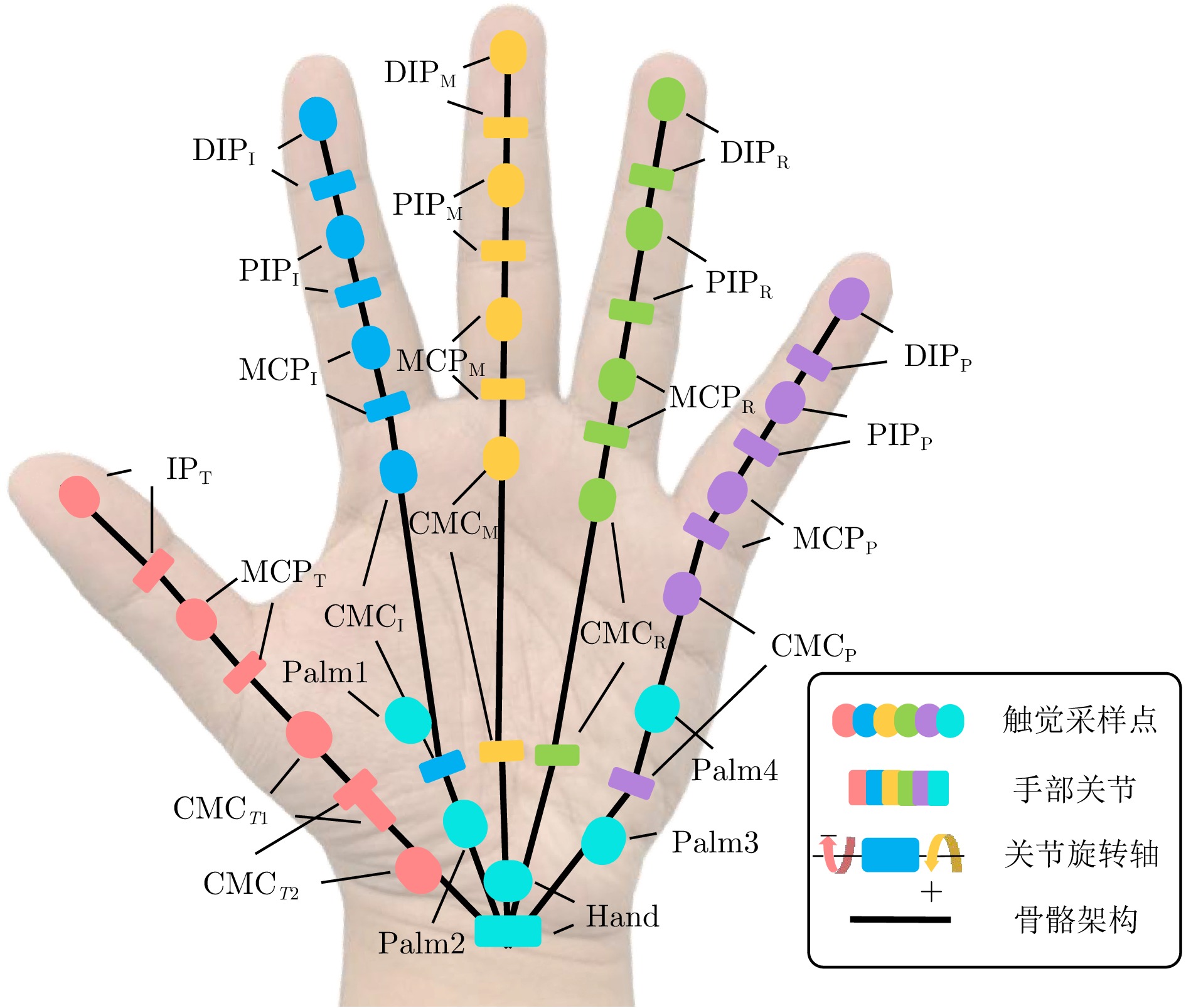

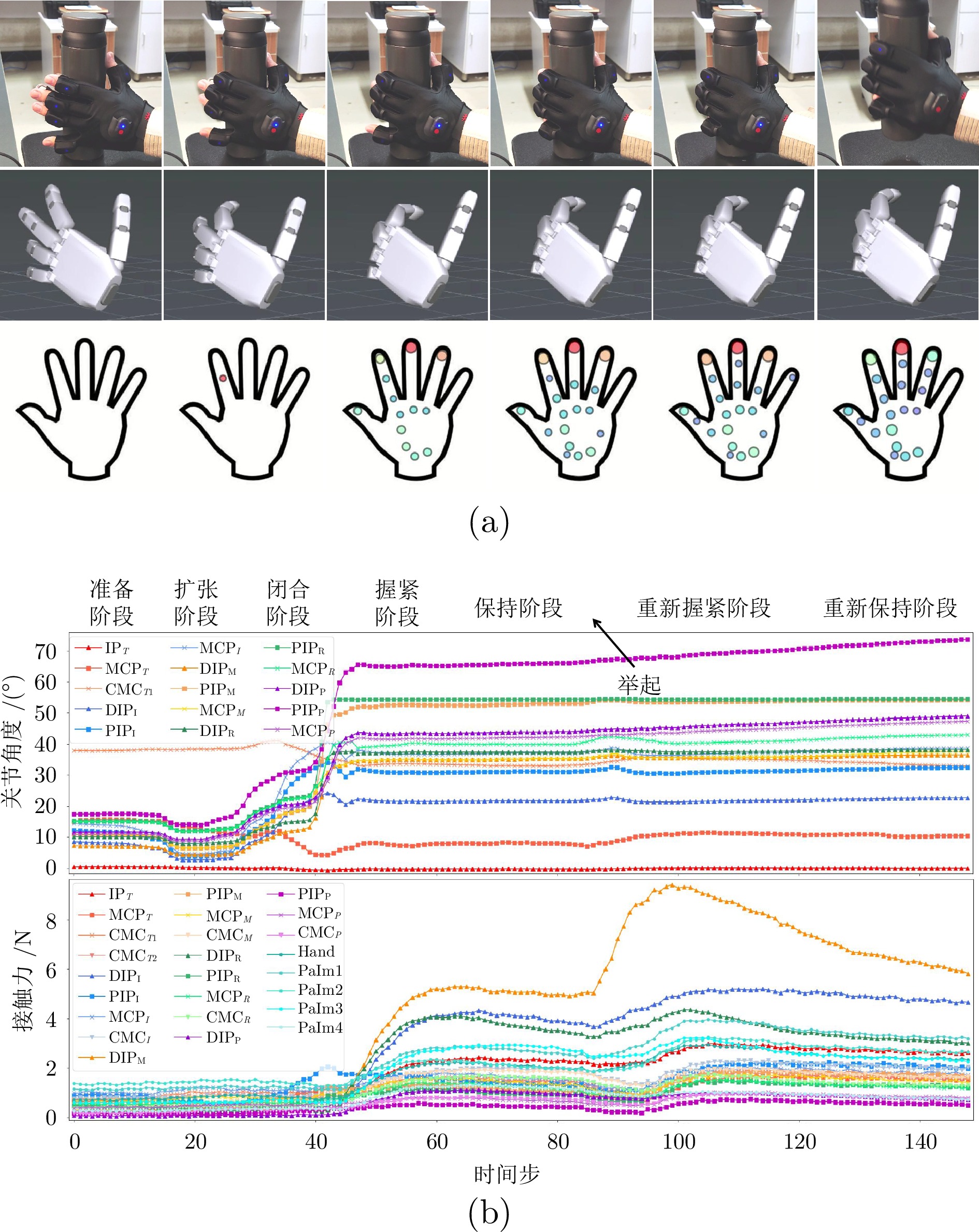

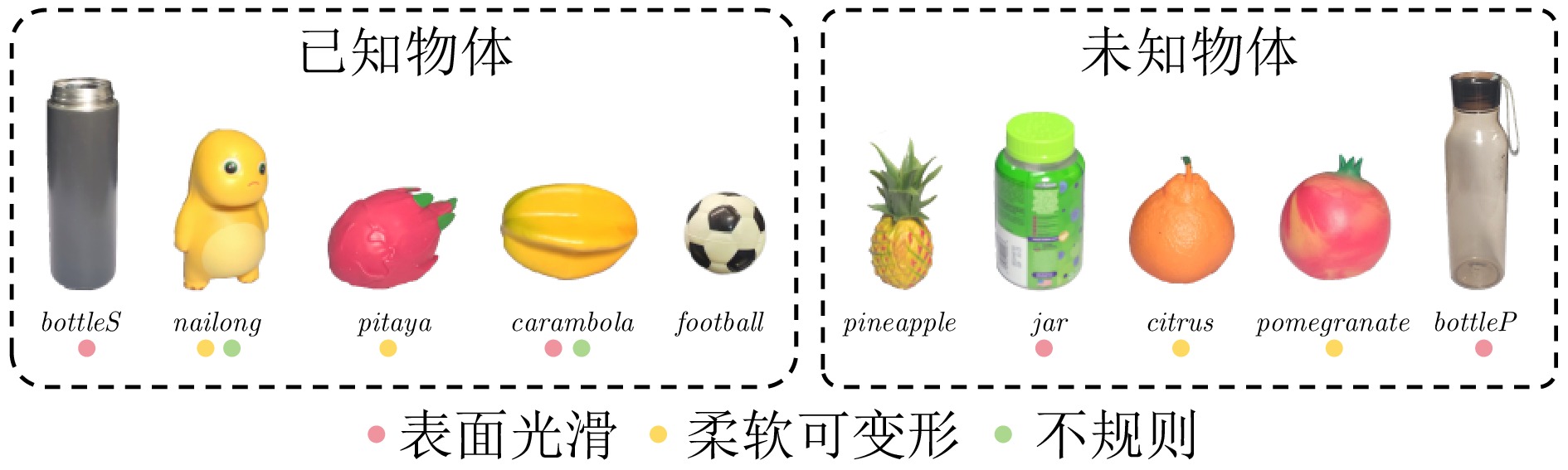

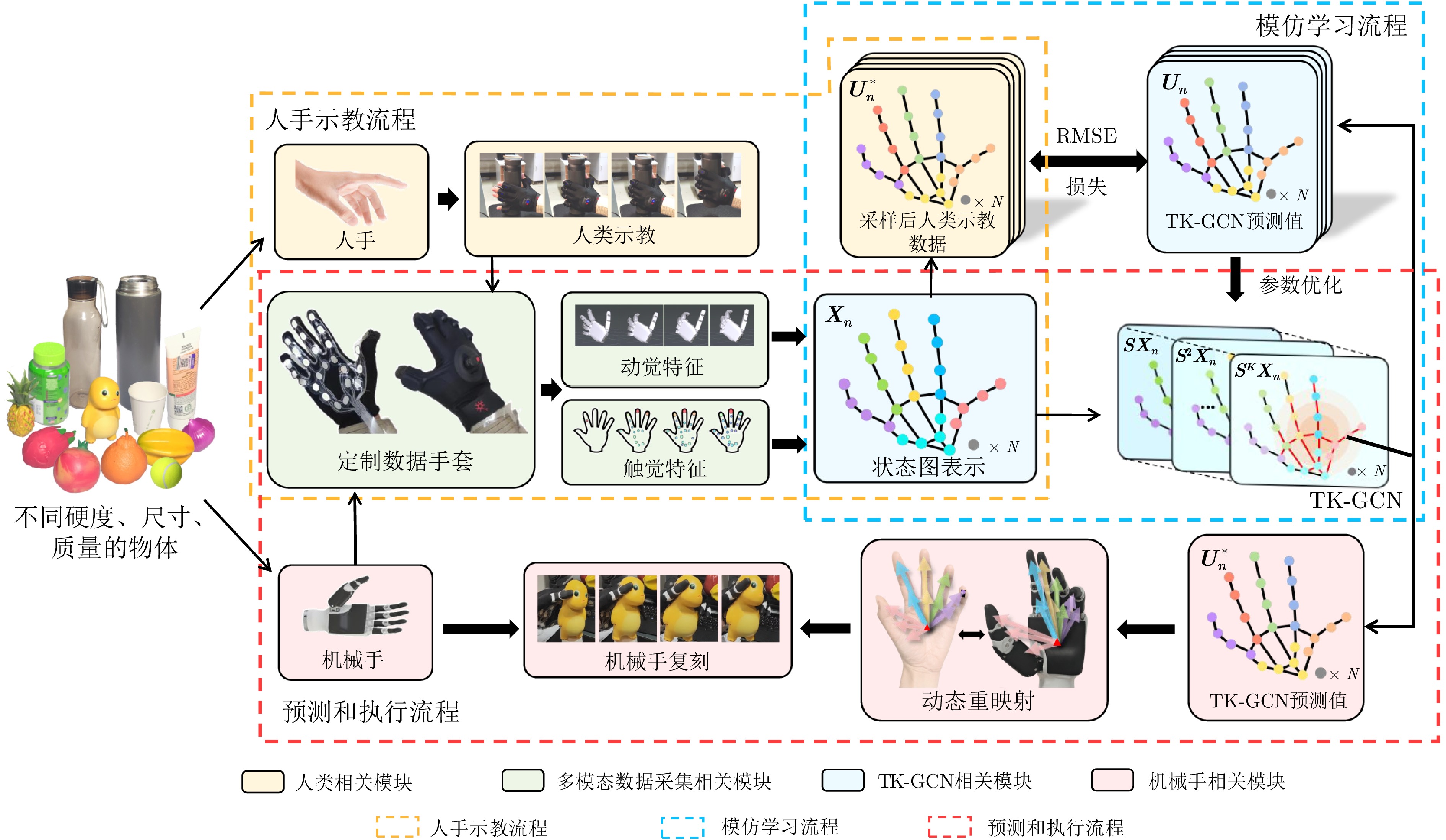

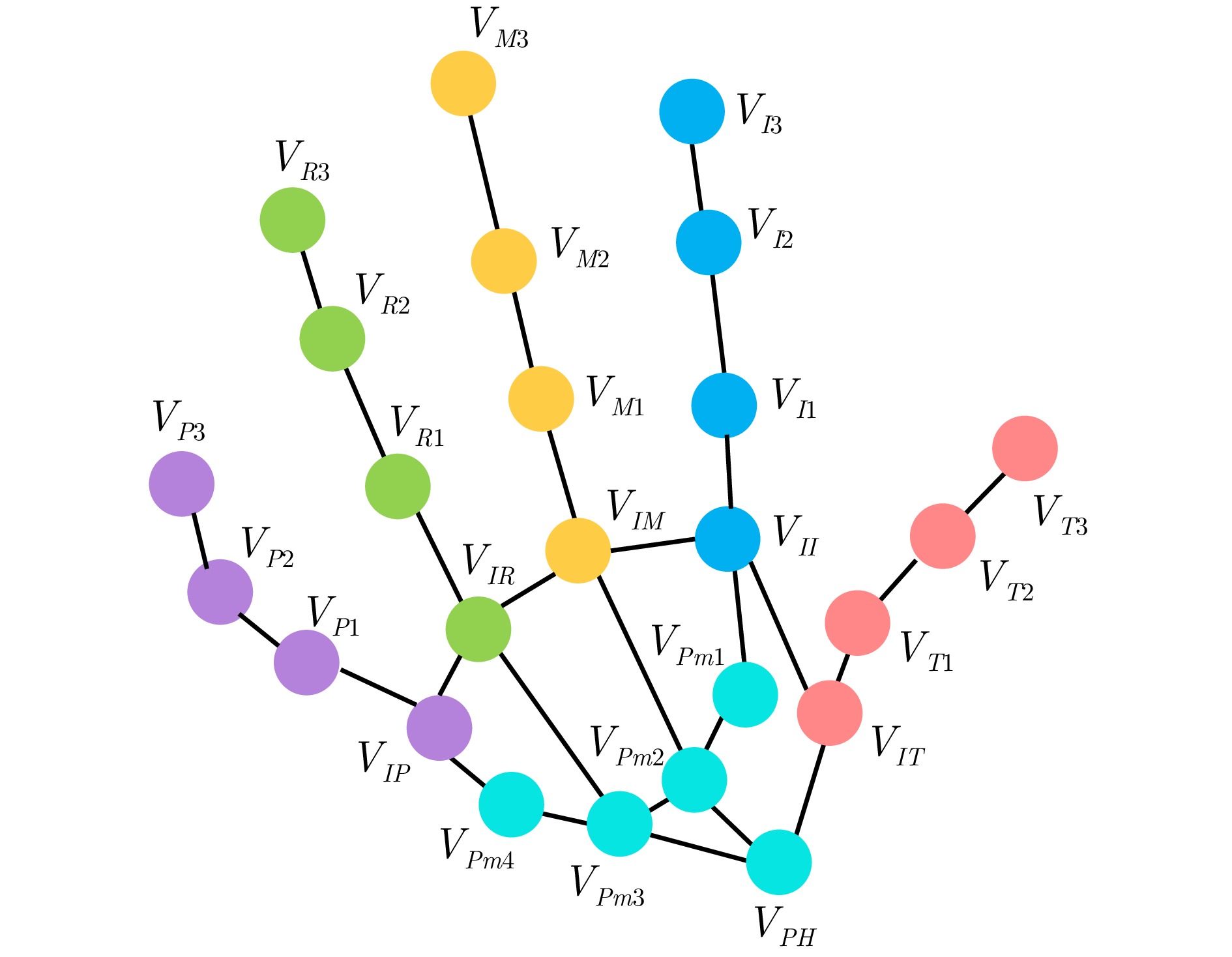

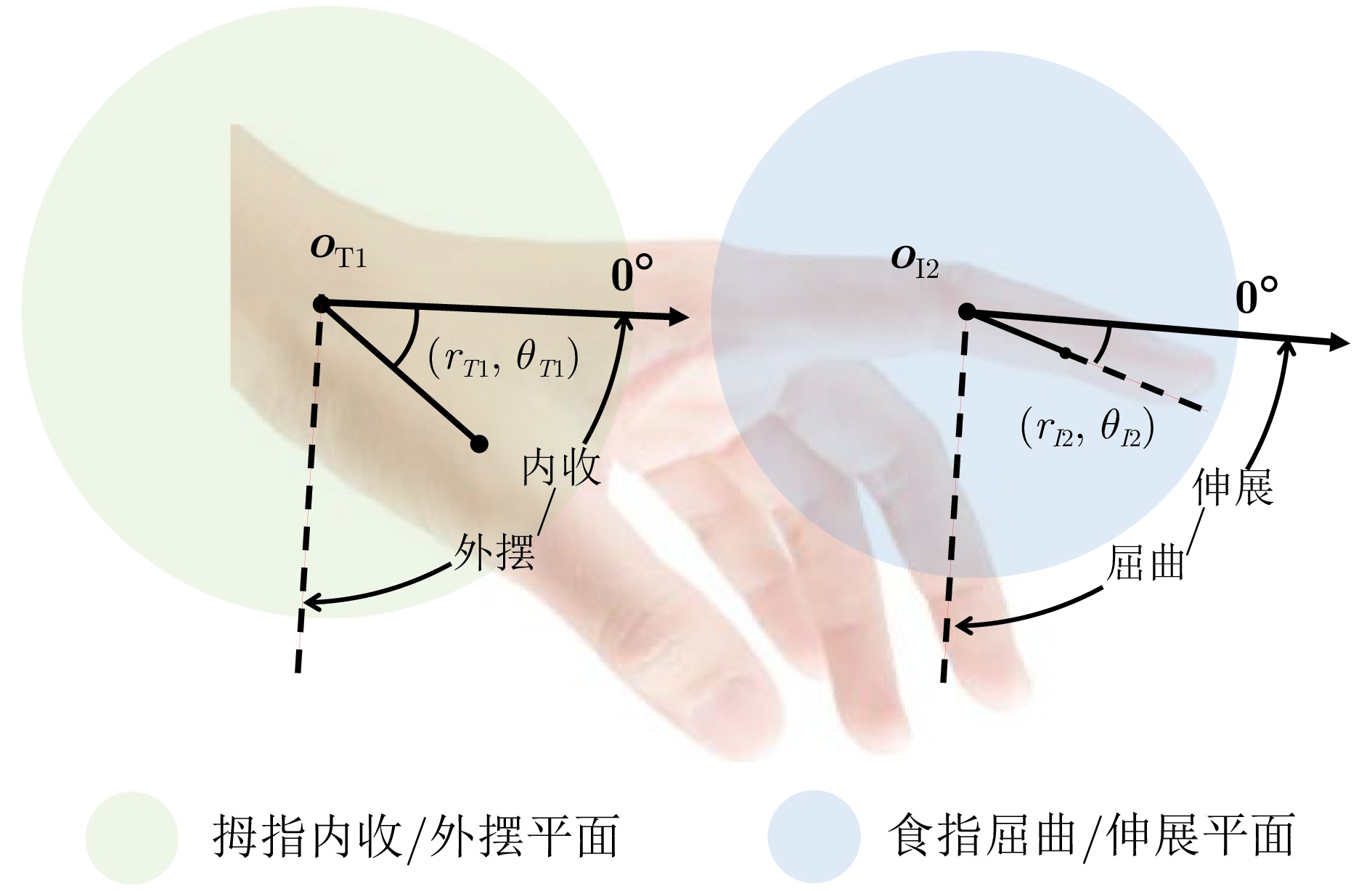

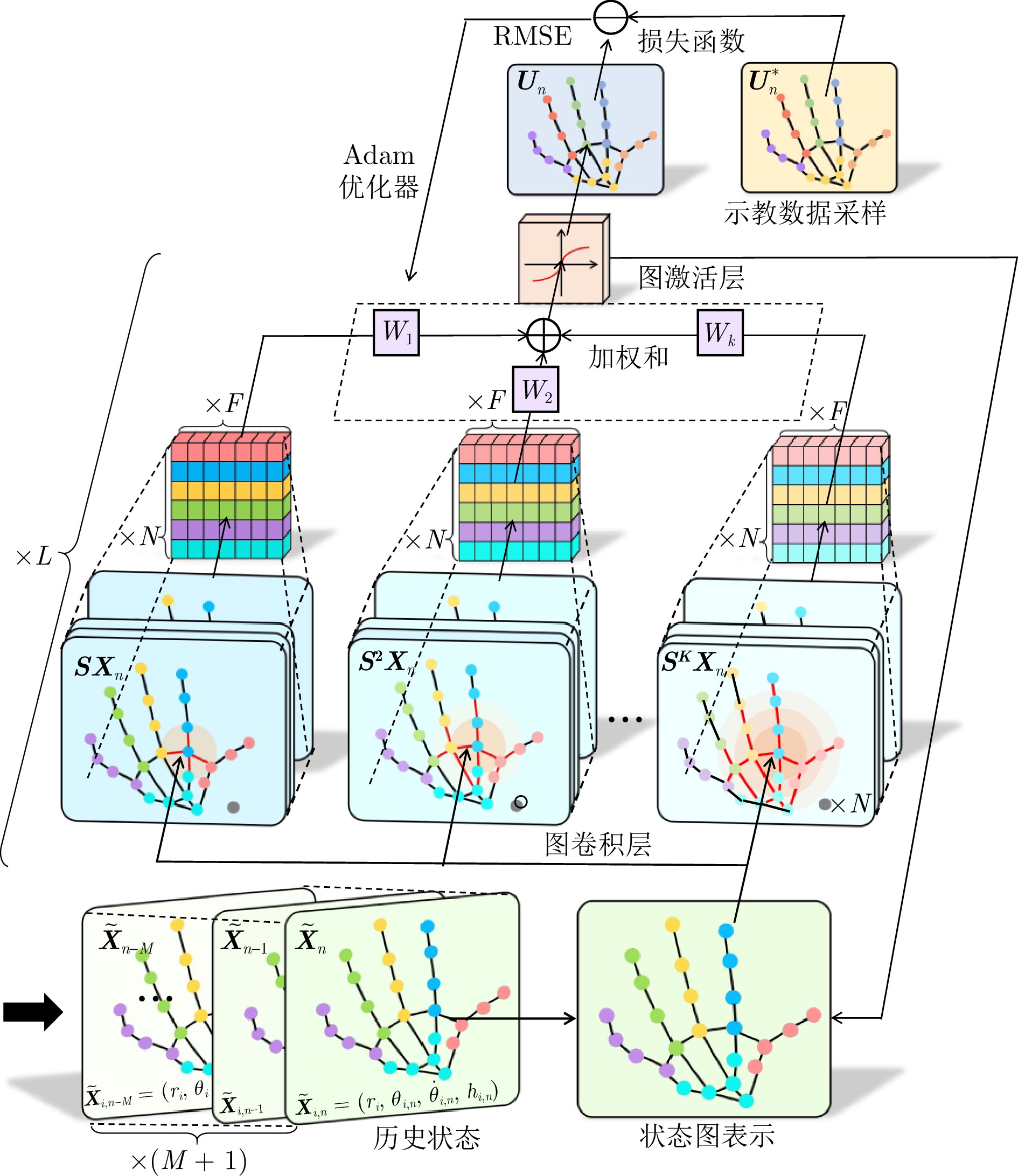

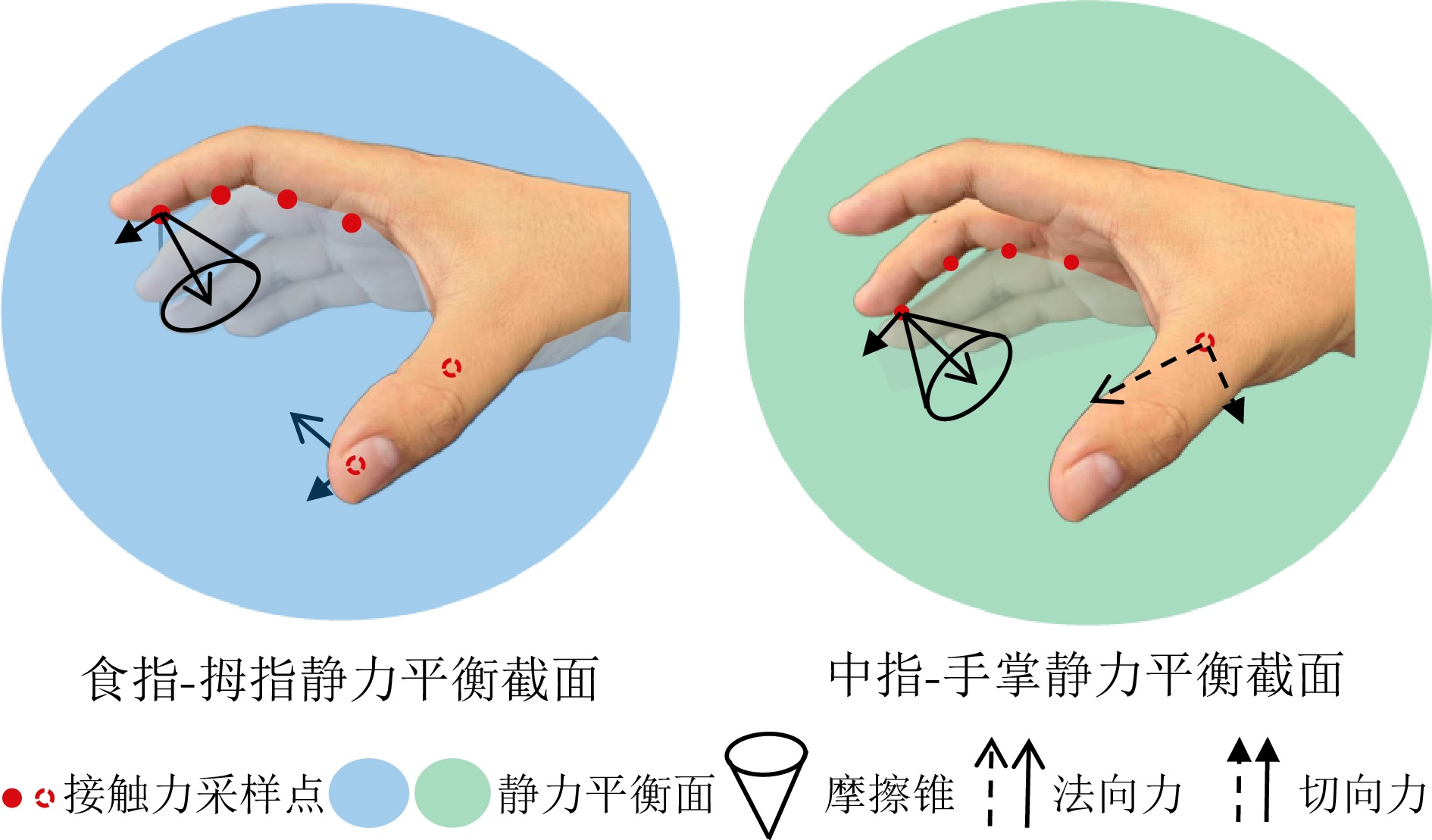

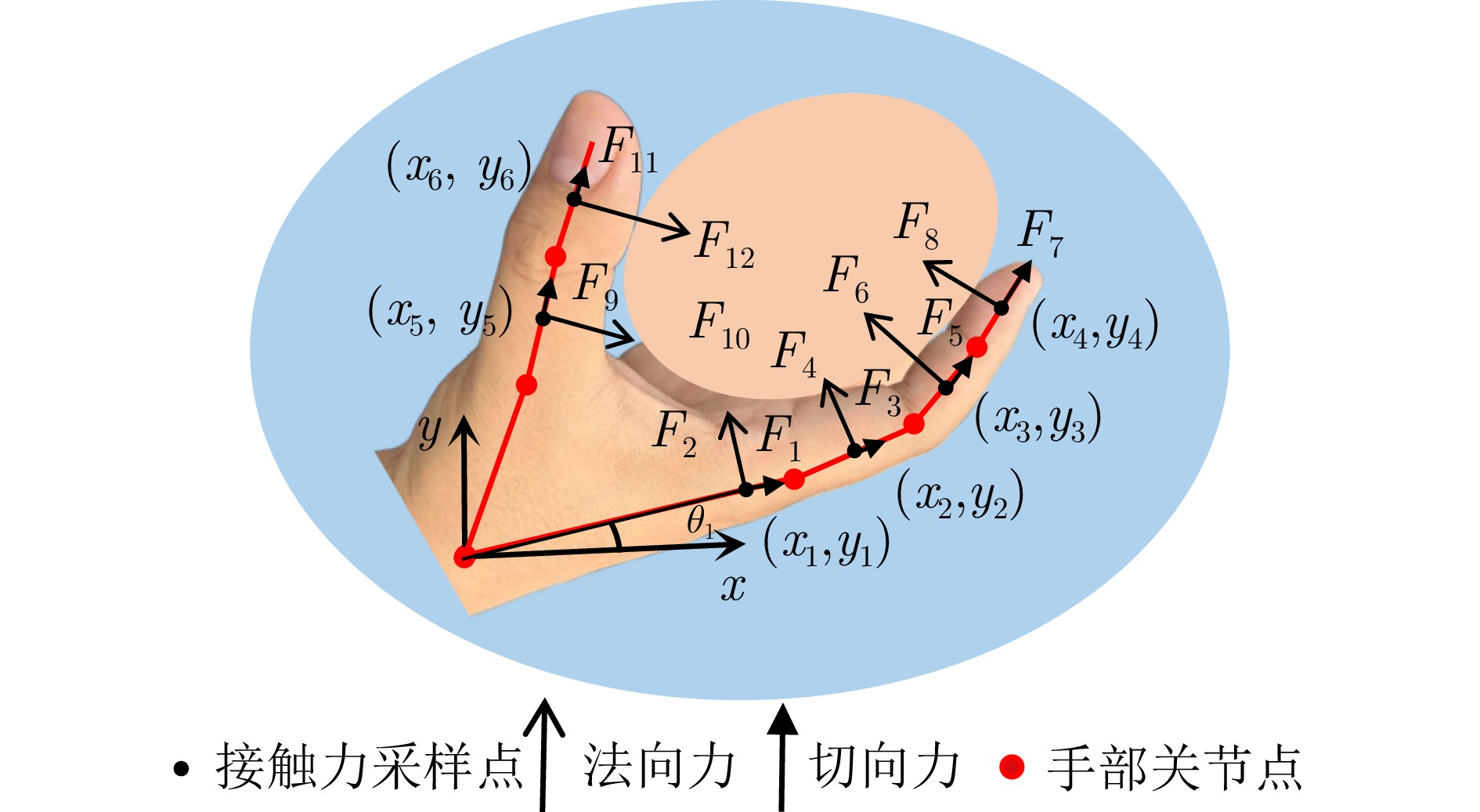

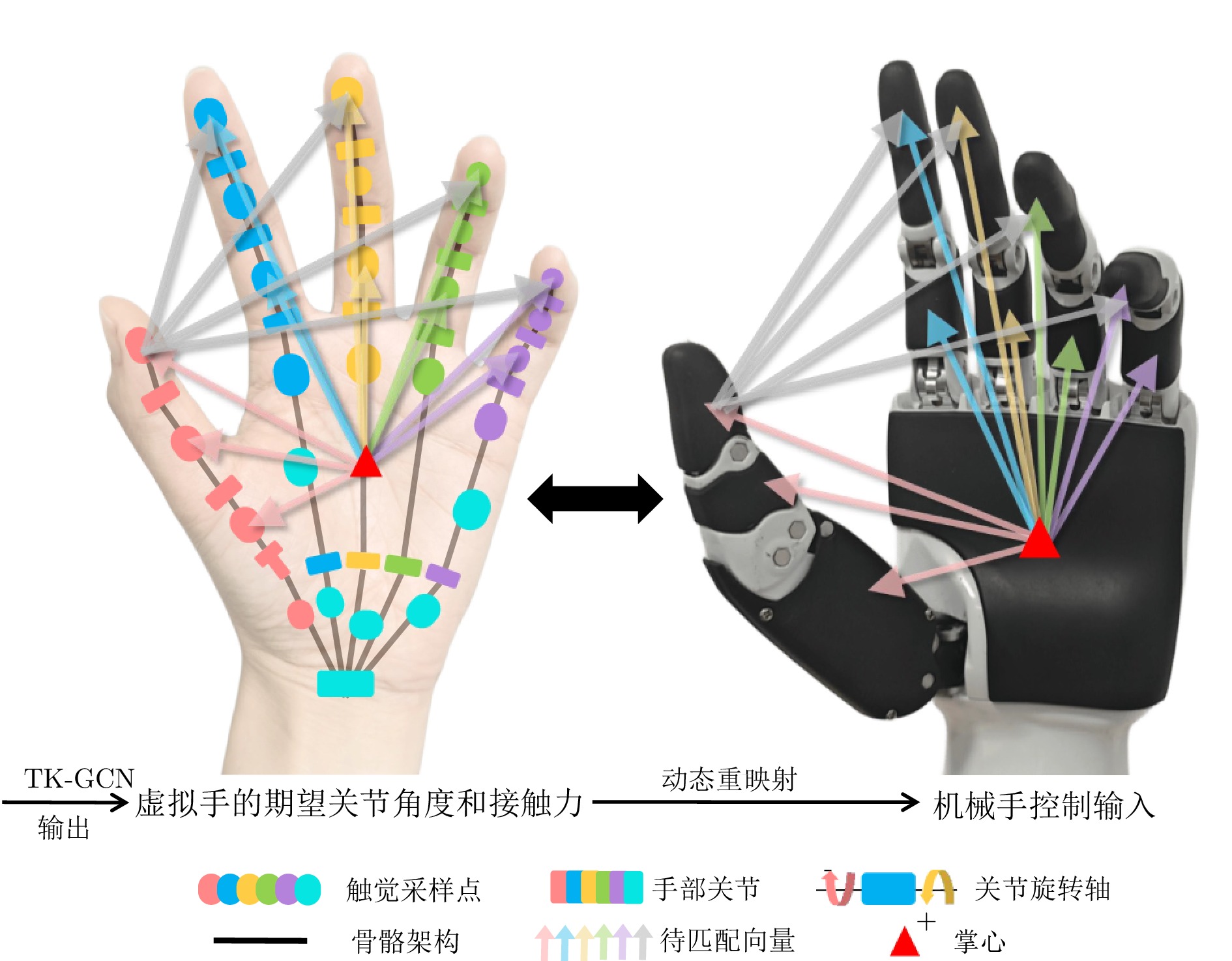

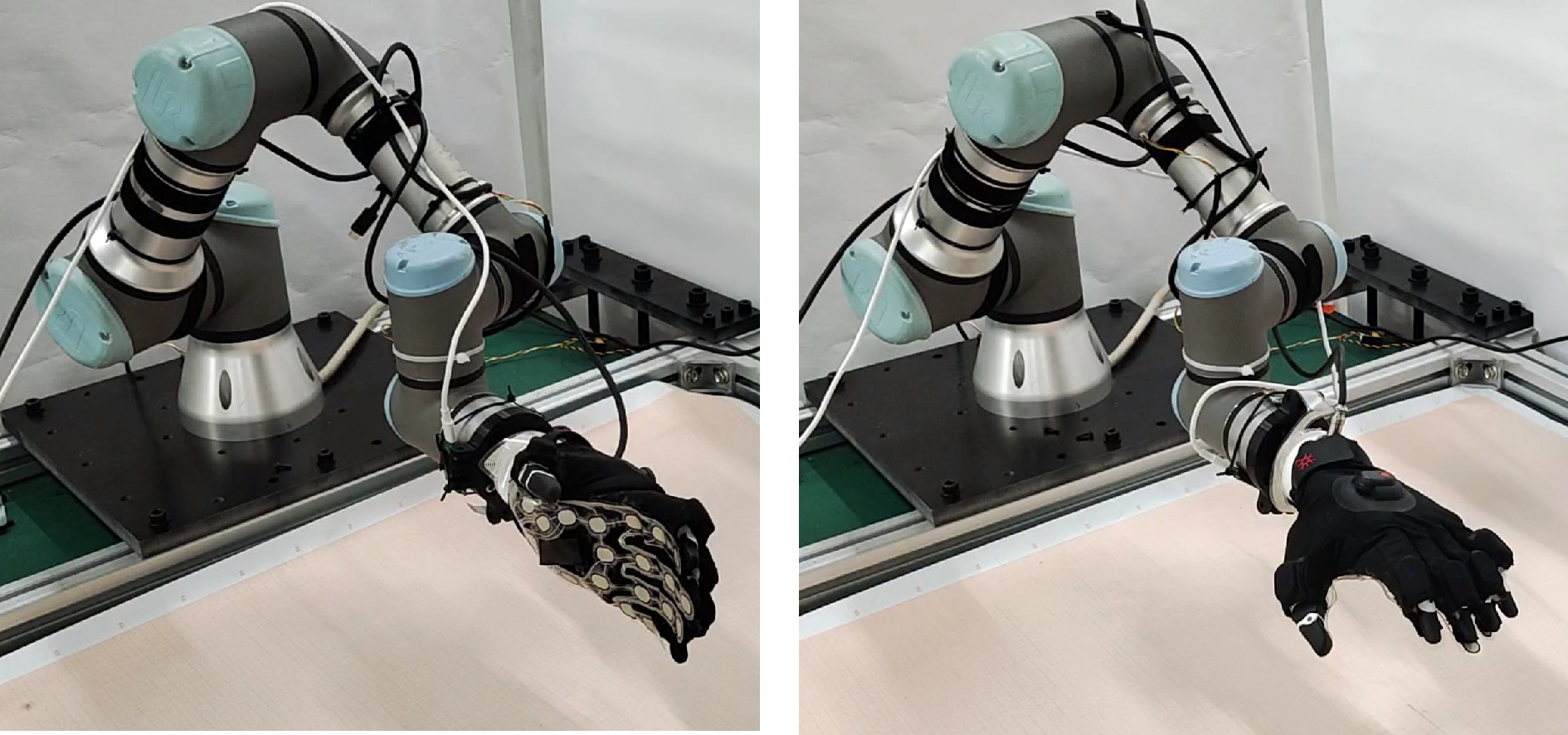

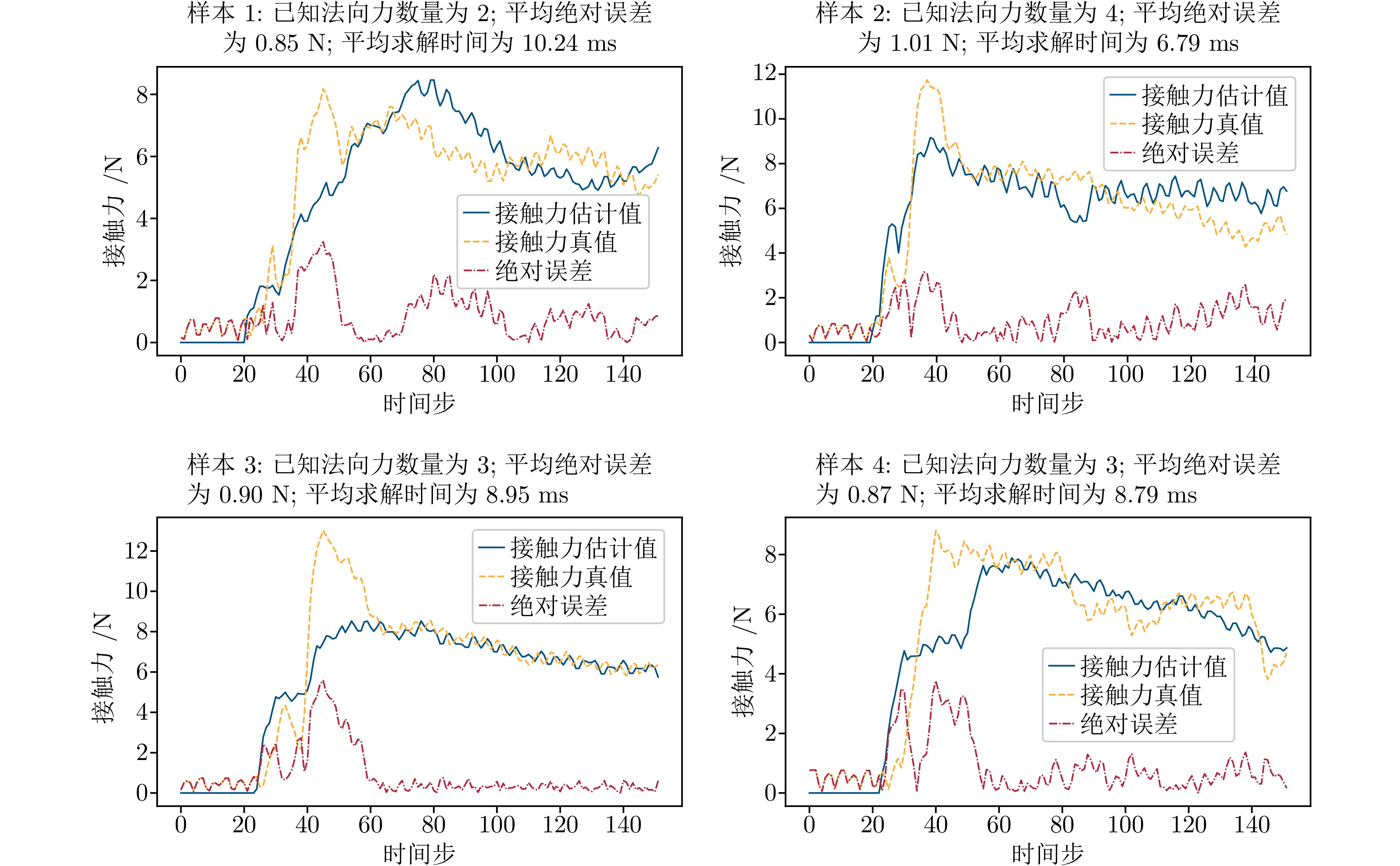

Abstract: Imitation learning is an effective way to transfer manipulation skills from human hands to robotic hands. However, traditional demonstration methods suffer from non-intuitive demonstration, poor reusability of demonstration data, and difficulty in transferring tactile and kinesthetic perception features. To address these problems, this paper designs an integrated data glove that collects tactile and kinesthetic features simultaneously, and proposes a grasping skill transfer scheme that uses the glove as its medium. The scheme comprises a multimodal feature representation based on graph structures and polar coordinates, estimation of unknown contact forces under a static force equilibrium assumption, and a dynamic remapping method based on desired joint angles and contact force distributions. Experimental results show that, for objects with diverse properties such as deformable materials and irregular shapes, the proposed scheme achieves a high grasping success rate while maintaining reasonable contact force control, and that its results are closer to direct human grasping than those of the baseline schemes.


Key words: data glove, imitation learning, tactile perception, robotic hands, grasping

1) https://www.noitom.com.cn/perception-neuron-3-pro.html/

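Table 1 Properties of grasped objects

| Label | Mass (g) | Hardness | Shape (dimensions, mm) | Material | Elastic modulus (GPa) | Grasp type |
| --- | --- | --- | --- | --- | --- | --- |
| bottleS | 201.1 | > 90 HA | Cylinder (65, 194) | Stainless steel | 196.000 | Side grasp |
| nailong | 48.6 | 15 HC | Near-cylinder (70, 115) | TPE | 0.204 | Side grasp |
| pitaya | 185.5 | 32 HC | Sphere (83) | TPE | 0.204 | Top-down |
| carambola | 18.7 | 39 ~ 50 HA | Near-cylinder (66, 103) | MDPE | 0.648 | Top-down |
| football | 10.7 | 16 HC | Sphere (58) | TPE | 0.204 | Top-down |
| pineapple | 26.9 | 75 ~ 93 HA | Near-cylinder (70, 133) | MDPE | 0.648 | Side grasp |
| jar | 49.1 | > 90 HA | Cylinder (68, 118) | PET | 3.250 | Side grasp |
| citrus | 167.4 | 31 HC | Sphere (78) | TPE | 0.204 | Side grasp |
| pomegrante | 133.8 | 32 HC | Sphere (74) | TPE | 0.204 | Top-down |
| bottleP | 103.9 | > 90 HA | Cylinder (60, 228) | PC | 2.600 | Side grasp |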

Table 2 Evaluation results of ablation experiments

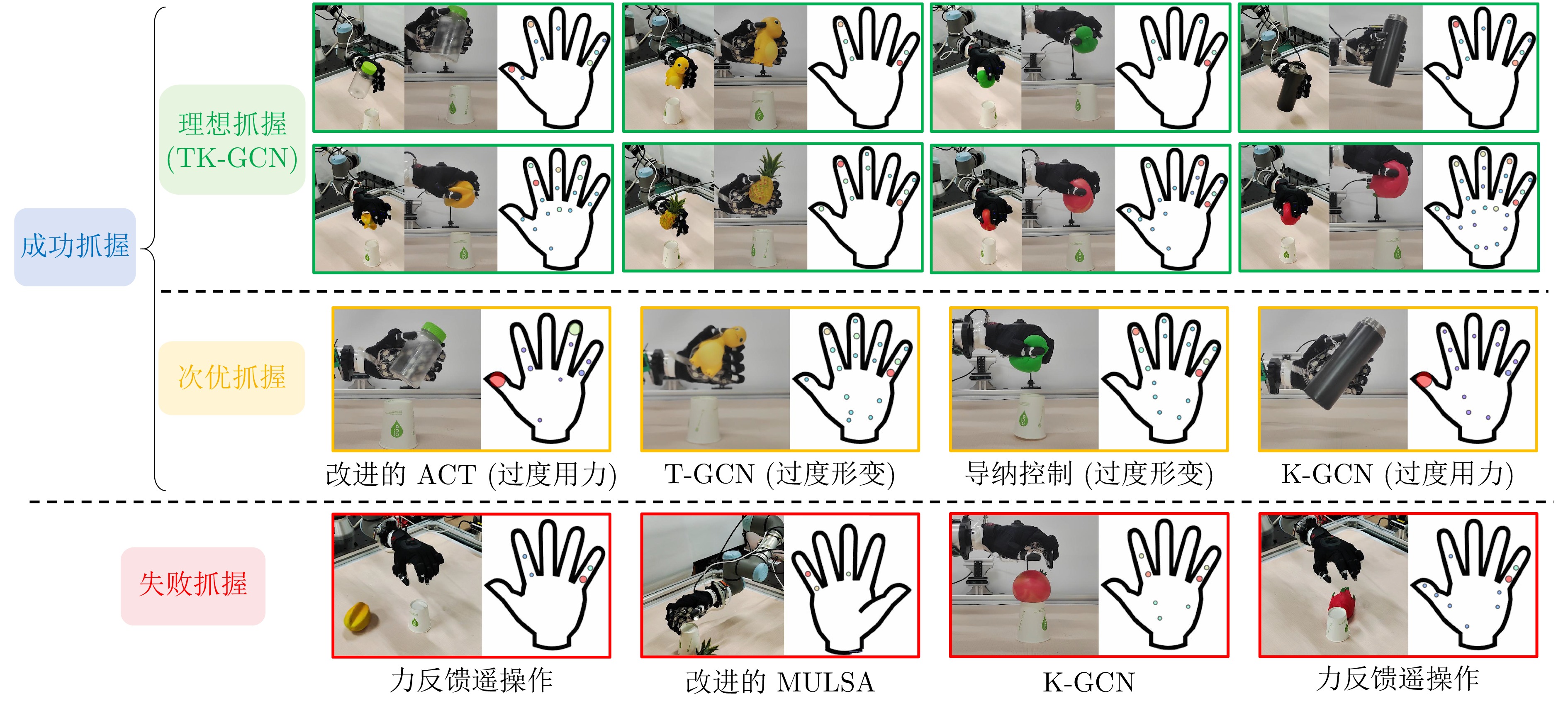

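| Scheme | Success rate (%) $\uparrow$ | FEM-AT (N) $\downarrow$ | FEM-KE (N) $\downarrow$ | FEM-MAE (N) $\downarrow$ | S-Time (s) $\downarrow$ | P-Time (ms) $\downarrow$ |
| --- | --- | --- | --- | --- | --- | --- |
| T-GCN + hybrid force-position mapping | 73.33 | 285.27 ± 79.48 | 2.68 | 19.32 | 2.51 | 1.32 |
| K-GCN + hybrid force-position mapping | 77.33 | 323.83 ± 81.06 | 2.91 | 19.32 | 3.13 | 1.95 |
| TK-GCN + hybrid force-position mapping | 88.00 | 267.81 ± 68.27 | 1.73 | 8.29 | 3.31 | 2.83 |
| TK-GCN + unknown contact force estimation + hybrid force-position mapping | 88.67 | 245.78 ± 61.42 | 1.70 | 8.32 | 3.26 | 7.63 |
| TK-GCN + unknown contact force estimation + dynamic remapping | 90.67 | 243.13 ± 62.47 | 1.72 | 8.27 | 3.54 | 11.96 |

Note: $\uparrow$ indicates that higher values are better; $\downarrow$ indicates that lower values are better.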

Table 3 Evaluation results of different approaches

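| Method | Success rate (%) $\uparrow$ | FEM-AT (N) $\downarrow$ | FEM-KE (N) $\downarrow$ | FEM-MAE (N) $\downarrow$ | S-Time (s) $\downarrow$ | P-Time (ms) $\downarrow$ |
| --- | --- | --- | --- | --- | --- | --- |
| Force-feedback teleoperation | 70.00 | 351.31 ± 109.09 | 1.92 | 19.32 | 5.18 | - |
| Admittance control (70%) | 73.33 | 476.91 ± 113.81 | 4.67 | 19.33 | 1.97 | - |
| Admittance control (80%) | 86.00 | 563.51 ± 139.21 | 5.02 | 19.33 | 2.05 | - |
| Admittance control (90%) | 86.67 | 775.47 ± 151.65 | 7.13 | 19.33 | 2.39 | - |
| Modified ACT | 70.00 | 356.68 ± 88.60 | 3.52 | 19.32 | 7.11 | 16.53 |
| Modified MULSA | 50.67 | 302.51 ± 48.74 | 2.22 | 8.65 | 6.37 | 31.94 |
| TK-GCN (proposed method) | 90.67 | 243.13 ± 62.47 | 1.72 | 8.27 | 3.54 | 11.96 |
| Human hand grasping | 100.00 | 268.64 ± 50.26 | 1.44 | 6.28 | 2.07 | - |

Note: $\uparrow$ indicates that higher values are better; $\downarrow$ indicates that lower values are better.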
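One way to read the "closer to human grasping" comparison in Table 3 is to measure how far each method's force metrics lie from the human-hand row. The sketch below does this with a mean relative gap over FEM-AT (mean value only), FEM-KE and FEM-MAE. This aggregate and the names `mean_relative_gap`, `HUMAN` and `METHODS` are assumptions made for illustration; they are not a metric defined in the paper. The numbers are copied from Table 3, with method names given in English.

```python
# Values copied from Table 3: (FEM-AT mean, FEM-KE, FEM-MAE), all in newtons.
HUMAN = (268.64, 1.44, 6.28)
METHODS = {
    "Force-feedback teleoperation": (351.31, 1.92, 19.32),
    "Admittance control (70%)": (476.91, 4.67, 19.33),
    "Admittance control (80%)": (563.51, 5.02, 19.33),
    "Admittance control (90%)": (775.47, 7.13, 19.33),
    "Modified ACT": (356.68, 3.52, 19.32),
    "Modified MULSA": (302.51, 2.22, 8.65),
    "TK-GCN": (243.13, 1.72, 8.27),
}


def mean_relative_gap(values, reference=HUMAN):
    """Average of |value - reference| / reference over the three force metrics.

    Hypothetical aggregate used only for this illustration.
    """
    gaps = [abs(v - r) / r for v, r in zip(values, reference)]
    return sum(gaps) / len(gaps)


gaps = {name: mean_relative_gap(vals) for name, vals in METHODS.items()}
closest = min(gaps, key=gaps.get)

# Print methods from smallest to largest gap relative to human grasping.
for name, gap in sorted(gaps.items(), key=lambda kv: kv[1]):
    print(f"{name:30s} {gap:.3f}")
print("Closest to human grasping:", closest)
```

Under this particular aggregate, TK-GCN has the smallest gap to the human-hand row. A different weighting of the metrics, or including the FEM-AT standard deviation, could change the individual gap values.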

