-

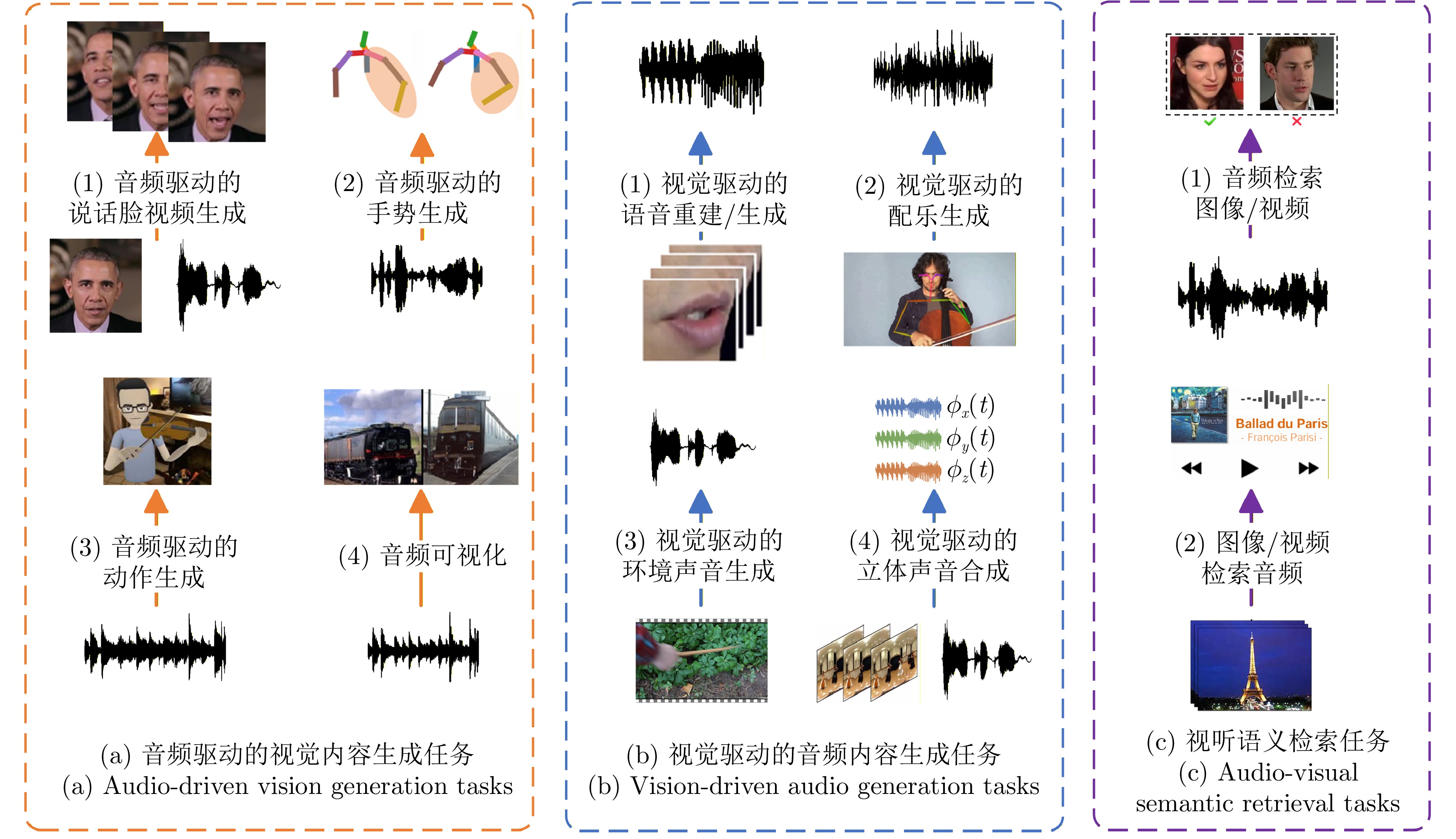

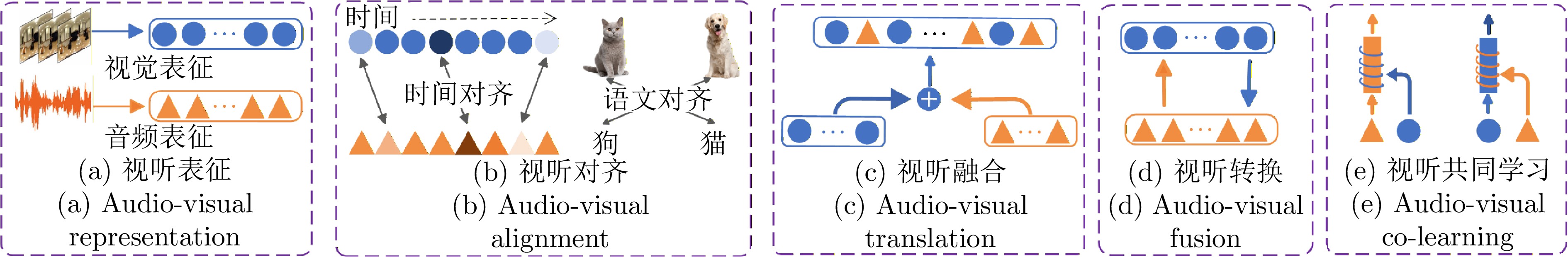

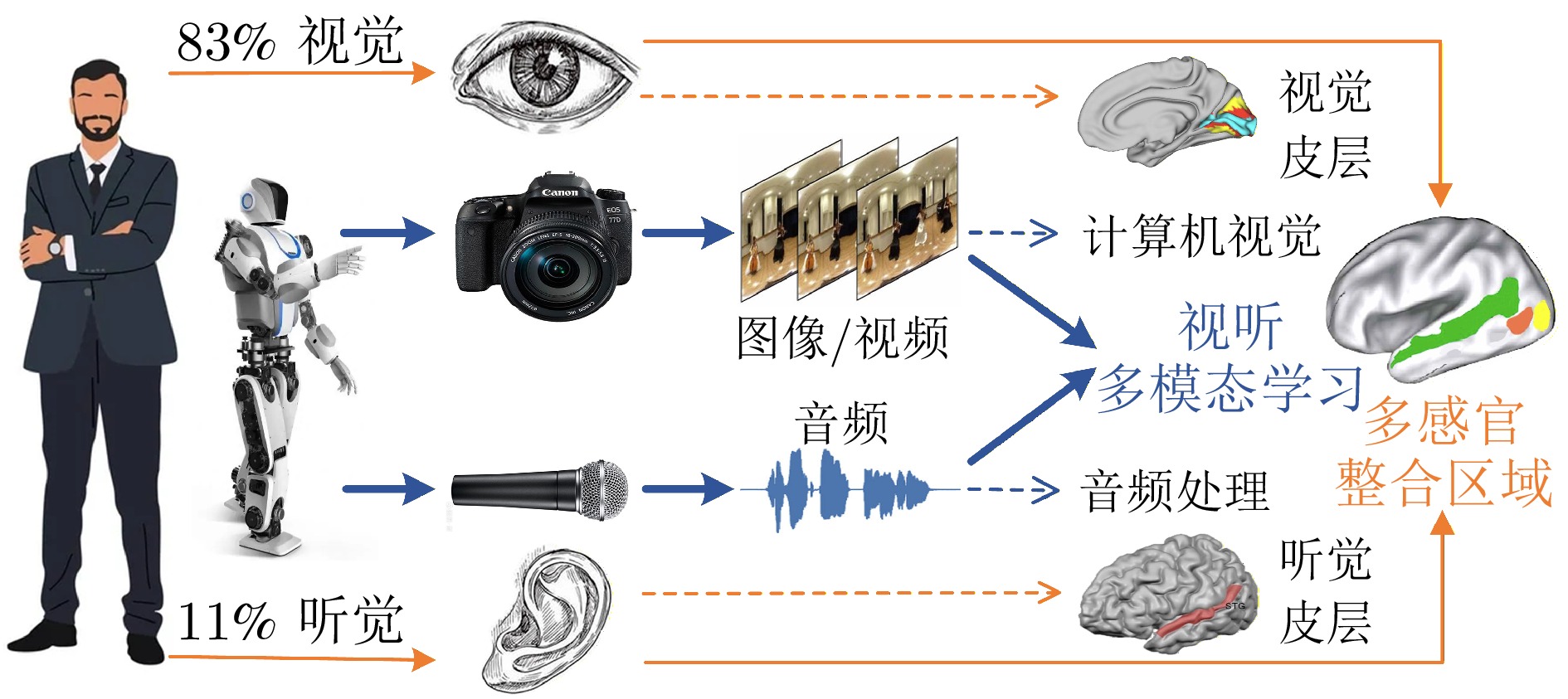

摘要: 在人类信息获取过程中, 视听觉扮演着重要角色, 大脑通过整合视听信息, 形成统一、连贯且稳定的知觉体验. 视听多模态学习旨在模拟人类的视听多感官整合能力, 近年来受到研究者的广泛关注. 然而, 该领域在应用场景、任务目标和技术方法上呈现出显著的多样性, 目前尚且缺乏对视听多模态学习领域系统性回顾和分析的综合性中文综述. 基于人类的多感官整合机制在视听认知中的重要性以及不同视听多模态学习任务间的内在关联性, 提出一个统一框架, 将现有研究归纳为三类: 视听增强通过引入音频或视觉信息实现对初始单模态任务的增强效应; 跨模态交互旨在探索视听信息间的相互转换; 视听协作致力于探索视听信息的综合理解方法及其协同效应. 在此基础上, 对该领域中的最新研究进展进行系统性综述和总结. 此外, 深入剖析当前视听多模态学习研究所面临的五大核心共性问题和挑战---视听表征、对齐、转换、融合和共同学习; 并探讨大模型背景下视听多模态学习的发展现状.Abstract: In the process of human information acquisition, audio-visual plays crucial roles, with the brain integrating audio-visual information to form a unified, coherent, and stable perceptual experience. Audio-visual Multi-modal Learning (AVMML) aims to simulate human capacity for audio-visual multi-sensory integration, which has garnered significant attention from researchers in recent years. However, this field exhibits significant diversity in application scenarios, task objectives and technical methodologies. Currently, there is a lack of a comprehensive Chinese survey that systematically reviews and analyzes the field of AVMML. In this paper, we proposes a unified framework based on the importance of human multi-sensory integration mechanisms in audio-visual cognition and the intrinsic relationships among different AVMML tasks. Under our framework, existing AVMML researches can be categorized into three main types: Audio-visual enhancement, which improves the performance of initial uni-modal tasks by incorporating audio or visual information; Cross-modal interaction, which explores the mutual translation between audio and visual information; Audio-visual collaboration, which investigate comprehensive understanding methods and synergistic effects of audio-visual information. Building on this, this paper systematically reviews and summarizes the latest research progress in the AVMML field. Additionally, we provides an in-depth analysis of five core issues and challenges faced by current AVMML research, covering audio-visual representation, alignment, translation, fusion, and co-learning; We also discusses the development state of AVMML in the context of large models.

-

表 1 视/听单模态学习与视听多模态学习

Table 1 Audio/vision uni-modal learning and audio-visual multi-modal learning

数据处理能力 知识迁移能力 噪声鲁棒能力 计算机视觉/音频处理(CV/AP) 单模态学习 仅能处理图像、视频、

音频单一模态数据知识只能从一种模态中

学习并应用在该模态中容易受到模态自身数据

噪声的影响视听多模态学习 多模态学习 能同时处理图像、视频、

音频多模态数据知识可以从一种模态获取,

也能应用于不同模态各模态数据噪声互不影响,

且信息可相互补充表 2 视听学习任务涉及的核心问题/挑战

Table 2 Core issues/challenges involved in audio-visual learning tasks

分类 子类 视听表征 视听转换 视听对齐 视听融合 视听共同学习 视听增强学习 音频增强的视觉任务 $ \surd $ $ \surd $ $ \surd $ 视觉增强的音频任务 $ \surd $ $ \surd $ $ \surd $ 视听跨模态学习 视听生成任务 $ \surd $ $ \surd $ $ \surd $ 视听检索任务 $ \surd $ $ \surd $ $ \surd $ 视听协作学习 视听实例感知任务 $ \surd $ $ \surd $ $ \surd $ 视听场景理解任务 $ \surd $ $ \surd $ $ \surd $ 视听推理与交互任务 $ \surd $ $ \surd $ $ \surd $ 非传统的视听学习任务 $ \surd $ $ \surd $ $ \surd $ $ \surd $ -