-

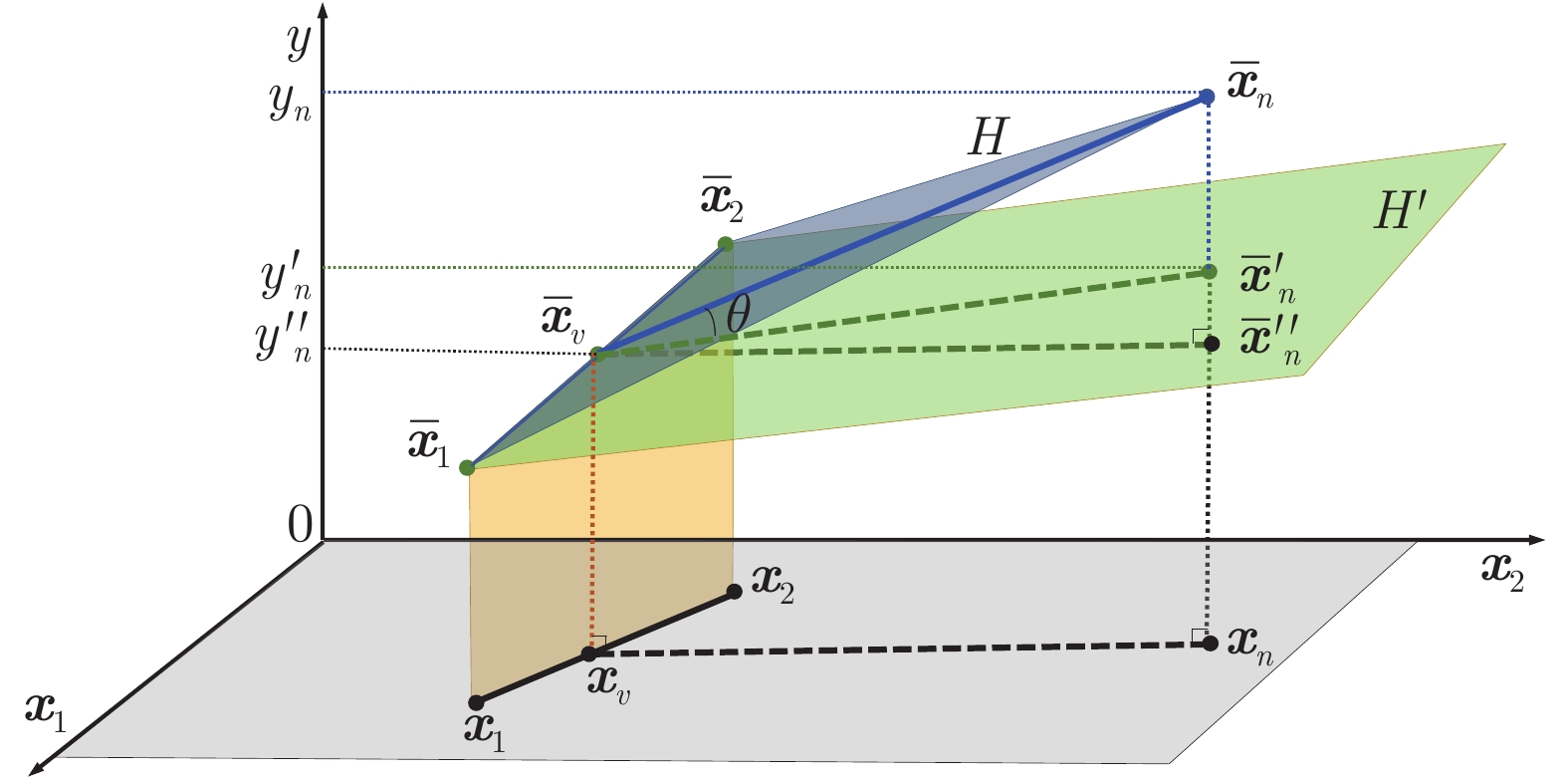

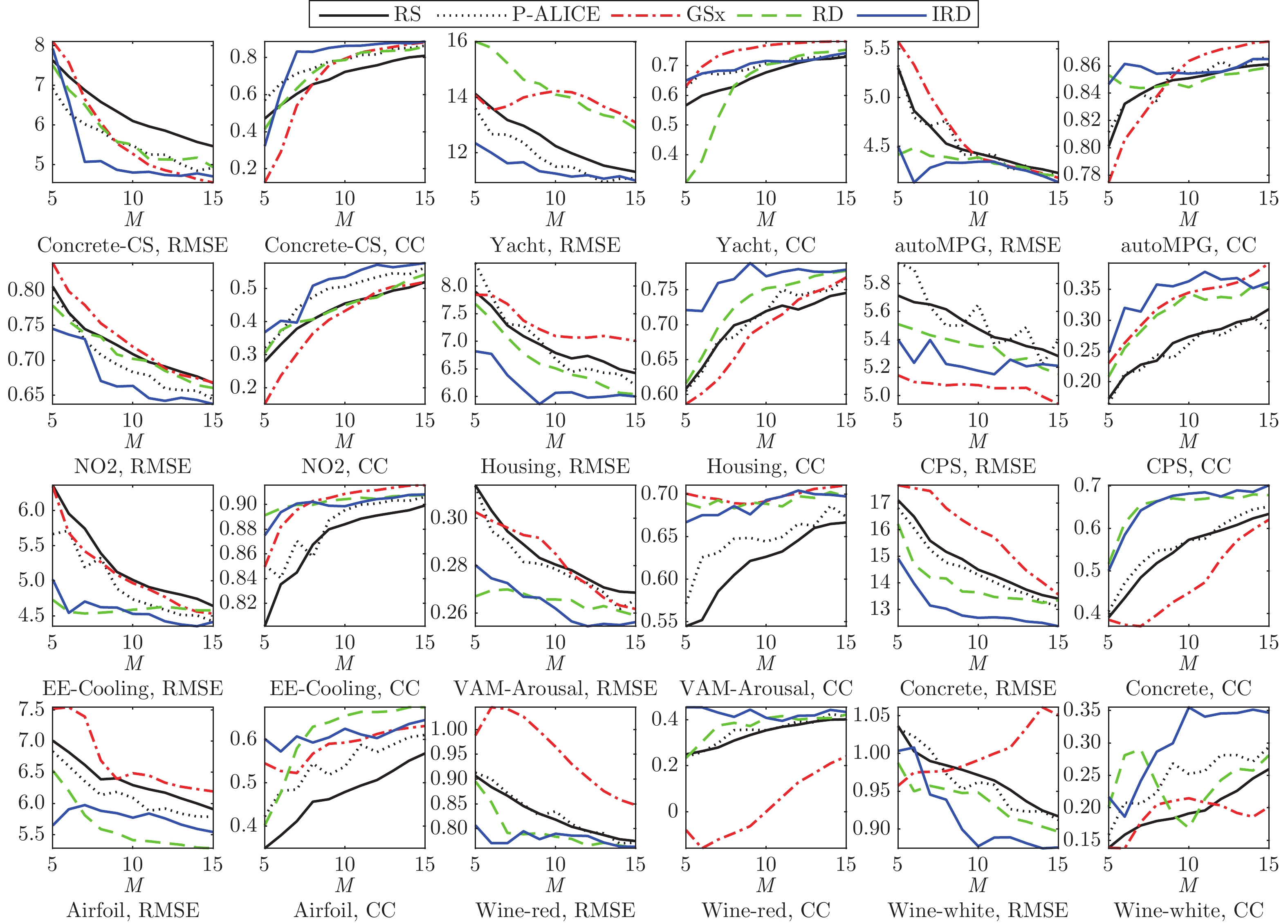

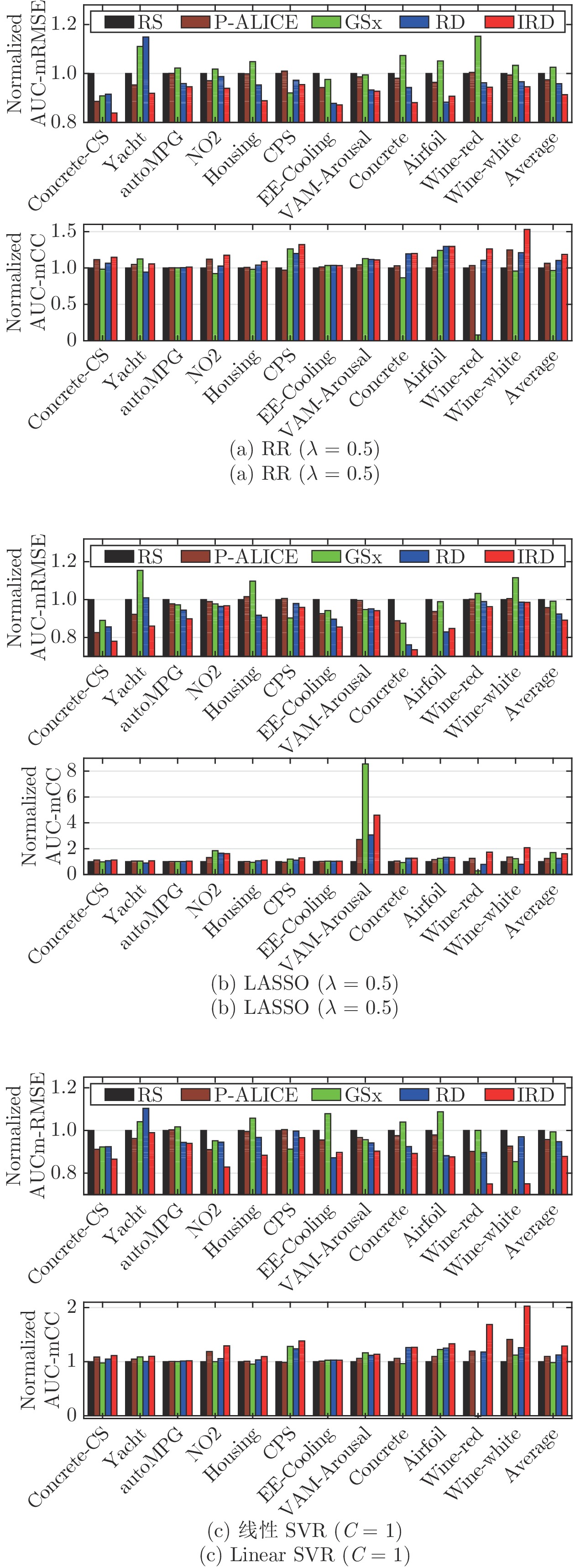

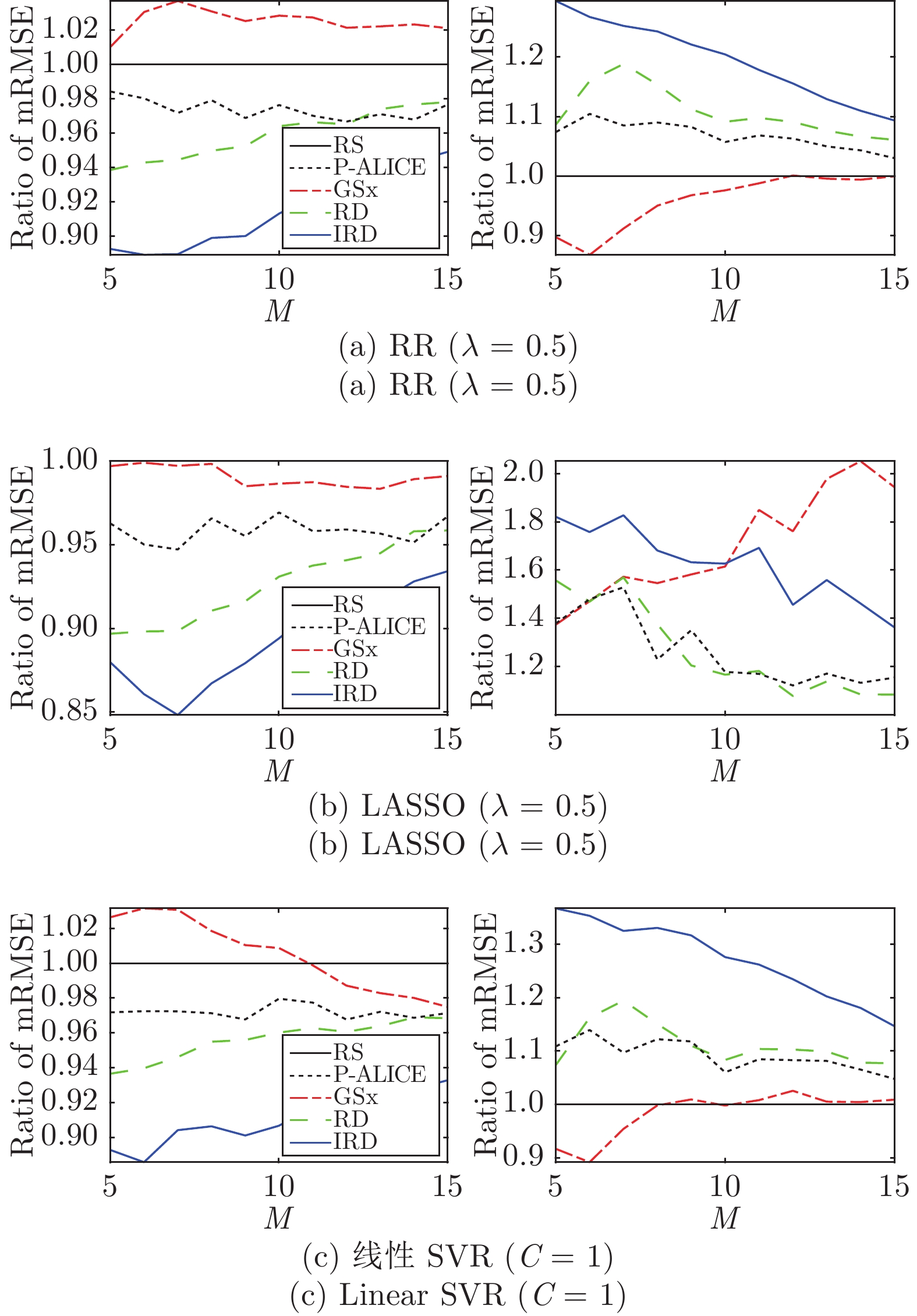

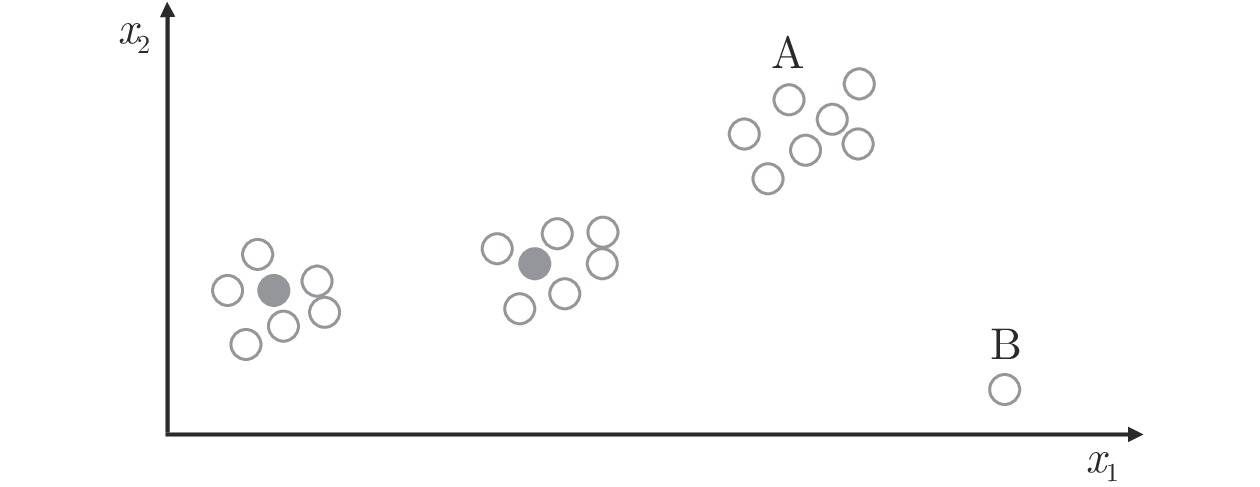

摘要: 在许多现实的机器学习应用场景中, 获取大量未标注的数据是很容易的, 但标注过程需要花费大量的时间和经济成本. 因此, 在这种情况下, 需要选择一些最有价值的样本进行标注, 从而只利用较少的标注数据就能训练出较好的机器学习模型. 目前, 主动学习(Active learning)已广泛应用于解决这种场景下的问题. 但是, 大多数现有的主动学习方法都是基于有监督场景: 能够从少量带标签的样本中训练初始模型, 基于模型查询新的样本, 然后迭代更新模型. 无监督情况下的主动学习却很少有人考虑, 即在不知道任何标签信息的情况下最佳地选择要标注的初始训练样本. 这种场景下, 主动学习问题变得更加困难, 因为无法利用任何标签信息. 针对这一场景, 本文研究了基于池的无监督线性回归问题, 提出了一种新的主动学习方法, 该方法同时考虑了信息性、代表性和多样性这三个标准. 本文在3个不同的线性回归模型(岭回归、LASSO (Least absolute shrinkage and selection operator)和线性支持向量回归)和来自不同应用领域的12个数据集上进行了广泛的实验, 验证了其有效性.Abstract: In many real-world machine learning applications, unlabeled data can be easily obtained, but it is very time-consuming and/or expensive to label them. So, it is desirable to be able to select the optimal samples to label, so that a good machine learning model can be trained from a minimum number of labeled data. Active learning (AL) has been widely used for this purpose. However, most existing AL approaches are supervised: they train an initial model from a small number of labeled samples, query new samples based on the model, and then update the model iteratively. Few of them have considered the completely unsupervised AL problem, i.e., starting from zero, how to optimally select the very first few samples to label, without knowing any label information at all. This problem is very challenging, as no label information can be utilized. This paper studies unsupervised pool-based AL for linear regression problems. We propose a novel AL approach that considers simultaneously the informativeness, representativeness, and diversity, three essential criteria in AL. Extensive experiments on 12 datasets from various application domains, using three different linear regression models (ridge regression, LASSO (least absolute shrinkage and selection operator), and linear support vector regression), demonstrated the effectiveness of our proposed approach.

-

多智能体系统控制在近些年发展迅速, 广泛应用于无人机编队、传感器网络、机械臂装配、多导弹联合攻击等领域. 一致性作为协同控制的基础, 成为多智能体系统研究中的核心问题. 近年来, 学者们针对多智能体系统不同类型的一致性问题进行了大量研究, 如完全一致性[1-4]、领导-跟随一致性[5]、群体一致性[6]、比例一致性[7-9]等. 以上一致性成果主要集中在设计控制器使智能体状态误差最终趋于零. 但是在实际系统中, 由于执行器偏差、计算误差和恶劣环境, 智能体系统会存在通信约束(如通信时滞[10-11]、数据量化[12]等)、外部干扰、未知耦合等情况, 若仍要求每个智能体的真实运动状态之间的偏差趋于零, 这在有限条件下往往难以实现. 因此, Dong等[13]提出使智能体状态偏差函数在某一确定有界区间内波动的实用一致性概念, 可适用于更为复杂的实际系统.

为解决不同非理想网络环境中的一致性问题, 学者们对基于实用一致性概念的多智能体控制算法进行了深度研究[13-17]. 文献[13]研究了有向通信拓扑下具有外部扰动、相互作用不确定和时变时滞的一般高阶线性时不变群系统实用一致性问题. 在实际系统中, 当采样周期变大时, 若要使智能体状态误差趋于零, 初始状态就须非常接近, 显然限制了系统的有效性. 基于文献[13], 文献[14]进一步探讨了二阶多智能体系统齐次采样下的实用一致性. 为解决初始控制输入量过大, 出现输入饱和而导致削弱系统性能的问题, 文献[15]利用时基发生器, 提出多智能体系统的固定时间实用一致性框架. 具有振荡器的系统, 也难以实现智能体状态误差最终趋于零, 因此文献[16]研究了异质网络上非线性非均匀Stuart-Landa振子的实用动态一致性. 文献[17]研究了带未知耦合权重的领导-跟随多智能体系统的实用一致性. 以上已有的实用一致性研究只考虑非理想网络环境中智能体之间的合作关系, 即用非负权重的通信拓扑来表示. 在许多实际系统中, 合作与竞争关系同时存在.

文献[18]率先设通信拓扑权重为负以表示智能体间的竞争关系, 提出结构平衡图假设, 利用拉普拉斯算子证明了智能体系统能实现二分一致. 随后该结论被推广至更一般的线性多智能体系统[19-20]. 文献[21]研究了切换拓扑下多智能体系统的二分一致性, 建立了智能体稳态与拉普拉斯矩阵间的联系. 文献[22]引入正负生成树概念, 得出矩阵加权网络实现二分一致的充要条件, 但只适用于结构平衡图. 对于结构不平衡下的矩阵加权网络, 文献[23]通过矩阵耦合, 得出了实现二分一致的代数条件. 文献[24]针对含有对抗关系和时变拓扑的耦合离散系统, 考虑了拓扑切换后出现结构不平衡或结构平衡的两个子系统成员随时间变化的情况, 实现了有界双向同步. 上述二分一致性的研究大部分考虑智能体间的误差最终能趋于零, 本文将着重考虑受到通信时延、数据量化影响下智能体间的误差收敛于可控区间的二分实用一致性.

量化一致性概念最早由文献[25]提出, 随后文献[26]基于矩阵谱理论分别研究了通讯信息在一致量化和对数量化下多智能体系统的一致性问题. 文献[27]构建了基于磁滞效应量化的多智能体网络的混杂系统模型, 该混杂系统能够有效避免震颤现象, 并进一步分析了系统解的有限时间收敛性. 文献[28]考虑竞争关系和通信量化下多智能体系统的二分一致性. 文献[29]研究了具有量化通信约束的非线性多智能体系统分布式二分一致性问题. 以上论文对通信量化做了大量研究, 但均未考虑通信时滞, 而时滞也是影响多智能体系统一致性的重要因素.

综合考虑上述因素, 本文将以带有通信时滞和量化数据等通信约束且同时存在合作、竞争关系的实际系统为对象, 研究其二分实用一致性问题, 提出了基于融合时滞项、取整函数、符号函数的量化器的右端不连续控制协议. 根据微分包含理论和菲利波夫解的框架证明了控制器在右端不连续情况下仍能求得系统的全局解, 实现智能体位置状态收敛至模相同但符号不同的可控区间. 相较于文献[17], 本文同时考虑了智能体间的合作与竞争关系. 相较于文献[18, 20], 本文考虑了通信时滞、量化数据等通信约束对智能体系统的影响. 在文献[25, 28]的基础上, 本文将渐近一致性推广至实用一致性, 使智能体间的误差收敛于一个可控区间, 且收敛上界值与任何全局信息和初始值无关.

1. 预备知识及问题描述

1.1 图论

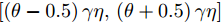

由

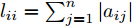

$n$ 个智能体组成的系统通信拓扑图可由有向图$G = \left( {V,E,{A}} \right)$ 描述, 其中$V = \left\{ {1,2, \cdots ,n} \right\}$ 为点集,$E \subseteq V \times V$ 为边集, 有向边${e_{ij}} = (i,j) \in E$ 表示智能体$i$ 向智能体$j$ 传输信息.${A} = [{a_{ij}}] \in {{\bf{R}}^{n \times n}}$ 为通信权重矩阵, 其对角线元素${a_{ii}} = 0$ . 若${a_{ij}} \ne 0$ , 则称智能体$j$ 为智能体$i$ 的邻居. 对任意$i,j$ 均有${a_{ij}} \geq 0$ , 则称图$G$ 为非负图, 否则为符号图. 度矩阵${D} \in {{\bf{R}}^{n \times n}}$ 定义为${D} = {\rm{diag}}\{ {d_1},{d_2}, \cdots ,{d_n}\}$ ,${\rm{diag}}\{ {d_1}, $ $ {d_2}, \cdots ,{d_n} \}$ 表示对角矩阵, 其对角线元素为$\{ {d_1}, $ $ {d_2}, \cdots ,{d_n} \}$ ,${d_i} = \sum\nolimits_{j = 1}^n {\left| {{a_{ij}}} \right|} $ . 拉普拉斯矩阵${L} \in {{\bf{R}}^{n \times n}}$ 定义为${L} = {D} - {A}$ , 其中${l_{ii}} = \sum\nolimits_{j = 1}^n {\left| {{a_{ij}}} \right|} $ , 且${l_{ij}} = - $ $ {a_{ij}}$ ,$\forall i \ne j$ . 若图中任意两个节点, 均存在一条有向路径, 则称图$G$ 为有向强连通图.定义 1[12]. 给定点

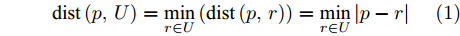

$p \in \bf{R}$ 到给定集合$U \subseteq \bf{R}$ 中的点的最短距离为:$${\rm dist}\left( {p,U} \right) = \mathop {\min }\limits_{r \in U} \left( {{\rm dist}\left( {p,r} \right)} \right) = \mathop {\min }\limits_{r \in U} \left| {p - r} \right|$$ (1) 定义 2[18]. 图

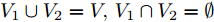

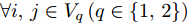

$G$ 为结构平衡图当且仅当节点集$V$ 可分成两个非空集合${V_1}$ 和${V_2}$ , 并满足以下条件:1)

${V_1} \cup {V_2} = V,{V_1} \cap {V_2} = \emptyset $ ;2)若

$\forall i,j \in {V_q}\left( {q \in \left\{ {1,2} \right\}} \right)$ , 则所有权重${a_{ij}} \geq 0$ ;3)若

$\forall i \in {V_q},j \in {V_r},q \ne r\left( {q,r \in \left\{ {1,2} \right\}} \right)$ , 则所有权重${a_{ij}} \leq 0$ .1.2 规范变换

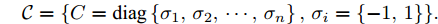

引入一类正交矩阵

$\mathcal{C}$ , 其定义如下:$$\mathcal{C} = \{ {C} = {\rm{diag}}\left\{ {{\sigma _1},{\sigma _2}, \cdots ,{\sigma _n}} \right\}, {\sigma _i} = \{ - 1,1\} \} .$$ 易得,

${C}$ 满足${{C}^{\rm{T}}}{C} = {C}{{C}^{\rm{T}}} = {I}$ , 且${{C}^{ - 1}} = {C}$ .${\rm{diag}}\left\{ {{\sigma _1},{\sigma _2}, \cdots ,{\sigma _n}} \right\}$ 表示对角矩阵, 其对角线元素为$\left\{ {{\sigma _1},{\sigma _2}, \cdots ,{\sigma _n}} \right\}$ . 对于固定无向网络拓扑和零通信时延的多智能体系统, 常采用控制协议${\boldsymbol{\dot x}}(t) = $ $ {\boldsymbol{u}}(t) = - L{\boldsymbol{x}}\left( t \right)$ , 对其规范变换. 令${\boldsymbol{z}} = C{\boldsymbol{x}}$ ,${C} \in \mathcal{C}$ , 由${{C}^{ - 1}} = {C}$ ,${\boldsymbol{x}} = C{\boldsymbol{z}}$ , 则$${\boldsymbol{\dot z}} = - {{L}_{D}}{\boldsymbol{z}}$$ (2) 其中,

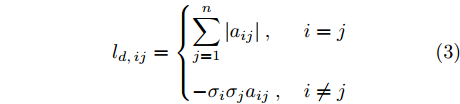

${{L}_{D}} = {CLC} = {D} - {CAC}$ 为规范变换后拉普拉斯矩阵,$${l_{d,ij}} = \left\{ {\begin{aligned} &{\sum\limits_{j = 1}^n {\left| {{a_{ij}}} \right|} }\ ,&{i = j} \\ &{ - {\sigma _i}{\sigma _j}{a_{ij}}}\ , &{i \ne j} \end{aligned}} \right.$$ (3) 引理 1[18].

${L}$ 与${{L}_{D}}$ 等谱, 即具有相同的特征值集合$sp\left( {L} \right) = sp\left( {{{L}_{D}}} \right)$ .1.3 右端不连续微分方程与微分包含Filippov解

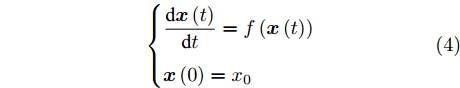

针对右端不连续微分方程, 在微分包含理论和菲利波夫解的框架基础下求全局解.考虑如下

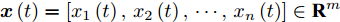

$m$ 维微分方程$$\left\{ \begin{aligned} & \frac{{{\rm{d}}{\boldsymbol{x}}\left( t \right)}}{{{\rm{d}}t}} = f\left( {{\boldsymbol{x}}\left( t \right)} \right) \\ & {\boldsymbol{x}}\left( 0 \right) = {{x}_0} \end{aligned} \right.$$ (4) 其中

${\boldsymbol{x}}\left( t \right) = \left[ {{x_1}\left( t \right),{x_2}\left( t \right), \cdots ,{x_n}\left( t \right)} \right] \in {{\bf{R}}^m}$ ,$f:{\bf{R}} \times $ $ {{\bf{R}}^m} \to {{\bf{R}}^m}$ 为勒贝格可测函数且本性局部有界.如果

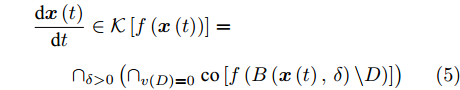

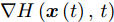

${\boldsymbol{x}}\left( t \right)$ 在$\left[ {0,T} \right)$ 绝对连续, 且满足微分包含$$\begin{split} &\frac{{{\rm{d}}{\boldsymbol{x}}\left( t \right)}}{{{\rm{d}}t}} \in \mathcal{K}\left[ {f\left( {{\boldsymbol{x}}\left( t \right)} \right)} \right] = \\ &\qquad { \cap _{\delta > 0}}\left( {{ \cap _{v\left( D \right) = 0}} \ {\rm co}\left[ {f\left( {B\left( {{\boldsymbol{x}}\left( t \right),\delta } \right)\backslash D} \right)} \right]} \right) \end{split} $$ (5) 其中

$\mathcal{K}$ 是集值函数,${\rm co}\left( \Omega \right)$ 表示集合$\Omega $ 的凸闭包,${ \cap _{v\left( D \right) = 0}}$ 表示所有勒贝格测度为0的集合$D$ 的交集,$B\left( {{\boldsymbol{x}}\left( t \right),\delta } \right)$ 代表以${\boldsymbol{x}}\left( t \right)$ 为中心$\delta $ 为半径的闭球, 则称${\boldsymbol{x}}\left( t \right)$ 为右端不连续微分方程(4)的解, 也称为Filippov解.函数

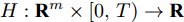

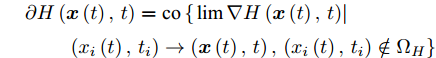

$H:{{\bf{R}}^m} \times \left[ {0,T} \right) \to \bf{R}$ 满足局部李普希茨条件,$H$ 在$\left( {{\boldsymbol{x}}\left( t \right),t} \right)$ 上广义梯度定义为$$\begin{split} &\partial H\left( {{\boldsymbol{x}}\left( t \right),t} \right) = {\rm {co}}\left\{ {\left. {\lim \nabla H\left( {{\boldsymbol{x}}\left( t \right),t} \right)} \right|} \right. \\ &\qquad \left. {\left( {{x_i}\left( t \right),{t_i}} \right) \to \left( {{\boldsymbol{x}}\left( t \right),t} \right),\left( {{x_i}\left( t \right),{t_i}} \right) \notin {\Omega _H}} \right\} \end{split} $$ 其中集合

${\Omega _H}$ 测度为0且$\nabla H\left( {{\boldsymbol{x}}\left( t \right),t} \right)$ 不存在.1.4 问题陈述

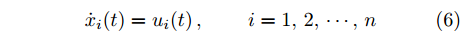

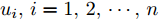

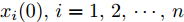

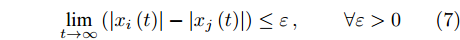

考虑包含

$n$ 个智能体的系统, 智能体$i$ 的动力学方程为$${\dot x_i}(t) = {u_i}(t) \, , \begin{array}{*{20}{c}} {}&{} \end{array}i = 1,2, \cdots ,n $$ (6) 其中

${x_i}(t) \in \bf{R}$ 表示智能体$i$ 的位置状态变量,${u_i}(t) \in $ $ \bf{R}$ 表示智能体$i$ 的控制输入.定义 3. 给定的控制器

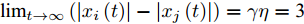

${u_i},i = 1,2, \cdots ,n$ , 若对任意初始值${x_i}(0),i = 1,2, \cdots ,n$ , 都存在一个不依赖任何全局信息和初始值的正数$\varepsilon $ , 使得$$\mathop {\lim }\limits_{t \to \infty } \left( {\left| {{x_i}\left( t \right)} \right| - \left| {{x_j}\left( t \right)} \right|} \right) \leq \varepsilon \, , \begin{array}{*{20}{c}} {}&{} \end{array}\forall \varepsilon > 0$$ (7) 则称系统(6)能实现二分实用一致.

2. 通信受限的多智能体系统二分实用一致性

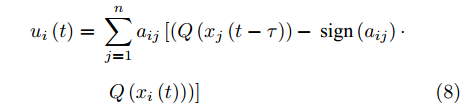

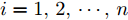

针对多智能体系统(6), 提出如下控制器,

$$\begin{split} {u_i}\left( t \right) = \;& \sum\limits_{j = 1}^n {{a_{ij}}} \left[ {\left( {Q\left( {{x_j}\left( {t - \tau } \right)} \right) - } \right.} \right.{\rm{sign}}\left( {{a_{ij}}} \right) \cdot \\ &\left. {\left. {Q\left( {{x_i}\left( t \right)} \right)} \right)} \right] \end{split} $$ (8) 其中

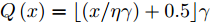

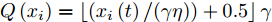

$Q\left( x \right)$ 为量化函数, 定义$Q\left( x \right) = \lfloor \left( {x/\eta \gamma } \right) + 0.5 \rfloor \gamma$ ,$i = 1,2, \cdots ,n$ ,$\gamma $ 为量化水平参数,$\eta \in \left( {0,1} \right]$ 为量化器精度,$\tau $ 为智能体$j$ 向智能体$i$ 通信传输时发生的通信延迟,$\left\lfloor \cdot \right\rfloor $ 为向下取整函数,${\rm{sign}}\left( \cdot \right)$ 为符号函数.本文讨论的是有向强连通符号图下多智能体系统二分实用一致性问题, 系统邻接矩阵中存在负元素, 由式(2)得变换后的邻接矩阵为,

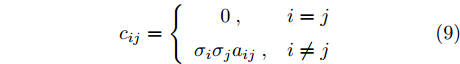

$${c_{ij}} = \left\{ {\begin{array}{*{20}{c}} 0\ ,&{i = j} \\ {{\sigma _i}{\sigma _j}{a_{ij}}}\ ,&{i \ne j} \end{array}} \right.$$ (9) 其中

${\sigma _i},{\sigma _j} = \left\{ { - 1,1} \right\}$ .上述量化器是将连续状态空间映射到离散信息符号码集合, 智能体状态仅取整数, 在控制器(8)作用下, 系统(6)本质上是一个右端不连续的非光滑系统, 其解需要在菲利波夫意义下讨论.

定义 4. 如果函数

${\boldsymbol{x}}\left( t \right):\left[ { - \tau ,T} \right) \to {{\bf{R}}^n}$ 满足:1)

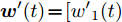

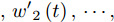

${\boldsymbol{x}}\left( t \right)$ 在$\left[ { - \tau ,T} \right)$ 连续, 在$\left[ {0,T} \right)$ 绝对连续;2)存在可测向量函数

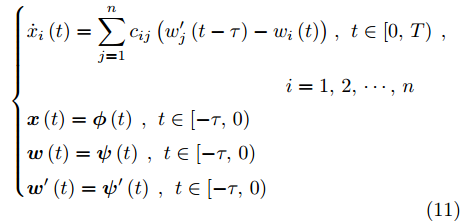

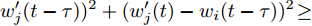

${\boldsymbol{w}}\left( t \right) = \left[ {{w_1}\left( t \right),} \right.$ ${w}_{2}(t),\cdots , $ $ {w}_{n}(t)]: [-\tau , T)\to {\bf{R}}^{n}$ 、${{\boldsymbol{w}}'}( t ) = [ {{{w'}_1}( t )}$ $,{{w}^{\prime }}_{2}\left(t\right),\cdots , $ $ {{w}^{\prime }}_{n}\left(t\right)]: \left[-\tau , T\right)\to {\bf{R}}^{n}$ 使得${\boldsymbol{x}}\left( t \right)$ 在$t \in \left[ {0,T} \right)$ 有$$\frac{{{\rm{d}}{x_i}}}{{{\rm{d}}t}} = \sum\limits_{j = 1}^n {{c_{ij}}\left( {{{w'_j}}\left( {t - \tau } \right) - {w_i}\left( t \right)} \right)}\ , \;\;\; i = 1,2, \cdots ,n$$ (10) 其中

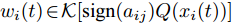

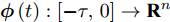

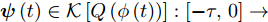

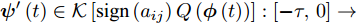

${w_i}( t ) \in \mathcal{K}[ {{\rm{sign}}( {{a_{ij}}} )Q( {{x_i}( t )} )} ]$ 、${w'_i}( t ) \in \mathcal{K}$ $\left[ {Q\left( {{x_i}\left( t \right)} \right)} \right]$ 是关于解${\boldsymbol{x}}\left( t \right)$ 的输出函数, 则称${\boldsymbol{x}}\left( t \right)$ 为系统(6)在$\left[ {0,T} \right)$ 上的Filippov解.定义 5. 给定连续向量函数

${\boldsymbol {\phi}} \left( t \right):\left[ { - \tau ,0} \right] \to {{\bf{R}}^n}$ , 可测向量函数${\boldsymbol {\psi}} \left( t \right) \in \mathcal{K}\left[ {Q\left( {\phi \left( t \right)} \right)} \right]:\left[ { - \tau ,0} \right] \to$ ${{\bf{R}}^n}$ 、${\boldsymbol {\psi}} ' \left( t \right) \in\mathcal{K}\left[ {{\rm{sign}}\left( {{a_{ij}}} \right)Q\left( {{\boldsymbol {\phi}} \left( t \right)} \right)} \right]:\left[ { - \tau ,0} \right] \to$ ${{\bf{R}}^n}$ , 如果$\exists T > 0$ , 使得在$\left[ {0,T} \right)$ 上${\boldsymbol{x}}\left( t \right)$ 为系统(6)的解, 且满足$$\left\{ \begin{aligned} & {{\dot x}_i}\left( t \right) = \sum\limits_{j = 1}^n {{c_{ij}}\left( {{{w'_j}}\left( {t - \tau } \right) - {w_i}\left( t \right)} \right)}\ ,\ t \in \left[ {0,T} \right) \ , \\ &\qquad\qquad\qquad\quad\qquad\qquad\qquad{i = 1,2, \cdots ,n} \\ &{\boldsymbol{x}}\left( t \right) = {\boldsymbol {\phi}} \left( t \right)\ ,\ t \in \left[ { - \tau ,0} \right) \\ &{\boldsymbol{w}}\left( t \right) = {\boldsymbol {\psi}} \left( t \right)\ ,\ t \in \left[ { - \tau ,0} \right) \\ &{{\boldsymbol{w}}'}\left( t \right) = {\boldsymbol {\psi}} '\left( t \right)\ ,\ t \in \left[ { - \tau ,0} \right) \end{aligned} \right.$$ (11) 则

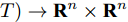

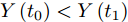

$\left[ {{\boldsymbol{x}}\left( t \right),{\boldsymbol{w}}\left( t \right),{{\boldsymbol{w}}'}\left( t \right)} \right]:\left[ { - \tau ,T} \right) \to {{\bf{R}}^n} \times {{\bf{R}}^n}$ 为系统(6)满足初始条件$\left( {{\boldsymbol {\phi}} \left( t \right),{\boldsymbol {\psi}} \left( t \right),{\boldsymbol {\psi}} '\left( t \right)} \right)$ 的解.定理 1. 对任意初始向量函数

${\boldsymbol {\phi}} \left( t \right)$ 和任意可测向量输出函数${\boldsymbol {\psi}} \left( t \right)$ 、${\boldsymbol {\psi}} '\left( t \right)$ , 系统(11)存在全局解.证明. 1)局部解的存在性

基于参考文献[2]对引理1的证明, 易得系统(11)在

$\left[ {0,T} \right)$ 上存在局部解. 依据泛函微分方程理论, 通过局部解的有界性可得存在全局解. 故进一步证明局部解的有界性.2)设系统(11)的解为

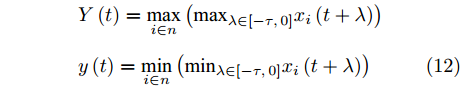

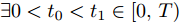

$\left[ {{\boldsymbol{x}}\left( t \right),{\boldsymbol{w}}\left( t \right),{{\boldsymbol{w}}'}\left( t \right)} \right]:$ $[ - \tau , $ $ T ) \to {{\bf{R}}^n} \times {{\bf{R}}^n}$ , 令$$\begin{split} &Y\left( t \right) = {\max _{i \in n}}\left( {{{\max }_{\lambda \in \left[ { - \tau ,0} \right]}}{x_i}\left( {t + \lambda } \right)} \right) \\ &y\left( t \right) = {\min _{i \in n}}\left( {{{\min }_{\lambda \in \left[ { - \tau ,0} \right]}}{x_i}\left( {t + \lambda } \right)} \right) \end{split} $$ (12) 若能证明

$Y\left( t \right)$ 对$t$ 为非增函数,$y\left( t \right)$ 对$t$ 为非减函数, 则上述解有界. 我们将利用反证法证明该解的有界性. 首先分四步证明$Y\left( t \right)$ 对$t$ 为非增函数.假设

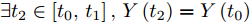

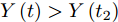

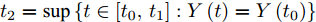

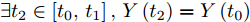

$Y\left( t \right)$ 是增函数,$\exists 0 < {t_0} < {t_1} \in \left[ {0,T} \right)$ , 使得$Y\left( {{t_0}} \right) < Y\left( {{t_1}} \right)$ .第1步: 证明

$\exists {t_2} \in \left[ {{t_0},{t_1}} \right],Y\left( {{t_2}} \right) = Y\left( {{t_0}} \right)$ , 且有$\forall t \in \left( {{t_2},{t_1}} \right]$ ,$Y\left( t \right) > Y\left( {{t_2}} \right)$ .令

${t_2} = \sup \left\{ {t \in \left[ {{t_0},{t_1}} \right]:Y\left( t \right) = Y\left( {{t_0}} \right)} \right\}$ , 则有$Y\left( {{t_2}} \right) = $ $ Y\left( {{t_0}} \right)$ .假设

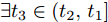

$\exists {t_3} \in \left( {{t_2},{t_1}} \right]$ ,$Y\left( {{t_3}} \right) \leq Y\left( {{t_2}} \right)$ .$Y\left( t \right)$ 为增函数,$Y\left( {{t_1}} \right) > Y\left( {{t_0}} \right)$ , 由介值定理,$\exists {t_4} \in \left( {{t_3},{t_1}} \right] \subseteq \left( {{t_2},{t_1}} \right]$ , 使得$Y\left( {{t_4}} \right) = Y\left( {{t_0}} \right)$ .这与

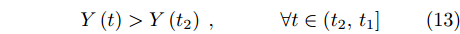

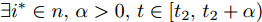

${t_2}$ 定义相矛盾, 上述假设不成立. 故有,$\exists {t_2} \in \left[ {{t_0},{t_1}} \right],Y\left( {{t_2}} \right) = Y\left( {{t_0}} \right)$ , 且有$$Y\left( t \right) > Y\left( {{t_2}} \right)\ ,\begin{array}{*{20}{c}} {}&{}&{} \end{array}\forall t \in \left( {{t_2},{t_1}} \right]$$ (13) 第2步: 推导

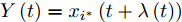

$\exists {i^*} \in n,\alpha > 0,t \in \left[ {{t_2},{t_2} + \alpha } \right)$ , 有$Y\left( t \right) = {x_{{i^*}}}\left( {t + \lambda \left( t \right)} \right)$ ,$\lambda \left( t \right) \in \left[ { - \tau ,0} \right]$ . 令${Y'_i}\left( t \right) = $ $ {\max _{\lambda \in \left[ { - \tau ,0} \right]}}\left( {{x_i}\left( {t + \lambda \left( t \right)} \right)} \right) = {x_i}\left( {t + \lambda \left( t \right)} \right)$ , 由函数连续性可得,$Y\left( t \right) = {\max _{i \in n}}\left( {{Y'_i}\left( t \right)} \right)$ .因此

$\exists {i^*} \in n$ ,$\alpha > 0$ , 当$t \in \left[ {{t_2},{t_2} + \alpha } \right)$ , 使得$Y\left( t \right) =$ ${Y'_{{i^*}}}\left( t \right)$ . 即$Y\left( t \right) = {x_{{i^*}}}\left( {t + \lambda \left( t \right)} \right)$ ,$\lambda \left( t \right) \in \left[ { - \tau ,0} \right]$ .第3步: 证明

$\lambda \left( {{t_2}} \right) = 0$ .设

$t = {t_2}$ ,$\lambda \left( {{t_2}} \right) \ne 0$ ,$$Y\left( {{t_2}} \right) = {Y'_{{i^*}}}\left( {{t_2}} \right){\rm{ = }}{x_{{i^{\rm{*}}}}}\left( {{t_{\rm{2}}} + \lambda \left( t \right)} \right) > {x_{{i^{\rm{*}}}}}\left( {{t_{\rm{2}}}} \right)$$ 令

$\kappa \left( t \right) = {x_{{i^*}}}\left( {{t_2} + \lambda \left( {{t_2}} \right)} \right) - {x_{{i^*}}}\left( t \right),\kappa \left( {{t_2}} \right) > 0$ , 则有, 当$t \in \left[ {{t_2},{t_2} + \alpha '} \right),\alpha ' \in \left( {0,\alpha } \right)$ ,$${x_{{i^{\rm{*}}}}}\left( {{t_{\rm{2}}} + \lambda \left( {{t_{\rm{2}}}} \right)} \right) > {x_{{i^{\rm{*}}}}}\left( t \right)$$ (14) 由此, 当

$t \in \left[ {{t_2},{t_2} + \alpha '} \right),Y\left( t \right) \leq Y\left( {{t_2}} \right)$ . 这与第1步所得结论相矛盾, 上述假设不成立.故有, 当$t = {t_2}$ 时,$\lambda \left( {{t_2}} \right) = 0$ ,$Y\left( {{t_2}} \right) = {x_{{i^*}}}\left( {{t_2}} \right)$ .第4步: 证明

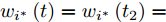

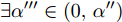

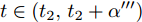

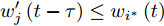

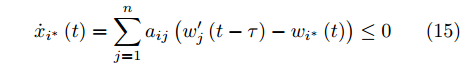

$Y\left( t \right)$ 对$t$ 为非增函数. 若对连续函数${x_{{i^*}}}\left( t \right),{x_{{i^*}}}\left( {{t_2}} \right) \ne \left( {\theta + 0.5} \right)\gamma \eta $ ,$\theta $ 为正整数,$\exists \alpha '' < \alpha '$ ,$\forall t \in \left( {{t_2},{t_2} + \alpha ''} \right)$ , 使得${x_{{i^*}}}\left( t \right) \ne \left( {\theta + 0.5} \right)\gamma \eta , $ $ {w'_{{i^*}}}\left( t \right) = {w'_{{i^*}}}\left( {{t_2}} \right) = Q\left( {{x_{{i^*}}}\left( {{t_2}} \right)} \right),$ ${w_{{i^*}}}\left( t \right) = {w_{{i^*}}}\left( {{t_2}} \right) = $ $\sum\nolimits_{j = 1}^n {\left( {{\rm{sign}}\left( {{a_{ij}}} \right)Q\left( {{x_{{i^*}}}\left( {{t_2}} \right)} \right)} \right)}. $ 由

$Y\left( {{t_2}} \right) = {x_{{i^*}}}\left( {{t_2}} \right)$ , 则${x_j}\left( {{t_2} - \tau } \right) \leq {x_{{i^*}}}\left( {{t_2}} \right)$ .又因

$Q\left( \cdot \right)$ 为非递减函数,$\exists \alpha ''' \in \left( {0,\alpha ''} \right)$ , 对$t \in \left( {{t_2},{t_2} + \alpha '''} \right)$ , 有${w'_j}\left( {t - \tau } \right) \leq {w_{{i^*}}}\left( t \right)$ , 则$${\dot x_{{i^*}}}\left( t \right) = \sum\limits_{j = 1}^n {{a_{ij}}\left( {{{w'_j}}\left( {t - \tau } \right) - {w_{{i^*}}}\left( t \right)} \right)} \leq 0$$ (15) 由式(14),

$\exists {t_5} \in \left( {{t_2},{t_2} + \alpha '''} \right)$ ,${x_{{i^*}}}\left( {{t_5}} \right) > {x_{{i^*}}}\left( {{t_2}} \right)$ , 则必须存在集合$\left( {{t_2},{t_5}} \right)$ 的子集${\Omega _1}$ ,${\Omega _1}$ 有正测度, 且${\dot x_{{i^*}}}\left( t \right) > 0$ . 这与式(15)矛盾.若

${x_{{i^*}}}\left( {{t_2}} \right) = \left( {\theta + 0.5} \right)\gamma \eta $ , 由上述分析, 对$\forall \alpha ''$ ,$\exists {t_6} \in \left( {{t_2},{t_2} + \alpha ''} \right]$ 使得${x_{{i^*}}}\left( {{t_6}} \right) > {x_{{i^*}}}\left( {{t_2}} \right)$ .令

${t_7} = \sup \left\{ {t \in \left[ {{t_2},{t_6}} \right]:{x_{{i^*}}}\left( t \right) = {x_{{i^*}}}\left( {{t_2}} \right)} \right\}$ , 由${x_{{i^*}}}\left( t \right)$ 函数连续性,${t_2} \leq {t_7} < {t_6}$ ,${x_{{i^*}}}\left( {{t_7}} \right) = $ ${x_{{i^*}}}\left( {{t_2}} \right)$ .因此, 对

$\forall t \in ( {{t_7},{t_6}} ]$ , 有${x_{{i^*}}}( t ) \geq Y( {{t_2}} )$ , 且${x_{{i^*}}} ( t ) \ne $ $ ( {\theta + 0.5} )\gamma \eta$ .与上同理, 由

${x_{{i^*}}}\left( {{t_7}} \right) > {x_{{i^*}}}\left( {{t_6}} \right)$ , 必须存在集合$\left( {{t_7},{t_6}} \right]$ 的一个子集${\Omega _2}$ ,${\Omega _2}$ 有正测度, 且${\dot x_{{i^*}}}\left( t \right) > 0$ . 这与式(15)矛盾.得证,

$Y\left( t \right)$ 对$t$ 为非增函数. 同理, 可证得$y\left( t \right)$ 对$t$ 为非减函数.可得, 对

$\forall i \in n$ , 有$y\left( 0 \right) \leq {x_i}\left( t \right) \leq Y\left( 0 \right)$ , 系统(11)的局部解$\left[ {{\boldsymbol{x}}\left( t \right),{\boldsymbol{w}}\left( t \right),} \right.\left. {{{\boldsymbol{w}}'}\left( t \right)} \right]$ 有界.由此, 系统(11)存在全局解. □

定理 2. 假设多智能体系统的固定有向通信拓扑

$G$ 为强连通符号图, 考虑存在通信时延和量化数据通信约束的多智能体系统(6), 在控制器(8)的作用下, 系统在任意初始条件下均能实现二分实用一致, 即$$\mathop {\lim }\limits_{t \to \infty } \left( {\left| {{x_i}\left( t \right)} \right| - \left| {{x_j}\left( t \right)} \right|} \right) \leq \varepsilon $$ (16) 其中,

$\forall \varepsilon > 0$ .证明. 构造如下Lyapunov函数:

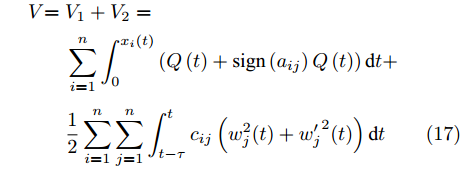

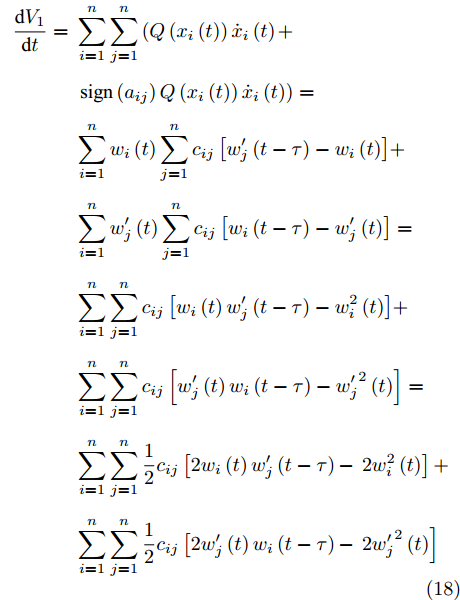

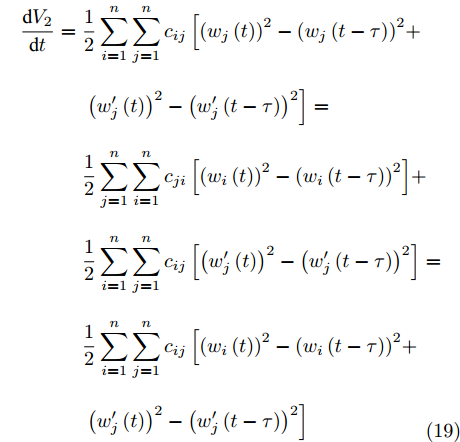

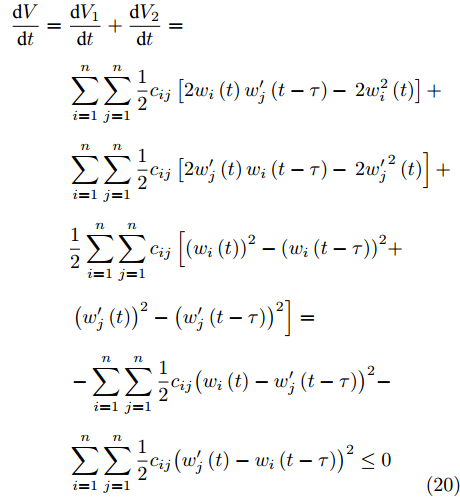

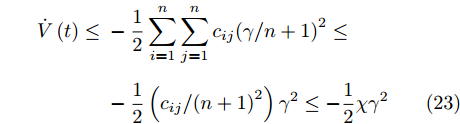

$$\begin{split} V {\rm{ = }}\;& {V_1} + {V_2} =\\ & \sum\limits_{i = 1}^n {\int_0^{{x_i}\left( t \right)} {\left( {Q\left( t \right) + {\rm{sign}}\left( {{a_{ij}}} \right)Q\left( t \right)} \right){\rm{d}}t} } + \\ & \frac{1}{2}\sum\limits_{i = 1}^n {\sum\limits_{j = 1}^n {\int_{t - \tau }^t {{c_{ij}}\left( {{w_j^2}{{\left( t \right)}} + {{w'_j}^2}{{\left( t \right)}}} \right){\rm{d}}t} } } \end{split} $$ (17) 求导有

$$\begin{split} \frac{{{\rm{d}}{V_1}}}{{{\rm{d}}t}} =\;& \sum\limits_{i = 1}^n {\sum\limits_{j = 1}^n {\left( {Q\left( {{x_i}\left( t \right)} \right){{\dot x}_i}\left( t \right)} \right.} } + \\ &\left. {{\rm{sign}}\left( {{a_{ij}}} \right)Q\left( {{x_i}\left( t \right)} \right){{\dot x}_i}\left( t \right)} \right) =\\ & \sum\limits_{i = 1}^n {{w_i}\left( t \right)\sum\limits_{j = 1}^n {{c_{ij}}\left[ {{{w'_j}}\left( {t - \tau } \right) - {w_i}\left( t \right)} \right]} } + \\ & \sum\limits_{i = 1}^n {{{w'_j}}\left( t \right)\sum\limits_{j = 1}^n {{c_{ij}}\left[ {{w_i}\left( {t - \tau } \right) - {{w'_j}}\left( t \right)} \right]} } =\\ & \sum\limits_{i = 1}^n {\sum\limits_{j = 1}^n {{c_{ij}}\left[ {{w_i}\left( t \right){{w'_j}}\left( {t - \tau } \right) - w^2_i\left( t \right)} \right]} } + \\ & \sum\limits_{i = 1}^n {\sum\limits_{j = 1}^n {{c_{ij}}\left[ {{{w'_j}}\left( t \right){w_i}\left( {t - \tau } \right) - {{w'_j}}^2\left( t \right)} \right]} }= \\ & \sum\limits_{i = 1}^n {\sum\limits_{j = 1}^n {\frac{1}{2}{c_{ij}}} } \left[ {2{w_i}\left( t \right){{w'_j}}\left( {t - \tau } \right) - } \right.\left. {2w^2_i\left( t \right)} \right] + \\ &\sum\limits_{i = 1}^n {\sum\limits_{j = 1}^n {\frac{1}{2}{c_{ij}}} } \left[ {2{{w'_j}}\left( t \right){w_i}\left( {t - \tau } \right) - } \right.\left. {2{{w'_j}}^2\left( t \right)} \right] \end{split} $$ (18) $$\begin{split} \frac{{{\rm{d}}{V_2}}}{{{\rm{d}}t}} =\;&\frac{1}{2}\sum\limits_{i = 1}^n {\sum\limits_{j = 1}^n {{c_{ij}}} } \left[ {{{\left( {{w_j}\left( t \right)} \right)}^2} - {{\left( {{w_j}\left( {t - \tau } \right)} \right)}^2} + } \right. \\ &\left. {{{\left( {{{w'_j}}\left( t \right)} \right)}^2} - {{\left( {{{w'_j}}\left( {t - \tau } \right)} \right)}^2}} \right] =\\ & \frac{1}{2}\sum\limits_{j = 1}^n {\sum\limits_{i = 1}^n {{c_{ji}}\left[ {{{\left( {{w_i}\left( t \right)} \right)}^2} - {{\left( {{w_i}\left( {t - \tau } \right)} \right)}^2}} \right]} } + \\ & \frac{1}{2}\sum\limits_{i = 1}^n {\sum\limits_{j = 1}^n {{c_{ij}}\left[ {{{\left( {{{w'_j}}\left( t \right)} \right)}^2} - {{\left( {{{w'_j}}\left( {t - \tau } \right)} \right)}^2}} \right]} } = \\ & \frac{1}{2}\sum\limits_{i = 1}^n {\sum\limits_{j = 1}^n {{c_{ij}}} } \left[ {{{\left( {{w_i}\left( t \right)} \right)}^2} - {{\left( {{w_i}\left( {t - \tau } \right)} \right)}^2} + } \right. \\ &\left. {{{\left( {{{w'_j}}\left( t \right)} \right)}^2} - {{\left( {{{w'_j}}\left( {t - \tau } \right)} \right)}^2}} \right] \\[-10pt] \end{split} $$ (19) 从而有,

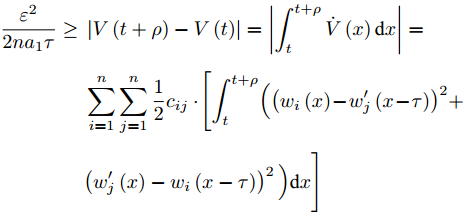

$$\begin{split} \frac{{{\rm{d}}V}}{{{\rm{d}}t}} = \;& \frac{{{\rm{d}}{V_1}}}{{{\rm{d}}t}} + \frac{{{\rm{d}}{V_2}}}{{{\rm{d}}t}} =\\ & \sum\limits_{i = 1}^n {\sum\limits_{j = 1}^n {\frac{1}{2}{c_{ij}}} } \left[ {2{w_i}\left( t \right){{w'_j}}\left( {t - \tau } \right) - } \right.\left. {2w^2_i\left( t \right)} \right] + \\ & \sum\limits_{i = 1}^n {\sum\limits_{j = 1}^n {\frac{1}{2}{c_{ij}}} } \left[ {2{{w'_j}}\left( t \right){w_i}\left( {t - \tau } \right) - } \right.\left. {2{{w'_j}}^2\left( t \right)} \right] + \\ &\frac{1}{2}\sum\limits_{i = 1}^n {\sum\limits_{j = 1}^n {{c_{ij}}} } \left[ {{{\left( {{w_i}\left( t \right)} \right)}^2} - {{\left( {{w_i}\left( {t - \tau } \right)} \right)}^2} + } \right. \\ & \left. {{{\left( {{{w'_j}}\left( t \right)} \right)}^2} - {{\left( {{{w'_j}}\left( {t - \tau } \right)} \right)}^2}} \right]= \\ & - \sum\limits_{i = 1}^n {\sum\limits_{j = 1}^n {\frac{1}{2}{c_{ij}}} } {\left( {{w_i}\left( t \right) - {{w'_j}}\left( {t - \tau } \right)} \right)^2} - \\ & \sum\limits_{i = 1}^n {\sum\limits_{j = 1}^n {\frac{1}{2}{c_{ij}}} } {\left( {{{w'_j}}\left( t \right) - {w_i}\left( {t - \tau } \right)} \right)^2} \leq 0 \\[-10pt] \end{split} $$ (20) 由式(20)得,

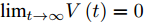

$V\left( t \right)$ 对$t$ 为非增函数,$V\left( t \right) > 0$ ,${\lim _{t \to \infty }}V\left( t \right) = 0$ 存在, 因此多智能体系统能实现稳定.令

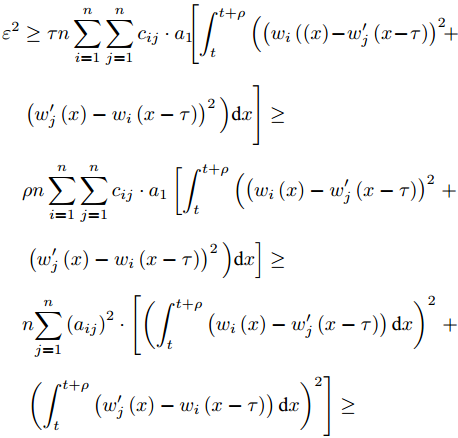

${a_1} = {\max _{1 \leq i \leq j \leq n}}{c_{ij}}$ ,$\forall \varepsilon > 0$ ,$\exists {T_0}$ 使得$\forall t \geq $ $ {T_0}$ ,$\rho \in \left[ {0,\tau } \right]$ $$\begin{split} \frac{{{\varepsilon ^2}}}{{2n{a_1}\tau }} \ge\;& \left| {V\left( {t + \rho } \right) - V\left( t \right)} \right| = \left| {\int_t^{t + \rho } {\dot V\left( x \right){\rm{d}}x} } \right|=\\ & \sum\limits_{i = 1}^n {\sum\limits_{j = 1}^n {\frac{1}{2}{c_{ij}}} } \cdot \Bigg[ {\int_t^{t + \rho } \Big({{{\left( {{w_i}\left( x \right) - {{w'_j}}\left( {x - \tau } \right)} \right)}^2}} } + \\ & {{\left( {{{w'_j}}\left( x \right) - {w_i}\left( {x - \tau } \right)} \right)^2 \Big)}{\rm{d}}x} \Bigg] \end{split}$$ 则

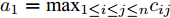

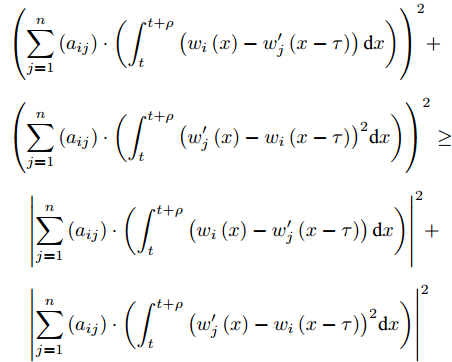

$$\begin{split} &{\varepsilon ^2} \ge \tau n\sum\limits_{i = 1}^n {\sum\limits_{j = 1}^n {{c_{ij}}} } \cdot {a_1}\Bigg[ {\int_t^{t + \rho }\Big( {{{\left( {{w_i}\left(( x \right) - {{w'_j}}\left( {x - \tau } \right)} \right)}^2}} } + \\ &\quad \left( {{{w'_j}}\left( x \right) - {w_i}\left( {x - \tau } \right)} \right)^2\Big){\rm{d}}x \Bigg] \ge \\ &\quad \rho n\sum\limits_{i = 1}^n {\sum\limits_{j = 1}^n {{c_{ij}}} } \cdot {a_1}\left[ {\int_t^{t + \rho } \Big({{{\left( {{w_i}\left( x \right) - {{w'_j}}\left( {x - \tau } \right)} \right)}^2}} } \right. + \\ &\quad \left. {\left( {{{w'_j}}\left( x \right) - {w_i}\left( {x - \tau } \right)} \right)^2\Big){\rm{d}}x} \right] \ge \\ &\quad n{\sum\limits_{j = 1}^n {\left( {{a_{ij}}} \right)} ^2} \cdot \left[ {{{\left( {\int_t^{t + \rho }{\left( {{w_i}\left( x \right) - {{w'_j}}\left( {x - \tau } \right)} \right){\rm{d}}x} } \right)}^2}} \right.+\\ &\quad \left. { {{\left( {\int_t^{t + \rho } {\left( {{{w'_j}}\left( x \right) - {w_i}\left( {x - \tau } \right)} \right){\rm{d}}x} } \right)}^2}} \right] \ge \end{split}$$ $$\begin{split} &{\left( {\sum\limits_{j = 1}^n {\left( {{a_{ij}}} \right)} \cdot \left( {\int_t^{t + \rho } {\left( {{w_i}\left( x \right) - {{w'_j}}\left( {x - \tau } \right)} \right){\rm{d}}x} } \right)} \right)^2} + \\ &{\left( {\sum\limits_{j = 1}^n {\left( {{a_{ij}}} \right)} \cdot \left( {\int_t^{t + \rho } {{{\left( {{{w'_j}}\left( x \right) - {w_i}\left( {x - \tau } \right)} \right)}^2}{\rm{d}}x} } \right)} \right)^2} \ge \\ &\quad {\left| {\sum\limits_{j = 1}^n {\left( {{a_{ij}}} \right)} \cdot \left( {\int_t^{t + \rho } {\left( {{w_i}\left( x \right) - {{w'_j}}\left( {x - \tau } \right)} \right){\rm{d}}x} } \right)} \right|^2} + \\ &\quad {\left| {\sum\limits_{j = 1}^n {\left( {{a_{ij}}} \right)} \cdot \left( {\int_t^{t + \rho } {{{\left( {{{w'_j}}\left( x \right) - {w_i}\left( {x - \tau } \right)} \right)}^2}{\rm{d}}x} } \right)} \right|^2} \end{split}$$ 从而有,

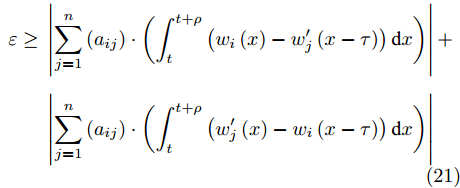

$$\begin{split} \varepsilon \ge \;& \left| {\sum\limits_{j = 1}^n {\left( {{a_{ij}}} \right)} \cdot \left( {\int_t^{t + \rho } {\left( {{w_i}\left( x \right) - {{w'_j}}\left( {x - \tau } \right)} \right){\rm{d}}x} } \right)} \right| + \\ &\left| {\sum\limits_{j = 1}^n {\left( {{a_{ij}}} \right)} \cdot \left( {\int_t^{t + \rho } {\left( {{{w'_j}}\left( x \right) - {w_i}\left( {x - \tau } \right)} \right){\rm{d}}x} } \right)} \right| \end{split}$$ (21) 再由式(21)可得,

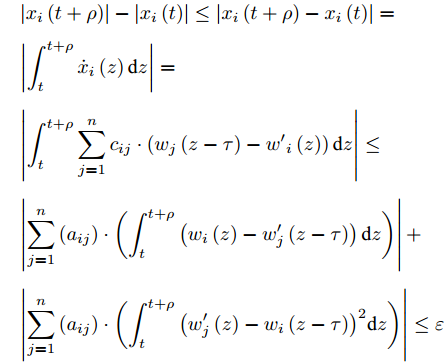

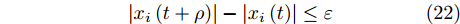

$$\begin{split} & \left| {{x_i}\left( {t + \rho } \right)} \right| - \left| {{x_i}\left( t \right)} \right| \le \left| {{x_i}\left( {t + \rho } \right) - {x_i}\left( t \right)} \right|=\\ & \left| {\int_t^{t + \rho } {{{\dot x}_i}\left( z \right){\rm{d}}z} } \right|=\\ & \left| {\int_t^{t + \rho } {\sum\limits_{j = 1}^n {{c_{ij}}} \cdot \left( {{w_j}\left( {z - \tau } \right) - {{w'}_i}\left( z \right)} \right){\rm{d}}z} } \right| \le \\ & \left| {\sum\limits_{j = 1}^n {\left( {{a_{ij}}} \right)} \cdot \left( {\int_t^{t + \rho } {\left( {{w_i}\left( z \right) - {{w'_j}}\left( {z - \tau } \right)} \right){\rm{d}}z} } \right)} \right| + \\ &\left| {\sum\limits_{j = 1}^n {\left( {{a_{ij}}} \right)} \cdot \left( {\int_t^{t + \rho } {{{\left( {{{w'_j}}\left( z \right) - {w_i}\left( {z - \tau } \right)} \right)}^2}{\rm{d}}z} } \right)} \right| \le \varepsilon \end{split}$$ 由此, 对

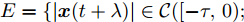

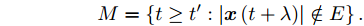

$\forall \varepsilon > 0$ ,$\exists {T_0}$ 使得$\forall t \geq {T_0}$ ,$\rho \in \left[ {0,\tau } \right]$ $$\left| {{x_i}\left( {t + \rho } \right)} \right| - \left| {{x_i}\left( t \right)} \right| \leq \varepsilon $$ (22) 进一步假设存在集合

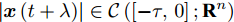

${ E} = \{ {| {{\boldsymbol{x}}( {t + \lambda } )} |} \in \mathcal{C}( [ { - \tau ,0}); $ $ {{\bf{R}}^n} ):( {{w_i}( t ) - }$ ${ {{{w'_j}}( {t - \tau } )} )^2} + {{( {{{w'_j}}( t ) - {w_i}( {t - \tau } )} )}^2} \leq $ ${{( {{\gamma /{n + 1}}} )}^2} \} $ , 对$\forall t' \ge 0$ ,$\exists t'' \geq t'$ 使得系统中所有智能体都能在$t''$ 收敛至集合${ E}$ . 令$${M} = \left\{ {t \geq t':\left| {{\boldsymbol{x}}\left( {t + \lambda } \right)} \right| \notin { E}} \right\}.$$ 对

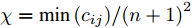

$\left| {{\boldsymbol{x}}\left( {t + \lambda } \right)} \right| \in \mathcal{C}\left( {\left[ { - \tau ,0} \right];{{\bf{R}}^n}} \right)$ ,$t \in {M}$ , 使$( {w_i}( t ) - $ $ {{w'_j}}( {t - \tau } ))^2 + {( {{{w'_j}}( t ) - {w_i}( {t - \tau } )} )^2} \geq$ ${\left( {{\gamma /{n + 1}}} \right)^2}$ , 有$$\begin{split} \dot V\left( t \right) \leq\;& - \frac{1}{2}\sum\limits_{i = 1}^n {\sum\limits_{j = 1}^n {{c_{ij}}{{\left( {{\gamma /{n + 1}}} \right)}^2}} } \leq \\ &-\frac{1}{2}\left( {{{{c_{ij}}}/{{{\left( {n + 1} \right)}^2}}}} \right){\gamma ^2} \leq - \frac{1}{2}\chi {\gamma ^2} \end{split} $$ (23) 其中,

$\chi = {{\min \left( {{c_{ij}}} \right)}/{{{\left( {n + 1} \right)}^2}}}$ .假设,

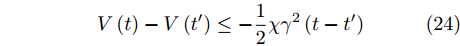

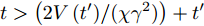

$t \in {M}$ , 对$\forall t' \geq 0$ ,$$V\left( t \right) - V\left( {t'} \right) \leq - \frac{1}{2}\chi {\gamma ^2}\left( {t - t'} \right)$$ (24) 对

$t > \left( {{{2V\left( {t'} \right)}/({\chi {\gamma ^2}}}}) \right) + t'$ , 由式(24)得$V\left( t \right) < 0$ , 这与$V\left( t \right)$ 的定义相矛盾. 由此, 对$\forall t' \geq 0$ ,$\exists t'' \geq t'$ 使所有智能体能在$t''$ 收敛至集合${E}$ .由

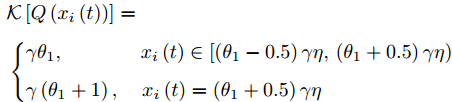

$Q\left( {{x_i}} \right) = \left\lfloor {\left( {{x_i}\left( t \right)/(\gamma \eta) } \right) + 0.5} \right\rfloor \gamma$ ,$$\begin{split} &{\cal K}\left[ {Q\left( {{x_i}\left( t \right)} \right)} \right] = \\ &\left\{ \begin{aligned} &{\gamma {\theta _1}} ,&{{x_i}\left( t \right) \in \left[ {\left( {{\theta _1} - 0.5} \right)\gamma \eta , \left( {\theta_1 + 0.5} \right)\gamma \eta } \right)} \\ &\gamma \left( {{\theta _1} + 1} \right), & {x_i}\left( t \right) = \left( {{\theta _1} + 0.5} \right)\gamma \eta \qquad\qquad\qquad\;\; \end{aligned} \right. \end{split}$$ 其中

${\theta _1} \in \bf{Z}$ .又由

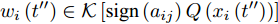

${w_i}\left( {t''} \right) \in \mathcal{K}\left[ {{\rm{sign}}\left( {{a_{ij}}} \right)Q\left( {{x_i}\left( {t''} \right)} \right)} \right]$ 、${w'_i}\left( {t''} \right) \in $ $ \mathcal{K}$ $\left[ {Q\left( {{x_i}\left( {t''} \right)} \right)} \right]$ , 易得$\exists {\theta _{ij}} \in \bf{Z}$ , 使得$$\left\{ \begin{aligned} &{x_i}\left( {t'} \right) \in \left[ {\left( {{\theta _{ij}} - 0.5} \right)\gamma \eta ,\left( {{\theta _{ij}} + 0.5} \right)\gamma \eta } \right] \\ &{x_j}\left( {t' - \tau } \right) \in \left[ {\left( {{\theta _{ij}} - 0.5} \right)\gamma \eta ,\left( {{\theta _{ij}} + 0.5} \right)\gamma \eta } \right] \end{aligned} \right.$$ (25) 由于所考虑的网络为强连通图, 对任意智能体

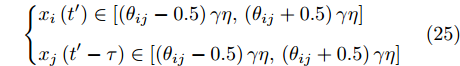

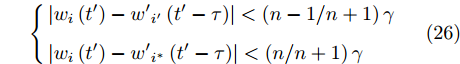

$i$ 到其邻居智能体$i'$ 、${i^{\rm{*}}}$ 有不超过$n - 1$ 条路径.结合上述分析, 可得$$\left\{ \begin{aligned} &\left| {{w_i}\left( {t'} \right) - {{w'}_{i'}}\left( {t' - \tau } \right)} \right| < \left( {{{n - 1}/{n + 1}}} \right)\gamma \\ &\left| {{w_i}\left( {t'} \right) - {{w'}_{{i^{\rm{*}}}}}\left( {t' - \tau } \right)} \right| < \left( {{n/{n + 1}}} \right)\gamma \end{aligned} \right.$$ (26) 因此, 对

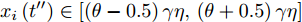

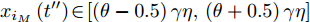

$\forall t' \geq 0$ ,$\exists t''$ , 使得任意一个智能体$i$ 的状态${x_i}\left( {t''} \right) \in \left[ {\left( {\theta - 0.5} \right)\gamma \eta ,\left( {\theta + 0.5} \right)\gamma \eta } \right]$ .由定理1的证明知,

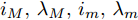

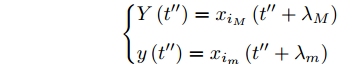

$Y\left( t \right)$ 为非增函数,$y\left( t \right)$ 为非减函数, 由式(12), 存在${i_M},{\lambda _M},{i_m},{\lambda _m}$ , 使得$$\left\{ \begin{aligned} & Y\left( {t''} \right) = {x_{{i_M}}}\left( {t'' + {\lambda _M}} \right) \\ & y\left( {t''} \right) = {x_{{i_m}}}\left( {t'' + {\lambda _m}} \right) \end{aligned} \right.$$ 设

${x_{{i_M}}}\left( {t''} \right) \in \left[ {\left( {\theta - 0.5} \right)\gamma \eta ,\left( {\theta + 0.5} \right)\gamma \eta } \right]$ ,${x_{{i_m}}}( t'' - $ $ \tau ) \in \left[ {\left( {\theta - 0.5} \right)\gamma \eta ,\left( {\theta + 0.5} \right)\gamma \eta } \right]$ .由式(22)得,

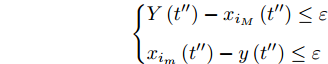

$$\left\{ \begin{aligned} & Y\left( {t''} \right) - {x_{{i_M}}}\left( {t''} \right) \leq \varepsilon \\ & {x_{{i_m}}}\left( {t''} \right) - y\left( {t''} \right) \leq \varepsilon \end{aligned} \right.$$ 从而得,

$${x_{{i_m}}}\left( {t'' - \tau } \right) - \varepsilon \leq y\left( {t''} \right) \leq {x_{{i_M}}}\left( {t''} \right) + \varepsilon $$ 再由

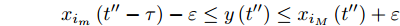

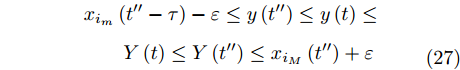

$Y\left( t \right)$ 和$y\left( t \right)$ 的单调性可得, 对$\forall t \geq t''$ ,$$\begin{split} &{x_{{i_m}}} \left( {t'' - \tau } \right) - \varepsilon \leq y\left( {t''} \right) \leq y\left( t \right) \leq \\ &\qquad Y \left( t \right) \leq Y\left( {t''} \right) \leq {x_{{i_M}}}\left( {t''} \right) + \varepsilon \\ \end{split} $$ (27) 由式(1), 对

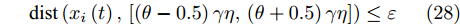

$\forall \varepsilon > 0$ , 有$${\rm {dist}}\left( {{x_i}\left( t \right),\left[ {\left( {\theta - 0.5} \right)\gamma \eta ,\left( {\theta + 0.5} \right)\gamma \eta } \right]} \right) \leq \varepsilon $$ (28) 由此可得, 对任意一个智能体

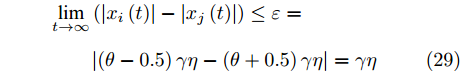

$i$ 的状态${x_i}\left( t \right)$ 都能收敛到集合$\left[ {\left( {\theta - 0.5} \right)\gamma \eta ,\left( {\theta + 0.5} \right)\gamma \eta } \right]$ 中. 由此可得,$$\begin{split} & \mathop {\lim }\limits_{t \to \infty } \left( {\left| {{x_i}\left( t \right)} \right| - \left| {{x_j}\left( t \right)} \right|} \right) \leq \varepsilon = \\ &\qquad \left| {\left( {\theta - 0.5} \right)\gamma \eta - \left( {\theta + 0.5} \right)\gamma \eta } \right| = \gamma \eta \end{split} $$ (29) □

3. 仿真实例

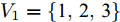

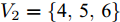

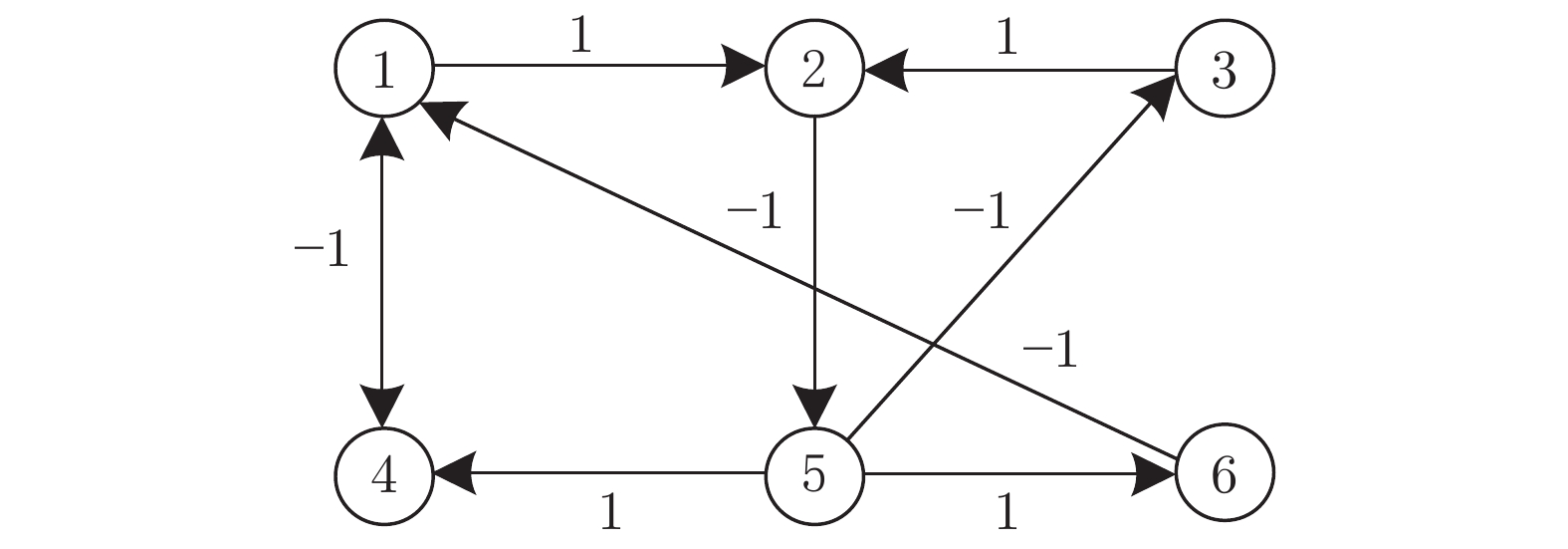

实例 1. 考虑由6个智能体构成的多智能体系统, 其有向通信拓扑结构如图1所示.该拓扑图满足结构平衡, 图中两个子群分别为

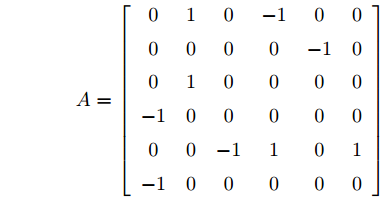

$ {V}_{1}=\left\{\rm{1}, \rm{2}, \rm{3}\right\}$ 和$ {V}_{2}=\left\{\rm{4}, \rm{5}, \rm{6}\right\}$ , 子群之间为竞争关系, 子群内部为合作关系.由通信拓扑图不难得到邻接矩阵

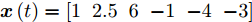

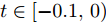

${A}$ , 对邻接矩阵${A}$ 规范变换, 取转换矩阵为${C} = {\rm{diag}}\{ 1,1,1, - 1, $ $ - 1, - 1 \}$ . 其中, 转换矩阵${C}$ 为正交矩阵,${C} = {\rm{diag}}\{ {\sigma _1}, $ $ {\sigma _2}, \cdots ,{\sigma _n} \},$ ${\sigma _i} = \{ - 1,1\} $ , 满足${{C}^{\rm{T}}}{C} = {C}{{C}^{\rm{T}}} = {I}$ ,${{C}^{ - 1}} = {C}$ ;${\rm{diag}}\left\{ {{\sigma _1},{\sigma _2}, \cdots ,{\sigma _n}} \right\}$ 表示对角矩阵, 其对角线元素为$\left\{ {{\sigma _1},{\sigma _2}, \cdots ,{\sigma _n}} \right\}$ .$${A} = \left[ {\begin{array}{*{20}{c}} 0&1&0&{ - 1}&0&0 \\ 0&0&0&0&{ - 1}&0 \\ 0&1&0&0&0&0 \\ { - 1}&0&0&0&0&0 \\ 0&0&{ - 1}&1&0&1 \\ { - 1}&0&0&0&0&0 \end{array}} \right]$$ 取

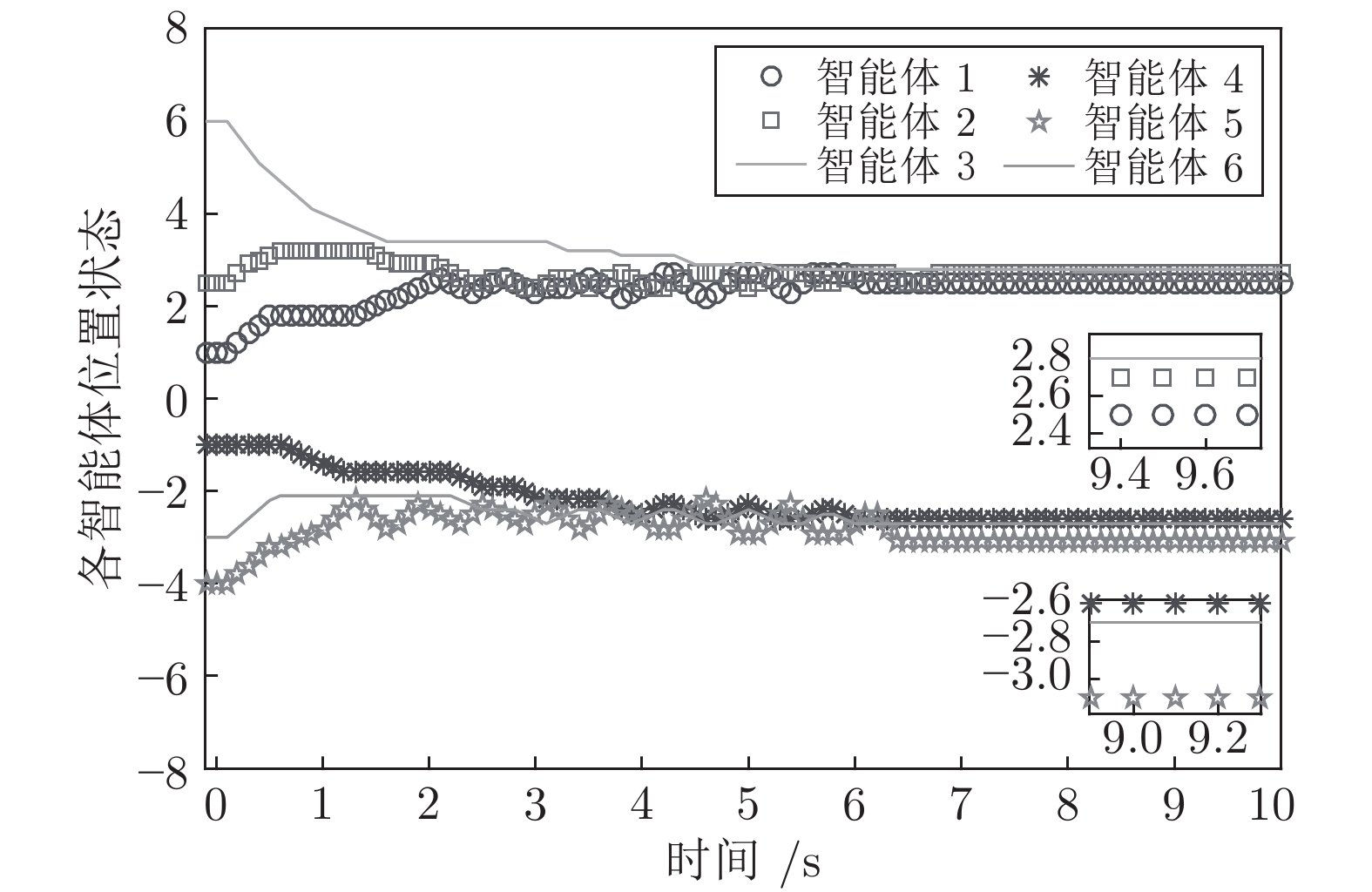

$\eta = 1$ ,$\gamma = {\rm{3}}$ ,$\tau = 0.1$ , 各智能体初始值${\boldsymbol{x}}\left( t \right) = [ 1\;\;{2.5}\;\;6\;\;{ - 1}\;\;{ - 4}\;\;{ - 3} ]$ ,$t \in \left[ { - 0.1,0} \right)$ . 由式(29)易得,$\mathop {\lim }_{t \to \infty } \left( {\left| {{x_i}\left( t \right)} \right| - \left| {{x_j}\left( t \right)} \right|} \right) = \gamma \eta = {\rm{3}}$ .图2为系统(6)在控制器(8)的作用下各智能体位置状态变化曲线, 由图可知各智能体位置状态绝对值的误差收敛于可控区间

$\left[ {0,{\rm{3}}} \right]$ , 存在竞争关系的两个子群最终能实现收敛, 即通信受限的多智能体系统能实现二分实用一致.图3、图4为在相同初始值状态下分别取

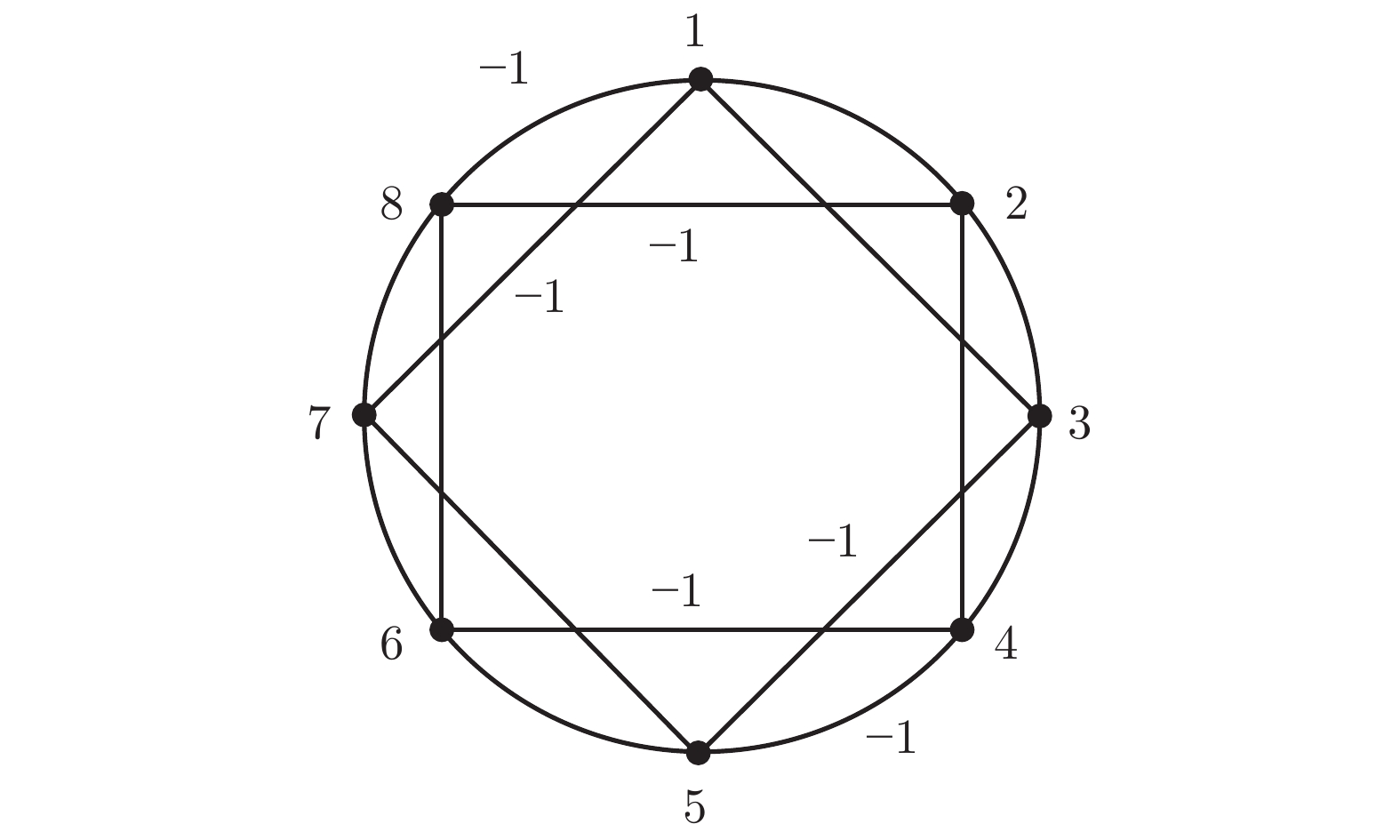

$\gamma = {\rm{1}}$ 、$\gamma = {\rm{0}}{\rm{.1}}$ 时的各智能体位置状态曲线, 表明通过改变$\gamma $ 值可以控制智能体位置状态绝对值误差的波动区间, 所设计的$\gamma $ 值越小, 智能体位置状态绝对值的误差波动区间也越小.实例 2. 为了进一步说明本文的研究意义, 选用8个智能体组成的最邻近耦合小世界网络系统, 智能体互连构成的有向拓扑图如图5所示, 此处无向图为一种特殊的有向图, 图中未标权重的连边权重设为1. 其中, 两个子群

${V_1} = \left\{ {1,2,3,4} \right\}$ 、${V_2} = \{ 5,6, $ $ 7,8 \}$ , 取$\eta = 1$ ,$\gamma = {\rm{0}}{\rm{.5}}$ ,$\tau = 0.1$ , 智能体初始状态分别为${\boldsymbol{x}}\left( t \right) = [ 1\;\;2\;\;4\;\;5\;\;{ - 1}\;\;{ - 2}\;\;{ - 4}\;\;{ - 5} ]$ ,$t \in \left[ { - 0.1,0} \right)$ .对邻接矩阵

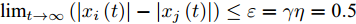

${A}$ 规范变换, 取转换矩阵为${C} = $ $ {\rm{diag}}\left\{ {1,1,1,1, - 1, - 1, - 1, - 1} \right\}$ . 由式(29)易得,$\mathop {\lim }_{t \to \infty } \left( {\left| {{x_i}\left( t \right)} \right| - \left| {{x_j}\left( t \right)} \right|} \right) \le \varepsilon = \gamma \eta = {\rm{0}}{\rm{.5}}$ .图6为系统(6)在控制器(8)的作用下各智能体位置状态变化曲线. 由图可知, 各智能体位置状态绝对值的误差收敛于可控区间

$\left[ {2.8,3.2} \right]$ , 存在竞争关系的两个子群最终能实现收敛, 运用本文所设计的控制策略, 最邻近耦合小世界网络系统能实现二分实用一致.4. 结论

本文研究了有向强连通符号图下通信受限的多智能体子群系统二分实用一致性问题, 设计了一种基于量化器的分布式控制协议, 使智能体位置状态绝对值的误差收敛于可控区间, 并得到了误差收敛上界值, 该上界值不依赖于任何全局信息和初始状态, 仅与量化器参数有关, 所设计量化水平参数越小, 收敛区间也越小. 后续工作将考虑推广至离散系统和高阶系统.

-

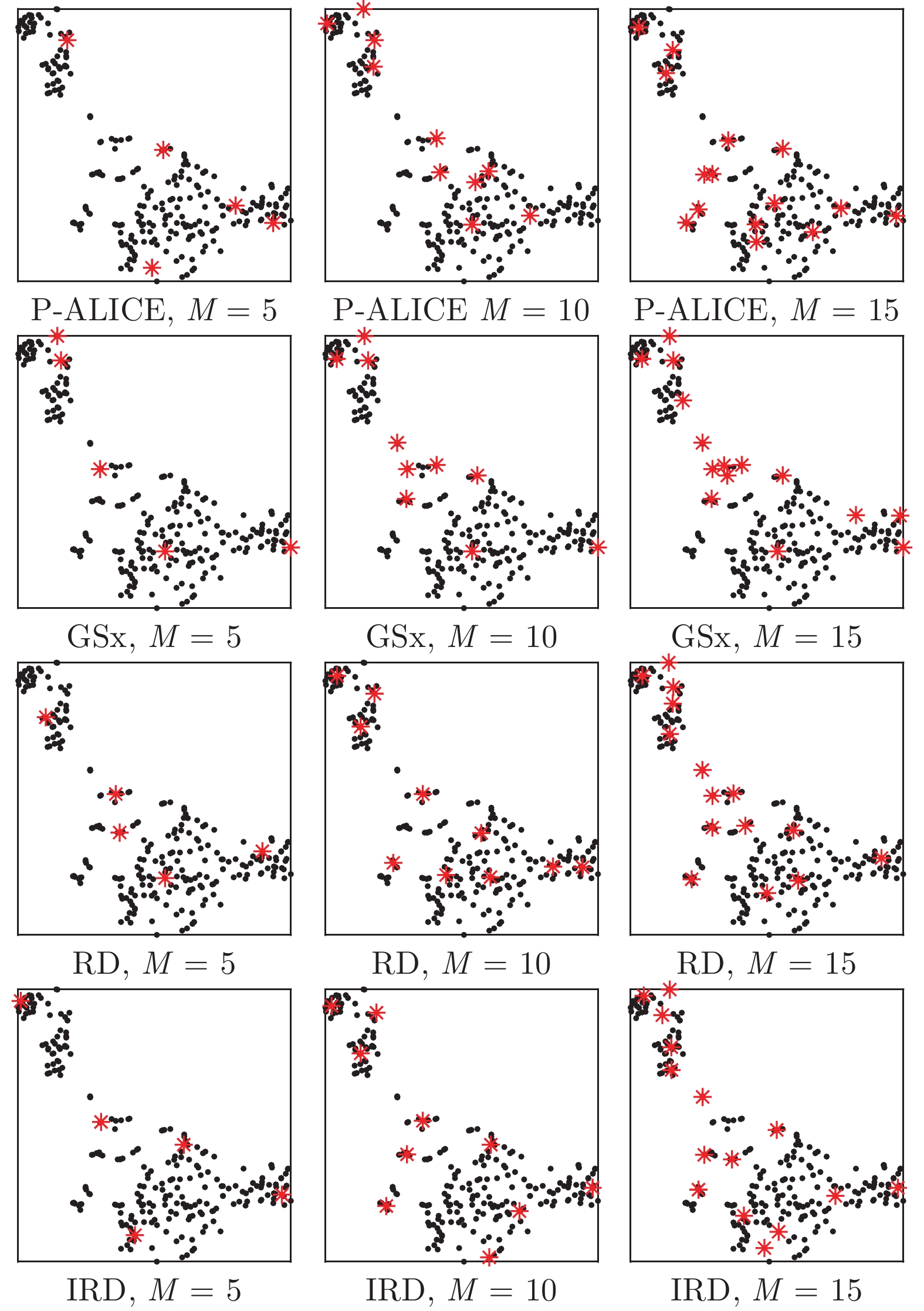

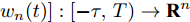

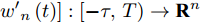

表 1 基于池的无监督ALR方法中考虑的标准

Table 1 Criteria considered in the three existing and the proposed unsupervised pool-based ALR approaches

方法 信息性 代表性 多样性 现有方法 P-ALICE $\checkmark$ $-$ $-$ GSx $-$ $-$ $\checkmark$ RD $-$ $\checkmark$ $\checkmark$ 本文方法 IRD $\checkmark$ $\checkmark$ $\checkmark$ 表 2 12个数据集的总结

Table 2 Summary of the 12 regression datasets

数据集 来源 样本个数 原始特征个数 数字型特征个数 类别型特征个数 总的特征个数 Concrete-CSa UCI 103 7 7 0 7 Yachtb UCI 308 6 6 0 6 autoMPGc UCI 392 7 6 1 9 NO2d StatLib 500 7 7 0 7 Housinge UCI 506 13 13 0 13 CPSf StatLib 534 10 7 3 19 EE-Coolingg UCI 768 7 7 0 7 VAM-Arousalh ICME 947 46 46 0 46 Concretei UCI 1030 8 8 0 8 Airfoilj UCI 1503 5 5 0 5 Wine-Redk UCI 1599 11 11 0 11 Wine-Whitel UCI 4898 11 11 0 11 a https://archive.ics.uci.edu/ml/datasets/Concrete+Slump+Test

b https://archive.ics.uci.edu/ml/datasets/Yacht+Hydrodynamics

c https://archive.ics.uci.edu/ml/datasets/auto+mpg

d http://lib.stat.cmu.edu/datasets/

e https://archive.ics.uci.edu/ml/machine-learning-databases/housing/

f http://lib.stat.cmu.edu/datasets/CPS_85_Wagesg http://archive.ics.uci.edu/ml/datasets/energy+efficiency

h https://dblp.uni-trier.de/db/conf/icmcs/icme2008.html

i https://archive.ics.uci.edu/ml/datasets/Concrete+Compressive+ Strength

j https://archive.ics.uci.edu/ml/datasets/Airfoil+Self-Noise

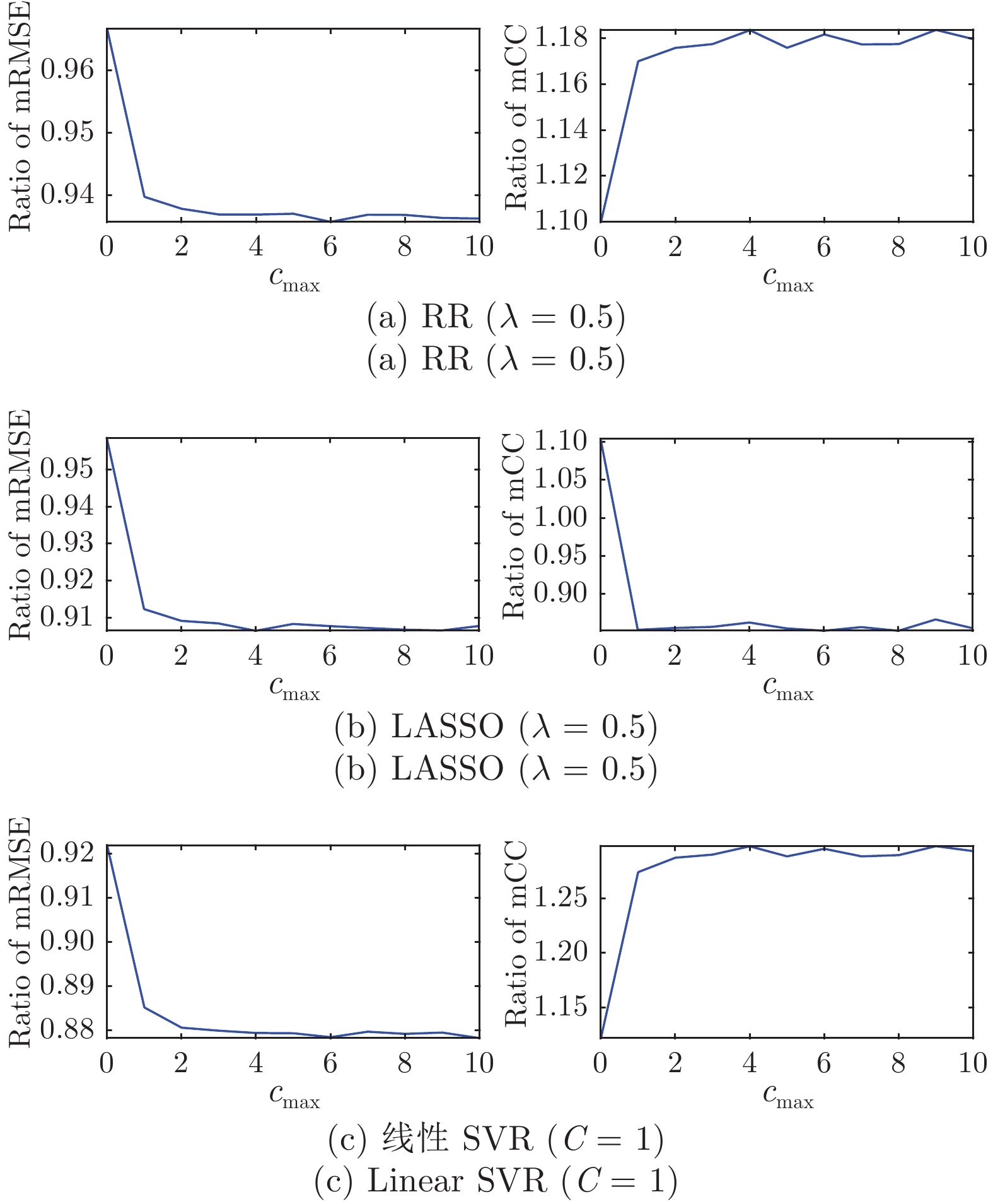

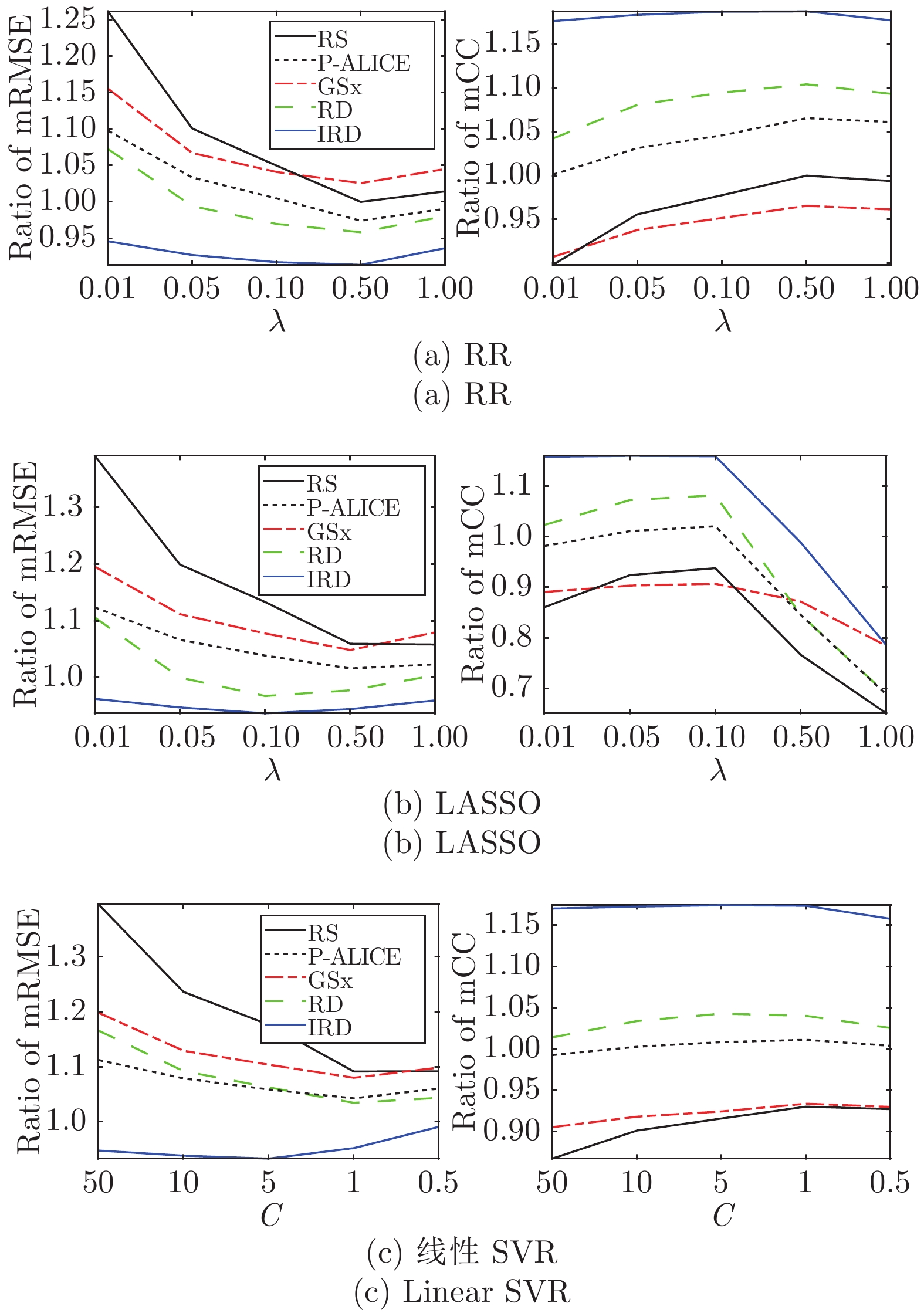

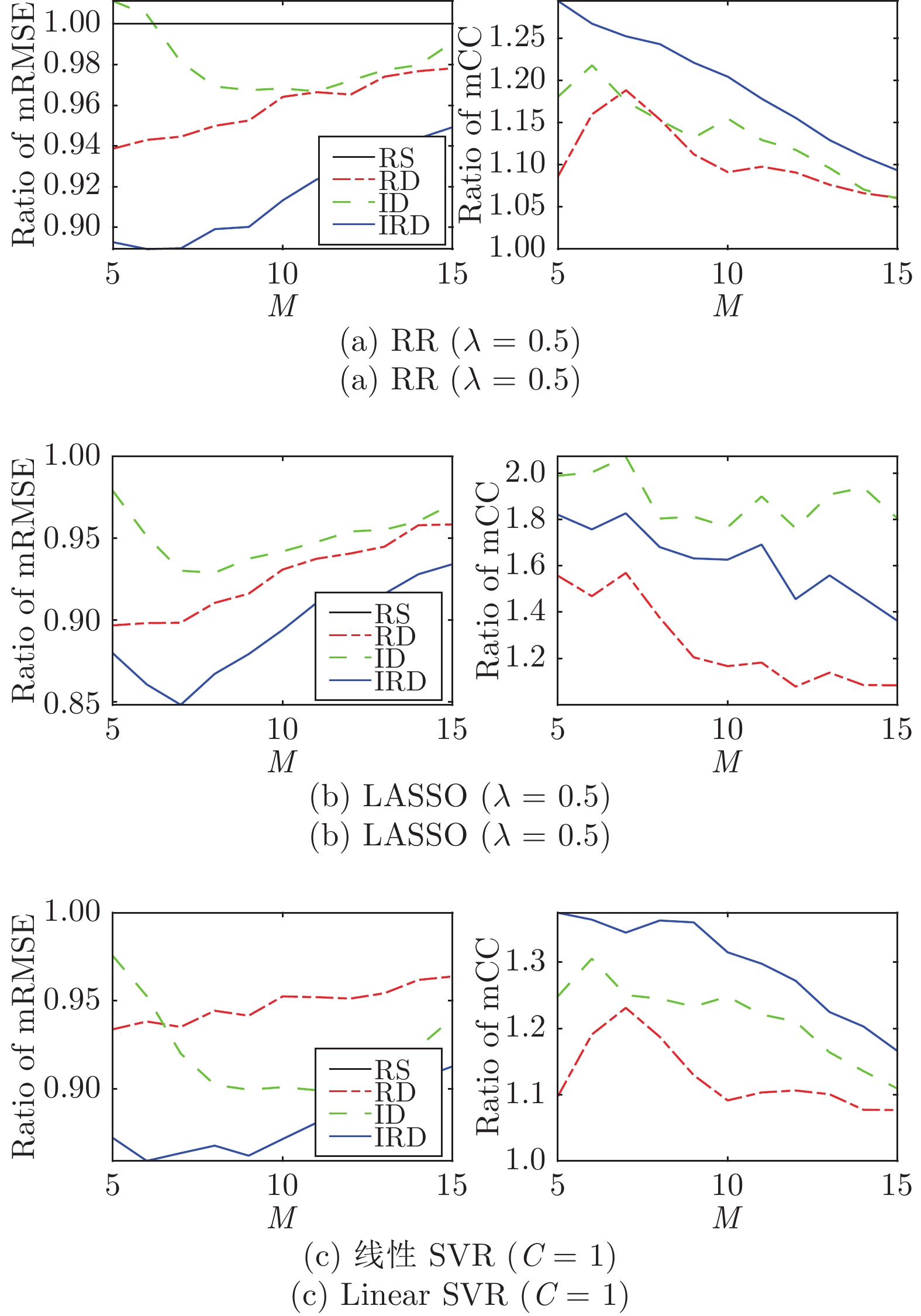

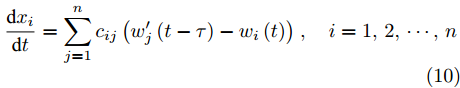

k https://archive.ics.uci.edu/ml/datasets/Wine+Quality表 3 AUC-mRMSE/sRMSE和AUC-mCC/sCC的提升百分比

Table 3 Percentage improvements of the AUCs of the mean/std RMSEs and the mean/std CCs

回归模型 性能指标 相对于 RS 的提升百分比 P-ALICE GSx RD IRD RR RMSE Mean 2.58 −2.57 4.15 8.63 std 2.75 3.98 36.60 34.84 CC Mean 6.54 −3.43 10.39 18.70 std 12.74 29.47 35.03 42.97 LASSO RMSE Mean 4.22 0.84 7.58 10.81 std 6.77 0.85 43.45 39.84 CC Mean 25.06 69.41 25.67 60.63 std 6.39 31.05 22.46 29.82 SVR RMSE Mean 4.21 0.66 5.23 12.12 std 6.62 −0.19 33.99 38.69 CC Mean 9.71 −1.65 12.46 28.99 std 11.10 25.78 34.97 43.25 表 4 非参数多重检验的

$p$ 值($\alpha=0.05$ ; 如果$p<\alpha/2$ 拒绝$H_0$ ).Table 4

$p$ -values of non-parametric multiple comparisons ($\alpha=0.05$ ; reject$H_0$ if$p<\alpha/2$ )回归模型 性能指标 IRD versus RS P-ALICE GSx RD RR RMSE 0.0000 0.0003 0.0000 0.0284 CC 0.0000 0.0000 0.0000 0.0005 LASSO RMSE 0.0000 0.0004 0.0000 0.0596 CC 0.0000 0.0000 0.0000 0.0000 SVR RMSE 0.0000 0.0000 0.0000 0.0018 CC 0.0000 0.0000 0.0000 0.0000 -

[1] Mehrabian A. Basic Dimensions for a General Psychological Theory: Implications for Personality, Social, Environmental, and Developmental Studies. Cambridge: Oelgeschlager, Gunn & Hain, 1980. [2] Grimm M, Kroschel K, Narayanan S. The Vera am Mittag German audio-visual emotional speech database. In: Proceedings of the 2008 IEEE International Conference on Multimedia and Expo (ICME). Hannover, Germany: IEEE, 2008. 865−868 [3] Bradley M M, Lang P J. The International Affective Digitized Sounds: Affective Ratings of Sounds and Instruction Manual, Technical Report No. B-3, University of Florida, USA, 2007. [4] Joo J I, Wu D R, Mendel J M, Bugacov A. Forecasting the post fracturing response of oil wells in a tight reservoir. In: Proceedings of the 2009 SPE Western Regional Meeting. San Jose, USA: SPE, 2009. [5] Settles B. Active Learning Literature Survey, Computer Sciences Technical Report 1648, University of Wisconsin, USA, 2009. [6] Burbidge R, Rowland J J, King R D. Active learning for regression based on query by committee. In: Proceedings of the 8th International Conference on Intelligent Data Engineering and Automated Learning. Birmingham, UK: Springer, 2007. 209−218 [7] Cai W B, Zhang M H, Zhang Y. Batch mode active learning for regression with expected model change. IEEE Transactions on Neural Networks and Learning Systems, 2017, 28(7): 1668-1681 doi: 10.1109/TNNLS.2016.2542184 [8] Cai W B, Zhang Y, Zhou J. Maximizing expected model change for active learning in regression. In: Proceedings of the 13th International Conference on Data Mining. Dallas, USA: IEEE, 2013. 51−60 [9] Cohn D A, Ghahramani Z, Jordan M I. Active learning with statistical models. Journal of Artificial Intelligence Research, 1996, 4(1): 129-145 [10] Freund Y, Seung H S, Shamir E, Tishby N. Selective sampling using the query by committee algorithm. Machine Learning, 1997, 28(2-3): 133-168 [11] MacKay D J C. Information-based objective functions for active data selection. Neural Computation, 1992, 4(4): 590-604 doi: 10.1162/neco.1992.4.4.590 [12] Sugiyama M. Active learning in approximately linear regression based on conditional expectation of generalization error. The Journal of Machine Learning Research, 2006, 7: 141-166 [13] Sugiyama M, Nakajima S. Pool-based active learning in approximate linear regression. Machine Learning, 2009, 75(3): 249-274 doi: 10.1007/s10994-009-5100-3 [14] Castro R, Willett R, Nowak R. Faster rates in regression via active learning. In: Proceedings of the 18th International Conference on Neural Information Processing Systems. Vancouver, Canada: MIT Press, 2005. 179−186 [15] Wu D R, Lawhern V J, Gordon S, Lance B J, Lin C T. Offline EEG-based driver drowsiness estimation using enhanced batch-mode active learning (EBMAL) for regression. In: Proceedings of the 2016 IEEE International Conference on Systems, Man, and Cybernetics (SMC). Budapest, Hungary: IEEE, 2016. 730−736 [16] Yu H, Kim S. Passive sampling for regression. In: Proceedings of the 2010 IEEE International Conference on Data Mining. Sydney, Australia: IEEE, 2010. 1151−1156 [17] Wu D R. Pool-based sequential active learning for regression. IEEE Transactions on Neural Networks and Learning Systems, 2019, 30(5): 1348-1359 doi: 10.1109/TNNLS.2018.2868649 [18] Wu D R, Lin C T, Huang J. Active learning for regression using greedy sampling. Information Sciences, 2019, 474: 90-105 doi: 10.1016/j.ins.2018.09.060 [19] Liu Z, Wu D R. Integrating informativeness, representativeness and diversity in pool-based sequential active learning for regression. In: Proceedings of the 2020 International Joint Conference on Neural Networks (IJCNN). Glasgow, UK: IEEE, 2020. 1−7 [20] Wu D R, Huang J. Affect estimation in 3D space using multi-task active learning for regression. IEEE Transactions on Affective Computing, 2019, DOI: 10.1109/TAFFC.2019.2916040 [21] Tong S, Koller D. Support vector machine active learning with applications to text classification. Journal of Machine Learning Research, 2001, 2: 45-66 [22] Lewis D D, Catlett J. Heterogeneous uncertainty sampling for supervised learning. In: Proceedings of the 8th International Conference on Machine Learning (ICML). New Brunswick, Canada: Elsevier, 1994. 148−156 [23] Lewis D D, Gale W A. A sequential algorithm for training text classifiers. In: Proceedings of the 17th Annual International ACM SIGIR Conference on Research and Development in Information Retrieval. Dublin, Ireland: Springer, 1994. 3−12 [24] Grimm M, Kroschel K. Emotion Estimation in Speech Using a 3D Emotion Space Concept. Austria: I-Tech, 2007. 281−300 [25] Grimm M, Kroschel K, Mower E, Narayanan S. Primitives-based evaluation and estimation of emotions in speech. Speech Communication, 2007, 49(10-11): 787-800 doi: 10.1016/j.specom.2007.01.010 [26] Wu D R, Parsons T D, Mower E, Narayanan S. Speech emotion estimation in 3D space. In: Proceedings of the 2010 IEEE International Conference on Multimedia and Expo (ICME). Singapore, Singapore: IEEE, 2010. 737−742 [27] Wu D R, Parsons T D, Narayanan S S. Acoustic feature analysis in speech emotion primitives estimation. In: Proceedings of the 2010 InterSpeech. Makuhari, Japan: ISCA, 2010. 785−788 [28] Dunn O J. Multiple comparisons among means. Journal of the American Statistical Association, 1961, 56(293): 52-64 doi: 10.1080/01621459.1961.10482090 [29] Benjamini Y, Hochberg Y. Controlling the false discovery rate: A practical and powerful approach to multiple testing. Journal of the Royal Statistical Society: Series B (Methodological), 1995, 57(1): 289-300 doi: 10.1111/j.2517-6161.1995.tb02031.x [30] van der Maaten L, Hinton G. Visualizing data using t-SNE. Journal of Machine Learning Research, 2008, 9: 2579-2605 期刊类型引用(7)

1. 贺忠海,朱温涵,陈旭旺,张晓芳. 基于自适应密度聚类的多准则主动学习方法. 仪器仪表学报. 2024(03): 179-187 .  百度学术

百度学术2. 李艳红,任霖,王素格,李德玉. 非平衡数据流在线主动学习方法. 自动化学报. 2024(07): 1389-1401 .  本站查看

本站查看3. 张书瑶,刘长良,王梓齐,刘帅,刘卫亮. 基于改进实例学习算法的风电机组齿轮箱状态监测. 动力工程学报. 2024(10): 1620-1631 .  百度学术

百度学术4. 尹春勇,陈双双. 结合微聚类和主动学习的流分类方法. 计算机工程与应用. 2023(20): 254-265 .  百度学术

百度学术5. 赵小康,赵鑫,朱启兵,黄敏. 一种基于无监督主动学习的苹果品质光谱无损检测模型构建方法. 光谱学与光谱分析. 2022(01): 282-291 .  百度学术

百度学术6. 刘瑾,赵晶,冯瑛敏,周超,姜美君,章辉. 基于梯度提升决策树的电力物联网用电负荷预测. 智慧电力. 2022(08): 46-53 .  百度学术

百度学术7. 石运来,崔运鹏,杜志钢. 基于BERT和深度主动学习的农业新闻文本分类方法. 农业图书情报学报. 2022(08): 19-29 .  百度学术

百度学术其他类型引用(11)

-

下载:

下载:

下载:

下载: