The State of the Art and Prospects of Lip Reading

-

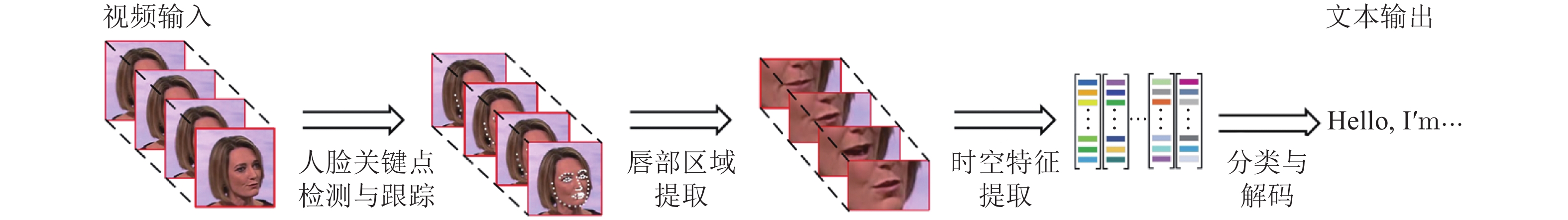

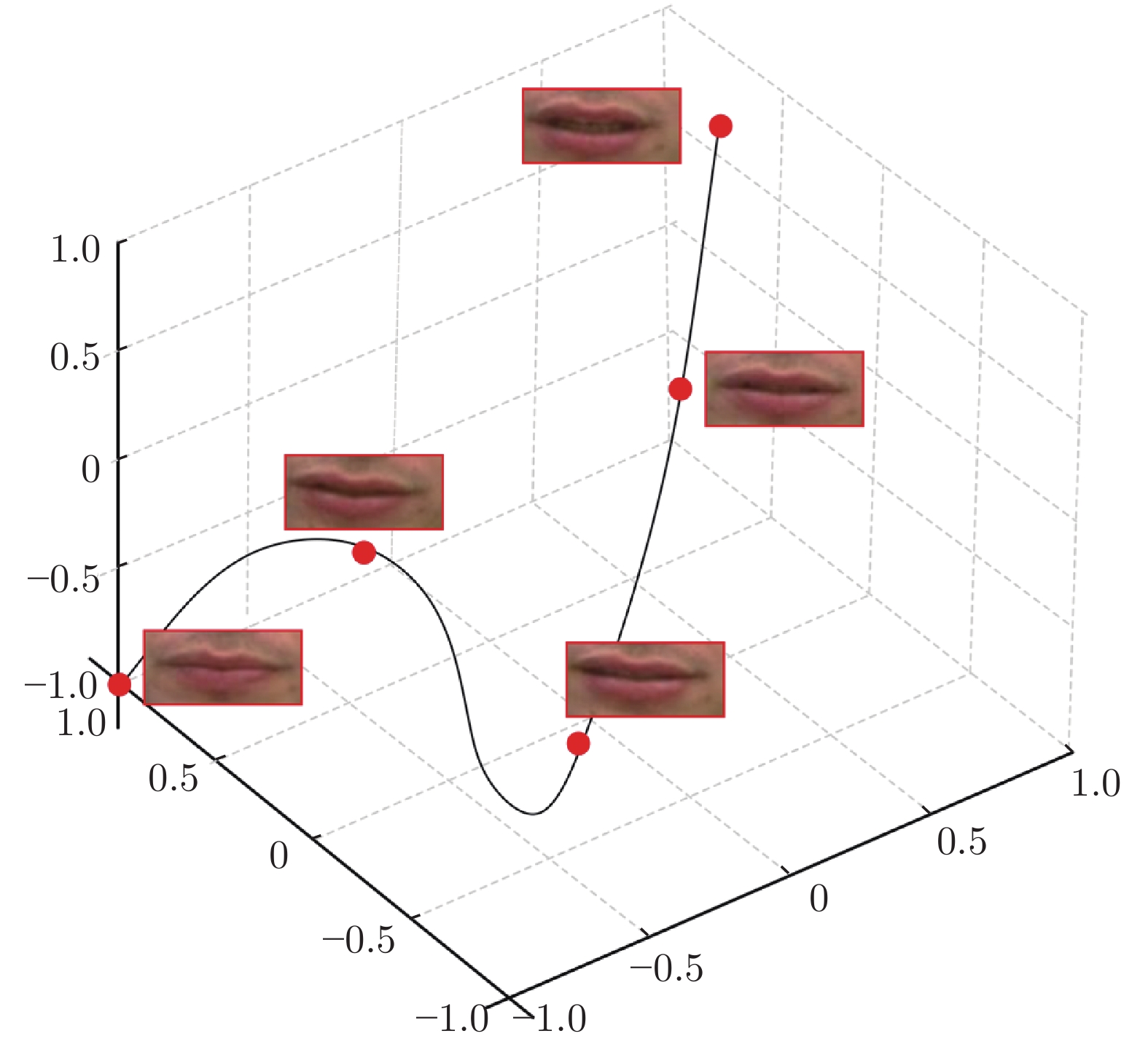

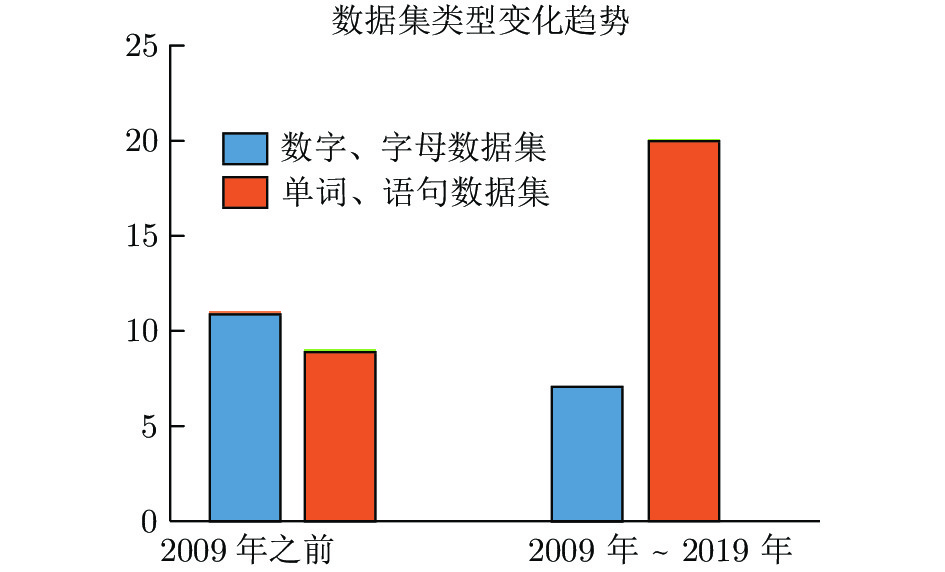

摘要: 唇读, 也称视觉语言识别, 旨在通过说话者嘴唇运动的视觉信息, 解码出其所说文本内容. 唇读是计算机视觉和模式识别领域的一个重要问题, 在公共安防、医疗、国防军事和影视娱乐等领域有着广泛的应用价值. 近年来, 深度学习技术极大地推动了唇读研究进展. 本文首先阐述了唇读研究的内容和意义, 并深入剖析了唇读研究面临的难点与挑战; 然后介绍了目前唇读研究的现状与发展水平, 对近期主流唇读方法进行了梳理、归类和评述, 包括传统方法和近期的基于深度学习的方法; 最后, 探讨唇读研究潜在的问题和可能的研究方向. 以期引起大家对唇读问题的关注与兴趣, 并推动与此相关问题的研究进展.Abstract: Lip reading, also known as visual speech recognition, aims to infer the content of a speech through the motion of the speaker´s mouth. Lip reading is an important issue in the field of computer vision and pattern recognition. It has a wide range of applications in the fields of public security, medical, defense military and professional filming. In recent years, deep learning technology has greatly promoted the progress of lip reading research. Starting from the definition of lip reading problem, this paper first expounds the content and significance of lip reading research, and deeply analyzes the difficulties and challenges of lip reading research. Then, the recent achievements of lip reading research are introduced, and the current mainstream lip reading methods are combed, categorized and reviewed as well, including traditional methods and recent methods based on deep learning. Finally, the potential problems and possible research directions of lip reading research are discussed to arouse the attention and interest of this research, and promote the research progress of related issues.

-

胰腺癌具有侵袭性强、转移早、恶性程度高、发展较快、预后较差等特征, 根据美国癌症协会报道, 其5年生存率低于10%, 死亡率非常高[1]. 胰腺癌已成为严重威胁人类健康的重要疾病, 并对临床医学构成巨大挑战. 胰腺的准确分割对胰腺癌检测识别等任务起着至关重要的作用. 胰腺处于人体后腹部的解剖位置, 其脏器影像常被遮挡不易识别, 且其形状和空间位置多变, 在腹部CT图像中所占比例较小, 其准确分割问题亟待解决.

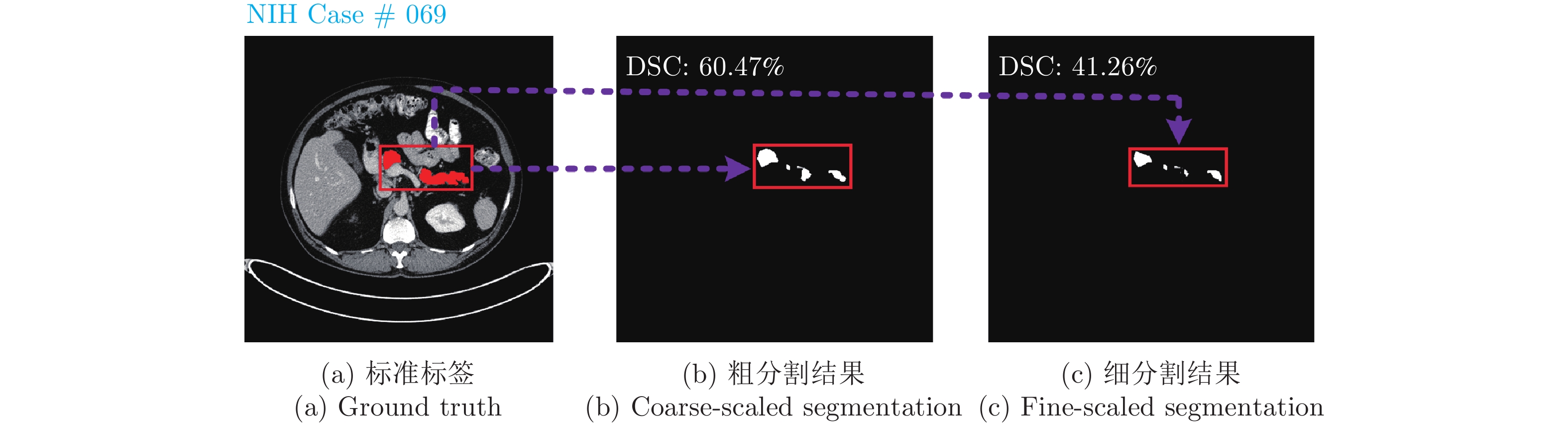

近年来, 由于深度神经网络的发展以及全卷积网络(Fully convolutional network, FCN)[2]的出现, 医学图像分割准确率取得了较大提升. 针对不同患者间胰腺形态差异性较大的解剖特征, 基于单阶段深度学习分割算法极易受其较大背景区域影响, 导致分割准确率下降. 现阶段常用解决方法是基于由粗到细的分割算法[3-6], 通过粗分割阶段输出掩码进行定位, 只保留胰腺及其周围部分区域作为细分割阶段网络输入, 减小背景区域对目标区域影响, 提高分割精度. 由粗到细的分割算法虽然减少了腹部影像背景区域对目标区域的干扰, 但是针对形态和空间位置多变的胰腺小器官增强前景区域同样重要. 同时粗分割阶段仅保留了定位框的位置信息, 却丢失了胰腺输出分割掩码的先验特征信息, 从而细分割阶段缺少粗分割阶段上下文信息, 有时会获得相比粗分割阶段更差的分割结果, 如图1所示. 此外, 由于在CT影像中胰腺与邻近器官密度较为接近、组织重叠部分界限分辨困难, 未合理利用相邻切片预测分割掩码上下文信息常导致误分割现象, 如图2所示. 结合相邻预测分割掩码容易看出, 中间切片存在误分割区域(红色部分), 合理利用预测分割掩码切片上下文信息能够校准误分割区域.

针对胰腺细分割阶段缺少粗分割阶段上下文信息的问题, 文献[3]提出了固定点的分割方法. 训练阶段使用胰腺标注数据训练粗分割网络, 然后使用粗分割网络的预测结果对原CT图像进行定位、剪裁, 只保留胰腺及其周围部分区域作为细分割网络输入, 通过反向传播, 优化细分割结果. 测试阶段, 固定细分割网络参数, 使用细分割网络预测掩码获得定位框并剪裁CT图像, 再次输入细分割网络, 迭代此过程获得优化的分割掩码, 以此缓解缺少阶段上下文信息的问题. 但是此分割方法本质上仅循环利用细分割定位框的位置信息, 缺少对分割掩码的循环利用, 缺少联合训练, 导致分割效果提升有限.

针对如何合理利用切片上下文信息解决胰腺与邻近器官密度较为接近、组织重叠部分界限分辨困难导致的误分割问题, 研究者提出了利用卷积长短期记忆网络(Convolutional long short-term memory, CLSTM)[7]和三维分割网络的方法[8-10]. 文献[8]将相邻CT切片输入到卷积门控循环单元(Convolutional gated recurrent units, CGRU)[11], 使当前隐藏层输出信息融合到下一时序隐藏层中, 通过前向传播, 当前隐藏层可获得融合之前切片上下文信息的输出表示. 文献[9]通过双向卷积循环神经网络, 同时利用当前层前后切片上下文信息进行胰腺分割. 但是目前大多数基于卷积循环神经网络的分割方法在利用切片上下文信息时, 只能按照输入切片顺序、逆序或结合顺序和逆序的方式. 这些方式严重依赖输入序列顺序, 并且相隔越远的切片在前向传播过程中能够共享的上下文信息越少. 与前述方法不同, 文献[10]将邻近切片输入三维卷积神经网络, 有效利用切片上下文信息, 改善了分割结果. 但基于三维卷积神经网络的分割方法, 受限于三维训练数据量过少和显存消耗过大, 大多数方法是基于局部三维块的分割. 虽然局部块中切片上下文信息得到了合理利用, 但是全局三维信息却缺乏连续性, 导致分割掩码存在过多噪点. 相比于三维图像分割方法需要解决三维图像数据量过少及参数量过多带来的显存问题, 基于卷积循环神经网络的二维图像分割方法存在的问题可以通过设计算法解决.

根据以上分析, 本文针对现有基于由粗到细的二维胰腺分割方法中存在的问题, 设计了循环显著性校准网络, 其结合更多的阶段上下文信息和切片上下文信息. 通过设计的卷积自注意力校准模块跨顺序利用切片上下文信息校准每一阶段的胰腺分割掩码, 循环使用前一阶段的胰腺分割掩码定位目标区域, 增强当前阶段的网络输入, 完成分割任务的联合优化. 提出的方法在公开数据集上进行了实验验证, 结果表明其有效地解决了上述胰腺分割任务中存在的问题. 本文的主要贡献如下:

1) 提出循环显著性校准网络, 循环利用前一阶段胰腺分割掩码显著性增强当前阶段胰腺区域特征, 通过联合训练获取更多的阶段上下文信息.

2) 设计了卷积自注意力模块, 使得胰腺所有输入切片预测分割掩码之间可以平行地进行跨顺序上下文信息互监督, 校准预测分割掩码.

3) 在NIH (National institutes of health)和MSD (Medical segmentation decathlon)胰腺数据集进行了大量实验, 实验结果验证了提出方法的有效性及先进性.

1. 相关工作

由粗到细的两阶段分割方法. 由粗到细的两阶段分割方法主要分为两类: 基于传统算法和基于深度学习的方法. 前者主要使用如超像素、图谱等传统算法获得粗分割结果, 再通过随机森林、Graph-cut等方法获得细分割结果[12-13]; 后者主要是基于深度学习的粗细分割方法[14], 基于数据驱动、自动化学习模型参数, 进行像素级别分类, 因其高精度和稳定性, 逐渐取代传统由粗到细的分割方法.

基于深度学习的粗细分割方法在粗分割训练阶段, 输入CT切片

$ {M^C} $ , 经过粗分割卷积神经网络$ {f(M^C,{\theta}^C)} $ , 预测结果记为$ {N^C} $ , 与真实标签(Ground truth)$ {Y} $ 进行损失计算, 通过反向传播优化粗分割结果. 在细分割训练阶段, 针对粗分割网络预测结果, 使用最小外接矩形算法获得胰腺位置坐标$ (p_x, p_y, w, h) $ , 对CT输入$ {M^C} $ 进行剪裁, 获得感兴趣区域$ {M^F} $ 作为细分割网络$ {f(M^F,{\theta}^F)} $ 的输入; 获得细分割网络输出预测结果$ {N^F} $ 并还原图像大小, 记为$ {Y^P} $ , 与真实标签(Ground truth)$ {Y} $ 进行损失计算, 通过反向传播优化细分割结果. 其中, 上标参数$ {C} $ 、$ {F} $ 分别表示粗分割阶段和细分割阶段;$ {(p_x, p_y)} $ ,$ {w} $ ,$ {h} $ 分别表示外接矩形框的左上角坐标, 宽和高;$ {{\theta}^C} $ ,$ {{\theta}^F} $ 分别表示粗、细分割网络参数.在测试阶段, 将CT切片输入训练好的粗细分割网络即可获得测试结果. 粗细分割方法中使用的网络主要是基于UNet[15], FCN[2]以及基于这两个基础结构的改进网络. 本文在粗细分割方法的基础上针对胰腺解剖性质提出基于循环显著性校准网络的胰腺分割方法, 均使用UNet[15]作为基础骨干网络.

胰腺分割方法. 传统医学图像分割常用方法有水平集[16]、混合概率图模型[17]和活动轮廓模型[18]等. 随着深度学习的发展, 基于卷积神经网络(Convolutional neural network, CNN)的分割方法由于其较高的精确度和较好的泛化性逐渐取代传统方法. 目前大多数基于深度学习的胰腺分割方法核心思想来源于FCN[2], FCN改进了卷积神经网络, 用卷积层替代最后的全连接层, 同时将浅层语义特征通过上采样与深层特征相融合, 补充分割目标的位置信息, 提高了分割准确率. 另一种常用于胰腺分割的方法采用“编码器−解码器”结构[15], 编码器负责逐层提取渐进的高级语义特征, 解码器通过反卷积或上采样的方法逐层恢复图像分辨率至原图大小, 同一层次编码器和解码器通过跳跃结构相连接.

由于胰腺形状、大小和位置多变, 上述单个阶段基于FCN或“编码器−解码器”结构的分割网络难以获得准确分割结果. 文献[3]首先提出了基于卷积神经网络由粗到细的两阶段分割算法, 使用粗分割掩码的位置信息剪裁细分割阶段网络的输入, 减小背景区域对胰腺区域分割的影响. 相比于文献[3], 文献[19]更进一步, 在使用由粗到细的两阶段分割算法的同时, 通过设计轻量化模块减少了粗细分割阶段模型的参数; 而文献[20]则直接以中心点为基础剪裁图像作为细分割网络的输入. 文献[21]利用肝脏、脾脏和肾脏的位置信息定位胰腺器官, 这不同于上述直接通过粗分割定位胰腺器官的方法. 文献[22]提出基于由下至上的方法, 首先使用超像素分块进行粗分割, 然后基于超像素块集成分割结果. 文献[23]使用最大池化方法融合CT切片三个轴信息, 获取候选区域, 在候选区域中从边缘至内部聚合分割结果. 文献[24]提出基于图谱的粗细分割方法, 改善了分割结果. 近来, 文献[25]提出了一种基于强化学习的两阶段分割算法. 首先, 使用DQN (Deep Q network)回归胰腺坐标位置, 剪裁只保留胰腺及其周围部分区域; 然后, 细分割阶段使用可行变卷积网络获得分割结果.

以上方法均取得了较为准确的分割结果, 但是将胰腺分割粗细两阶段分开训练, 细分割阶段缺少粗分割阶段上下文信息的问题, 依然难以用有效的方式处理. 文献[3]在测试阶段使用固定点算法, 固定细分割模型参数, 循环利用当前阶段预测分割掩码获取定位框位置信息作为下一阶段输入的先验, 以此达到使用之前阶段上下文信息的效果. 此方法本质上只迭代使用细分割定位框位置信息, 缺乏粗分割输出分割掩码的有效利用.

合理使用切片上下文信息解决胰腺误分割, 同样至关重要. 文献[8, 26]首先使用卷积神经网络提取特征, 然后利用卷积长短期记忆网络[7]提取切片上下文信息分割胰腺, 但切片上下文信息不能够跨顺序、平行化共享, 并且前向传播存在信息丢失的问题. 文献[27]使用对抗学习思想, 分别使用两个判别器约束主分割网络, 捕获空间语义信息和切片上下文信息, 但对抗网络的不稳定性使得训练和测试结果波动性较大. 文献[28]使用相邻切片局部块作为输入, 编码器部分使用三维卷积以递进的方式逐层融合切片上下文信息, 解码器部分使用二维转置卷积输出中间切片分割掩码. 由于CT切片之间层厚和层间距的差异性, 且局部块输入未使用任何插值方法, 捕捉到的三维切片上下文信息具有不一致性和局部性. 文献[10, 29-30]使用三维分割方法获取切片上下文信息, 受限于显存和三维数据量, 全局三维信息缺乏连续性.

针对现有胰腺分割方法中缺少阶段上下文信息的问题, 以及在使用循环卷积神经网络分割胰腺的过程中, 利用相邻切片上下文信息存在顺序依赖并且随着相邻切片间隔距离的增加导致全局信息正相关减少的问题, 本文提出了一种循环利用阶段上下文信息和切片上下文信息的二维胰腺图像分割网络. 首先, 将相邻CT切片作为粗分割网络输入, 获得粗分割掩码; 然后, 通过最小矩形框算法对获得的粗分割掩码进行胰腺区域坐标定位; 接着, 使用粗分割掩码作为权重增强细分割阶段输入切片的胰腺区域特征, 获取细分割掩码, 细分割掩码以同样的方式增强下一阶段分割网络的输入; 最后, 循环迭代上述过程直到达到指定停止条件. 通过此方法, 有效降低了分割平均误差, 提高了分割方法的稳定性.

2. 本文方法

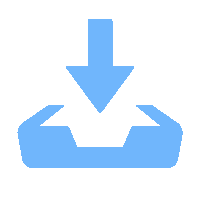

针对当前由粗到细的两阶段胰腺分割算法利用阶段上下文信息和切片上下文信息存在的问题, 本文提出了循环显著性校准网络. 其采用UNet和卷积自注意力校准模块作为骨干网络, 接受相邻横断位胰腺CT切片输入; 当前阶段卷积自注意力校准模块利用切片上下文信息校准UNet输出掩码的同时, 利用自身输出掩码显著性增强下一阶段UNet网络输入; 循环UNet和卷积自注意力校准模块, 联合阶段上下文信息和切片上下文信息提升分割性能, 整体网络架构如图3所示.

2.1 循环显著性校准网络

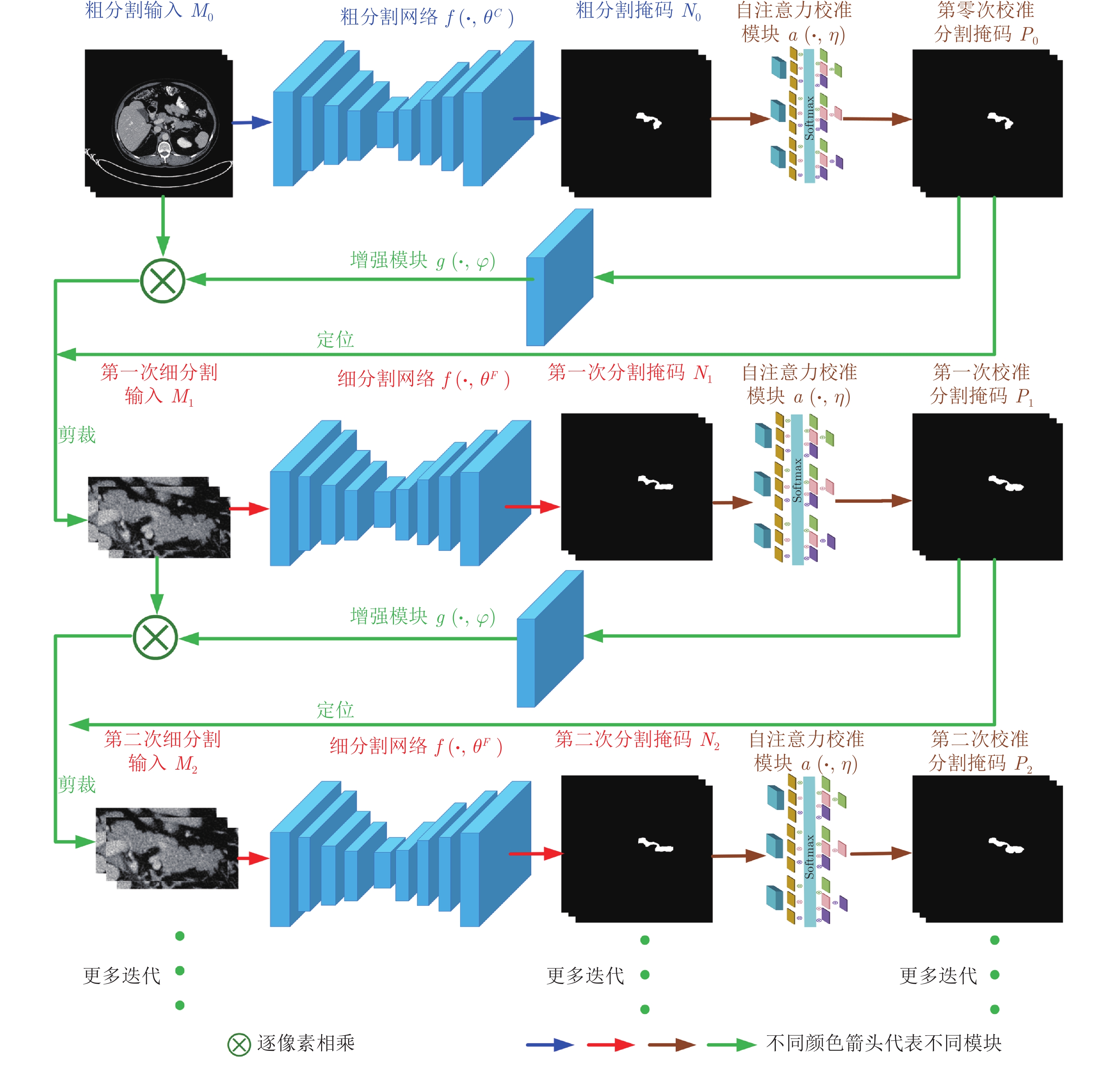

本文聚焦于联合阶段间和胰腺序列图像切片上下文信息提升分割准确率. 为了合理利用当前阶段胰腺分割掩码的位置和形状等先验信息, 显著增强下一阶段分割网络的输入; 同时, 通过平行、跨顺序直接利用相邻切片分割掩码改善自身明显误分割现象, 提出循环显著性校准网络, 其分割迭代过程如图4所示. 循环显著性指每个阶段的校准分割掩码

$ P $ 经增强模块$ {g(P,\varphi )} $ 特征提取后获得像素矩阵, 此像素矩阵为胰腺前景相关矩阵. 使用此像素矩阵和下一阶段输入图像$ M $ 进行像素对像素相乘, 显著增强胰腺区域, 抑制背景区域.选择UNet基础分割网络模型

$ {f(\cdot ,\theta )} $ 作为骨干网络, 该模型的输入为胰腺的相邻CT切片, 记为$ X $ , 通过基础分割网络模型推断出输出掩码$ N $ . 由于胰腺与邻近器官密度较为接近、组织重叠部分界限分辨困难, 容易导致基础分割网络出现误分割现象. 因此, 本文基于切片上下文信息设计了卷积自注意力校准模块$ {a(\cdot,\eta )} $ , 校准基础分割网络输出的分割掩码$ N $ , 卷积自注意力校准模块的输出表示为$ P $ ; 为了能够获取更加准确的胰腺位置, 设置了固定分割掩码像素阈值0.5, 来二值化$ P $ , 其输出表示如式(1)所示.$$ \begin{equation} Z = \left\{\begin{aligned}&1, & P_{i j} \geq 0.5 \\&0, & P_{i j}<0.5 \end{aligned}\right. \end{equation} $$ (1) 其中,

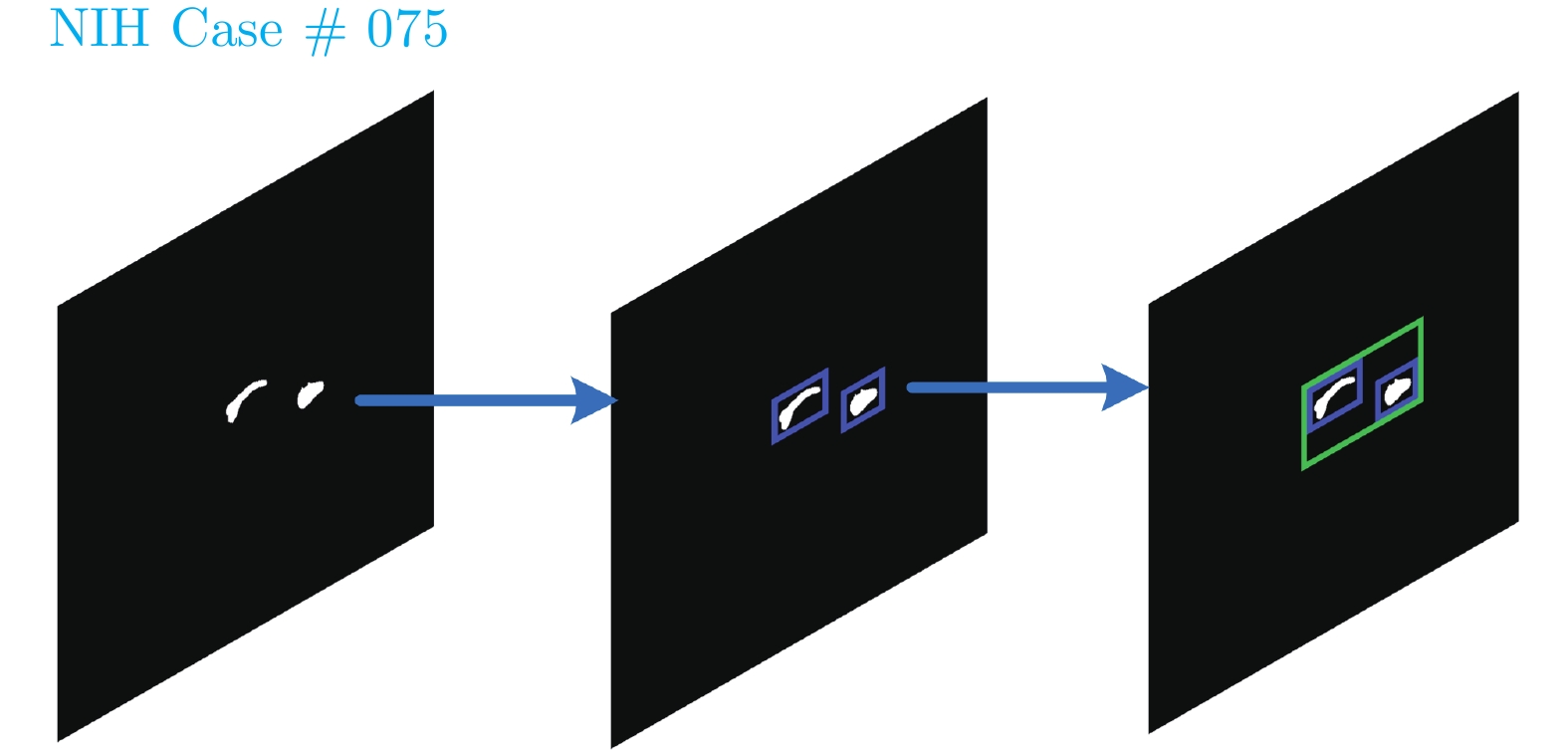

$ {i} $ 、$ {j} $ 为分割掩码中像素值位置坐标.通过对式(1)的输出

$ {Z} $ 应用最小矩形框算法获得包围胰腺分割掩码框的位置坐标$ { (p_x, p_y, w, h)} $ , 位置坐标获得过程如图5所示. 其中, 蓝色框为单个连通区域分割结果的定位, 绿色框为整合多段分割结果的定位.为改善下一阶段分割过程中缺少当前阶段上下文信息的问题, 使用校准模块输出分割掩码

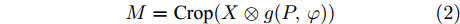

$ {P} $ 作为潜在变量输入到显著性增强模块$ {g(P,\varphi )} $ , 提取特征概率作为下一阶段分割网络输入$ X $ 的先验空间权重, 并结合上述定位坐标$ { (p_x, p_y, w, h)} $ 增强并缩小下一阶段网络的输入, 显著减小背景区域对分割的影响. 对于在整个腹部图像中区域占比较小, 形状和位置多变的胰腺器官来说, 此过程极为重要, 其显著增强了胰腺区域, 弱化了不相关区域. 过程如式(2)所示.$$ \begin{equation} M = {\rm{Crop}}(X \otimes g(P, \varphi)) \end{equation} $$ (2) 其中,

$ M $ 为增强并缩小的下一阶段输入;$ \otimes $ 表示对应像素点相乘; Crop表示利用定位坐标$(p_x, p_y, w, h)$ 对各阶段输入做剪裁.$ \theta $ ,$ \eta $ ,$ \varphi $ 为相应模块共享网络参数.图4右图是图4左图的展开形式, 其中

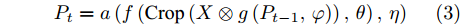

$ {M_0} $ 作为胰腺初始输入图像和$ {X} $ 相同, 其大小远大于其他阶段的网络输入$ {M_t \;(t>0)} $ , 所以第一次粗分割阶段和其余分割阶段网络参数$ \theta $ 应加以区分, 分别使用$ {\theta}^C $ 和$ {\theta}^F $ 表示. 在循环迭代过程中, 由于各分割阶段输入$ {X} $ 不变, 并且输入$ {X} $ 需与显著性增强模块$ {g(P,\varphi )} $ 输出作逐像素相乘, 为了保持$ {g(P,\varphi )} $ 是一个输入输出同大小的模块, 设置卷积核大小为$ 3\;\times 3 $ , 步长为1, 填充为1.整个循环迭代分割过程如式(3)所示.

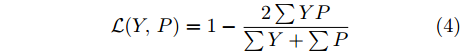

$$ \begin{equation} P_{t} = a\left(f\left({\rm{Crop}}\left(X \otimes g\left(P_{t-1}, \varphi\right)\right), \theta\right), \eta\right) \end{equation} $$ (3) 根据以上分析可以看出, 整个网络运算过程是可微的, 结合所有阶段损失函数进行联合训练. 本文采用DSC (Dice-S

$ {\phi} $ rensen coefficient)作为损失函数, 如式(4)所示.$$ \begin{equation} {\cal{L}}(Y, P) = 1-\frac{2 \sum Y P}{\sum Y+\sum P} \end{equation} $$ (4) 其中,

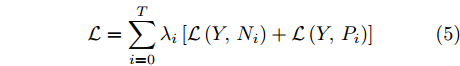

$ Y $ 是真实标签,$ P $ 为各阶段预测分割掩码.结合各阶段分割网络和卷积自注意力校准模块, DSC联合损失函数如式(5)所示.

$$ \begin{equation} {\cal{L}} = \sum\limits_{i = 0}^{T} {{{\lambda}}}_{i}\left[{\cal{L}}\left(Y, N_{i}\right)+{\cal{L}}\left(Y, P_{i}\right)\right] \end{equation} $$ (5) 其中,

$ T $ 为循环分割次数停止阈值. 由于粗分割阶段和其余分割阶段胰腺切片输入大小不一致, 粗分割阶段主要用于获取胰腺的初步定位和粗分割掩码, 故设置较小的权重参数, 且满足$3\lambda_{0} = \lambda_{1} = \lambda_{2} = \cdots = \lambda_{T} = 3/(3T+1)$ .2.2 卷积自注意力校准模块

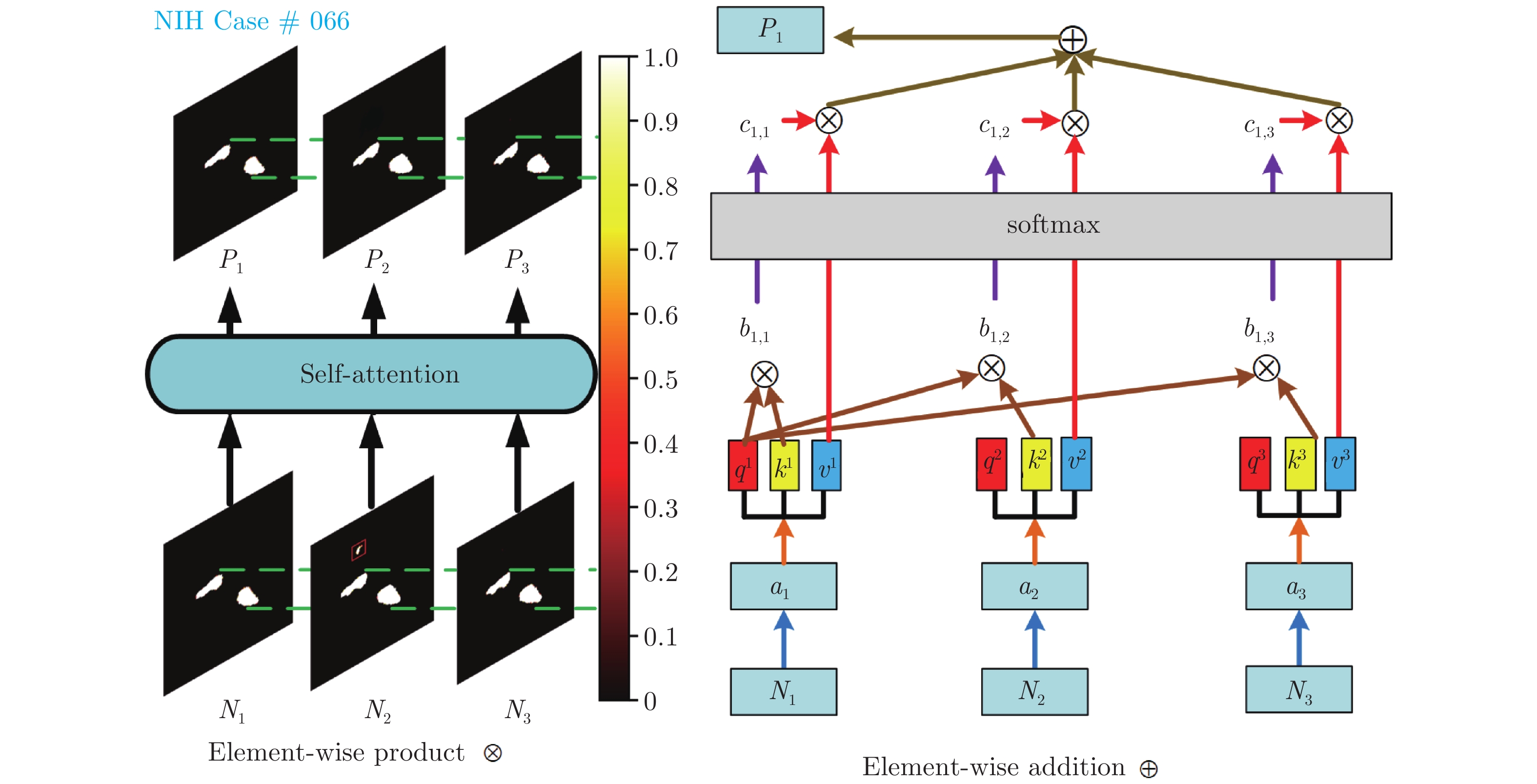

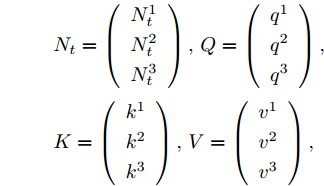

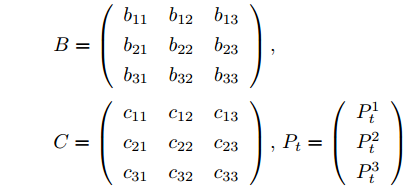

针对胰腺与邻近器官密度较为接近、组织重叠部分界限分辨困难而导致的误分割问题, 本文提出在循环显著性校准网络每个分割阶段嵌入卷积自注意力校准模块, 其合理利用切片上下文信息校准胰腺相邻切片误分割区域.

本文设计的卷积自注意力校准模块基于自注意力机制[31]. 自注意力机制在处理序列信息输入时, 能够跨顺序、平行化地与序列输入中其他时间点输入进行直接交互. 但由于自注意力机制使用的线性变换忽略了图像像素之间的空间关系, 本文提出卷积自注意力校准模块, 使用卷积操作替换线性变换. 卷积自注意力校准模块如图6左图所示(图中以批次大小3为例), 图6右图为图6左图中获得单张校准分割掩码的计算过程, 其余两张切片校准分割掩码获得过程计算方式类似, 图中

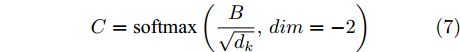

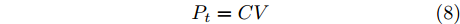

$ P $ 表示预测分割掩码的热力图显示, 中间是颜色条,其取值范围在$ [0,1.0] $ 之间. 为了描述和解释的方便, 将卷积自注意力校准模块中所有标量以向量或者矩阵的形式表示如下.$$ \begin{split} &N_{t} = \left(\begin{array}{l} N_{t}^{1} \\N_{t}^{2} \\N_{t}^{3} \end{array}\right), Q = \left(\begin{array}{l} q^{1} \\q^{2} \\q^{3} \end{array}\right), \\ &K = \left(\begin{array}{l} k^{1} \\k^{2} \\k^{3} \end{array}\right), V = \left(\begin{array}{l} v^{1} \\v^{2} \\v^{3} \end{array}\right), \end{split}\qquad\qquad $$ $$ \begin{split} &B = \left(\begin{array}{lll} b_{11} & b_{12} & b_{13} \\b_{21} & b_{22} & b_{23} \\b_{31} & b_{32} & b_{33} \end{array}\right),\\ &C = \left(\begin{array}{lll} c_{11} & c_{12} & c_{13} \\c_{21} & c_{22} & c_{23} \\c_{31} & c_{32} & c_{33} \end{array}\right), P_{t} = \left(\begin{array}{l} P_{t}^{1} \\P_{t}^{2} \\P_{t}^{3} \end{array}\right) \end{split} $$ 其中,

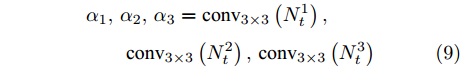

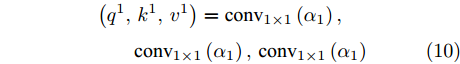

$ N_t $ 为分割网络$ f( M_t,\theta ) $ 输出的相邻分割掩码;$ Q $ 为查询特征向量;$ K $ ,$ V $ 为键值对特征向量;$ B $ 为相似度度量矩阵;$ C $ 为像素权重矩阵;$ P_t $ 为校准模块输出掩码.为了提取多样性的特征表示, 首先将相邻分割掩码

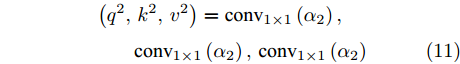

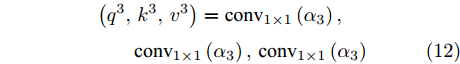

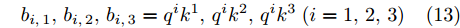

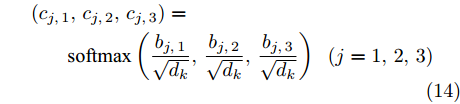

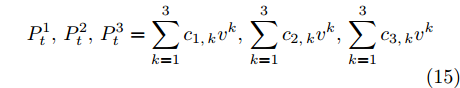

$ N_t $ 中的每一个元素分别通过$ 3\times3 $ 卷积, 获得输出$ \alpha_{1}, \alpha_{2}, \alpha_{3} $ ; 然后将输出特征$ \alpha_{1} $ 分别通过3个不同的$ 1\times1 $ 卷积, 获得$ N_t^1 $ 的查询特征向量$q^1$ 和键值对特征向量$k^1$ ,$v^1$ ; 输出特征$ \alpha_{2} $ ,$ \alpha_{3} $ 以与$ \alpha_{1} $ 相同的方式获得$ (q^2,k^2,v^2 ) $ 和$ (q^3,k^3,v^3 ) $ , 其中所有$ 3\times3 $ 和$ 1\times1 $ 卷积都保持输出和输入大小一致. 下面通过$ Q $ 、$ K $ 、$ V $ 来表示输出掩码$ P_t $ 获得的过程, 如式(6) ~ 式(8).$$ \begin{equation} B = Q K^{{\rm{T}}} \end{equation} $$ (6) $$ \begin{equation} C = {\rm{softmax}} \left(\frac{B}{ \sqrt{d_{k}}}, dim = -2\right) \end{equation} $$ (7) $$ \begin{equation} P_{t} = CV \end{equation} $$ (8) $ B $ 为$ N_t $ 中的每个元素的特征查询向量分别与其他元素的键向量作相似度度量获得的矩阵, 表示其他元素对当前元素的影响程度, 这里相似度度量是像素对像素的乘法操作,$ d_k $ 为键向量维度.$ C $ 通过$ {\rm{softmax}} $ 函数对相似度度量矩阵进行归一化,$ dim = -2 $ 表示对倒数第二个维度进行归一化; 获得像素权重矩阵以后, 与$ N_t $ 中的每个元素的值向量$ v $ 像素对像素相乘, 再进行融合获得最终输出表示$ P_t $ . 分别用式(9) ~ 式(15)表示详细计算过程, 其中卷积操作都拥有不同的参数.$$ \begin{split} &\alpha_{1}, \alpha_{2}, \alpha_{3} = {\rm{conv}}_{3 \times 3}\left(N_{t}^{1}\right), \\ &\qquad{\rm{conv}}_{3 \times 3}\left(N_{t}^{2}\right), {\rm{conv}}_{3 \times 3}\left(N_{t}^{3}\right) \end{split} $$ (9) $$ \begin{split} &\left(q^{1}, k^{1}, v^{1}\right) = {\rm{conv}}_{1 \times 1}\left(\alpha_{1}\right), \\ &\qquad{\rm{conv}}_{1 \times 1}\left(\alpha_{1}\right), {\rm{conv}}_{1 \times 1}\left(\alpha_{1}\right) \end{split} $$ (10) $$ \begin{split} &\left(q^{2}, k^{2}, v^{2}\right) = {\rm{conv}}_{1 \times 1}\left(\alpha_{2}\right),\\ &\qquad{\rm{conv}}_{1 \times 1}\left(\alpha_{2}\right), {\rm{conv}}_{1 \times 1}\left(\alpha_{2}\right) \end{split} $$ (11) $$ \begin{split} &\left(q^{3}, k^{3}, v^{3}\right) = {\rm{conv}}_{1 \times 1}\left(\alpha_{3}\right),\\ &\qquad{\rm{conv}}_{1 \times 1}\left(\alpha_{3}\right), {\rm{conv}}_{1 \times 1}\left(\alpha_{3}\right) \end{split} $$ (12) $$ \begin{equation} b_{i, 1}, b_{i, 2}, b_{i, 3} = q^{i} k^{1}, q^{i} k^{2}, q^{i} k^{3}\;(i = 1,2,3) \end{equation} $$ (13) $$ \begin{split} &\left(c_{j, 1}, c_{j, 2}, c_{j, 3}\right) =\\ &\qquad {\rm{softmax}} \left(\frac{b_{j, 1}}{ \sqrt{d_{k}}}, \frac{b_{j, 2}}{ \sqrt{d_{k}}}, \frac{b_{j, 3} }{ \sqrt{d_{k}}}\right)\;\;(j = 1,2,3) \end{split} $$ (14) $$ \begin{equation} P_{t}^{1}, P_{t}^{2}, P_{t}^{3} = \sum\limits_{k = 1}^{3} c_{1, k} v^{k}, \sum\limits_{k = 1}^{3} c_{2, k} v^{k}, \sum\limits_{k = 1}^{3} c_{3, k} v^{k} \end{equation} $$ (15) 3. 实验结果与分析项

3.1 数据集及预处理

本文实验使用NIH[22]胰腺分割数据集和MSD[32]胰腺分割数据集. NIH数据集总共包含82位受试者的CT样本, 每位受试者样本中CT切片数量最少181张, 最多466张, 每一张切片大小为

$ 512\;\times 512 $ 像素, 切片厚度在0.5 mm到1.0 mm之间; MSD数据集总共包含281位受试者的CT样本, 每位受试者样本中CT切片数量在37到751之间, 每一张切片大小为$ 512\times512 $ 像素.在本文实验中, 所有CT切片的HU (Housefield unit)值根据统计结果被限制在 [−120, 340], 并把CT切片及其对应标签归一化到[0, 1]之间, 同时随机做

$ [-15^{\circ}, 15^{\circ}] $ 随机旋转.3.2 实验方法细节及评价指标

实验使用Pytorch 1.2.0版本, 在Ubuntu 16.04操作系统的2块RTX 2080ti独立显卡进行训练, 训练时批次大小设置为3, 使用ReLU[33]作为激活函数, Adam[34]作为优化方法, 学习率

$ lr = 1.0\times10^{-4} $ , 受限于显存大小, 训练阶段最大迭代次数$ T $ 设置为4. 实验使用4折交叉验证方法确保结果的鲁棒性. 数据集被平均分为4份, 每次选择其中的3份作为训练集, 另1份作为验证集. 共实验4次, 计算平均DSC准确率, 作为最终结果.训练过程. 训练过程中需要通过反向传播算法最小化损失函数(式(5)). 值得注意的是: 训练过程前期, 由于网络参数的随机初始化, 各阶段产生了错误分割掩码, 所以训练初始阶段使用标准标签作为上下文先验, 增强并剪裁下一分割阶段网络的输入.

测试过程. 测试过程和训练过程不同, 测试阶段缺少标准标签, 所以使用各阶段分割掩码作为先验信息, 增强并缩小下一阶段的输入; 同样, 测试过程中不需要优化参数, 对于中间结果可以丢弃, 所以迭代次数的阈值不再限于GPU显存, 理论上可以无上界. 本文设定测试的循环分割次数停止阈值

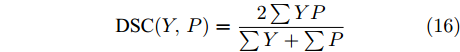

$ T $ 为6, 因为实验的观察结果表明, 当迭代次数较大时分割准确率提升有限.实验采用DSC作为评价指标, 如式(16)所示, 真实标签和分割掩码交集的两倍与真实标签和分割掩码并集的比值. 其中,

$ Y $ 是真实标签,$ P $ 为预测分割掩码.$$ \begin{equation} {\rm{DSC}}(Y, P) = \frac{2 \sum YP}{\sum Y+\sum P} \end{equation} $$ (16) 3.3 实验对比分析

本节基于公开数据集(NIH和MSD)设置不同实验对照组, 验证基于循环显著性校准网络的胰腺分割方法. 主要分为5部分: 1) 阶段上下文信息有效性分析; 2) 切片上下文信息有效性分析; 3)结合阶段上下文信息和切片上下文信息的循环显著性校准网络有效性分析; 4)输入切片数目对分割结果的影响; 5)网络模型参数量及时间消耗.

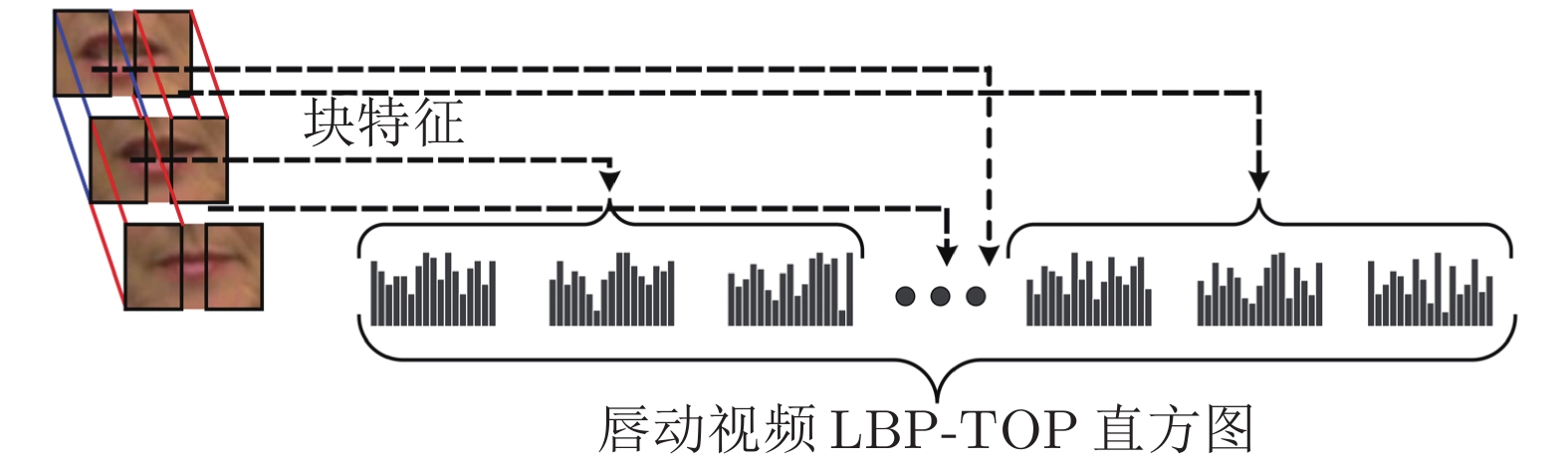

3.3.1 阶段上下文信息有效性分析

本节对比实验展示阶段上下文信息对于分割性能的影响, 分别进行了两部分实验.

1) 针对粗细分割分开训练、粗细分割联合训练以及循环显著性网络联合训练进行对比实验. 粗细分割联合训练以及循环显著性网络联合训练都使用了显著性增强模块利用阶段上下文信息, 其中每一分割阶段都去掉了卷积自注意力校准模块. 实验结果如表1所示. 其中粗细分割联合训练相比于粗细分割分开训练在两个数据集上都展示了更高的平均分割准确率和更低的标准差, 其主要因为显著性增强模块显著性增强胰腺区域并联合粗细分割阶段上下文信息进行联合优化; 而循环显著性网络联合训练相比于粗细分割联合训练带来的分割效果提升, 来源于使用更多的阶段上下文信息联合训练. 由上述分析可知, 更多的阶段上下文信息对于胰腺分割准确率提升有重要贡献.

表 1 粗细分割分开训练、联合训练和循环显著性联合训练分割结果Table 1 Segmentation of coarse-to-fine separate training, joint training and recurrent saliency joint training方法 平均 DSC (%) ± Std (%) 最大 DSC (%) 最小 DSC (%) NIH MSD NIH MSD NIH MSD 粗细分割分开训练 $81.96 \pm 5.79$ $78.92 \pm 9.61$ 89.58 89.91 48.39 51.23 粗细分割联合训练 $83.08 \pm 5.47$ $80.80 \pm 8.79$ 90.58 91.13 49.94 52.79 循环显著性网络联合训练 $85.56 \pm 4.79$ $83.24 \pm 5.93$ 91.14 92.80 62.82 64.47 2) 针对循环显著性网络测试阶段进行分析, 如表2所示. 随着第1次迭代, 在NIH数据集上, 胰腺的平均DSC准确率从76.81%上升到84.89%, 标准差从9.68%降到5.14%; 在MSD数据集上, 胰腺的平均DSC准确率从73.46%上升到81.67%, 标准差从11.73%降到8.05%. 由于粗分割阶段分割掩码上下文信息的引入, 平均DSC准确率和稳定性都有较大的提升. 但是, 后续迭代过程中, 由于分割掩码先验信息对于较准确分割结果作用的减少, 在两个数据集上平均DSC准确率和标准差仅仅小幅度上升和下降; 但对于最小DSC分割准确率提升明显, 分别从40.12%上升到最高的62.82%、47.76%上升到最高的64.47%, 有效提升了胰腺分割困难样本的DSC分割准确率.

表 2 循环显著性网络测试结果Table 2 Test results of recurrent saliency network segmentation迭代次数 平均 DSC (%) ± Std (%) 最大 DSC (%) 最小 DSC (%) NIH MSD NIH MSD NIH MSD 第 0 次迭代 (粗分割) $76.81 \pm 9.68$ $73.46 \pm 11.73$ 87.94 88.67 40.12 47.76 第 1 次迭代 $84.89 \pm 5.14$ $81.67 \pm 8.05$ 91.02 91.89 50.36 52.90 第 2 次迭代 $83.34\pm 5.07$ $82.23 \pm 7.57$ 90.96 91.94 53.73 56.81 第 3 次迭代 $85.63 \pm 4.96$ $82.78 \pm 6.83$ 91.08 92.32 57.96 58.04 第 4 次迭代 $85.79 \pm 4.83$ $82.94 \pm 6.46$ 91.15 92.56 62.97 63.73 第 5 次迭代 $85.82 \pm 4.82$ $83.15 \pm 6.04$ 91.20 92.77 62.85 63.99 第 6 次迭代 $85.86 \pm 4.79$ $83.24 \pm 5.93$ 91.14 92.80 62.82 64.47 3.3.2 切片上下文信息有效性分析

本节对比实验展示切片上下文信息对于胰腺分割性能的影响, 分别进行了两部分实验.

1) 针对粗细分割以及循环显著性网络联合训练在添加和未添加卷积自注意力校准模块利用切片上下文信息情况下, 进行实验结果分析, 如表3所示. 相对于未添加卷积自注意力校准模块的粗细分割联合训练, 添加了卷积自注意力校准模块的粗细分割联合训练在NIH数据集上, 胰腺平均DSC准确率提升了1.64%, 标准差下降了0.40%; 在MSD数据集上, 胰腺平均DSC准确率提升了1.29%, 标准差下降了0.88%. 在两个数据集上, 胰腺最小DSC分割准确率也有所上升. 同样, 循环显著性网络联合训练在添加卷积自注意力校准模块(本文方法)时, 相比于未添加卷积自注意力校准模块, 其分割性能在分割准确率和稳定性上均提升明显. 由此可以看出, 卷积自注意力校准模块能够利用切片上下文信息改善胰腺分割结果.

表 3 添加校准模块结果对比Table 3 Comparison results of adding calibration module方法 平均 DSC (%) ± Std (%) 最大 DSC (%) 最小 DSC (%) NIH MSD NIH MSD NIH MSD 粗细分割联合训练未添加校准模块 $83.08 \pm 5.47$ $80.80 \pm 8.79$ 90.58 91.13 49.94 52.79 粗细分割联合训练添加校准模块 $84.72 \pm 5.07$ $82.09 \pm 7.91$ 90.98 92.90 50.27 53.35 循环显著性网络未添加校准模块 $85.86 \pm 4.79$ $83.24 \pm 5.93$ 91.14 92.80 62.82 64.47 循环显著性网络添加校准模块 $87.11 \pm 4.02$ $85.13 \pm 5.17$ 92.57 94.48 67.30 68.24 2) 针对本文方法中校准模块分别基于卷积自注意力或者基于卷积循环神经网络在分割胰腺时进行实验对比, 如表4所示. 将本文方法框架中卷积自注意力校准模块分别换成单层卷积长短期记忆循环神经网络(CLSTM)[7]、单层卷积门控单元(ConvGRU)[11]和单层轨迹门控循环单元(TrajGRU)[35]等卷积循环神经网络, 进行实验对比. 从两个数据集的实验结果可以看出, 基于卷积自注意力的校准模块不管是在胰腺平均DSC分割准确率、标准差或者最大、最小分割准确率上都要好于部分基于卷积循环神经网络的校准模块[7, 11, 35].

表 4 胰腺分割基于CLSTM和自注意力结果对比Table 4 Comparison results based on CLSTM and self-attention mechanism in pancreas segmentation方法 平均 DSC (%) ± Std (%) 最大 DSC (%) 最小 DSC (%) NIH MSD NIH MSD NIH MSD 基于 CLSTM 校准模块 $86.13 \pm 4.54$ $84.21 \pm 5.80$ 91.20 93.47 63.18 64.76 基于 ConvGRU 校准模块 $86.34 \pm 4.21$ $84.41\pm 5.62$ 92.31 94.05 65.73 66.02 基于 TrajGRU 校准模块 $86.96 \pm 4.14$ $84.87 \pm 5.22$ 92.49 94.32 67.20 67.93 基于卷积自注意力校准模块 $ 87.11 \pm 4.02$ $85.13 \pm 5.17$ 92.57 94.48 67.30 68.24 3.3.3 循环显著性校准网络有效性分析

为进一步说明本文所提方法在胰腺分割方法中的优势, 本文方法与当前具有代表性的方法进行了比较.

NIH胰腺数据集上实验结果如表5所示, 本文方法与其他具有代表性的胰腺基准分割方法进行了比较. 相比于其他二维胰腺分割方法[3, 19-23, 26, 36], 在以下两方面改进: 1) 联合训练利用更多的阶段上下文信息; 2) 使用卷积自注意力校准模块校准每一阶段胰腺分割掩码. 平均DSC分割准确率从最高的85.40%提升到87.11%, 显著改善了胰腺平均分割结果; 最大分割准确率从最高的91.46%上升到92.57%. 相比于三维胰腺分割方法[5, 10, 29-30, 37], 本文提出的卷积自注意力校准模块充分利用切片上下文信息, 显著减少参数量(GPU显存消耗)的同时, 达到三维分割同等效果, 提高了运算效率, 并且将胰腺平均分割准确率从最高的86.19%提升到87.11%, 最大分割准确率从最高的91.90%上升到92.57%.

表 5 NIH数据集上不同分割方法结果比较(“—”表示文献中缺少参数说明)Table 5 Comparison of different segmentation methods on NIH dataset (“—” indicates a lack of reference in the literature)方法 分割维度 平均 DSC (%) ±

Std (%)最大 DSC (%) 最小 DSC (%) 文献 [22] 2D $71.80 \pm 10.70$ 86.90 25.00 文献 [23] 2D $81.27 \pm 6.27$ 88.96 50.69 文献 [36] 2D $82.40 \pm 6.70$ 90.10 60.00 文献 [3] 2D $82.37 \pm 5.68$ 90.85 62.43 文献 [37] 3D $84.59 \pm 4.86$ 91.45 69.62 文献 [10] 3D $85.99 \pm 4.51$ 91.20 57.20 文献 [5] 3D $85.93 \pm 3.42$ 91.48 75.01 文献 [29] 3D $82.47 \pm 5.50$ 91.17 62.36 文献 [20] 2D $82.87 \pm 1.00$ 87.67 81.18 文献 [19] 2D $84.90 \pm -$ 91.46 61.82 文献 [26] 2D $85.35 \pm 4.13$ 91.05 71.36 文献 [21] 2D $85.40 \pm 1.60$ — — 文献 [30] 3D $86.19 \pm -$ 91.90 69.17 本文方法 2D 87.11 ± 4.02 92.57 67.30 MSD胰腺数据集上实验结果如表6所示, 本文方法与具有代表性的胰腺基准分割方法进行了比较. 相比于二维分割方法[28], 平均DSC分割准确率从84.71%提升到85.13%, 标准差从7.13% 降到5.17%, 显著提升了胰腺分割方法的稳定性; 最小分割准确率从58.62% 上升到68.24%, 提高了困难样本的分割准确率. 相比于三维胰腺分割方法[38-40], 平均DSC分割准确率从最高的84.22%提升到85.13%; 最大和最小分割准确率均有所提升.

本文方法在NIH及MSD胰腺数据集上箱线图如图7所示. 本文对部分结果进行了展示, 如图8、图9所示. 选取了5个受试者样本, 同一行为同一个受试者不同切片的胰腺分割结果. 蓝色实线代表预测结果, 红色实线代表真实标签. 从图中可以看出, 本文方法分割结果和真实标签非常接近.

3.3.4 输入切片数目对分割结果的影响

为进一步说明胰腺输入切片数目对本文方法的影响, 将切片数目输入分别设置为3、5、7进行实验比较, 如表7和表8所示. 随着胰腺输入切片数目的增加, 平均DSC分割准确率和最大DSC分割准确率均有小幅度提升, 最小DSC分割准确率提升更为明显. 可以看出, 增加切片数目对于分割困难样本具有较大的帮助. 对于胰腺器官边界模糊的困难样本、胰腺周围脂肪与十二指肠灰度分布较为接近的困难样本以及切片中分割目标较小的困难样本, 结合更多的切片数目能够明显提升目标分割精度.

表 7 NIH数据集不同网络输入切片数目分割结果比较Table 7 Comparison of the segmentation of different network input slices on NIH dataset网络输入

切片数目分割维度 平均 DSC (%) ±

Std (%)最大 DSC (%) 最小 DSC (%) 3 2D $87.11\pm4.02$ 92.57 67.30 5 2D $87.53\pm3.74$ 92.69 69.32 7 2D $87.96\pm3.25$ 92.94 71.91 表 8 MSD数据集不同网络输入切片数目分割结果比较Table 8 Comparison of the segmentation of different network input slices on MSD dataset网络输入

切片数目分割维度 平均 DSC (%) ±

Std (%)最大 DSC (%) 最小 DSC (%) 3 2D $85.13\pm5.17$ 94.48 68.24 5 2D $85.86\pm5.01$ 94.75 70.31 7 2D $86.29\pm4.80$ 95.01 73.07 3.3.5 网络参数量及时间消耗

本文使用UNet作为基础骨干网络, 除粗分割阶段, 后续分割阶段共享网络参数, 减少了参数量. 相比于FCN[2]经典分割网络, 提出的分割模型具有更少的参数量, 如表9所示. 虽然相比于单阶段的UNet[15], 3D UNet[41], AttentionUNet[42] 和UNet++[43]等分割网络, 参数量有所增加, 但是单阶段的分割方法分割精度较低; 相比于Fix-point[3]使用FCN作为骨干网络并且利用三个轴状面分别训练模型分割胰腺, 本文参数量显著减少; 相比于GGPFN[28], 虽然参数量有所增加, 但是分割精度有所提升.

文献[44]使用胰腺器官的三个轴状面作为输入训练模型, 并且分割阶段使用额外两个模型融合视觉特征, 增加了时间消耗, 如表10所示. 文献[23]在三个轴状面上分别进行定位、分割, 在Titan X (12 GB) GPU上训练了9 ~ 12个小时. 文献[22]使用由下至上的方法, 首先使用超像素分块, 然后基于超像素分块集成分割结果, 每个阶段分开训练. 而本文胰腺分割方法使用端到端的训练方法, 降低了每个病例的平均测试时间. 文献[3]使用固定点方法, 分别使用三个FCN训练胰腺的三个轴状面输入图像, 循环使用细分割掩码位置信息优化分割掩码, 显著增加了时间消耗. 相比于上述方法, 本文胰腺分割方法虽然增加了循环显著性模块和校准模块, 但循环显著性模块和校准模块设计简单并且基于矩阵运算, 运算时间增加不明显, 并且本文方法仅基于横断面作为输入, 使用UNet[14]而非FCN[2]作为分割骨干网络, 显著减少参数量及前馈传播时间. 相比于文献[36]基于循环卷积神经网络使用多切片作为输入, 本文方法训练及测试时间有所增加, 但分割精确度提升明显.

表 10 不同分割方法时间消耗比较(“—”表示文献中缺少参数说明)Table 10 Comparison of time consumption of different segmentation methods (“—” indicates a lack of reference in the literature)4. 总结与展望

针对胰腺分割面临的问题, 本文提出了基于循环显著性校准网络的胰腺分割方法. 其主要贡献在于: 1)利用更多的阶段上下文信息联合训练, 改善了传统由粗到细胰腺分割方法仅使用粗分割阶段输出掩码定位框坐标信息作为细分割网络输入的先验, 导致缺少阶段上下文信息的问题; 2) 使用卷积自注意力校准模块跨顺序、平行化利用相邻切片上下文信息的同时, 自动校准每一分割阶段输出掩码, 解决了胰腺与邻近器官密度较为接近、组织重叠部分界限分辨困难导致的误分割问题. 和其他胰腺分割方法相比, 本文方法显著提高了样本平均DSC分割准确率并改善了困难样本分割结果. 本文方法可用于辅助医疗诊断, 后续研究将考虑如何进一步利用更多的阶段上下文信息及切片上下文信息改善分割结果的同时, 使用模型蒸馏方法轻量化模型框架.

-

图 2 唇读难点示例. (a)第一行为单词place的实例, 第二行为单词please的实例, 唇形变化难以区分, 图片来自GRID数据集; (b)上下两行分别为单词wind在不同上下文环境下的不同读法/wind/与/waind/实例, 唇形变化差异较大; (c)上下两行分别为两位说话人说同一个单词after的实例, 唇形变化存在差异, 图片来自LRS3-TED数据集; (d)说话人在说话过程中头部姿态实时变化实例. 上述对比实例均采用相同的视频时长和采样间隔.

Fig. 2 Challenging examples of lip reading. (a) The upper line is an instance of the word place, the lower line is an instance of the word please; (b) The upper and lower lines are respectively different pronunciation of word wind in different contexts; (c) The upper and lower lines respectively tell the same word after, with big difference in lip motion; (d) An example of a real-time change in the head posture of the speaker during the speech. The above comparison examples all use the same video duration and sampling interval.

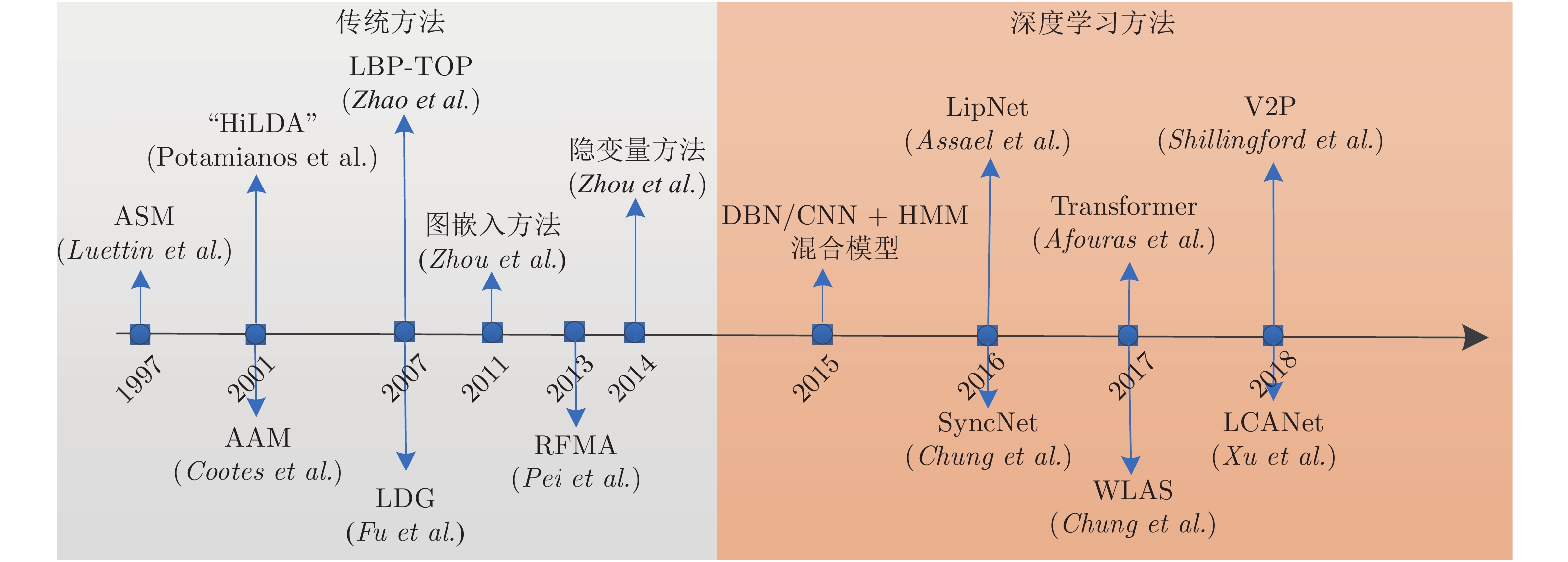

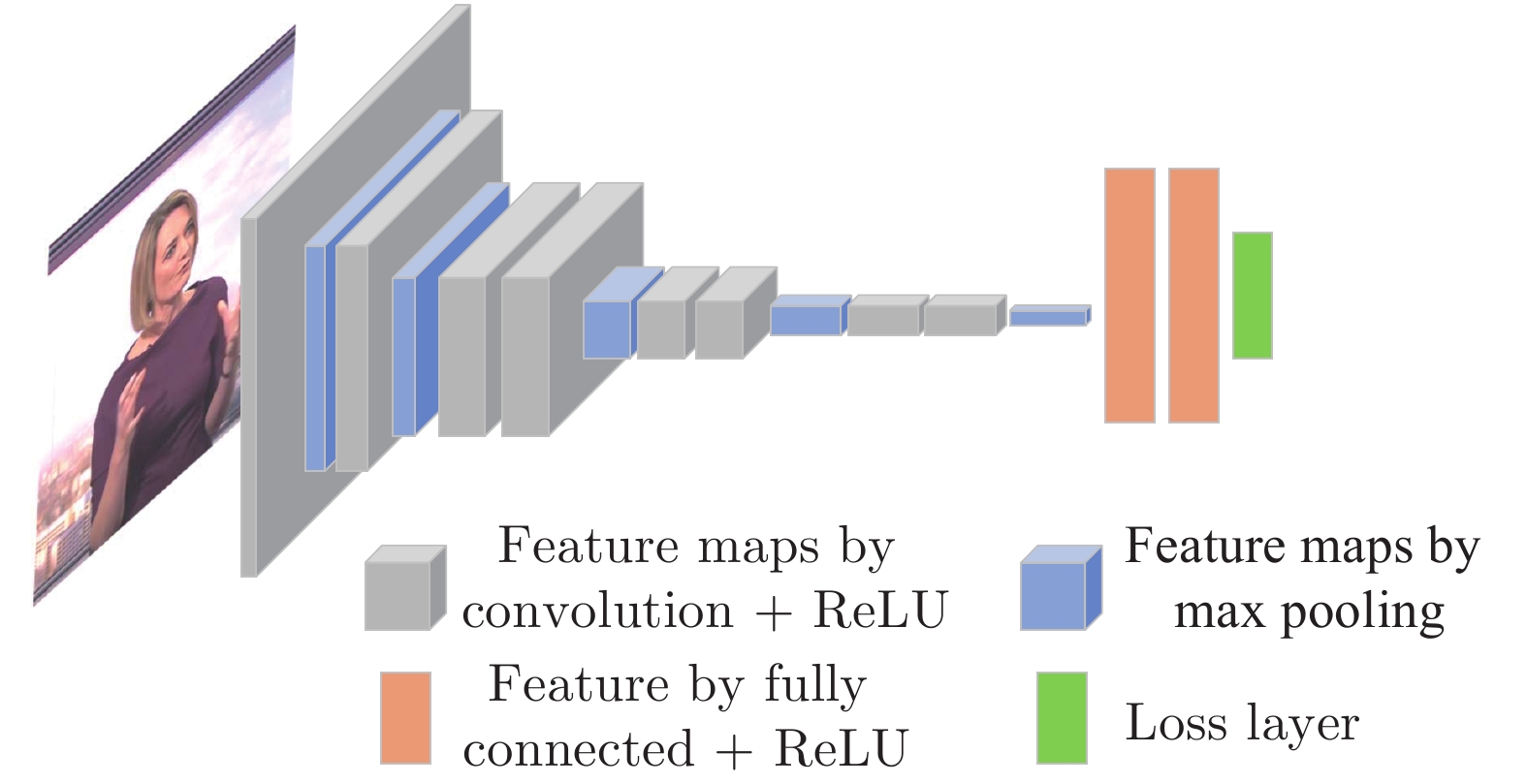

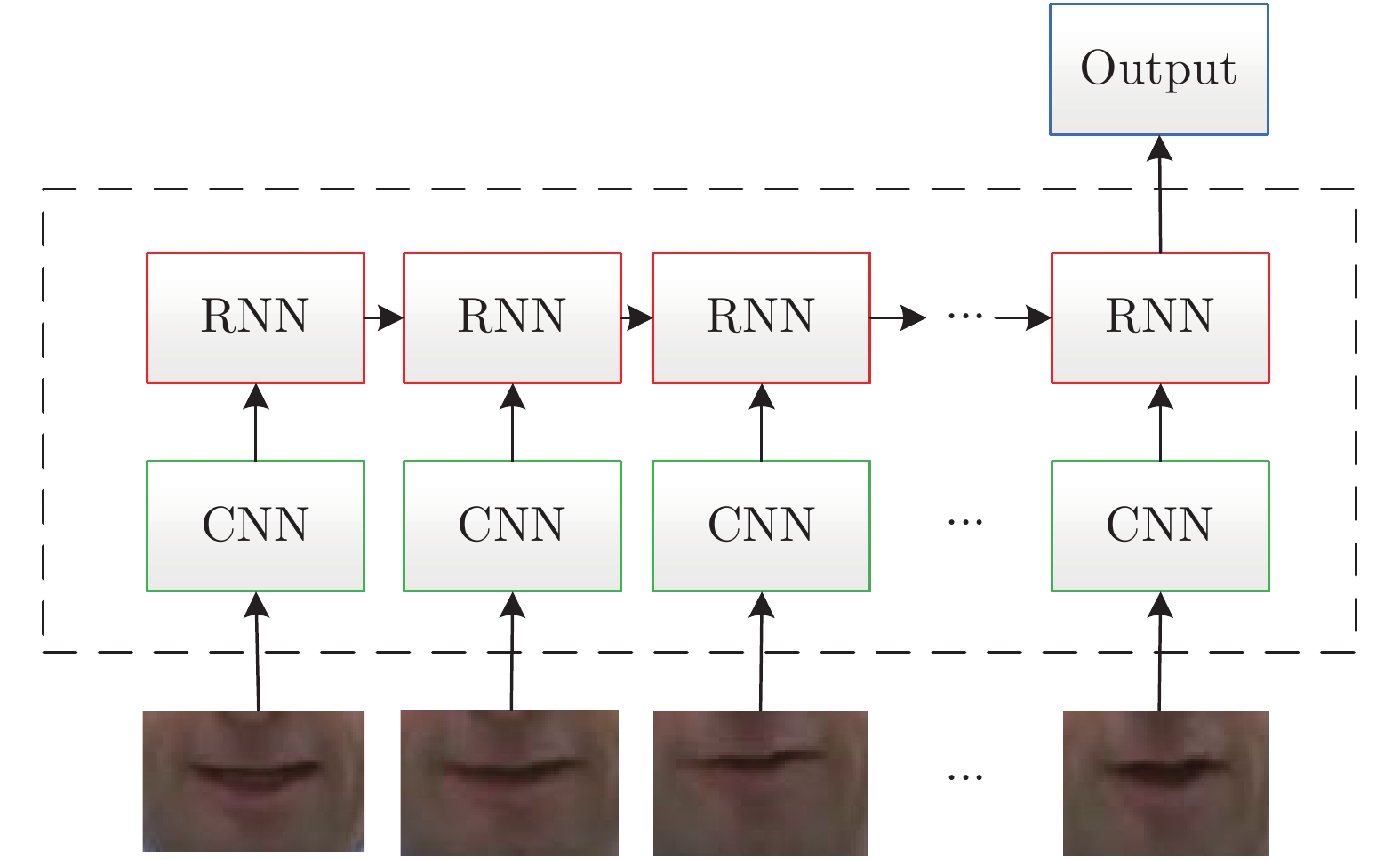

图 4 唇读研究过程中代表性方法. 传统特征提取方法: 主动形状模型ASM[51], 主动表观模型AAM[39], HiLDA[38], LBP-TOP[52], 局部判别图模型[40], 图嵌入方法[53], 随机森林流形对齐RFMA[41], 隐变量方法[54]; 深度学习方法: DBN/CNN+HMM混合模型[42-48], SyncNet[55], LipNet[49], WLAS[10], Transformer[50], LCANet[56], V2P[15].

Fig. 4 Representative methods in the process of lip reading research. Traditional feature extraction methods:ASM[51], AAM[39], HiLDA[38], LBP-TOP[52], LDG[40], Graph Embedding[53], RFMA[41], Hidden variable method[54]; Deep learning based methods: DBN/CNN+HMM hybrid model[42-48], SyncNet[55], LipNet[49], WLAS[10], Transformer[50], LCANet[56], V2P[15].

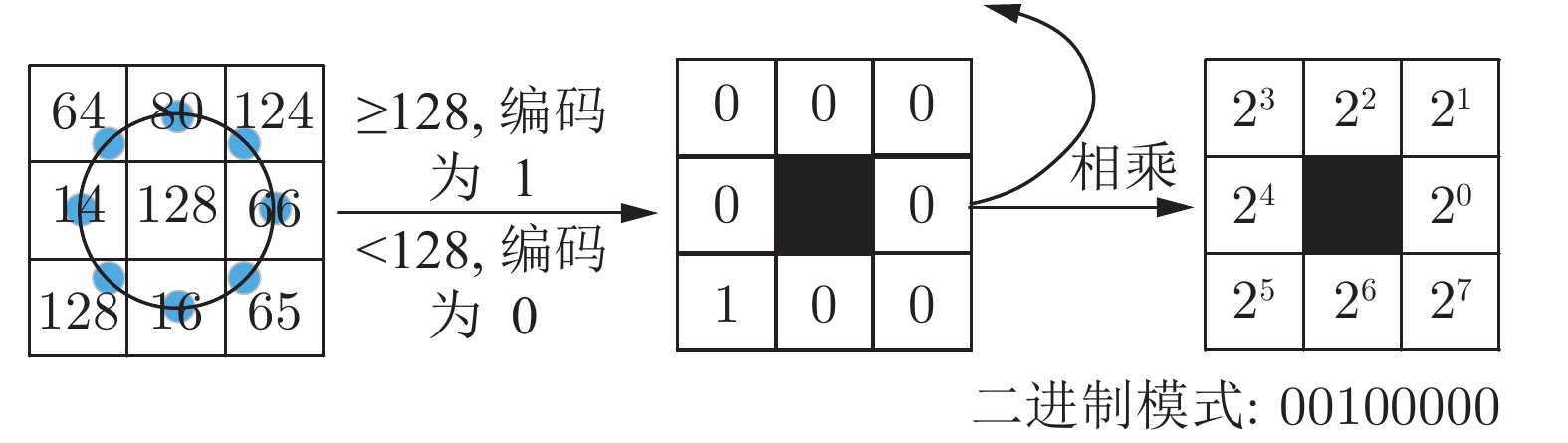

表 1 传统时空特征提取算法优缺点总结

Table 1 A summary of advantages and disadvantages of traditional spatiotemporal feature extraction methods

时空特征提取方法 代表性方法 优势 不足 基于表观的 全局图像线性变换[38,57,60-63],

图嵌入与流形[40-41, 53-54,65],

LBP-TOP[52,66], HOG[67], 光流[29, 68]···1) 特征提取速度快;

2) 无需复杂的人工建模.1) 对唇部区域提取精度要求高;

2) 对环境变化、姿态变化、噪声敏感;

3) 不同讲话者之间泛化性能较差.基于形状的 轮廓描述[69-72],

AFs[73], 形状模型[74-75]···1) 具有良好的可解释性;

2) 不同讲话者之间泛化性能较好;

3) 能有效去除冗余信息.1) 会造成部分有用信息丢失;

2) 需要大量的人工标注;

3) 对于姿态变化非常敏感.形状表观融合的 形状+表观特征串联[76-77],

形状表观模型[39]···1) 特征表达能力较强;

2) 不同讲话者之间泛化性能较好.1) 模型复杂,运算量大;

2) 需要大量的人工标注.表 3 单词、短语和语句识别数据集, 其中(s)代表不同语句的数量. 下载地址为: MIRACL-VC[171], LRW[172], LRW-1000[173], GRID[174], OuluVS[175], VIDTIMIT[176], LILiR[177], MOBIO[178], TCD-TIMIT[179], LRS[180], VLRF[181]

Table 3 Word, phrase and sentence lip reading datasets and their download link: MIRACL-VC[171], LRW[172], LRW-1000[173], GRID[174], OuluVS[175], VIDTIMIT[176], LILiR[177], MOBIO[178], TCD-TIMIT[179], LRS[180], VLRF[181]

数据集 语种 识别任务 词汇量 话语数目 说话人数目 姿态 分辨率 谷歌引用 发布年份 IBMViaVoice 英语 语句 10 500 24 325 290 0 704 × 480, 30 fps 299 2000 VIDTIMIT 英语 语句 346 (s) 430 43 0 512 × 384, 25 fps 51 2002 AVICAR 英语 语句 1 317 10 000 100 −15 $\sim$ 15720 × 480, 30 fps 170 2004 AV-TIMIT 英语 语句 450 (s) 4 660 233 0 720 × 480, 30 fps 127 2004 GRID 英语 短语 51 34 000 34 0 720 × 576, 25 fps 700 2006 IV2 法语 语句 15 (s) 4 500 300 0,90 780 × 576, 25 fps 19 2008 UWB-07-ICAV 捷克语 语句 7 550 (s) 10 000 50 0 720 × 576, 50 fps 16 2008 OuluVS 英语 短语 10 (s) 1 000 20 0 720 × 576, 25 fps 211 2009 WAPUSK20 英语 短语 52 2 000 20 0 640 × 480, 32 fps 16 2010 LILiR 英语 语句 1 000 2 400 12 0, 30, 45, 60, 90 720 × 576, 25 fps 67 2010 BL 法语 语句 238 (s) 4 046 17 0, 90 720 × 576, 25 fps 12 2011 UNMC-VIER 英语 语句 11 (s) 4 551 123 0, 90 708 × 640, 25 fps 8 2011 MOBIO 英语 语句 30 186 152 0 640 × 480, 16 fps 175 2012 MIRACL-VC 英语 单词 10 1 500 15 0 640 × 480, 15 fps 22 2014 短语 10 (s) 1 500 Austalk 英语 单词 966 966 000 1 000 0 640 × 480 11 2014 语句 59 (s) 59 000 MODALITY 英语 单词 182 (s) 231 35 0 1 920 × 1 080, 100 fps 23 2015 RM-3000 英语 语句 1 000 3 000 1 0 360 × 640, 60 fps 7 2015 IBM AV-ASR 英语 语句 10 400 262 0 704 × 480, 30 fps 103 2015 TCD-TIMIT 英语 语句 5 954 (s) 6 913 62 0, 30 1920 × 1080, 30 fps 59 2015 OuluVS2 英语 短语 10 1 590 53 0, 30, 45, 60, 90 1920 × 1080, 30 fps 46 2015 语句 530 (s) 530 LRW 英语 单词 500 550 000 1 000+ 0 $\sim$ 30256 × 256, 25 fps 115 2016 HAVRUS 俄语 语句 1 530 (s) 4 000 20 0 640 × 480, 200 fps 13 2016 LRS2-BBC 英语 语句 62 769 144 482 1 000+ 0 $\sim$ 30160 × 160, 25 fps 172 2017 VLRF 西班牙语 语句 1 374 10 200a 24 0 1 280 × 720, 50 fps 6 2017 LRS3-TED 英语 语句 70 000 151 819 1 000+ −90 $\sim$ 90224 × 224, 25 fps 2 2018 LRW-1000 中文 单词 1 000 745 187 2 000+ −90 $\sim$ 901 920 × 1 080, 25 fps 0 2018 LSVSR 英语 语句 127 055 2 934 899 1 000+ −30 $\sim$ 30128 × 128, 23 ~ 30 fps 16 2018 表 2 字母、数字识别数据集. 下载地址为: AVLetters[152], AVICAR[153], XM2VTS[154], BANCA[155], CUAVE[156], VALID[157], CENSREC-1-AV[158], Austalk[159], OuluVS2[160]

Table 2 Alphabet and digit lip reading datasets and their download link: AVLetters[152], AVICAR[153], XM2VTS[154], BANCA[155], CUAVE[156], VALID[157], CENSREC-1-AV[158], Austalk[159], OuluVS2[160]

数据集 语种 识别任务 类别数目 话语数目 说话人数目 姿态 分辨率 谷歌引用 发布年份 AVLetters 英语 字母 26 780 10 0 376 × 288, 25 fps 507 1998 XM2VTS 英语 数字 10 885 295 0 720 × 576, 25 fps 1 617 1999 BANCA 多语种 数字 10 29 952 208 0 720 × 576, 25 fps 530 2003 AVICAR 英语 字母 26 26 000 100 −15 $\sim$ 15720 × 480, 30 fps 170 2004 数字 13 23 000 CUAVE 英语 数字 10 7 000+ 36 −90, 0, 90 720 × 480, 30 fps 292 2002 VALID 英语 数字 10 530 106 0 720 × 576, 25 fps 38 2005 AVLetters2 英语 字母 26 910 5 0 1 920 × 1 080, 50 fps 62 2008 IBMSR 英语 数字 10 1 661 38 −90, 0, 90 368 × 240, 30 fps 17 2008 CENSREC-1-AV 日语 数字 10 5 197 93 0 720 × 480, 30 fps 25 2010 QuLips 英语 数字 10 3 600 2 −90 $\sim$ 90720 × 576, 25 fps 21 2010 Austalk 英语 数字 10 24 000 1 000 0 640 × 480 11 2014 OuluVS2 英语 数字 10 159 53 0 $\sim$ 901 920 × 1 080, 30 fps 46 2015 表 4 不同数据集下代表性方法比较

Table 4 Comparison of representative methods under different datasets

数据集 识别任务 参考文献 模型 主要实验条件 识别率 前端特征提取 后端分类器 音频信号 讲话者依赖 外部语言模型 最小识别单元 AVLetters 字母 [41] RFMA × √ × 字母 69.60 % [48] RTMRBM SVM √ √ × 字母 66.00 % [42] ST-PCA Autoencoder × × × 字母 64.40 % [52] LBP-TOP SVM × √ × 字母 62.80 % × × 43.50 % [193] DBNF+DCT LSTM × √ × 字母 58.10 % CUAVE 数字 [102] AAM HMM √ × × 数字 83.00 % [91] HOG+MBH SVM × × × 数字 70.10 % √ × 90.00 % [194] DBNF DNN-HMM × × × 音素 64.90 % [60] DCT HMM √ × × 数字 60.40 % LRW 单词 [128] 3D-CNN+ResNet BiLSTM × × × 单词 83.00 % [131] 3D-CNN+ResNet BiGRU × × × 单词 82.00 % √ × 98.00 % [10] CNN LSTM+Attention × × × 单词 76.20 % [9] CNN × × × 单词 61.10 % GRID 短语 [56] 3D-CNN+highway BiGRU+Attention × √ × 字符 97.10 % [10] CNN LSTM+Attention × √ × 单词 97.00 % [134] Feed-forward LSTM × √ × 单词 84.70 % √ 95.90 % [49] 3D-CNN BiGRU × × × 字符 93.40 % [126] HOG SVM × √ × 单词 71.20 % LRS3-TED 语句 [151] 3D-CNN+ResNet Transformer+seq2seq × × √ 字符 41.10 % Transformer +CTC 33.70 % [15] 3DCNN BiLSTM+CTC × × √ 音素 44.90 % -

[1] McGurk H, MacDonald J. Hearing lips and seeing voices. Nature, 1976, 264(5588): 746−748 doi: 10.1038/264746a0 [2] Potamianos G, Neti C, Gravier G, Garg A, Senior A W. Recent advances in the automatic recognition of audiovisual speech. Proceedings of the IEEE, 2003, 91(9): 1306−1326 doi: 10.1109/JPROC.2003.817150 [3] Calvert G A, Bullmore E T, Brammer M J, Campbell R, Williams S C R, McGuire P K, et al. Activation of auditory cortex during silent lipreading. Science, 1997, 276(5312): 593−596 doi: 10.1126/science.276.5312.593 [4] Deafness and hearing loss [online] available:https://www.who.int/news-room/fact-sheets/detail/deafness-and-hearing-loss, July 1, 2019 [5] Tye-Murray N, Sommers M S, Spehar B. Audiovisual integration and lipreading abilities of older adults with normal and impaired hearing. Ear and Hearing, 2007, 28(5): 656−668 doi: 10.1097/AUD.0b013e31812f7185 [6] Akhtar Z, Micheloni C, Foresti G L. Biometric liveness detection: Challenges and research opportunities. IEEE Security and Privacy, 2015, 13(5): 63−72 doi: 10.1109/MSP.2015.116 [7] Rekik A, Ben-Hamadou A, Mahdi W. Human machine interaction via visual speech spotting. In: Proceedings of the 2015 International Conference on Advanced Concepts for Intelligent Vision Systems. Catania, Italy: Springer, 2015. 566−574 [8] Suwajanakorn S, Seitz S M, Kemelmacher-Shlizerman I. Synthesizing obama: Learning lip sync from audio. ACM Transactions on Graphics, 2017, 36(4): Article No.95 [9] Chung J S, Zisserman A. Lip reading in the wild. In: Proceedings of the 2016 Asian Conference on Computer Vision. Taiwan, China: Springer, 2016. 87−103 [10] Chung J S, Senior A, Vinyals O, Zisserman A. Lip reading sentences in the wild. In: Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition. Honolulu, USA: IEEE, 2017. 3444−3453 [11] Chen L, Li Z, K Maddox R K, Duan Z, Xu C. Lip movements generation at a glance. In: Proceedings of the 2018 European Conference on Computer Vision. Munich, Germany: Springer, 2018. 538−553 [12] Gabbay A, Shamir A, Peleg S. Visual speech enhancement. arXiv preprint arXiv: 1711.08789, 2017 [13] 黄雅婷, 石晶, 许家铭, 徐波. 鸡尾酒会问题与相关听觉模型的研究现状与展望. 自动化学报, 2019, 45(2): 234−251Huang Ya-Ting, Shi Jing, Xu Jia-Ming, Xu Bo. Research advances and perspectives on the cocktail party problem and related auditory models. Acta Automatica Sinica, 2019, 45(2): 234−251 [14] Akbari H, Arora H, Cao L L, Mesgarani N. Lip2AudSpec: Speech reconstruction from silent lip movements video. In: Proceedings of the 2018 IEEE International Conference on Acoustics, Speech and Signal Processing. Calgary, Canada: IEEE, 2018. 2516−2520 [15] Shillingford B, Assael Y, Hoffman M W, Paine T, Hughes C, Prabhu U, et al. Large-scale visual speech recognition. arXiv preprint arXiv: 1807.05162, 2018 [16] Mandarin Audio-Visual Speech Recognition Challenge [online] available: http://vipl.ict.ac.cn/homepage/mavsr/index.html, July 1, 2019 [17] Potamianos G, Neti C, Luettin J, Matthews I. Audio-visual automatic speech recognition: An overview. Issues in Visual and Audio-Visual Speech Processing. Cambridge: MIT Press, 2004. 1−30 [18] Zhou Z H, Zhao G Y, Hong X P, Pietikainen M. A review of recent advances in visual speech decoding. Image and Vision Computing, 2014, 32(9): 590−605 doi: 10.1016/j.imavis.2014.06.004 [19] Fernandez-Lopez A, Sukno F M. Survey on automatic lip-reading in the era of deep learning. Image and Vision Computing, 2018, 78: 53−72 doi: 10.1016/j.imavis.2018.07.002 [20] 姚鸿勋, 高文, 王瑞, 郎咸波. 视觉语言-唇读综述. 电子学报, 2001, 29(2): 239−246 doi: 10.3321/j.issn:0372-2112.2001.02.025Yao Hong-Xun, Gao Wen, Wang Rui, Lang Xian-Bo. A survey of lipreading-one of visual languages. Acta Electronica Sinica, 2001, 29(2): 239−246 doi: 10.3321/j.issn:0372-2112.2001.02.025 [21] Cox S J, Harvey R W, Lan Y, et al. The challenge of multispeaker lip-reading. In: Proceedings of AVSP. 2008: 179−184 [22] Messer K, Matas J, Kittler J, et al. XM2VTSDB: The extended M2VTS database. In: Proceedings of the Second International Conference on Audio and Video-based Biometric Person Authentication. 1999, 964: 965−966 [23] Bailly-Bailliére E, Bengio S, Bimbot F, Hamouz M, Kittler J, Mariéthoz J, et al. The BANCA database and evaluation protocol. In: Proceedings of the 2003 International Conference on Audio- and Video-based Biometric Person Authentication. Guildford, United Kingdom: Springer, 2003. 625−638 [24] Ortega A, Sukno F, Lleida E, Frangi A F, Miguel A, Buera L, et al. AV@CAR: A Spanish multichannel multimodal corpus for in-vehicle automatic audio-visual speech recognition. In: Proceedings of the 4th International Conference on Language Resources and Evaluation. Lisbon, Portugal: European Language Resources Association, 2004. 763−766 [25] Lee B, Hasegawa-Johnson M, Goudeseune C, Kamdar S, Borys S, Liu M, et al. AVICAR: Audio-visual speech corpus in a car environment. In: Proceedings of the 8th International Conference on Spoken Language Processing. Jeju Island, South Korea: International Speech Communication Association, 2004. 2489−2492 [26] Twaddell W F. On defining the phoneme. Language, 1935, 11(1): 5−62 [27] Woodward M F, Barber C G. Phoneme perception in lipreading. Journal of Speech and Hearing Research, 1960, 3(3): 212−222 doi: 10.1044/jshr.0303.212 [28] Fisher C G. Confusions among visually perceived consonants. Journal of Speech and Hearing Research, 1968, 11(4): 796−804 doi: 10.1044/jshr.1104.796 [29] Cappelletta L, Harte N. Viseme definitions comparison for visual-only speech recognition. In: Proceedings of the 19th European Signal Processing Conference. Barcelona, Spain: IEEE, 2011. 2109−2113 [30] Wu Y, Ji Q. Facial landmark detection: A literature survey. International Journal of Computer Vision, 2019, 127(2): 115−142 doi: 10.1007/s11263-018-1097-z [31] Chrysos G G, Antonakos E, Snape P, Asthana A, Zafeiriou S. A comprehensive performance evaluation of deformable face tracking "in-the-wild". International Journal of Computer Vision, 2018, 126(2-4): 198−232 doi: 10.1007/s11263-017-0999-5 [32] Koumparoulis A, Potamianos G, Mroueh Y, et al. Exploring ROI size in deep learning based lipreading. In: Proceedings of AVSP. 2017: 64−69 [33] Deller J R Jr, Hansen J H L, Proakis J G. Discrete-Time Processing of Speech Signals. New York: Macmillan Pub. Co, 1993. [34] Rabiner L R, Juang B H. Fundamentals of Speech Recognition. Englewood Cliffs: Prentice Hall, 1993. [35] Young S, Evermann G, Gales M J F, Hain T, Kershaw D, Liu X Y, et al. The HTK Book. Cambridge: Cambridge University Engineering Department, 2002. [36] Povey D, Ghoshal A, Boulianne G, Burget L, Glembek O, Goel N, et al. The Kaldi speech recognition toolkit. In: IEEE 2011 Workshop on Automatic Speech Recognition and Understanding. Hilton Waikoloa Village, Big Island, Hawaii, US: IEEE, 2011. [37] Matthews I, Cootes T F, Bangham J A, Cox S, Harvey R. Extraction of visual features for lipreading. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2002, 24(2): 198−213 doi: 10.1109/34.982900 [38] Potamianos G, Graf H P, Cosatto E. An image transform approach for HMM based automatic lipreading. In: Proceedings of 1998 International Conference on Image Processing. Chicago, USA: IEEE, 1998. 173−177 [39] Cootes T F, Edwards G J, Taylor C J. Active appearance models. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2001, 23(6): 681−685 doi: 10.1109/34.927467 [40] Fu Y, Zhou X, Liu M, Hasegawa-Johnson M, Huang T S. Lipreading by locality discriminant graph. In: Proceedings of 2007 IEEE International Conference on Image Processing. San Antonio, USA: IEEE, 2007. III−325−III−328 [41] Pei Y R, Kim T K, Zha H B. Unsupervised random forest manifold alignment for lipreading. In: Proceedings of 2013 IEEE International Conference on Computer Vision. Sydney, Australia: IEEE, 2013. 129−136 [42] Ngiam J, Khosla A, Kim M, Nam J, Lee H, Ng A Y. Multimodal deep learning. In: Proceeding of the 28th International Conference on Machine Learning. Washington, USA: ACM, 2011. 689−696 [43] Salakhutdinov R, Mnih A, Hinton G. Restricted Boltzmann machines for collaborative filtering. In: Proceedings of the 24th International Conference on Machine Learning. Corvallis, USA: ACM, 2007. 791−798 [44] Huang J, Kingsbury B. Audio-visual deep learning for noise robust speech recognition. In: Proceedings of 2013 IEEE International Conference on Acoustics, Speech and Signal Processing. Vancouver, Canada: IEEE, 2013. 7596−7599 [45] Ninomiya H, Kitaoka N, Tamura S, et al. Integration of deep bottleneck features for audio-visual speech recognition. In: Proceedings of the 16th Annual Conference of the International Speech Communication Association. 2015. [46] Sui C, Bennamoun M, Togneri R. Listening with your eyes: Towards a practical visual speech recognition system using deep Boltzmann machines. In: Proceedings of the IEEE International Conference on Computer Vision. Santiago, Chile: IEEE, 2015. 154−162 [47] Noda K, Yamaguchi Y, Nakadai K, Okuno H G, Ogata T. Audio-visual speech recognition using deep learning. Applied Intelligence, 2015, 42(4): 722−737 doi: 10.1007/s10489-014-0629-7 [48] Hu D, Li X L, Lu X Q. Temporal multimodal learning in audiovisual speech recognition. In: Proceedings of 2016 IEEE Conference on Computer Vision and Pattern Recognition. Las Vegas, USA: IEEE, 2016. 3574−3582 [49] Assael Y M, Shillingford B, Whiteson S, De Freitas N. LipNet: End-to-end sentence-level lipreading. arXiv preprint arXiv:1611.01599, 2016 [50] Afouras T, Chung J S, Zisserman A. Deep lip reading: A comparison of models and an online application. arXiv preprint arXiv:1806.06053, 2018 [51] Luettin J, Thacker N A. Speechreading using probabilistic models. Computer Vision and Image Understanding, 1997, 65(2): 163−178 doi: 10.1006/cviu.1996.0570 [52] Zhao G Y, Barnard M, Pietikäinen M. Lipreading with local spatiotemporal descriptors. IEEE Transactions on Multimedia, 2009, 11(7): 1254−1265 doi: 10.1109/TMM.2009.2030637 [53] Zhou Z H, Zhao G Y, Pietikäinen M. Towards a practical lipreading system. In: Proceedings of IEEE Conference on Computer Vision and Pattern Recognition. Providence, RI, USA: IEEE, 2011. 137−144 [54] Zhou Z H, Hong X P, Zhao G Y, Pietikäinen M. A compact representation of visual speech data using latent variables. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2014, 36(1): 1 [55] Chung J S, Zisserman A. Out of time: Automated lip sync in the wild. In: Proceedings of Asian Conference on Computer Vision. Taiwan, China: Springer, 2016. 251−263 [56] Xu K, Li D W, Cassimatis N, Wang X L. LCANet: End-to-end lipreading with cascaded attention-CTC. In: Proceedings of the 13th IEEE International Conference on Automatic Face & Gesture Recognition. Xi'an, China: IEEE, 2018.−548−555 [57] Lucey P J, Potamianos G, Sridharan S. A unified approach to multi-pose audio-visual ASR. In: Proceedings of the 8th Annual Conference of the International Speech Communication Association. Antwerp, Belgium: Causal Productions Pty Ltd., 2007. 650−653 [58] Almajai I, Cox S, Harvey R, Lan Y X. Improved speaker independent lip reading using speaker adaptive training and deep neural networks. In: Proceedings of 2016 IEEE International Conference on Acoustics, Speech and Signal Processing. Shanghai, China: IEEE, 2016. 2722−2726 [59] Seymour R, Stewart D, Ming J. Comparison of image transform-based features for visual speech recognition in clean and corrupted videos. EURASIP Journal on Image and Video Processing, 2007, 2008(1): Article No.810362 [60] Estellers V, Gurban M, Thiran J P. On dynamic stream weighting for audio-visual speech recognition. IEEE Transactions on Audio, Speech, and Language Processing, 2012, 20(4): 1145−1157 doi: 10.1109/TASL.2011.2172427 [61] Potamianos G, Neti C, Iyengar G, Senior A W, Verma A. A cascade visual front end for speaker independent automatic speechreading. International Journal of Speech Technology, 2001, 4(3−4): 193−208 [62] Lucey P J, Sridharan S, Dean D B. Continuous pose-invariant lipreading. In: Proceedings of the 9th Annual Conference of the International Speech Communication Association (Interspeech 2008) incorporating the 12th Australasian International Conference on Speech Science and Technology (SST 2008). Brisbane Australia: International Speech Communication Association, 2008. 2679−2682 [63] Lucey P J, Potamianos G, Sridharan S. Patch-based analysis of visual speech from multiple views. In: Proceedings of the International Conference on Auditory-Visual Speech Processing 2008. Moreton Island, Australia: AVISA, 2008. 69−74 [64] Tim Sheerman-Chase, Eng-Jon Ong, Richard Bowden. Cultural Factors in the Regression of Non-verbal Communication Perception. In Workshop on Human Interaction in Computer Vision, Barcelona, 2011 [65] Zhou Z H, Zhao G Y, Pietikäinen M. Lipreading: A graph embedding approach. In: Proceedings of the 20th International Conference on Pattern Recognition. Istanbul, Turkey: IEEE, 2010. 523−526 [66] Zhao G Y, Pietikäinen M. Dynamic texture recognition using local binary patterns with an application to facial expressions. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2007, 29(6): 915−928 doi: 10.1109/TPAMI.2007.1110 [67] Dalal N, Triggs B. Histograms of oriented gradients for human detection. In: Proceedings of 2005 IEEE Computer Society Conference on Computer Vision and Pattern Recognition. San Diego, USA: IEEE, 2005. 886−893 [68] Mase K, Pentland A. Automatic lipreading by optical-flow analysis. Systems and Computers in Japan, 1991, 22(6): 67−76 doi: 10.1002/scj.4690220607 [69] Aleksic P S, Williams J J, Wu Z L, Katsaggelos A K. Audio-visual speech recognition using MPEG-4 compliant visual features. EURASIP Journal on Advances in Signal Processing, 2002, 2002(1): Article No. 150948 [70] Brooke N M. Using the visual component in automatic speech recognition. In: Proceedings of the 4th International Conference on Spoken Language Processing. Philadelphia, USA: IEEE, 1996. 1656−1659 [71] Cetingul H E, Yemez Y, Erzin E, Tekalp A M. Discriminative analysis of lip motion features for speaker identification and speech-reading. IEEE Transactions on Image Processing, 2006, 15(10): 2879−2891 doi: 10.1109/TIP.2006.877528 [72] Nefian A V, Liang L H, Pi X B, Liu X X, Murphy K. Dynamic Bayesian networks for audio-visual speech recognition. EURASIP Journal on Advances in Signal Processing, 2002, 2002(11): Article No.783042 doi: 10.1155/S1110865702206083 [73] Kirchhoff K. Robust speech recognition using articulatory information Elektronische Ressource. 1999. [74] Cootes T F, Taylor C J, Cooper D H, Graham J. Active shape models-their training and application. Computer Vision and Image Understanding, 1995, 61(1): 38−59 doi: 10.1006/cviu.1995.1004 [75] Luettin J, Thacker N A, Beet S W. Speechreading using shape and intensity information. In: Proceedings of the 4th International Conference on Spoken Language Processing. Philadelphia, USA: IEEE, 1996. 58−61 [76] Dupont S, Luettin J. Audio-visual speech modeling for continuous speech recognition. IEEE Transactions on Multimedia, 2000, 2(3): 141−151 doi: 10.1109/6046.865479 [77] Chan M T. HMM-based audio-visual speech recognition integrating geometric- and appearance-based visual features. In: Proceedings of the 4th Workshop on Multimedia Signal Processing. Cannes, France: IEEE, 2001. 9−14 [78] Roweis S T, Sau L K. Nonlinear dimensionality reduction by locally linear embedding. Science, 2000, 290(5500): 2323−2326 doi: 10.1126/science.290.5500.2323 [79] Tenenbaum J B, de Silva V, Langford J C. A global geometric framework for nonlinear dimensionality reduction. Science, 2000, 290(5500): 2319−2323 doi: 10.1126/science.290.5500.2319 [80] Yan S C, Xu D, Zhang B Y, Zhang H J, Yang Q, Lin S. Graph embedding and extensions: A general framework for dimensionality reduction. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2007, 29(1): 40−51 doi: 10.1109/TPAMI.2007.250598 [81] Fu Y, Yan S C, Huang T S. Classification and feature extraction by simplexization. IEEE Transactions on Information Forensics and Security, 2008, 3(1): 91−100 doi: 10.1109/TIFS.2007.916280 [82] Ojala T, Pietikäinen M, Harwood D. A comparative study of texture measures with classification based on featured distributions. Pattern Recognition, 1996, 29(1): 51−59 doi: 10.1016/0031-3203(95)00067-4 [83] Ojala T, Pietikäinen M, Mäenpää T. Multiresolution gray-scale and rotation invariant texture classification with local binary patterns. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2002, 24(7): 971−987 doi: 10.1109/TPAMI.2002.1017623 [84] 刘丽, 赵凌君, 郭承玉, 王亮, 汤俊. 图像纹理分类方法研究进展和展望. 自动化学报, 2018, 44(4): 584−607Liu Li, Zhao Ling-Jun, Guo Cheng-Yu, Wang Liang, Tang Jun. Texture classification: State-of-the-art methods and prospects. Acta Automatica Sinica, 2018, 44(4): 584−607 [85] Pietikäinen M, Hadid A, Zhao G, Ahonen T. Computer Vision Using Local Binary Patterns. London: Springer, 2011. [86] Liu L, Chen J, Fieguth P, Zhao G Y, Chellappa R, Pietikäinen M. From BoW to CNN: Two decades of texture representation for texture classification. International Journal of Computer Vision, 2019, 127(1): 74−109 doi: 10.1007/s11263-018-1125-z [87] 刘丽, 谢毓湘, 魏迎梅, 老松杨. 局部二进制模式方法综述. 中国图象图形学报, 2014, 19(12): 1696−1720 doi: 10.11834/jig.20141202Liu Li, Xie Yu-Xiang, Wei Ying-Mei, Lao Song-Yang. Survey of Local Binary Pattern method. Journal of Image and Graphics, 2014, 19(12): 1696−1720 doi: 10.11834/jig.20141202 [88] Horn B K P, Schunck B G. Determining optical flow. Artificial Intelligence, 1981, 17(1-3): 185−203 doi: 10.1016/0004-3702(81)90024-2 [89] Bouguet J Y. Pyramidal implementation of the affine Lucas Kanade feature tracker description of the algorithm. Intel Corporation, 2001, 5: 1−9 [90] Lucas B D, Kanade T. An iterative image registration technique with an application to stereo vision. In: Proceedings of the 7th International Joint Conference on Artificial Intelligence. San Francisco, CA, United States: Morgan Kaufmann Publishers Inc., 1981. 674−679 [91] Rekik A, Ben-Hamadou A, Mahdi W. An adaptive approach for lip-reading using image and depth data. Multimedia Tools and Applications, 2016, 75(14): 8609−8636 doi: 10.1007/s11042-015-2774-3 [92] Shaikh A A, Kumar D K, Yau W C, Azemin M Z C, Gubbi J. Lip reading using optical flow and support vector machines. In: Proceedings of the 3rd International Congress on Image and Signal Processing. Yantai, China: IEEE, 2010. 327−330 [93] Goldschen A J, Garcia O N, Petajan E. Continuous optical automatic speech recognition by lipreading. In: Proceedings of the 28th Asilomar Conference on Signals, Systems and Computers. Pacific Grove, CA, USA: IEEE, 1994. 572−577 [94] King S, Frankel J, Livescu K, McDermott E, Richmond K, Wester M. Speech production knowledge in automatic speech recognition. The Journal of the Acoustical Society of America, 2007, 121(2): 723−742 doi: 10.1121/1.2404622 [95] Kirchhoff K, Fink G A, Sagerer G. Combining acoustic and articulatory feature information for robust speech recognition. Speech Communication, 2002, 37(3−4): 303−319 doi: 10.1016/S0167-6393(01)00020-6 [96] Livescu K, Cetin O, Hasegawa-Johnson M, King S, Bartels C, Borges N, et al. Articulatory feature-based methods for acoustic and audio-visual speech recognition: Summary from the 2006 JHU Summer Workshop. In: Proceedings of the 2007 IEEE International Conference on Acoustics, Speech and Signal Processing. Honolulu, USA: IEEE. 2007. IV−621−IV−624 [97] Saenko K, Livescu K, Glass J, Darrell T. Production domain modeling of pronunciation for visual speech recognition. In: Proceeding of the 2005 IEEE International Conference on Acoustics, Speech, and Signal Processing. Philadelphia, USA: IEEE. 2005. v/473−v/476 [98] Saenko K, Livescu K, Glass J, Darrell T. Multistream articulatory feature-based models for visual speech recognition. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2009, 31(9): 1700−1707 doi: 10.1109/TPAMI.2008.303 [99] Saenko K, Livescu K, Siracusa M, Wilson K, Glass J, Darrell T. Visual speech recognition with loosely synchronized feature streams. In: Proceeding of the 10th IEEE International Conference on Computer Vision. Beijing, China: IEEE. 2005. 1424−1431 [100] Papcun G, Hochberg J, Thomas T R, Laroche F, Zacks J, Levy S. Inferring articulation and recognizing gestures from acoustics with a neural network trained on x-ray microbeam data. The Journal of the Acoustical Society of America, 1992, 92(2): 688−700 doi: 10.1121/1.403994 [101] Matthews I, Potamianos G, Neti C, Luettin J. A comparison of model and transform-based visual features for audio-visual LVCSR. In: Proceedings of the 2001 IEEE International Conference on Multimedia and Expo. Tokyo, Japan: IEEE, 2001. 825−828 [102] Papandreou G, Katsamanis A, Pitsikalis V, Maragos P. Adaptive multimodal fusion by uncertainty compensation with application to audiovisual speech recognition. IEEE Transactions on Audio, Speech, and Language Processing, 2009, 17(3): 423−435 doi: 10.1109/TASL.2008.2011515 [103] Hilder S, Harvey R W, Theobald B J. Comparison of human and machine-based lip-reading. In: Proceedings of the 2009 AVSP. 2009: 86−89 [104] Lan Y X, Theobald B J, Harvey R. View independent computer lip-reading. In: Proceedings of the 2012 IEEE International Conference on Multimedia and Expo. Melbourne, Australia: IEEE, 2012. 432−437 [105] Lan Y X, Harvey R, Theobald B J. Insights into machine lip reading. In: Proceedings of the 2012 IEEE International Conference on Acoustics, Speech and Signal Processing. Kyoto, Japan: IEEE, 2012. 4825−4828 [106] Bear H L, Harvey R. Decoding visemes: Improving machine lip-reading. In: Proceedings of the 2016 IEEE International Conference on Acoustics, Speech and Signal Processing. Shanghai, China: IEEE, 2016. 2009−2013 [107] LeCun Y, Bengio Y, Hinton G. Deep learning. Nature, 2015, 521(7553): 436−444 doi: 10.1038/nature14539 [108] Hinton G E, Salakhutdinov R R. Reducing the dimensionality of data with neural networks. Science, 2006, 313(5786): 504−507 doi: 10.1126/science.1127647 [109] Hong X P, Yao H X, Wan Y Q, Chen R. A PCA based visual DCT feature extraction method for lip-reading. In: Proceedings of the 2006 International Conference on Intelligent Information Hiding and Multimedia. Pasadena, USA: IEEE, 2006. 321−326 [110] Krizhevsky A, Sutskever I, Hinton G E. ImageNet classification with deep convolutional neural networks. In: Proceedings of the 25th International Conference on Neural Information Processing Systems. Red Hook, NY, United States: Curran Associates Inc., 2012. 1097−1105 [111] Simonyan K, Zisserman A. Very deep convolutional networks for large-scale image recognition. arXiv preprint arXiv: 1409.1556, 2014 [112] Szegedy C, Liu W, Jia Y Q, Sermanet P, Reed S, Anguelov D, et al. Going deeper with convolutions. In: Proceedings of the 2015 IEEE Conference on Computer Vision and Pattern Recognition. Boston, USA: IEEE, 2015. 1−9 [113] He K M, Zhang X Y, Ren S Q, Sun J. Deep residual learning for image recognition. In: Proceedings of the 2016 IEEE Conference on Computer Vision and Pattern Recognition. Las Vegas, USA: IEEE, 2016. 770−778 [114] Huang G, Liu Z, Van Der Maaten L, Weinberger K Q. Densely connected convolutional networks. In: Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition. Hawaii, USA: IEEE, 2017. 2261−2269 [115] Hu J, Shen L, Sun G. Squeeze-and-excitation networks. In: Proceedings of the 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition. Salt Lake City, Utah, USA: IEEE, 2018. 7132−7141 [116] Liu L, Ouyang W L, Wang X G, Fieguth P, Chen J, Liu X W, et al. Deep learning for generic object detection: A survey. arXiv preprint arXiv: 1809.02165, 2018 [117] Long J, Shelhamer E, Darrell T. Fully convolutional networks for semantic segmentation. In: Proceedings of the 2015 IEEE Conference on Computer Vision and Pattern Recognition. Boston, USA: IEEE, 2015. 3431−3440 [118] Graves A, Mohamed A, Hinton G. Speech recognition with deep recurrent neural networks. In: Proceedings of the 2013 IEEE international Conference on Acoustics, Speech and Signal Processing. Vancouver, Canada: IEEE, 2013. 6645−6649 [119] Noda K, Yamaguchi Y, Nakadai K, Okuno H G, Ogata T. Lipreading using convolutional neural network. In: Proceedings of the 15th Annual Conference of the International Speech Communication Association. Singapore: ISCA, 2014. 1149−1153 [120] Ji S W, Xu W, Yang M, Yu K. 3D convolutional neural networks for human action recognition. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2013, 35(1): 221−231 doi: 10.1109/TPAMI.2012.59 [121] Herath S, Harandi M, Porikli F. Going deeper into action recognition: A survey. Image and Vision Computing, 2017, 60: 4−21 doi: 10.1016/j.imavis.2017.01.010 [122] Mroueh Y, Marcheret E, Goel V. Deep multimodal learning for audio-visual speech recognition. In: Proceedings of the 2015 IEEE International Conference on Acoustics, Speech and Signal Processing. Queensland, Australia: IEEE, 2015. 2130−2134 [123] Thangthai K, Harvey R W, Cox S J, et al. Improving lip-reading performance for robust audiovisual speech recognition using DNNs. In: Proceedings of the 2015 AVSP. 2015: 127−131. [124] Gers F A, Schmidhuber J, Cummins F. Learning to forget: Continual prediction with LSTM. Neural Computation, 2000, 12(10): 2451−2471 doi: 10.1162/089976600300015015 [125] Chung J, Gulcehre C, Cho K, Bengio Y. Empirical evaluation of gated recurrent neural networks on sequence modeling. arXiv preprint arXiv: 1412.3555, 2014 [126] Wand M, Koutník J, Schmidhuber J. Lipreading with long short-term memory. In: Proceedings of the 2016 IEEE International Conference on Acoustics, Speech and Signal Processing. Shanghai, China: IEEE, 2016. 6115−6119 [127] Garg A, Noyola J, Bagadia S. Lip reading using CNN and LSTM, Technical Report, CS231n Project Report, Stanford University, USA, 2016. [128] Stafylakis T, Tzimiropoulos G. Combining residual networks with LSTMs for lipreading. arXiv preprint arXiv: 1703.04105, 2017 [129] Graves A, Fernández S, Gomez F, Schmidhuber J. Connectionist temporal classification: Labelling unsegmented sequence data with recurrent neural networks. In: Proceedings of the 23rd International Conference on Machine Learning. New York: ACM, 2006. 369−376 [130] Miao Y, Gowayyed M, Metze F. EESEN: End-to-end speech recognition using deep RNN models and WFST-based decoding. In: Proceedings of the 2015 IEEE Workshop on Automatic Speech Recognition and Understanding. Arizona, USA: IEEE, 2015. 167−174 [131] Petridis S, Stafylakis T, Ma P, Cai F P, Tzimiropoulos G, Pantic M. End-to-end audiovisual speech recognition. In: Proceedings of the 2018 IEEE International Conference on Acoustics, Speech and Signal Processing. Calgary, Canada: IEEE, 2018. 6548−6552 [132] Fung I, Mak B. End-to-end low-resource lip-reading with Maxout Cnn and Lstm. In: Proceedings of the 2018 IEEE International Conference on Acoustics, Speech and Signal Processing. Calgary, Canada: IEEE, 2018. 2511−2515 [133] Wand M, Schmidhuber J. Improving speaker-independent lipreading with domain-adversarial training. arXiv preprint arXiv: 1708.01565, 2017 [134] Wand M, Schmidhuber J, Vu N T. Investigations on end-to-end audiovisual fusion. In: Proceedings of the 2018 IEEE International Conference on Acoustics, Speech and Signal Processing. Calgary, Canada: IEEE, 2018. 3041−3045 [135] Srivastava R K, Greff K, Schmidhuber J. Training very deep networks. In: Proceedings of the 28th International Conference on Neural Information Processing Systems. Cambridge, MA United States: MIT Press, 2015. 2377−2385 [136] Sutskever I, Vinyals O, Le Q V. Sequence to sequence learning with neural networks. In: Proceedings of the 27th International Conference on Neural Information Processing Systems. Cambridge, MA: MIT Press, 2014. 3104−3112 [137] Bahdanau D, Cho K, Bengio Y. Neural machine translation by jointly learning to align and translate. arXiv preprint arXiv: 1409.0473, 2014 [138] Chaudhari S, Polatkan G, Ramanath R, Mithal V. An attentive survey of attention models. arXiv preprint arXiv: 1904.02874, 2019 [139] Wang F, Tax D M J. Survey on the attention based RNN model and its applications in computer vision. arXiv preprint arXiv: 1601.06823, 2016 [140] Chung J S, Zisserman A. Lip reading in profile. In: Proceedings of the British Machine Vision Conference. Guildford: BMVA Press, 2017. 155.1−155.11 [141] Russakovsky O, Deng J, Su H, Krause J, Satheesh S, Ma S, et al. ImageNet large scale visual recognition challenge. International Journal of Computer Vision, 2015, 115(3): 211−252 doi: 10.1007/s11263-015-0816-y [142] Saitoh T, Zhou Z H, Zhao G Y, Pietikäinen M. Concatenated frame image based cnn for visual speech recognition. In: Proceedings of the 2016 Asian Conference on Computer Vision. Taiwan, China: Springer, 2016. 277−289 [143] Lin M, Chen Q, Yan S C. Network in network. arXiv preprint arXiv: 1312.4400, 2013 [144] Petridis S, Li Z W, Pantic M. End-to-end visual speech recognition with LSTMs. In: Proceedings of the 2017 IEEE International Conference on Acoustics, Speech and Signal Processing. New Orleans, USA: IEEE, 2017. 2592−2596 [145] Petridis S, Wang Y J, Li Z W, Pantic M. End-to-end audiovisual fusion with LSTMS. arXiv preprint arXiv: 1709.04343, 2017 [146] Petridis S, Wang Y J, Li Z W, Pantic M. End-to-end multi-view lipreading. arXiv preprint arXiv: 1709.00443, 2017 [147] Petridis S, Shen J, Cetin D, Pantic M. Visual-only recognition of normal, whispered and silent speech. In: Proceedings of the 2018 IEEE International Conference on Acoustics, Speech and Signal Processing. Calgary, Canada: IEEE, 2018. 6219−6223 [148] Moon S, Kim S, Wang H H. Multimodal transfer deep learning with applications in audio-visual recognition. arXiv preprint arXiv: 1412.3121, 2014 [149] Chollet F. Xception: Deep learning with depthwise separable convolutions. In: Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition. Honolulu, Hawaii, USA: IEEE, 2017. 1800−1807 [150] Vaswani A, Shazeer N, Parmar N, Uszkoreit J, Jones L, Gomez A N, et al. Attention is all you need. In: Proceedings of the 31st International Conference on Neural Information Processing Systems. New York, United States: Curran Associates Inc., 2017. 6000−6010 [151] Afouras T, Chung J S, Senior A, et al. Deep audio-visual speech recognition. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2018.DOI: 10.1109/TPAMI.2018.2889052 [152] AV Letters Database [Online], available: http://www2.cmp.uea.ac.uk/~bjt/avletters/, October 27, 2020 [153] AVICAR Project: Audio-Visual Speech Recognition in a Car [Online], available: http://www.isle.illinois.edu/sst/AVICAR/#information, October 27, 2020 [154] The Extended M2VTS Database [Online], available: http://www.ee.surrey.ac.uk/CVSSP/xm2vtsdb/, October 27, 2020 [155] The BANCA Database [Online], available: http://www.ee.surrey.ac.uk/CVSSP/banca/, October 27, 2020 [156] CUAVE Group Set [Online], available: http://people.csail.mit.edu/siracusa/avdata/, October 27, 2020 [157] VALID: Visual quality Assessment for Light field Images Dataset [Online], available: https://www.epfl.ch/labs/mmspg/downloads/valid/, October 27, 2020 [158] Speech Resources Consortium [Online], available: http://research.nii.ac.jp/src/en/data.html, October 27, 2020 [159] AusTalk [Online], available: https://austalk.edu.au/about/corpus/, October 27, 2020 [160] OULUVS2: A MULTI-VIEW AUDIOVISUAL DATABASE [Online], available: http://www.ee.oulu.fi/research/imag/OuluVS2/, October 27, 2020 [161] Patterson E K, Gurbuz S, Tufekci Z, Gowdy J N. CUAVE: A new audio-visual database for multimodal human-computer interface research. In: Proceedings of the 2002 IEEE International Conference on Acoustics, Speech, and Signal Processing. Orlando, Florida, USA: IEEE, 2002. II−2017−II−2020 [162] Fox N A, O'Mullane B A, Reilly R B. VALID: A new practical audio-visual database, and comparative results. In: Proceedings of the 2005 International Conference on Audio-and Video-Based Biometric Person Authentication. Berlin, Germany: Springer, 2005. 777−786 [163] Anina I, Zhou Z H, Zhao G Y, Pietikäinen M. OuluVS2: A multi-view audiovisual database for non-rigid mouth motion analysis. In: Proceedings of the 11th IEEE International Conference and Workshops on Automatic Face and Gesture Recognition. Ljubljana, Slovenia: IEEE, 2015. 1−5 [164] Estival D, Cassidy S, Cox F, et al. AusTalk: an audio-visual corpus of Australian English. In: Proceedings of the 2014 LREC 2014. [165] Tamura S, Miyajima C, Kitaoka N, et al. CENSREC-1-AV: An audio-visual corpus for noisy bimodal speech recognition. In: Proceedings of the Auditory-Visual Speech Processing 2010. 2010. [166] Pass A, Zhang J G, Stewart D. An investigation into features for multi-view lipreading. In: Proceedings of the 2010 IEEE International Conference on Image Processing. Hong Kong, China: IEEE, 2010. 2417−2420 [167] Neti C, Potamianos G, Luettin J, et al. Audio visual speech recognition. IDIAP, 2000. [168] Sanderson C. The vidtimit database. IDIAP, 2002. [169] Jankowski C, Kalyanswamy A, Basson S, Spitz J. NTIMIT: A phonetically balanced, continuous speech, telephone bandwidth speech database. In: Proceedings of the 1990 International Conference on Acoustics, Speech, and Signal Processing. Albuquerque, New Mexico, USA: IEEE, 1990. 109−112 [170] Hazen T J, Saenko K, La C H, Glass J R. A segment-based audio-visual speech recognizer: Data collection, development, and initial experiments. In: Proceedings of the 6th International Conference on Multimodal Interfaces. State College, PA, USA: ACM, 2004. 235−242 [171] MIRACL-VC1 [Online], available: https://sites.google.com/site/achrafbenhamadou/-datasets/miracl-vc1, October 27, 2020 [172] The Oxford-BBC Lip Reading in the Wild (LRW) Dataset [Online], available: http://www.robots.ox.ac.uk/~vgg/data/lip_reading/lrw1.html, October 27, 2020 [173] LRW-1000: Lip Reading database [Online], available: http://vipl.ict.ac.cn/view_database.php?id=14, October 27, 2020 [174] The GRID audiovisual sentence corpus [Online], available: http://spandh.dcs.shef.ac.uk/gridcorpus/, October 27, 2020 [175] OuluVS database [Online], available: https://www.oulu.fi/cmvs/node/41315, October 27, 2020 [176] VidTIMIT Audio-Video Dataset [Online], available: http://conradsanderson.id.au/vidtimit/#downloads, October 27, 2020 [177] LiLiR [Online], available: http://www.ee.surrey.ac.uk/Projects/LILiR/datasets.html, October 27, 2020 [178] MOBIO [Online], available: https://www.idiap.ch/dataset/mobio, October 27, 2020 [179] TCD-TIMIT [Online], available: https://sigmedia.tcd.ie/TCDTIMIT/, October 27, 2020 [180] Lip Reading Datasets [Online], available: http://www.robots.ox.ac.uk/~vgg/data/lip_reading/, October 27, 2020 [181] Visual Lip Reading Feasibility (VRLF) [Online], available: https://datasets.bifrost.ai/info/845, October 27, 2020 [182] Rekik A, Ben-Hamadou A, Mahdi W. A new visual speech recognition approach for RGB-D cameras. In: Proceedings of the 2014 International Conference Image Analysis and Recognition. Vilamoura, Portugal: Springer, 2014. 21−28 [183] McCool C, Marcel S, Hadid A, Pietikäinen M, Matejka P, Cernockỳ J, et al. Bi-modal person recognition on a mobile phone: Using mobile phone data. In: Proceedings of the 2012 IEEE International Conference on Multimedia and Expo Workshops. Melbourne, Australia: IEEE, 2012. 635−640 [184] Howell D. Confusion Modelling for Lip-Reading [Ph. D. dissertation], University of East Anglia, Norwich, 2015 [185] Harte N, Gillen E. TCD-TIMIT: An audio-visual corpus of continuous speech. IEEE Transactions on Multimedia, 2015, 17(5): 603−615 doi: 10.1109/TMM.2015.2407694 [186] Verkhodanova V, Ronzhin A, Kipyatkova I, Ivanko D, Karpov A, Zelezny M. HAVRUS corpus: High-speed recordings of audio-visual Russian speech. In: Proceedings of the 2016 International Conference on Speech and Computer. Budapest, Hungary: Springer, 2016. 338−345 [187] Fernandez-Lopez A, Martinez O, Sukno F M. Towards estimating the upper bound of visual-speech recognition: The visual lip-reading feasibility database. In: Proceedings of the 12th IEEE International Conference on Automatic Face & Gesture Recognition. Washington, USA: IEEE, 2017. 208−215 [188] Cooke M, Barker J, Cunningham S, Shao X. An audio-visual corpus for speech perception and automatic speech recognition. The Journal of the Acoustical Society of America, 2006, 120(5): 2421−2424 doi: 10.1121/1.2229005 [189] Vorwerk A, Wang X, Kolossa D, et al. WAPUSK20-A Database for Robust Audiovisual Speech Recognition. In: Proceedings of the 2010 LREC. 2010. [190] Czyzewski A, Kostek B, Bratoszewski P, Kotus J, Szykulski M. An audio-visual corpus for multimodal automatic speech recognition. Journal of Intelligent Information Systems, 2017, 49(2): 167−192 doi: 10.1007/s10844-016-0438-z [191] Afouras T, Chung J S, Zisserman A. LRS3-TED: A large-scale dataset for visual speech recognition. arXiv preprint arXiv: 1809.00496, 2018 [192] Yang S, Zhang Y H, Feng D L, Yang M M, Wang C H, Xiao J Y, et al. LRW-1000: A naturally-distributed large-scale benchmark for lip reading in the wild. In: Proceedings of the 14th IEEE International Conference on Automatic Face and Gesture Recognition. Lille, France: IEEE, 2019. 1−8 [193] Petridis S, Pantic M. Deep complementary bottleneck features for visual speech recognition. In: Proceedings of the 2016 IEEE International Conference on Acoustics, Speech and Signal Processing. Shanghai, China: IEEE, 2016. 2304−2308 [194] Rahmani M H, Almasganj F. Lip-reading via a DNN-HMM hybrid system using combination of the image-based and model-based features. In: Proceedings of the 3rd International Conference on Pattern Recognition and Image Analysis. Shahrekord, Iran: IEEE, 2017. 195−199 [195] Dosovitskiy A, Fischer P, Ilg E, Häusser P, Hazirbas C, Golkov V, et al. FlowNet: Learning optical flow with convolutional networks. In: Proceedings of the 2015 IEEE International Conference on Computer Vision. Santiago, Chile: IEEE, 2015. 2758−2766 [196] Ilg E, Mayer N, Saikia T, Keuper M, Dosovitskiy A, Brox T. FlowNet 2.0: Evolution of optical flow estimation with deep networks. In: Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition. Hawaii, USA: IEEE, 2017. 1647−1655 [197] Simonyan K, Zisserman A. Two-stream convolutional networks for action recognition in videos. In: Proceedings of the 27th International Conference on Neural Information Processing Systems. Cambridge, MA, United States: MIT Press, 2014. 568−576 [198] Feichtenhofer C, Pinz A, Zisserman A. Convolutional two-stream network fusion for video action recognition. In: Proceedings of the 2016 IEEE Conference on Computer Vision and Pattern Recognition. Las Vegas, USA: IEEE, 2016. 1933−1941 [199] Jaderberg M, Simonyan K, Zisserman A, Kavukcuoglu K. Spatial transformer networks. In: Proceedings of the 28th International Conference on Neural Information Processing Systems. Cambridge, MA, United States: MIT Press, 2015. 2017−2025 [200] Bhagavatula C, Zhu C C, Luu K, Savvides M. Faster than real-time facial alignment: A 3D spatial transformer network approach in unconstrained poses. In: Proceedings of the 2017 IEEE International Conference on Computer Vision. Venice, Italy: IEEE, 2017. 4000−4009 [201] Baltrušaitis T, Ahuja C, Morency L P. Multimodal machine learning: A survey and taxonomy. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2019, 41(2): 423−443 doi: 10.1109/TPAMI.2018.2798607 [202] Loizou P C. Speech Enhancement: Theory and Practice. Boca Raton, FL: CRC Press, 2013. [203] Hou J C, Wang S S, Lai Y H, Tsao Y, Chang H W, Wang H M. Audio-visual speech enhancement based on multimodal deep convolutional neural network. arXiv preprint arXiv: 1703.10893, 2017 [204] Ephrat A, Halperin T, Peleg S. Improved speech reconstruction from silent video. In: Proceedings of the 2017 IEEE International Conference on Computer Vision Workshops. Venice, Italy: IEEE, 2017. 455−462 [205] Gabbay A, Shamir A, Peleg S. Visual speech enhancement. arXiv preprint arXiv: 1711.08789, 2017. 期刊类型引用(0)

其他类型引用(4)

-

下载:

下载:

下载:

下载: